Mar. 18, 2024

Computer science educators will soon gain valuable insights from computational epidemiology courses, like one offered at Georgia Tech.

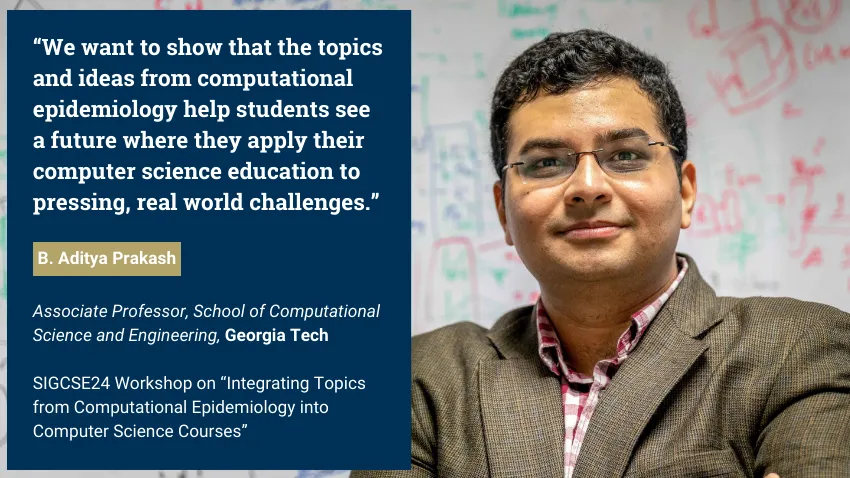

B. Aditya Prakash is part of a research group that will host a workshop on how topics from computational epidemiology can enhance computer science classes.

These lessons would produce computer science graduates with improved skills in data science, modeling, simulation, artificial intelligence (AI), and machine learning (ML).

Because epidemics transcend the sphere of public health, these topics would groom computer scientists versed in issues from social, financial, and political domains.

The group’s virtual workshop takes place on March 20 at the technical symposium for the Special Interest Group on Computer Science Education (SIGCSE). SIGCSE is one of 38 special interest groups of the Association for Computing Machinery (ACM). ACM is the world’s largest scientific and educational computing society.

“We decided to do a tutorial at SIGCSE because we believe that computational epidemiology concepts would be very useful in general computer science courses,” said Prakash, an associate professor in the School of Computational Science and Engineering (CSE).

“We want to give an introduction to concepts, like what computational epidemiology is, and how topics, such as algorithms and simulations, can be integrated into computer science courses.”

Prakash will kick off the workshop with an overview of computational epidemiology. He will use examples from his CSE 8803: Data Science for Epidemiology course to introduce basic concepts.

This overview includes a survey of models used to describe behavior of diseases. Models serve as foundations that run simulations, ultimately testing hypotheses and making predictions regarding disease spread and impact.

Prakash will explain the different kinds of models used in epidemiology, such as traditional mechanistic models and more recent ML and AI based models.
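As an illustration of what a traditional mechanistic model looks like in a classroom setting, here is a minimal sketch of the classic SIR (susceptible, infected, recovered) compartmental model. It is not taken from Prakash's course or workshop materials; the function name, parameter values, and the simple Euler time-stepping are all assumptions made for illustration.

```python
def simulate_sir(population, initial_infected, beta, gamma, days, dt=0.1):
    """Integrate the SIR equations with forward Euler steps.

    beta  : transmission rate per day (assumed, illustrative)
    gamma : recovery rate per day (assumed, illustrative)
    Returns a list with one (S, I, R) tuple per day, starting at day 0.
    """
    s = float(population - initial_infected)
    i = float(initial_infected)
    r = 0.0
    steps_per_day = round(1 / dt)
    history = [(s, i, r)]
    for _ in range(days):
        for _ in range(steps_per_day):
            # New infections scale with contacts between S and I.
            new_infections = beta * s * i / population * dt
            # A fixed fraction of infected people recover each step.
            new_recoveries = gamma * i * dt
            s -= new_infections
            i += new_infections - new_recoveries
            r += new_recoveries
        history.append((s, i, r))
    return history


if __name__ == "__main__":
    # Hypothetical outbreak: 10 cases in a town of 10,000, beta / gamma = 3.
    trajectory = simulate_sir(10_000, 10, beta=0.3, gamma=0.1, days=160)
    peak_day, peak = max(enumerate(trajectory), key=lambda item: item[1][1])
    print(f"Peak infections: {peak[1]:.0f} on day {peak_day}")
    print(f"Total ever infected: {trajectory[-1][2]:.0f}")
```

Changing `beta` or `gamma` and rerunning is the kind of hypothesis test the overview describes: for example, lowering `beta` below `gamma` makes the outbreak fade instead of grow.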

Prakash’s discussion includes modeling used in recent epidemics like Covid-19, Zika, H1N1 influenza, and Ebola. He will also cover examples from the 19th and 20th centuries to illustrate how epidemiology has advanced using data science and computation.

“I strongly believe that data and computation have a very important role to play in the future of epidemiology and public health,” Prakash said.

“My course and these workshops give that viewpoint, and provide a broad framework of data science and computational thinking that can be useful.”

While humankind has studied disease transmission for millennia, computational epidemiology is a new approach to understanding how diseases can spread throughout communities.

The Covid-19 pandemic helped bring computational epidemiology to the forefront of public awareness. This exposure has led to greater demand for applying the field in computer science education.

Prakash joins Baltazar Espinoza and Natarajan Meghanathan in the workshop presentation. Espinoza is a research assistant professor at the University of Virginia. Meghanathan is a professor at Jackson State University.

The group is connected through Global Pervasive Computational Epidemiology (GPCE). GPCE is a partnership of 13 institutions aimed at advancing computational foundations, engineering principles, and technologies of computational epidemiology.

The National Science Foundation (NSF) supports GPCE through the Expeditions in Computing program. Prakash is also principal investigator on other NSF-funded grants, and material from those projects appears in his workshop presentation.

[Related: Researchers to Lead Paradigm Shift in Pandemic Prevention with NSF Grant]

Outreach and broadening participation in computing are tenets of Prakash and GPCE because of how widely epidemics can reach. The SIGCSE workshop is one way that the group employs educational programs to train the next generation of scientists around the globe.

“Algorithms, machine learning, and other topics are fundamental graduate and undergraduate computer science courses nowadays,” Prakash said.

“Using examples like projects, homework questions, and data sets, we want to show that the topics and ideas from computational epidemiology help students see a future where they apply their computer science education to pressing, real-world challenges.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Dec. 20, 2023

A new machine learning method could help engineers detect leaks in underground reservoirs earlier, mitigating risks associated with geological carbon storage (GCS). Further study could advance machine learning capabilities while improving safety and efficiency of GCS.

The feasibility study by Georgia Tech researchers explores using conditional normalizing flows (CNFs) to convert seismic data points into usable information and observable images. This potential ability could make monitoring underground storage sites more practical and studying the behavior of carbon dioxide plumes easier.

The 2023 Conference on Neural Information Processing Systems (NeurIPS 2023) accepted the group’s paper for presentation. They presented their study on Dec. 16 at the conference’s workshop on Tackling Climate Change with Machine Learning.

“One area where our group excels is that we care about realism in our simulations,” said Professor Felix Herrmann. “We worked on a real-sized setting with the complexities one would experience when working in real-life scenarios to understand the dynamics of carbon dioxide plumes.”

CNFs are generative models that use data to produce images. They can also fill in the blanks by making predictions to complete an image despite missing or noisy data. This functionality is ideal for this application because data streaming from GCS reservoirs are often noisy, meaning they are incomplete, outdated, or unstructured.
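To make the idea concrete, the following is a toy sketch of a conditional normalizing flow with a single affine layer on one number instead of an image. It is not the researchers' model: real CNFs stack many learned layers, and the `conditioner` function here is a fixed, hypothetical stand-in for a trained neural network.

```python
import math
import random


def conditioner(y):
    """Hypothetical stand-in for a trained network.

    Maps an observation y to the (shift, log_scale) of the affine layer.
    """
    shift = 2.0 * y
    log_scale = -0.5 + 0.1 * y
    return shift, log_scale


def forward(x, y):
    """Map a sample x to latent z given y; also return log|dz/dx|."""
    shift, log_scale = conditioner(y)
    z = (x - shift) * math.exp(-log_scale)
    return z, -log_scale


def inverse(z, y):
    """Map latent z back to a sample x given y (exact inverse of forward)."""
    shift, log_scale = conditioner(y)
    return shift + math.exp(log_scale) * z


def log_prob(x, y):
    """Log-density of x given y via the change-of-variables formula."""
    z, log_det = forward(x, y)
    standard_normal_log_pdf = -0.5 * (z * z + math.log(2 * math.pi))
    return standard_normal_log_pdf + log_det


def sample(y, n, rng):
    """Draw n plausible values of x for one observation y."""
    return [inverse(rng.gauss(0.0, 1.0), y) for _ in range(n)]


if __name__ == "__main__":
    rng = random.Random(0)
    draws = sample(y=0.4, n=1000, rng=rng)
    mean = sum(draws) / len(draws)
    spread = math.sqrt(sum((d - mean) ** 2 for d in draws) / len(draws))
    print(f"mean of samples: {mean:.3f}, spread: {spread:.3f}")
```

Because the model returns many samples for the same observation rather than one answer, the spread of those samples gives a rough measure of how uncertain the prediction is.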

The group found in 36 test samples that CNFs could infer scenarios with and without leakage using seismic data. In simulations with leakage, the models generated images that were 96% similar to ground truths. CNFs further supported this by producing images 97% comparable to ground truths in cases with no leakage.

This CNF-based method also improves on current techniques that struggle to provide accurate information on the spatial extent of leakage. Conditioning CNFs on samples that change over time allows them to describe and predict the behavior of carbon dioxide plumes.

This study is part of the group’s broader effort to produce digital twins for seismic monitoring of underground storage. A digital twin is a virtual model of a physical object. Digital twins are commonplace in manufacturing, healthcare, environmental monitoring, and other industries.

“There are very few digital twins in earth sciences, especially based on machine learning,” Herrmann explained. “This paper is just a prelude to building an uncertainty-aware digital twin for geological carbon storage.”

Herrmann holds joint appointments in the Schools of Earth and Atmospheric Sciences (EAS), Electrical and Computer Engineering, and Computational Science and Engineering (CSE).

School of EAS Ph.D. student Abhinov Prakash Gahlot is the paper’s first author. Ting-Ying (Rosen) Yu (B.S. ECE 2023) started the research as an undergraduate group member. School of CSE Ph.D. students Huseyin Tuna Erdinc, Rafael Orozco, and Ziyi (Francis) Yin co-authored with Gahlot and Herrmann.

NeurIPS 2023 took place Dec. 10-16 in New Orleans. Occurring annually, it is one of the largest conferences in the world dedicated to machine learning.

Over 130 Georgia Tech researchers presented more than 60 papers and posters at NeurIPS 2023. One-third of CSE’s faculty represented the School at the conference. Along with Herrmann, these faculty included Ümit Çatalyürek, Polo Chau, Bo Dai, Srijan Kumar, Yunan Luo, Anqi Wu, and Chao Zhang.

“In the field of geophysics, inverse problems and statistical solutions of these problems are known, but no one has been able to characterize these statistics in a realistic way,” Herrmann said.

“That’s where these machine learning techniques come into play, and we can do things now that you could never do before.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Nov. 29, 2023

The National Institutes of Health (NIH) has awarded Yunan Luo a grant for more than $1.8 million to use artificial intelligence (AI) to advance protein research.

New AI models produced through the grant will lead to new methods for the design and discovery of functional proteins. This could yield novel drugs and vaccines, personalized treatments against diseases, and other advances in biomedicine.

“This project provides a new paradigm to analyze proteins’ sequence-structure-function relationships using machine learning approaches,” said Luo, an assistant professor in Georgia Tech’s School of Computational Science and Engineering (CSE).

“We will develop new, ready-to-use computational models for domain scientists, like biologists and chemists. They can use our machine learning tools to guide scientific discovery in their research.”

Luo’s proposal improves on datasets spearheaded by AlphaFold and other recent breakthroughs. His AI algorithms would integrate these datasets and craft new models for practical application.

One of Luo’s goals is to develop machine learning methods that learn statistical representations from the data. This reveals relationships between proteins’ sequence, structure, and function. Scientists then could characterize how sequence and structure determine the function of a protein.

Next, Luo wants to make accurate and interpretable predictions about protein functions. His plan is to create biology-informed deep learning frameworks. These frameworks could make predictions about a protein’s function from knowledge of its sequence and structure. They can also account for variables like mutations.

In the end, Luo would have the data and tools to assist in the discovery of functional proteins. He will use these to build a computational platform of AI models, algorithms, and frameworks that ‘invent’ proteins. The platform determines the sequence and structure necessary to achieve a designed protein’s desired functions and characteristics.

“My students play a very important part in this research because they are the driving force behind various aspects of this project at the intersection of computational science and protein biology,” Luo said.

“I think this project provides a unique opportunity to train our students in CSE to learn the real-world challenges facing scientific and engineering problems, and how to integrate computational methods to solve those problems.”

The $1.8 million grant is funded through the Maximizing Investigators’ Research Award (MIRA). The National Institute of General Medical Sciences (NIGMS) manages the MIRA program. NIGMS is one of 27 institutes and centers under NIH.

MIRA is oriented toward launching the research endeavors of early-career faculty. The grant provides researchers with more stability and flexibility through five years of funding. This enhances scientific productivity and improves the chances for important breakthroughs.

Luo becomes the second School of CSE faculty member to receive the MIRA grant. NIH awarded the grant to Xiuwei Zhang in 2021. Zhang is the J.Z. Liang Early-Career Assistant Professor in the School of CSE.

[Related: Award-winning Computer Models Propel Research in Cellular Differentiation]

“After NIH, of course, I first thanked my students because they laid the groundwork for what we seek to achieve in our grant proposal,” said Luo.

“I would like to thank my colleague, Xiuwei Zhang, for her mentorship in preparing the proposal. I also thank our school chair, Haesun Park, for her help and support while starting my career.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu
