May. 02, 2025

Georgia Tech researchers played a key role in developing a groundbreaking AI framework designed to autonomously generate and evaluate scientific hypotheses in astrobiology. Amirali Aghazadeh, assistant professor in the School of Electrical and Computer Engineering, co-authored the research and contributed to the architecture that divides tasks among multiple specialized AI agents.

This framework, known as AstroAgents, takes a modular approach that lets the system simulate a collaborative team of scientists, each with a distinct role such as data analysis, planning, or critique, thereby enhancing the depth and originality of the hypotheses it generates.

News Contact

Amelia Neumeister | Research Communications Program Manager

The Institute for Matter and Systems

Apr. 24, 2025

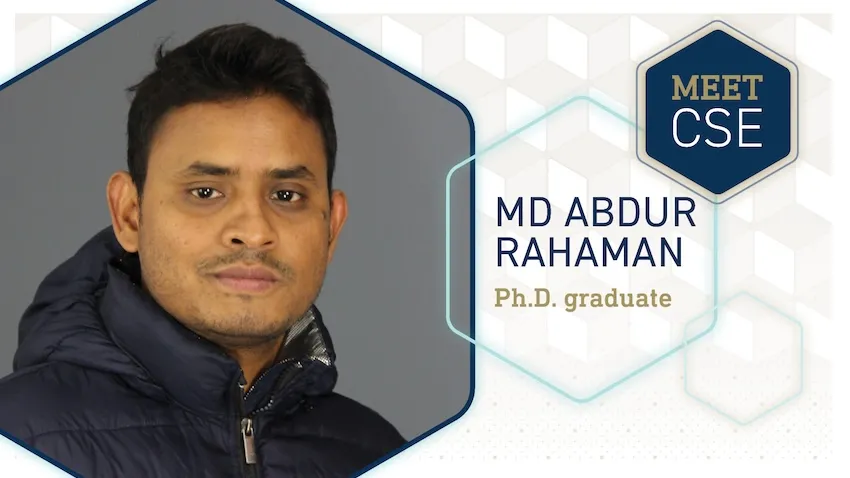

A Georgia Tech doctoral student’s dissertation could help physicians diagnose neuropsychiatric disorders, including schizophrenia, autism, and Alzheimer’s disease. The new approach leverages data science and algorithms instead of relying on traditional methods like cognitive tests and image scans.

Ph.D. candidate Md Abdur Rahaman’s dissertation studies brain data to understand how changes in brain activity shape behavior.

Computational tools Rahaman developed for his dissertation look for informative patterns between the brain and behavior. Successful tests of his algorithms show promise to help doctors diagnose mental health disorders and design individualized treatment plans for patients.

“I've always been fascinated by the human brain and how it defines who we are,” Rahaman said.

“The fact that so many people silently suffer from neuropsychiatric disorders, while our understanding of the brain remains limited, inspired me to develop tools that bring greater clarity to this complexity and offer hope through more compassionate, data-driven care.”

Rahaman’s dissertation introduces a framework focusing on granular factoring. This computing technique stratifies brain data into smaller, localized subgroups, making it easier for computers and researchers to study data and find meaningful patterns.

Granular factoring overcomes the challenges of size and heterogeneity in neurological data science. Brain data is obtained from neuroimaging, genomics, behavioral datasets, and other sources. The large size of each source makes it a challenge to study them individually, let alone analyze them simultaneously, to find hidden inferences.

Rahaman’s research allows researchers and physicians to move past one-size-fits-all approaches. Instead of manually reviewing tests and scans, algorithms look for patterns and biomarkers in the subgroups that otherwise go undetected, especially ones that indicate neuropsychiatric disorders.

“My dissertation advances the frontiers of computational neuroscience by introducing scalable and interpretable models that navigate brain heterogeneity to reveal how neural dynamics shape behavior,” Rahaman said.

“By uncovering subgroup-specific patterns, this work opens new directions for understanding brain function and enables more precise, personalized approaches to mental health care.”

Rahaman defended his dissertation on April 14, the final step in completing his Ph.D. in computational science and engineering. He will graduate on May 1 at Georgia Tech’s Ph.D. Commencement.

After walking across the stage at McCamish Pavilion, Rahaman will take the next step in his career at Amazon, where he will work in generative artificial intelligence (AI).

Graduating from Georgia Tech is the summit of an educational trek spanning over a decade. Rahaman hails from Bangladesh where he graduated from Chittagong University of Engineering and Technology in 2013. He attained his master’s from the University of New Mexico in 2019 before starting at Georgia Tech.

“Munna is an amazingly creative researcher,” said Vince Calhoun, Rahaman’s advisor. Calhoun is the founding director of the Translational Research in Neuroimaging and Data Science Center (TReNDS).

TReNDS is a tri-institutional center spanning Georgia Tech, Georgia State University, and Emory University that develops analytic approaches and neuroinformatic tools. The center aims to translate the approaches into biomarkers that address areas of brain health and disease.

“His work is moving the needle in our ability to leverage multiple sources of complex biological data to improve understanding of neuropsychiatric disorders that have a huge impact on an individual’s livelihood,” said Calhoun.

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Apr. 02, 2025

Kinaxis, a global leader in supply chain orchestration, and the NSF AI Institute for Advances in Optimization (AI4OPT) at Georgia Tech today announced a new co-innovation partnership. This partnership will focus on developing scalable artificial intelligence (AI) and optimization solutions to address the growing complexity of global supply chains. AI4OPT operates under Tech AI, Georgia Tech’s AI hub, bringing together interdisciplinary expertise to advance real-world AI applications.

This particular collaboration builds on a multi-year relationship between Kinaxis and Georgia Tech, strengthening their shared commitment to turn academic innovation into real-world supply chain impact. The collaboration will span joint research, real-world applications, thought leadership, guest lectures, and student internships.

“In collaboration with AI4OPT, Kinaxis is exploring how the fusion of machine learning and optimization may bring a step change in capabilities for the next generation of supply chain management systems,” said Pascal Van Hentenryck, the A. Russell Chandler III Chair and professor at Georgia Tech, and director of AI4OPT and Tech AI at Georgia Tech.

Kinaxis’ AI-infused supply chain orchestration platform, Maestro™, combines proprietary technologies and techniques to deliver real-time transparency, agility, and decision-making across the entire supply chain — from multi-year strategic orchestration to last-mile delivery. As global supply chains face increasing disruptions from tariffs, pandemics, extreme weather, and geopolitical events, the Kinaxis–AI4OPT partnership will focus on developing AI-driven strategies to enhance companies’ responsiveness and resilience.

“At Kinaxis, we recognize the vital role that academic research plays in shaping the future of supply chain orchestration,” said Chief Technology Officer Gelu Ticala. “By partnering with world-class institutions like Georgia Tech, we’re closing the gap between AI innovation and implementation, bringing cutting-edge ideas into practice to solve the industry’s most pressing challenges.”

With more than 40 years of supply chain leadership, Kinaxis supports some of the world’s most complex industries, including high-tech, life sciences, industrial, mobility, consumer products, chemical, and oil and gas. Its customers include Unilever, P&G, Ford, Subaru, Lockheed Martin, Raytheon, Ipsen, and Santen.

About Kinaxis

Kinaxis is a global leader in modern supply chain orchestration, powering complex global supply chains and supporting the people who manage them, in service of humanity. Our powerful, AI-infused supply chain orchestration platform, Maestro™, combines proprietary technologies and techniques that provide full transparency and agility across the entire supply chain — from multi-year strategic planning to last-mile delivery. We are trusted by renowned global brands to provide the agility and predictability needed to navigate today’s volatility and disruption. For more news and information, please visit kinaxis.com or follow us on LinkedIn.

About AI4OPT

The NSF AI Institute for Advances in Optimization (AI4OPT) is one of the 27 National Artificial Intelligence Research Institutes set up by the National Science Foundation to conduct use-inspired research and realize the potential of AI. The AI Institute for Advances in Optimization (AI4OPT) is focused on AI for Engineering and is conducting cutting-edge research at the intersection of learning, optimization, and generative AI to transform decision making at massive scales, driven by applications in supply chains, energy systems, chip design and manufacturing, and sustainable food systems. AI4OPT brings together over 80 faculty and students from Georgia Tech, UC Berkeley, University of Southern California, UC San Diego, Clark Atlanta University, and the University of Texas at Arlington, working together with industrial partners that include Intel, Google, UPS, Ryder, Keysight, Southern Company, and Los Alamos National Laboratory. To learn more, visit ai4opt.org.

About Tech AI

Tech AI is Georgia Tech's hub for artificial intelligence research, education, and responsible deployment. With over $120 million in active AI research funding, including more than $60 million in NSF support for five AI Research Institutes, Tech AI drives innovation through cutting-edge research, industry partnerships, and real-world applications. With over 370 papers published at top AI conferences and workshops, Tech AI is a leader in advancing AI-driven engineering, mobility, and enterprise solutions. Through strategic collaborations, Tech AI bridges the gap between AI research and industry, optimizing supply chains, enhancing cybersecurity, advancing autonomous systems, and transforming healthcare and manufacturing. Committed to workforce development, Tech AI provides AI education across all levels, from K-12 outreach to undergraduate and graduate programs, as well as specialized certifications. These initiatives equip students with hands-on experience, industry exposure, and the technical expertise needed to lead in AI-driven industries. Bringing AI to the world through innovation, collaboration, and partnerships. Visit tech.ai.gatech.edu.

News Contact

Angela Barajas Prendiville | Director of Media Relations

aprendiville@gatech.edu

Feb. 14, 2025

Men and women in California put their lives on the line when battling wildfires every year, but there is a future where machines powered by artificial intelligence are on the front lines, not firefighters.

However, this new generation of self-thinking robots would need security protocols to ensure they aren’t susceptible to hackers. To integrate such robots into society, they must come with assurances that they will behave safely around humans.

This raises the question: can you guarantee the safety of something that doesn’t exist yet? Assistant Professor Glen Chou hopes to do so by developing algorithms that will enable autonomous systems to learn and adapt while acting with safety and security assurances.

He plans to launch research initiatives, in collaboration with the School of Cybersecurity and Privacy and the Daniel Guggenheim School of Aerospace Engineering, to secure this new technological frontier as it develops.

“To operate in uncertain real-world environments, robots and other autonomous systems need to leverage and adapt a complex network of perception and control algorithms to turn sensor data into actions,” he said. “To obtain realistic assurances, we must do a joint safety and security analysis on these sensors and algorithms simultaneously, rather than one at a time.”

This end-to-end method would proactively look for flaws in the robot’s systems rather than wait for them to be exploited. This would lead to intrinsically robust robotic systems that can recover from failures.

Chou said this research will be useful in other domains, including advanced space exploration. If a space rover is sent to one of Saturn’s moons, for example, it needs to be able to act and think independently of scientists on Earth.

Aside from fighting fires and exploring space, this technology could perform maintenance in nuclear reactors, automatically maintain the power grid, and make autonomous surgery safer. It could also bring assistive robots into the home, enabling higher standards of care.

This is a challenging domain where safety, security, and privacy concerns are paramount due to frequent, close contact with humans.

This will start in the newly established Trustworthy Robotics Lab at Georgia Tech, which Chou directs. He and his Ph.D. students will design principled algorithms that enable general-purpose robots and autonomous systems to operate capably, safely, and securely with humans while remaining resilient to real-world failures and uncertainty.

Chou earned dual bachelor’s degrees in electrical engineering and computer sciences and in mechanical engineering from the University of California, Berkeley in 2017, followed by a master’s and a Ph.D. in electrical and computer engineering from the University of Michigan in 2019 and 2022, respectively. He was a postdoc at the MIT Computer Science & Artificial Intelligence Laboratory before joining Georgia Tech in November 2024. He is a recipient of the National Defense Science and Engineering Graduate fellowship and the NSF Graduate Research fellowship, and was named a Robotics: Science and Systems Pioneer in 2022.

News Contact

John (JP) Popham

Communications Officer II

College of Computing | School of Cybersecurity and Privacy

Dec. 18, 2024

As we go through our daily routines of work, chores, errands, and leisure pursuits, most of us take our mobility for granted. Yet many people suffer from permanent or temporary mobility issues due to neurological disorders, stroke, injury, and age-related causes. Research in the field of robotic exoskeletons has shown significant potential to provide assistive support for patients with permanent mobility constraints, and to serve as an effective additional tool for rehabilitation and recovery after injury.

Though the field has made great progress in the hardware and devices for these assistive technologies, limitations remain in ease of use and in the ability to move from walking to running, from flat ground to slopes and stairs, and across different terrains. Recent exoskeleton controllers that respond to the user’s environment through user-based variables, such as gait and slope calculations, provide rapid yet imprecise outputs. More recent inquiry into data-driven improvements, such as vision-based labeling and classification, is an extremely promising step toward a truly synchronous interface between user and device. A major hindrance to this data-driven approach is the need for burdensome mounted cameras and on-board computing to adjust in real time to the terrain encountered during use.

To address these barriers, Aaron Young, Associate Professor in the Woodruff School of Mechanical Engineering and Director of the Exoskeleton and Prosthetic Intelligent Controls (EPIC) Lab, and Dawit Lee, Postdoctoral Scholar at Stanford, have created an artificial intelligence (AI)-based universal exoskeleton controller that uses information from onboard mechanical sensors without the added weight and complexity of mounted vision-based systems. The new work, published in Science Advances, presents a controller that holistically captures the major variations encountered during community walking in real time. The team combined data from the Americans with Disabilities Act (ADA) building guidelines, which characterize ambulatory terrains in degrees of slope, with a gait phase estimator to switch assistance types dynamically between multiple terrains and slopes and deliver that assistance to the user with little to no delay.
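As a rough illustration of how a continuous slope estimate and a gait phase estimate might jointly set an assistance level, consider the minimal sketch below. It is not the published controller: the function names, the linear slope scaling, the half-sine phase profile, and the torque values are all assumptions chosen for this example. The 1:12 ramp used in the usage note is the maximum running slope commonly cited from the ADA standards.

```python
import math


def slope_degrees(rise, run):
    """Convert rise over run of the walking surface into degrees of slope."""
    return math.degrees(math.atan2(rise, run))


def assistance_torque(rise, run, gait_phase,
                      base_nm=5.0, gain_nm_per_deg=0.5, max_nm=20.0):
    """Return a hypothetical knee assistance torque in newton-meters.

    gait_phase is the fraction of the stride completed, in [0, 1).
    All numeric defaults are illustrative assumptions, not measured values.
    """
    if not 0.0 <= gait_phase < 1.0:
        raise ValueError("gait_phase must be in [0, 1)")

    # Peak torque grows linearly with slope magnitude, capped at max_nm.
    peak = min(base_nm + gain_nm_per_deg * abs(slope_degrees(rise, run)), max_nm)

    # Assumed profile: a half-sine over the first half of the stride,
    # and no assistance during the second half.
    if gait_phase < 0.5:
        profile = math.sin(math.pi * gait_phase / 0.5)
    else:
        profile = 0.0
    return peak * profile
```

For example, `slope_degrees(1, 12)` evaluates to roughly 4.76 degrees, and `assistance_torque(1, 12, 0.25)` returns a larger value than `assistance_torque(0, 1, 0.25)` because the surface is steeper. Using one continuous slope variable means the same function covers level ground, ramps, and stairs without a separate mode classifier.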

In this work, we have created a new, open-source knee exoskeleton design that is intended to support community mobility. Knee assist devices have tremendous value in activities such as sit-to-stand, stairs, and ramps where we use our biological knees substantially to accomplish these tasks. The neat accomplishment in this work is that by leveraging AI, we avoid the need to classify these different modes discretely but rather have a single continuous variable (in this case rise over run of the surface) to enable continuous and unified control over common ambulatory tasks such as walking, stairs, and ramps. We demonstrate that on novel users of the device, we can track both the environment and the user’s gait state with very high accuracy out of the lab in community settings. It is an exciting time in the field as we see more studies, such as this one, showing promise in tackling real-world mobility challenges.

The assistance approach using our intelligent controller, presented in this work, provides users with support at the right timing and with a magnitude that closely matches the varying biomechanical effort they produce as they move through the community. Our assistance approach was preferred for community navigation and was more effective in reducing the user’s energy consumption compared to conventional methods. We also open-sourced the design of the robotic knee exoskeleton hardware and the dataset used to train the models with this publication which allows other researchers to build upon our developments and further advance the field. This work demonstrates an exciting example of AI integration into a wearable robotic system, showcasing its successful outcomes and significant potential.

- Dawit Lee; Postdoctoral Scholar, Stanford

Using this combination of a universal slope estimator and a gait phase estimator, the team achieved results in the dynamic modulation of exoskeleton assistance that previous approaches had not achieved. The results move the field closer to an adaptive and effective assistive technology that integrates seamlessly into the daily lives of individuals, promoting enhanced mobility and overall well-being. This work also has the potential to enable a mode-specific assistance approach tailored to the user’s specific biomechanical needs.

- Christa M. Ernst; Research Communications Program Manager

Original Publication

Dawit Lee, Sanghyub Lee, and Aaron J. Young, “AI-Driven Universal Lower-Limb Exoskeleton System for Community Ambulation,” Science Advances

Prior Related Work

D. Lee, I. Kang, D. D. Molinaro, A. Yu, A. J. Young, Real-time user-independent slope prediction using deep learning for modulation of robotic knee exoskeleton assistance. IEEE Robot. Autom. Lett. 6, 3995–4000 (2021).

Funding Provided by

NIH Director’s New Innovator Award DP2-HD111709

Dec. 03, 2024

A new machine learning (ML) model from Georgia Tech could protect communities from diseases, better manage electricity consumption in cities, and promote business growth, all at the same time.

Researchers from the School of Computational Science and Engineering (CSE) created the Large Pre-Trained Time-Series Model (LPTM) framework. LPTM is a single foundational model that completes forecasting tasks across a broad range of domains.

Along with performing as well as or better than models purpose-built for their applications, LPTM requires 40% less data and 50% less training time than current baselines. In some cases, LPTM can be deployed without any training data.

The key to LPTM is that it is pre-trained on datasets from different industries like healthcare, transportation, and energy. The Georgia Tech group created an adaptive segmentation module to make effective use of these vastly different datasets.

The Georgia Tech researchers will present LPTM in Vancouver, British Columbia, Canada, at the 2024 Conference on Neural Information Processing Systems (NeurIPS 2024). NeurIPS is one of the world’s most prestigious conferences on artificial intelligence (AI) and ML research.

“The foundational model paradigm started with text and image, but people haven’t explored time-series tasks yet because those were considered too diverse across domains,” said B. Aditya Prakash, one of LPTM’s developers.

“Our work is a pioneer in this new area of exploration where only few attempts have been made so far.”


Foundational models are trained with data from different fields, making them powerful tools when assigned tasks. Foundational models drive GPT, DALL-E, and other popular generative AI platforms used today. LPTM is different though because it is geared toward time-series, not text and image generation.

The Georgia Tech researchers trained LPTM on data spanning epidemics, macroeconomics, power consumption, traffic and transportation, stock markets, and human motion and behavior.

After training, the group pitted LPTM against 17 other models, with each model trying to forecast as closely as possible on nine real-world benchmarks. LPTM performed the best on five datasets and placed second on the other four.

The nine benchmarks contained data from real-world collections. These included the spread of influenza in the U.S. and Japan, electricity, traffic, and taxi demand in New York, and financial markets.

The competitor models were purpose-built for their fields. While each model performed well on one or two benchmarks closest to its designed purpose, the models ranked in the middle or bottom on others.

In another experiment, the Georgia Tech group tested LPTM against seven baseline models on the same nine benchmarks in zero-shot forecasting tasks. Zero-shot means the model is used out of the box, without any training specific to the task at hand. LPTM outperformed every model across all benchmarks in this trial.

LPTM performed consistently as a top-runner on all nine benchmarks, demonstrating the model’s potential to achieve superior forecasting results across multiple applications with less data and fewer resources.

“Our model also goes beyond forecasting and helps accomplish other tasks,” said Prakash, an associate professor in the School of CSE.

“Classification is a useful time-series task that allows us to understand the nature of the time-series and label whether that time-series is something we understand or is new.”

One reason traditional models are custom-built to their purpose is that fields differ in reporting frequency and trends.

For example, epidemic data is often reported weekly and goes through seasonal peaks with occasional outbreaks. Economic data is captured quarterly and typically remains consistent and monotone over time.

LPTM’s adaptive segmentation module allows it to overcome these timing differences across datasets. When LPTM receives a dataset, the module breaks the data into segments of different sizes. It then scores the possible ways to segment the data and chooses the segmentation from which it is easiest to learn useful patterns.
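The general idea of scoring candidate segmentations and keeping the best one can be sketched in a few lines. This is a toy illustration, not LPTM’s actual module: the scoring rule (squared error within each segment plus a fixed per-segment penalty), the function names, and the sample series are all assumptions made for this example.

```python
def segment_cost(series, start, end):
    """Sum of squared deviations from the mean over series[start:end]."""
    chunk = series[start:end]
    mean = sum(chunk) / len(chunk)
    return sum((x - mean) ** 2 for x in chunk)


def best_segmentation(series, penalty=1.0, min_len=1):
    """Return (total_cost, boundaries) for the lowest-cost segmentation.

    Dynamic programming considers every split point, so all contiguous
    segmentations are scored without listing them one by one.
    boundaries is a list of indices such as [0, 4, 8], meaning the
    segments series[0:4] and series[4:8].
    """
    n = len(series)
    inf = float("inf")
    # best[i] holds the cheapest (cost, boundaries) for the prefix series[:i].
    best = [(0.0, [0])] + [(inf, [])] * n
    for end in range(1, n + 1):
        for start in range(0, end - min_len + 1):
            prev_cost, prev_bounds = best[start]
            if prev_cost == inf:
                continue  # this prefix cannot be segmented under min_len
            cost = prev_cost + segment_cost(series, start, end) + penalty
            if cost < best[end][0]:
                best[end] = (cost, prev_bounds + [end])
    return best[n]


# Hypothetical series with an obvious change halfway through.
cost, bounds = best_segmentation([1, 1, 1, 1, 10, 10, 10, 10], penalty=1.0)
print(bounds)  # [0, 4, 8]
```

The per-segment penalty keeps the search from splitting every point into its own segment. A learned scoring function, as the article describes, would take the place of the squared-error rule used here.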

LPTM’s performance, enhanced through the innovation of adaptive segmentation, earned the model acceptance to NeurIPS 2024 for presentation. NeurIPS is one of three primary international conferences on high-impact research in AI and ML. NeurIPS 2024 occurs Dec. 10-15.

Ph.D. student Harshavardhan Kamarthi partnered with Prakash, his advisor, on LPTM. The duo are among the 162 Georgia Tech researchers presenting over 80 papers at the conference.

Prakash is one of 46 Georgia Tech faculty with research accepted at NeurIPS 2024. Nine School of CSE faculty members, nearly one-third of the body, are authors or co-authors of 17 papers accepted at the conference.

Along with sharing their research at NeurIPS 2024, Prakash and Kamarthi released an open-source library of foundational time-series modules that data scientists can use in their applications.

“Given the interest in AI from all walks of life, including business, social, and research and development sectors, a lot of work has been done and thousands of strong papers are submitted to the main AI conferences,” Prakash said.

“Acceptance of our paper speaks to the quality of the work and its potential to advance foundational methodology, and we hope to share that with a larger audience.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Oct. 18, 2024

The U.S. Department of Energy (DOE) has awarded Georgia Tech researchers a $4.6 million grant to develop improved cybersecurity protection for renewable energy technologies.

Associate Professor Saman Zonouz will lead the project and leverage the latest artificial intelligence (AI) technology to create Phorensics. The new tool will anticipate cyberattacks on critical infrastructure and give analysts an accurate reading of which vulnerabilities were exploited.

“This grant enables us to tackle one of the crucial challenges facing national security today: our critical infrastructure resilience and post-incident diagnostics to restore normal operations in a timely manner,” said Zonouz.

“Together with our amazing team, we will focus on cyber-physical data recovery and post-mortem forensics analysis after cybersecurity incidents in emerging renewable energy systems.”

As the integration of renewable energy technology into national power grids increases, so does their vulnerability to cyberattacks. These threats put energy infrastructure at risk and pose a significant danger to public safety and economic stability. The AI behind Phorensics will allow analysts and technicians to scale security efforts to keep up with a growing power grid that is becoming more complex.

This effort is part of the Security of Engineering Systems (SES) initiative at Georgia Tech’s School of Cybersecurity and Privacy (SCP). SES has three pillars: research, education, and testbeds, with multiple ongoing large, sponsored efforts.

“We had a successful hiring season for SES last year and will continue filling several open tenure-track faculty positions this upcoming cycle,” said Zonouz.

“With top-notch cybersecurity and engineering schools at Georgia Tech, we have begun the SES journey with a dedicated passion to pursue building real-world solutions to protect our critical infrastructures, national security, and public safety.”

Zonouz is the director of the Cyber-Physical Systems Security Laboratory (CPSec) and is jointly appointed by Georgia Tech’s School of Cybersecurity and Privacy (SCP) and the School of Electrical and Computer Engineering (ECE).

The three Georgia Tech researchers joining him on this project are Brendan Saltaformaggio, associate professor in SCP and ECE; Taesoo Kim, jointly appointed professor in SCP and the School of Computer Science; and Animesh Chhotaray, research scientist in SCP.

Katherine Davis, associate professor at the Texas A&M University Department of Electrical and Computer Engineering, has partnered with the team to develop Phorensics. The team will also collaborate with the National Renewable Energy Laboratory (NREL) and industry partners on technology transfer and commercialization initiatives.

The Energy Department defines renewable energy as energy from unlimited, naturally replenished resources, such as the sun, tides, and wind. Renewable energy can be used for electricity generation, space and water heating and cooling, and transportation.

News Contact

John Popham

Communications Officer II

College of Computing | School of Cybersecurity and Privacy

Oct. 16, 2024

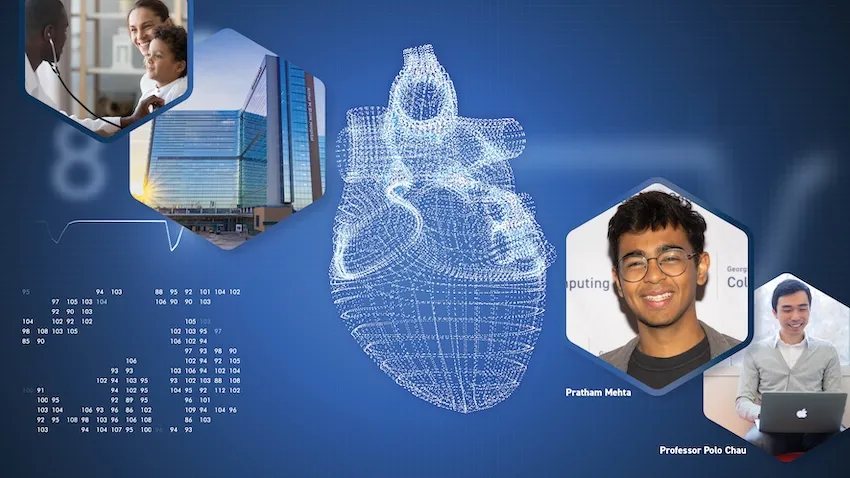

A new surgery planning tool powered by augmented reality (AR) is in development for doctors who need closer collaboration when planning heart operations. Promising results from a recent usability test have moved the platform one step closer to everyday use in hospitals worldwide.

Georgia Tech researchers partnered with medical experts from Children’s Healthcare of Atlanta (CHOA) to develop and test ARCollab. The iOS-based app leverages advanced AR technologies to let doctors collaborate and interact with a patient’s 3D heart model when planning surgeries.

The usability evaluation demonstrates the app’s effectiveness, finding that ARCollab is easy to use and understand, fosters collaboration, and improves surgical planning.

“This tool is a step toward easier collaborative surgical planning. ARCollab could reduce the reliance on physical heart models, saving hours and even days of time while maintaining the collaborative nature of surgical planning,” said M.S. student Pratham Mehta, the app’s lead researcher.

“Not only can it benefit doctors when planning for surgery, it may also serve as a teaching tool to explain heart deformities and problems to patients.”

Two cardiologists and three cardiothoracic surgeons from CHOA tested ARCollab. The two-day study ended with the doctors taking a 14-question survey assessing the app’s usability. The survey also solicited general feedback and top features.

The Georgia Tech group determined from the open-ended feedback that:

- ARCollab enables new collaboration capabilities that are easy to use and facilitate surgical planning.

- Anchoring the model to a physical space is important for better interaction.

- Portability and real-time interaction are crucial for collaborative surgical planning.

Users rated each of the 14 questions on a 7-point Likert scale, with one being “strongly disagree” and seven being “strongly agree.” The 14 questions were organized into five categories: overall, multi-user, model viewing, model slicing, and saving and loading models.

The multi-user category attained the highest rating with an average of 6.65. This included a unanimous 7.0 rating that it was easy to identify who was controlling the heart model in ARCollab. The scores also showed it was easy for users to connect with devices, switch between viewing and slicing, and view other users’ interactions.

The model slicing category received the lowest, though still strong, average of 5.5. These questions assessed how easy the finger gestures were to use and understand, and how useful it was to toggle slice direction.

Based on feedback, the researchers will explore adding support for remote collaboration. This would assist doctors in collaborating when not in a shared physical space. Another improvement is extending the save feature to support multiple states.

“The surgeons and cardiologists found it extremely beneficial for multiple people to be able to view the model and collaboratively interact with it in real-time,” Mehta said.

The user study took place in a CHOA classroom. CHOA also provided a 3D heart model for the test using anonymous medical imaging data. Georgia Tech’s Institutional Review Board (IRB) approved the study and the group collected data in accordance with Institute policies.

The five test participants regularly perform cardiovascular surgical procedures and are employed by CHOA.

The Georgia Tech group provided each participant with an iPad Pro with the latest iOS version and the ARCollab app installed. Using commercial devices and software meets the group’s intentions to make the tool universally available and deployable.

“We plan to continue iterating ARCollab based on the feedback from the users,” Mehta said.

“The participants suggested the addition of a ‘distance collaboration’ mode, enabling doctors to collaborate even if they are not in the same physical environment. This allows them to facilitate surgical planning sessions from home or otherwise.”

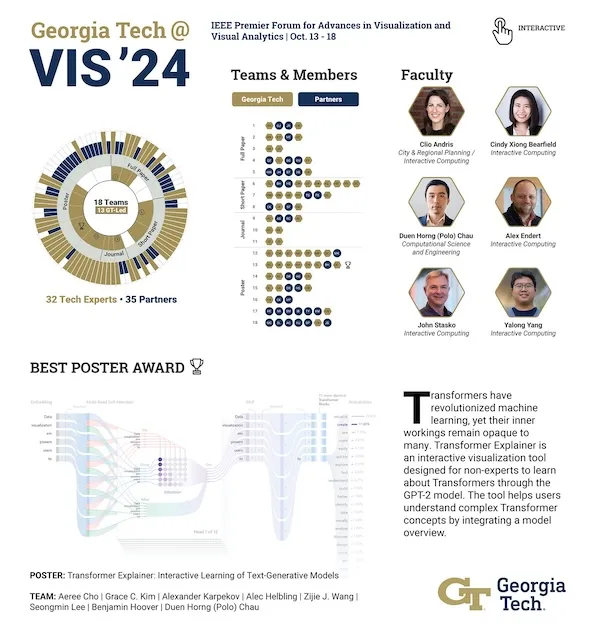

The Georgia Tech researchers are presenting ARCollab and the user study results at IEEE VIS 2024, the Institute of Electrical and Electronics Engineers (IEEE) visualization conference.

IEEE VIS is the world’s most prestigious conference for visualization research and the second-highest rated conference for computer graphics. It takes place virtually Oct. 13-18, moved from its venue in St. Pete Beach, Florida, due to Hurricane Milton.

The ARCollab research group's presentation at IEEE VIS comes months after they shared their work at the Conference on Human Factors in Computing Systems (CHI 2024).

Undergraduate student Rahul Narayanan and alumni Harsha Karanth (M.S. CS 2024) and Haoyang (Alex) Yang (CS 2022, M.S. CS 2023) co-authored the paper with Mehta. They study under Polo Chau, a professor in the School of Computational Science and Engineering.

The Georgia Tech group partnered with Dr. Timothy Slesnick and Dr. Fawwaz Shaw from CHOA on ARCollab’s development and user testing.

"I'm grateful for these opportunities since I get to showcase the team's hard work," Mehta said.

“I can meet other like-minded researchers and students who share these interests in visualization and human-computer interaction. There is no better form of learning.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Oct. 01, 2024

The Institute for Robotics and Intelligent Machines (IRIM) has launched a new initiatives program, starting with several winning proposals whose initiative leads will broaden the scope of IRIM’s research beyond its traditional core strengths. A major goal is to stimulate collaboration across areas not typically considered technical robotics, such as policy, education, and the humanities, and to open new inter-university and inter-agency collaboration routes. In addition to guiding their specific initiatives, these leads will serve as an informal internal advisory body for IRIM. Initiative leads will be announced annually, with existing leads considered for renewal based on their progress toward community-building and research goals. We hope that initiative leads will act as the “faculty face” of IRIM and communicate IRIM’s vision and activities to audiences both within and outside of Georgia Tech.

Meet 2024 IRIM Initiative Leads

Stephen Balakirsky; Regents' Researcher, Georgia Tech Research Institute & Panagiotis Tsiotras; David & Andrew Lewis Endowed Chair, Daniel Guggenheim School of Aerospace Engineering | Proximity Operations for Autonomous Servicing

Why It Matters: Proximity operations in space refer to the intricate and precise maneuvers and activities that spacecraft or satellites perform when they are in close proximity to each other, such as docking, rendezvous, or station-keeping. These operations are essential for a variety of space missions, including crewed spaceflights, satellite servicing, space exploration, and maintaining satellite constellations. While this is a very broad field, this initiative will concentrate on robotic servicing and associated challenges. In this context, robotic servicing is composed of proximity operations that are used for servicing and repairing satellites in space. In robotic servicing, robotic arms and tools perform maintenance tasks such as refueling, replacing components, or providing operation enhancements to extend a satellite's operational life or increase a satellite’s capabilities.

Our Approach: By forming an initiative in this important area, IRIM will open opportunities within the rapidly evolving space community. This will allow us to create proposals for organizations ranging from NASA and the Defense Advanced Research Projects Agency to the U.S. Air Force and U.S. Space Force. This will also position us to become national leaders in this area. While several universities have a robust robotics program and quite a few have a strong space engineering program, there are only a handful of academic units with the breadth of expertise to tackle this problem. Also, even fewer universities have the benefit of an experienced applied research partner, such as the Georgia Tech Research Institute (GTRI), to undertake large-scale demonstrations. Georgia Tech, having world-renowned programs in aerospace engineering and robotics, is uniquely positioned to be a leader in this field. In addition, creating a workshop in proximity operations for autonomous servicing will allow the GTRI and Georgia Tech space robotics communities to come together and better understand strengths and opportunities for improvement in our abilities.

Matthew Gombolay; Assistant Professor, Interactive Computing | Human-Robot Society in 2125: IRIM Leading the Way

Why It Matters: The coming robot “apocalypse” and foundation models captured the zeitgeist in 2023, with “ChatGPT” becoming a topic at the dinner table and the probability of various scenarios of AI-driven technological doom becoming a hotly debated topic on social media. Futuristic visions of ubiquitous embodied Artificial Intelligence (AI) and robotics have become tangible. The proliferation and effectiveness of first-person view drones in the Russo-Ukrainian War, autonomous taxi services along with their failures, and inexpensive robots (e.g., Tesla’s Optimus and Unitree’s G1) have made it seem like children alive today may have robots embedded in their everyday lives. Yet, there is a lack of trust in the public leadership bringing us into this future to ensure that robots are developed and deployed with beneficence.

Our Approach: This proposal seeks to assemble a team of bright, savvy operators across academia, government, media, nonprofits, industry, and community stakeholders to develop a roadmap for how we can be the most trusted voice to guide the public in the next 100 years of innovation in robotics here at the IRIM. We propose to carry out the activities necessary to develop a roadmap for Robots in 2125: Altruistic and Integrated Human-Robot Society. We also aim to build partnerships to promulgate these outcomes across Georgia Tech’s campus and internationally.

Gregory Sawicki; Joseph Anderer Faculty Fellow, School of Mechanical Engineering & Aaron Young; Associate Professor, Mechanical Engineering | Wearable Robotic Augmentation for Human Resilience

Why It Matters: The field of robotics continues to evolve beyond rigid, precision-controlled machines for amplifying production on manufacturing assembly lines toward soft, wearable systems that can mediate the interface between human users and their natural and built environments. Recent advances in materials science have made it possible to construct flexible garments with embedded sensors and actuators (e.g., exosuits). In parallel, computers continue to get smaller and more powerful, and state-of-the-art machine learning algorithms can extract useful information from more extensive volumes of input data in real time. Now is the time to embed lean, powerful, sensorimotor elements alongside high-speed and efficient data processing systems in a continuous wearable device.

Our Approach: The mission of the Wearable Robotic Augmentation for Human Resilience (WeRoAHR) initiative is to merge modern advances in sensing, actuation, and computing technology to imagine and create adaptive, wearable augmentation technology that can improve human resilience and longevity across the physiological spectrum — from behavioral to cellular scales. The near-term effort (~2-3 years) will draw on Georgia Tech’s existing ecosystem of basic scientists and engineers to develop WeRoAHR systems that will focus on key targets of opportunity to increase human resilience (e.g., improved balance, dexterity, and stamina). These initial efforts will establish seeds for growth intended to help launch larger-scale, center-level efforts (>5 years).

Panagiotis Tsiotras; David & Andrew Lewis Endowed Chair, Daniel Guggenheim School of Aerospace Engineering & Sam Coogan; Demetrius T. Paris Junior Professor, School of Electrical and Computer Engineering | Initiative on Reliable, Safe, and Secure Autonomous Robotics

Why It Matters: The design and operation of reliable systems is primarily an integration issue that involves not only each component (software, hardware) being safe and reliable but also the whole system being reliable (including the human operator). The necessity for reliable autonomous systems (including AI agents) is more pronounced for “safety-critical” applications, where the result of a wrong decision can be catastrophic. This is quite a different landscape from many other autonomous decision systems (e.g., recommender systems) where a wrong or imprecise decision is inconsequential.

Our Approach: This new initiative will investigate the development of protocols, techniques, methodologies, theories, and practices for designing, building, and operating safe and reliable AI and autonomous engineering systems. It will also help promote a culture of safety and accountability, grounded in rigorous objective metrics and methodologies, among designers and operators of AI, autonomous, and intelligent machines, so that such systems can be widely adopted in safety-critical areas with confidence. The initiative aims to establish Georgia Tech as the leader in the design of autonomous, reliable engineering robotic systems and to investigate the opportunity for a federally funded or industry-funded research center (National Science Foundation (NSF) Science and Technology Centers/Engineering Research Centers) in this area.

Colin Usher; Robotics Systems and Technology Branch Head, GTRI | Opportunities for Agricultural Robotics and New Collaborations

Why It Matters: The concepts for how robotics might be incorporated more broadly in agriculture vary widely, ranging from large-scale systems to teams of small systems operating in farms, enabling new possibilities. In addition, there are several application areas in agriculture, ranging from planting, weeding, crop scouting, and general growing to harvesting. Georgia Tech is not a land-grant university, which makes it more challenging for us to capture some of the opportunities in agricultural research. By partnering with a land-grant university such as the University of Georgia (UGA), we can pursue opportunities that, historically, were not available to us.

Our Approach: We plan to build collaborations first by leveraging relationships we have already formed within GTRI, Georgia Tech, and UGA. We will achieve this through a significant level of networking, supported by workshops and/or seminars with which to recruit faculty and form a roadmap for research within the respective universities. Our goal is to identify and pursue multiple opportunities for robotics-related research in both row-crop and animal-based agriculture. We believe that we have a strong opportunity, starting with formalizing a program with the partners we have worked with before, with the potential to improve and grow the research area by incorporating new faculty and staff with a unified vision of ubiquitous robotics systems in agriculture. We plan to achieve this through scheduled visits with interested faculty, attendance at relevant conferences, and ultimately hosting a workshop to formalize and define a research roadmap.

Ye Zhao; Assistant Professor, School of Mechanical Engineering | Safe, Social, & Scalable Human-Robot Teaming: Interaction, Synergy, & Augmentation

Why It Matters: Collaborative robots in unstructured environments such as construction and warehouse sites show great promise in working with humans on repetitive and dangerous tasks to improve efficiency and productivity. However, the pre-programmed and inflexible interaction behaviors of existing robots make the collaboration process less natural and less flexible. Therefore, it is crucial to improve the physical interaction behaviors of collaborative human-robot teams.

Our Approach: This proposal will advance the understanding of the bi-directional influence and interaction of human-robot teaming for complex physical activities in dynamic environments by developing new methods to predict worker intention via multi-modal wearable sensing, reasoning about complex human-robot-workspace interaction, and adaptively planning the robot’s motion considering both human teaming dynamics and physiological and cognitive states. More importantly, our team plans to prioritize efforts to (i) broaden the scope of IRIM’s autonomy research by incorporating psychology, cognitive science, and manufacturing research not typically considered technical robotics research areas; (ii) initiate new IRIM education, training, and outreach programs through collaboration with team members from various Georgia Tech educational and outreach programs (including Project ENGAGES, VIP, and CEISMC) as well as the AUCC (the world’s largest consortium of African American private institutions of higher education), which comprises Clark Atlanta University, Morehouse College, and Spelman College; and (iii) aim for large governmental grants such as DOD MURI, NSF NRT, and NSF Future of Work programs.

-Christa M. Ernst

Sep. 19, 2024

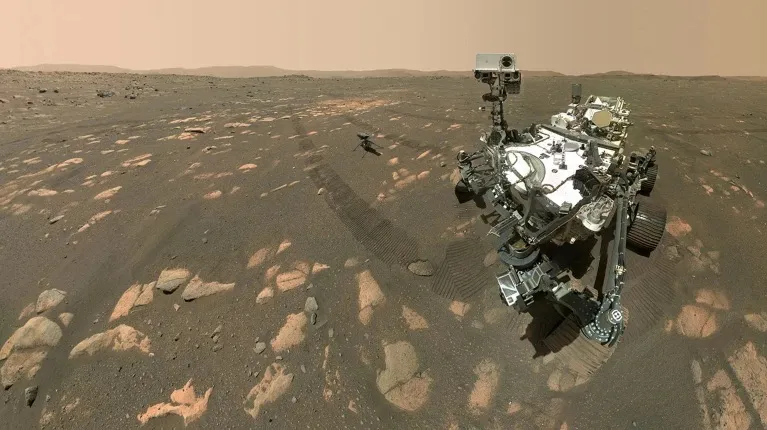

A new algorithm tested on NASA’s Perseverance Rover on Mars may lead to better forecasting of hurricanes, wildfires, and other extreme weather events that impact millions globally.

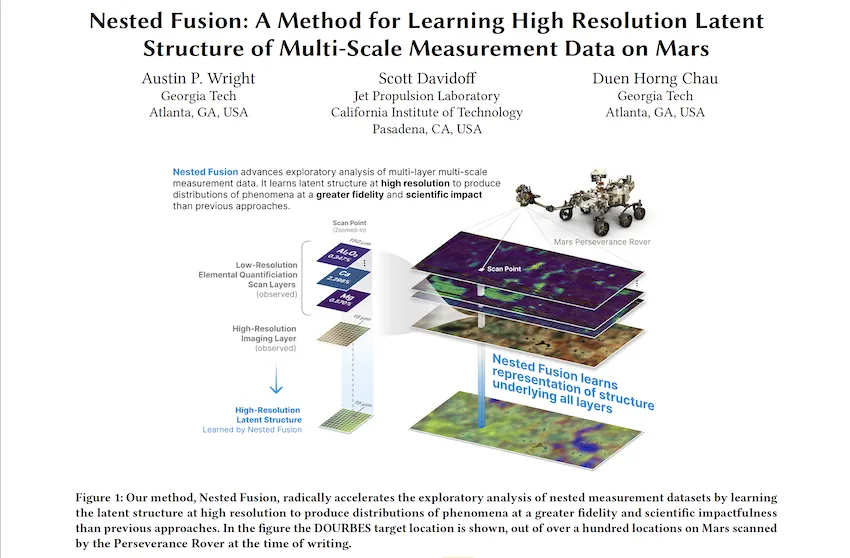

Georgia Tech Ph.D. student Austin P. Wright is first author of a paper that introduces Nested Fusion. The new algorithm improves scientists’ ability to search for past signs of life on the Martian surface.

In addition to supporting NASA’s Mars 2020 mission, scientists from other fields working with large, overlapping datasets can apply Nested Fusion’s methods to their own studies.

Wright presented Nested Fusion at the 2024 International Conference on Knowledge Discovery and Data Mining (KDD 2024) where it was a runner-up for the best paper award. KDD is widely considered the world's most prestigious conference for knowledge discovery and data mining research.

“Nested Fusion is really useful for researchers in many different domains, not just NASA scientists,” said Wright. “The method visualizes complex datasets that can be difficult to get an overall view of during the initial exploratory stages of analysis.”

Nested Fusion combines datasets with different resolutions to produce a single, high-resolution visual distribution. Using this method, NASA scientists can more easily analyze multiple datasets from various sources at the same time. This can lead to faster studies of Mars’ surface composition to find clues of previous life.

The algorithm demonstrates how data science impacts traditional scientific fields like chemistry, biology, and geology.

Even further, Wright is developing Nested Fusion applications to model shifting climate patterns, plant and animal life, and other concepts in the earth sciences. The same method can combine overlapping datasets from satellite imagery, biomarkers, and climate data.

“Users have extended Nested Fusion and similar algorithms toward earth science contexts, which we have received very positive feedback,” said Wright, who studies machine learning (ML) at Georgia Tech.

“Cross-correlational analysis takes a long time to do and is not done in the initial stages of research when patterns appear and form new hypotheses. Nested Fusion enables people to discover these patterns much earlier.”

Wright is the data science and ML lead for PIXLISE, the software that NASA JPL scientists use to study data from the Mars Perseverance Rover.

Perseverance uses its Planetary Instrument for X-ray Lithochemistry (PIXL) to collect data on mineral composition of Mars’ surface. PIXL’s two main tools that accomplish this are its X-ray Fluorescence (XRF) Spectrometer and Multi-Context Camera (MCC).

When PIXL scans a target area, it creates two co-aligned datasets from the components. XRF collects a sample's fine-scale elemental composition. MCC produces images of a sample to gather visual and physical details like size and shape.

A single XRF spectrum corresponds to approximately 100 MCC imaging pixels for every scan point. Each tool’s unique resolution makes mapping between overlapping data layers challenging. However, Wright and his collaborators designed Nested Fusion to overcome this hurdle.
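To make the resolution mismatch concrete, here is a minimal Python sketch of the nested relationship between the two data layers. It assumes a hypothetical layout in which each XRF scan point covers a 10 x 10 block of MCC pixels (about 100 pixels per spectrum, as described above). The block size, data shapes, labels, and function names are illustrative assumptions, and the simple pairing step shown here is not the Nested Fusion algorithm itself.

```python
# Illustrative sketch only: hypothetical shapes and names, NOT the Nested Fusion algorithm.
# Assumption: each XRF scan point covers a BLOCK x BLOCK patch of MCC pixels.

BLOCK = 10  # 10 x 10 = 100 image pixels per XRF spectrum (assumed layout)


def xrf_index_for_pixel(row, col, block=BLOCK):
    """Return the (row, col) of the XRF scan point whose patch contains an MCC pixel."""
    return row // block, col // block


def pair_pixels_with_spectra(mcc_image, xrf_grid, block=BLOCK):
    """Attach the enclosing coarse XRF measurement to every fine MCC pixel.

    mcc_image: 2D list of pixel values (fine resolution).
    xrf_grid:  2D list of XRF measurements (coarse resolution).
    Returns a 2D list of (pixel_value, xrf_measurement) tuples at pixel resolution.
    """
    paired = []
    for r, pixel_row in enumerate(mcc_image):
        paired_row = []
        for c, pixel in enumerate(pixel_row):
            xr, xc = xrf_index_for_pixel(r, c, block)
            paired_row.append((pixel, xrf_grid[xr][xc]))
        paired.append(paired_row)
    return paired


if __name__ == "__main__":
    # Toy data: a 2 x 2 grid of XRF points over a 20 x 20 pixel image.
    # The string labels stand in for full spectra and are made up for this example.
    xrf_grid = [["Fe-rich", "Ca-rich"], ["Si-rich", "Mg-rich"]]
    mcc_image = [[(r * 20 + c) % 256 for c in range(20)] for r in range(20)]

    paired = pair_pixels_with_spectra(mcc_image, xrf_grid)
    print(paired[0][0])    # a pixel in the top-left block
    print(paired[19][19])  # a pixel in the bottom-right block
```

Copying each coarse measurement down to its 100 pixels is the simplest way to line the layers up, but it treats every pixel in a block as chemically identical. That limitation is one way to see why mapping between the layers is described as challenging.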

In addition to advancing data science, Nested Fusion improves NASA scientists’ workflow. Using the method, a single scientist can form an initial estimate of a sample’s mineral composition in a matter of hours. Before Nested Fusion, the same task required days of collaboration between teams of experts on each instrument.

“I think one of the biggest lessons I have taken from this work is that it is valuable to always ground my ML and data science problems in actual, concrete use cases of our collaborators,” Wright said.

“I learn from collaborators what parts of data analysis are important to them and the challenges they face. By understanding these issues, we can discover new ways of formalizing and framing problems in data science.”

Wright presented Nested Fusion at KDD 2024, held Aug. 25-29 in Barcelona, Spain. KDD is an official special interest group of the Association for Computing Machinery. The conference is one of the world’s leading forums for knowledge discovery and data mining research.

Nested Fusion won runner-up for the best paper in the applied data science track, which comprised more than 150 papers. Hundreds of other papers were presented at the conference’s research track, workshops, and tutorials.

Wright’s mentors, Scott Davidoff and Polo Chau, co-authored the Nested Fusion paper. Davidoff is a principal research scientist at the NASA Jet Propulsion Laboratory. Chau is a professor at the Georgia Tech School of Computational Science and Engineering (CSE).

“I was extremely happy that this work was recognized with the best paper runner-up award,” Wright said. “This kind of applied work can sometimes be hard to find the right academic home, so finding communities that appreciate this work is very encouraging.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu
