Apr. 20, 2026

How did the earliest life on Earth build complex biological machinery with so few tools? A new study explores how the simplest building blocks of proteins — once limited to just half of today’s amino acids — could still form the sophisticated structures life depends on.

The paper, The Borderlands of Foldability: Lessons from Simplified Proteins, is a meta-analysis of six decades of protein research and reveals that ancient proteins may have been far more complicated and dynamic than previously thought.

Recently published in the journal Trends in Chemistry, the study includes Georgia Tech researchers Lynn Kamerlin, professor in the School of Chemistry and Biochemistry and Georgia Research Alliance Vasser-Woolley Chair in Molecular Design, and Quantitative Biosciences Ph.D. candidate Alfie-Louise Brownless.

Co-authors also include Institute of Science Tokyo graduate student Koh Seya and Liam M. Longo, who serves as a specially appointed associate professor at Science Tokyo and as an affiliate research scientist at the Blue Marble Space Institute of Science.

The research has implications ranging from the origins of life and the search for life in the universe to cutting-edge medical innovation. “One of the biggest unanswered questions in science is how life first began,” says Kamerlin, who is a corresponding author of the study. “Understanding how the first protein-like molecules formed and what the earliest proteins may have been like is a key part of that puzzle.”

“Proteins power our bodies — and all life on Earth,” she adds. “Simply put, the evolution of proteins is the reason that we’re able to have this conversation at all.”

A Protein Folding Paradox

If proteins are the scaffolding of life, amino acids are the components that make up that scaffolding. “Today, an average protein is constructed from a chain of about 300 amino acids, involving 20 different types of amino acids,” Kamerlin shares. Proteins fold when these chains twist into a specific three-dimensional shape, creating structures critical for biology.

However, while these folds are essential, exactly how a protein knows which way to fold remains a mystery. “We know that proteins didn’t just fold randomly,” Kamerlin shares, “because randomly trying all possible configurations would take a protein longer than the age of the universe.”

It’s a cornerstone problem in biological science called “Levinthal’s Paradox,” and highlights a fundamental mystery: Proteins fold incredibly quickly into very specific combinations — but like a sheet of paper spontaneously folding into an origami swan, researchers don’t know how proteins “choose” the folds they make.
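The scale Kamerlin describes can be checked with rough arithmetic. The sketch below is a back-of-the-envelope estimate only: the chain length, the number of conformations per residue, and the sampling rate are illustrative assumptions, not figures from the study.

```python
# Back-of-the-envelope version of Levinthal's estimate.
# Every constant here is an illustrative assumption, not a value from the paper.
RESIDUES = 100               # a short chain (the article cites ~300 as average)
STATES_PER_RESIDUE = 3       # assumed number of conformations per residue
SAMPLES_PER_SECOND = 1e13    # assumed conformations tried per second
SECONDS_PER_YEAR = 3.156e7
AGE_OF_UNIVERSE_YEARS = 1.38e10


def exhaustive_search_years(residues, states, rate):
    """Years needed to try every conformation once at the given rate."""
    conformations = states ** residues
    return conformations / rate / SECONDS_PER_YEAR


years = exhaustive_search_years(RESIDUES, STATES_PER_RESIDUE, SAMPLES_PER_SECOND)
ratio = years / AGE_OF_UNIVERSE_YEARS
print(f"{years:.1e} years, about {ratio:.1e} times the age of the universe")
```

Even with these generous assumptions, a blind search takes many orders of magnitude longer than the universe has existed, which is why researchers conclude that folding cannot be a random trial of every configuration.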

“We can predict what a protein will look like, but can’t tell you how it got there,” Kamerlin adds. “That’s what we’re interested in exploring: how small early proteins developed into the complex proteins that support every living thing on today’s Earth.”

Simple Letters, Sophisticated Structures

Early proteins likely had access to just half of today’s amino acids. “About 10-12 amino acids were likely available on early Earth,” Kamerlin says. Like writing a story with just the letters “A” through “L,” researchers assumed that the ‘vocabulary’ proteins could build from such a limited amino acid alphabet would also be constrained.

“There is a language to protein folding,” Kamerlin explains. “That language is hidden in their structures. Our research is in trying to understand the rules — the grammar and vocabulary that dictate a protein fold.”

The grammar they discovered was surprising: with a combination of creative techniques and environmental support, complex structures can arise from limited amino acid alphabets.

“We found that it is possible to develop complex folds with very simple tools — and certain environments, like salty ones, can help support that,” Kamerlin shares. “Early proteins could also cross-link and associate, interacting like LEGO blocks to create more complex structures.”

Pioneering Proteins

Now, the team is conducting research in environments that could mimic conditions on early Earth — aiming to discover more about how these regions could have given rise to today’s complex proteins. “This aspect of our research also ties into the amazing space research happening at Georgia Tech,” Kamerlin says. “While we’re interested in understanding early life on Earth, our work could help inform where best to look for evidence of life beyond our planet.”

Kamerlin specializes in creating computer models that simulate possible scenarios – creating an opportunity to quickly and efficiently test many theories. The most compelling of these can then be tested by her collaborator and co-author at Science Tokyo, Liam Longo, in lab experiments.

Protein folding is also at the forefront of medical innovation, ranging from diagnostic tools to cancer treatments and neurodegenerative diseases. “In the broader scope, we’re interested in discovering what we can design, what we can stress test, and what we can reconstruct with AI and other computational tools,” Kamerlin says. “Because if you can understand how proteins fold, you gain the ability to design them.”

Funding: NASA, the Human Frontier Science Program, and the Knut and Alice Wallenberg Foundation

Apr. 13, 2026

Artificial intelligence has been touted as the most transformative technology of our time. With only a few years of mainstream use, it’s changed how we work and communicate, generated billions of dollars in investments, and sparked global debate. But according to leading neuroethics expert Karen Rommelfanger, the race isn’t over yet.

“Can you think of a more transformative technology than one that intervenes with the fundamental organ that drives your experience in the world?”

That fundamental organ is the brain.

For decades, technologies that interface directly with the brain have been reserved for treating severe injury or disease. Now, neurotechnology is expanding into brain-responsive wearables meant to enhance, augment, and monitor everyday life. As these technologies accelerate and AI is incorporated, the question is no longer if neurotechnology will transform society, but how, and who will shape the boundaries.

These are some of the questions on which Karen Rommelfanger has built her career. Trained as a biomedical researcher and neuroscientist, Rommelfanger went on to found the Institute for Neuroethics, the world’s first think and do tank devoted entirely to neuroethics, public engagement, and policy implementation.

“The brain is special; it’s central to who we are,” says Rommelfanger, who was also an inaugural recipient of the Dana Foundation Neuroscience and Society Award. “And that means when you intervene with the brain, there are unique responsibilities. The field of neuroethics addresses things like: How do you ensure mental privacy? How do you protect free will? How do you ensure that people have the power to be narrators of their own lives and their cognitive experience?”

Now, Rommelfanger is joining Georgia Tech’s Institute for Neuroscience, Neurotechnology, and Society (INNS) as a professor of the practice, where she will work to further embed neuroethics into Georgia Tech’s research and technology development ecosystem.

“Georgia Tech is producing the next generation of neurotechnologists, and Karen’s expertise will help ensure we’re preparing them to think about societal impact as deeply as they think about the technical and scientific aspects of their work,” says Christopher Rozell, executive director of INNS. “Her leadership strengthens the Institute in exactly the way this moment in neurotechnology demands.”

“Georgia Tech has many, many ways that it leads in the technology ecosystem. But one of the powerful, unique ways it can lead is through neurotechnology,” says Rommelfanger. “I hope that the INNS, given its unique mandate for neuroscience, neurotechnology, and society, can be a lighthouse for these types of conversations.”

Neuroethics by Design

From institutional review boards to mandatory responsible research conduct training, ethics are a foundational part of scientific research. But designing neurotechnologies raises ethical challenges beyond the scope of typical training. What happens when discoveries leave the lab and enter people’s lives?

That question sits at the core of Rommelfanger’s work. She argues it’s a neurotechnologist’s responsibility to recognize and proactively address the need for unique safeguards for privacy, autonomy, and long-term responsibility. Her solution is to move neuroethics upstream, embedding it directly into the research, design, and deployment of neurotechnology through an approach she calls “neuroethics by design.”

“Neuroethics by design considers ethics as a core criterion where principles can drive innovation with more of a lens toward societal outcomes,” she says — an approach informed by years of advising national-level brain research initiatives and her experience at the intersection of clinical practice and ethics scholarship.

Rather than treating ethics as a compliance checklist or a post hoc review, neuroethics by design integrates ethical thinking throughout the entire innovation lifecycle, from early ideation and research questions to product requirements, governance strategies, and long-term sustainability. She has used the approach for years as an embedded partner for neurotechnology startups in her neuroethics consultancy, Ningen Co-Lab.

After decades as a traditional academic professor and then years advising companies and policymakers with this philosophy, Rommelfanger says Georgia Tech is the right place to scale this work. With its strength in neurotechnology and INNS’s rare focus on neuroscience and society, “I could not think of a better place to launch and pilot this neuroethics by design scaling effort.”

She will work with INNS to help equip researchers, students, and industry partners with practical tools for ethical decision-making. Her vision is not to create neuroethicists as a standalone profession, but to cultivate ethically engaged neurotechnologists and engineers.

Central to her plans at INNS are hands-on training programs that bring ethics out of the abstract and into practice. “I wanted to be a professor of the practice because, while the field does need more scholars, what it really needs most at this point are practitioners.”

Rommelfanger is exploring modular content that can be embedded into existing courses across disciplines, as well as immersive training — such as neuroethics boot camps and problem-solving hackathons — that bring together students, faculty, and professionals to tackle real-world challenges collaboratively.

“No one discipline can solve all the ethical challenges ahead,” says Rommelfanger. She is particularly interested in creating spaces where experts from across science and engineering, policy and law, design and the arts, and philosophy can work side by side with people with lived experience of neurological conditions. “The onus is not on scientists alone, but is a shared responsibility that benefits immensely from dialogue, accountability, and action across diverse communities.”

By situating neuroethics within Georgia Tech’s broader research ecosystem, Rommelfanger hopes INNS can help shift how the field evolves globally.

“It's really difficult to get your arms around something once it's out of the gate,” she says, citing the rapid adoption of AI without proper ethical or policy guidelines. “With neurotechnology, we still have a little bit of time, but not that much time. We are at that moment where we could change the course of global history.”

News Contact

Audra Davidson

Research Communications Program Manager

Institute for Neuroscience, Neurotechnology, and Society (INNS)

Apr. 13, 2026

Vibe coding programmers are releasing batches of vulnerable code, according to researchers at the School of Cybersecurity and Privacy (SCP) at Georgia Tech, who have scanned over 43,000 security advisories across the web.

The programming style relies on generative artificial intelligence (AI) tools like Claude, Gemini, and GitHub Copilot to create software code. According to graduate research assistant Hanqing Zhao of the Systems Software & Security Lab (SSLab), no one had been tracking these common vulnerabilities and exposures before the launch of their Vibe Security Radar.

“The vulnerabilities we found lead to breaches,” he said. “Everyone is using these tools now. We need a feedback loop to identify which tools, which patterns, and which workflows create the most risk.”

The radar scans public vulnerability databases, locates the error behind each vulnerability, and then examines the code’s history to find who introduced the bug. If it discovers an AI tool's signature, the radar flags it.

Of the 74 confirmed cases uncovered so far by the tool, 14 are critical risks, and 25 are high. These vulnerabilities include command injection, authentication bypass, and server-side request forgery. Zhao explained that since AI models tend to repeat the same mistakes, an attacker would need to find these bugs just once.

“Millions of developers using the same models means the same bugs showing up across different projects,” he said. “Find one pattern in one AI codebase, you can scan for it across thousands of repositories.”

Despite its success, the team has only scratched the surface of the problem. The radar can trace metadata like co-author tags, bot emails, and other known tool signatures, but it can't identify an issue if these markers have been removed.
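To make the idea of a metadata signature concrete, here is a minimal sketch of how a commit might be checked for the kinds of markers described above. It is not the Vibe Security Radar's code: the `Commit` type, the `find_ai_signatures` helper, and the patterns in `AI_SIGNATURES` are all hypothetical stand-ins chosen for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical signature patterns, for illustration only. The radar's real
# signature list is not given in the article.
AI_SIGNATURES = {
    "co-author trailer": re.compile(
        r"^co-authored-by:.*\b(claude|copilot|gemini)\b", re.I | re.M
    ),
    "bot email": re.compile(r"\[bot\]@|noreply@anthropic\.com", re.I),
}


@dataclass
class Commit:
    sha: str
    author_email: str
    message: str


def find_ai_signatures(commit):
    """Return the names of the signature patterns found in a commit's metadata."""
    haystack = f"{commit.author_email}\n{commit.message}"
    return [name for name, pattern in AI_SIGNATURES.items() if pattern.search(haystack)]


tagged = Commit(
    sha="abc123",
    author_email="dev@example.com",
    message="Fix parser\n\nCo-authored-by: Claude <noreply@anthropic.com>",
)
stripped = Commit(sha="def456", author_email="dev@example.com", message="Fix parser")

print(find_ai_signatures(tagged))
print(find_ai_signatures(stripped))
```

The second commit shows the limitation the team describes: once the trailer is removed, a metadata-only check has nothing left to match.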

The next step is behavioral detection. AI-written code has patterns in how it names variables, structures functions, and handles errors.

“We're building models that can identify AI code from the code itself, no metadata needed,” said Zhao. “That opens up a lot of cases we currently can't touch.”

The team is also improving its verification pipeline and expanding its sources to include more vulnerability databases. The goal is to get a more complete picture of AI-introduced vulnerabilities across open source, not just the ones that happen to leave signatures behind.

As more programmers rely on vibe coding, Zhao warns that the resulting code still needs to be reviewed as thoroughly as any other code.

“The whole point of vibe coding is not reading it afterward, I know,” he said. “But if you're shipping AI output to production, review it the way you'd review a junior developer's pull request. Especially anything around input handling and authentication.”

When prompting AI, SSLab also recommends providing more detailed instructions to get the output closer to production-ready. There are also tools to check the code for vulnerabilities after it has been generated. Not double-checking could lead to a catastrophe.

“The attack surface keeps growing,” said Zhao. “More people running AI agents locally means the attacker doesn't need to break into the company infrastructure. They just need one vulnerability in a model context protocol server that someone installed and never reviewed.”

One reason the attack surfaces are expanding rapidly is AI’s evolution. In the second half of 2025, the Vibe Security Radar found about 18 cases across seven months. Then, in the first three months of 2026, it identified 56. March 2026 alone had 35, more than all of 2025 combined.

Many tools, like Claude, are now more autonomous: they can write entire features, create files, and even make architecture decisions.

“When an agent builds something without authentication, that's not a typo,” said Zhao. “It's a design flaw baked in from the start. Claude Code and Copilot together account for most of what we detect, but that's partly because they leave the clearest signatures.”

News Contact

John Popham

Communications Officer II at the School of Cybersecurity and Privacy

Apr. 02, 2026

As students increasingly turn to artificial intelligence (AI) to help with coursework, some worry that their learning could be compromised. Georgia Tech researchers are working to counter this potential decline with an AI tool they hope will promote learning rather than hinder it.

TokenSmith is a citation-supported large language model (LLM) tutor that can be hosted locally on a user’s personal computer. The tutor only provides answers based on course materials, such as the textbook or lecture slides.

Associate Professor Joy Arulraj began the project with support from the Bill Kent Family Foundation AI in Higher Education Faculty Fellowship last year. The fellowship, led by Georgia Tech’s Center for 21st Century Universities, supports faculty projects exploring innovative and ethical uses of AI in teaching.

Arulraj has enlisted assistant professors Kexin Rong and Steve Mussmann to help build TokenSmith.

Mussmann said TokenSmith is a synergistic blend of a database system and a machine learning system. The model stores textbooks, textbook annotations by course staff, common questions and answers, a learning state of the student, and student feedback in a structured database system. Machine learning, meanwhile, plays a key role in generating answers and in adapting the system to the student, course staff guidance, and user feedback.

"What excites me most is demonstrating how data-driven ML and principled database systems design can reinforce each other — one providing adaptability and flexibility, the other providing structure and traceability — in a way that benefits students," Mussmann said.

Keeping the model local has been an important focus of the project. The team wanted to create an AI tutor that helps students learn from their class resources rather than just giving answers. With each response, TokenSmith cites the origin of the answer in the provided documents.
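The idea of answering only from course materials, with a citation attached, can be illustrated with a toy example. The sketch below is not TokenSmith's implementation: it swaps in simple word overlap for whatever retrieval the real system uses, and the `Passage` type, the `retrieve_with_citation` helper, and the sample passages are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # hypothetical citation label, e.g. a page or slide number
    text: str


def tokenize(text):
    """Lowercase words with surrounding punctuation stripped."""
    return {w.strip(".,;:?!()").lower() for w in text.split()} - {""}


def retrieve_with_citation(question, passages):
    """Return the passage sharing the most words with the question, or None."""
    q = tokenize(question)
    best = max(passages, key=lambda p: len(q & tokenize(p.text)), default=None)
    if best is None or not q & tokenize(best.text):
        return None  # decline rather than answer from outside the course material
    return best


# Made-up course material for the example.
course_material = [
    Passage("Textbook, p. 12", "A B-tree index keeps keys sorted for range queries."),
    Passage("Lecture 4, slide 9", "Two-phase locking ensures serializable schedules."),
]

hit = retrieve_with_citation("What does two-phase locking ensure?", course_material)
print(hit.source if hit else "No supporting passage found")
```

Because every answer is tied to a stored passage, a student can follow the citation back to the textbook or slide, and a question with no supporting passage returns nothing instead of a guess.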

“One problem with LLMs is that they can hallucinate and provide wrong answers, but in this controlled environment, we can add these guardrails to make sure it’s actually helpful in an educational setting,” Rong said.

Rong said she feels that students often undervalue textbooks, and she hopes TokenSmith can motivate students to make better use of them.

“Textbooks can sometimes be daunting, but maybe if we combine them with the model, students might be more willing to read a paragraph or page in the textbook, and that could help clarify something for them,” she said.

Running the model locally is more cost-effective and helps preserve the user’s privacy. But running the new tool locally comes with technical challenges.

One challenge with creating the model is speed. Because it runs locally, TokenSmith depends solely on the memory of the user’s computer. Tests have also shown that the tutor currently struggles to answer more complex questions.

“We are interested in pushing the boundaries of these local models so that they give students good answers and also run fast enough to keep students engaged,” Arulraj said.

News Contact

Morgan Usry, Communications Officer

Apr. 01, 2026

Manufacturing is undergoing a significant transformation as artificial intelligence reshapes how industrial systems operate, adapt, and scale. The H. Milton Stewart School of Industrial and Systems Engineering (ISyE) has launched its Manufacturing and AI Initiative, which brings together faculty expertise in statistics, optimization, data science, and systems engineering to address emerging challenges and opportunities in modern manufacturing.

ISyE researchers are applying AI to complex manufacturing environments, including multistage production systems, asset management, quality improvement, and human‑centered manufacturing. Faculty leaders emphasize the importance of contextualizing large volumes of manufacturing data so AI can support reliable decision‑making, efficient operations, and sustainable outcomes. At the same time, the initiative acknowledges challenges such as data integration, system complexity, and the need to balance automation with human involvement. Together, these efforts position ISyE at the forefront of shaping AI‑powered manufacturing systems that are innovative, resilient, and socially responsible.

Read the full article in ISyE Magazine

News Contact

Annette Filliat, ISyE Communications Writer

Mar. 24, 2026

Tickets are now on sale for The Atlanta Opera’s NOW Festival, returning June 12–14, 2026. Celebrating its fifth year, the festival highlights bold, contemporary storytelling and emerging voices in opera through new works and performances.

This year’s festival features the world premiere of Water Memory (Jala Smirti), a chamber opera exploring how artificial intelligence can support individuals living with dementia and provide assistance for aging parents. The festival also includes the 96-Hour Opera Project, a signature competition showcasing newly created opera scenes by emerging artists.

Events will take place across multiple Atlanta venues, including the Ferst Center for the Arts at Georgia Tech and the Ray Charles Performing Arts Center at Morehouse College. Performances include the premiere of Water Memory on June 12, the competition showcase on June 13, and an encore performance on June 14.

Tickets start at $35, with festival passes available for $50. The NOW Festival continues to foster innovation in opera while mentoring the next generation of artists.

Mar. 31, 2026

While people use search engines, chatbots, and generative artificial intelligence tools every day, most don’t know how they work. This sets unrealistic expectations for AI and leads to misuse. It also slows progress toward building new AI applications.

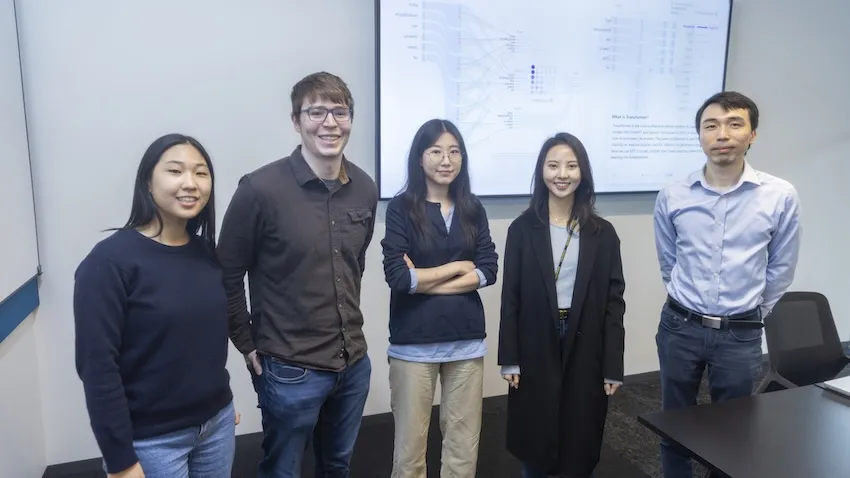

Georgia Tech researchers are making AI easier to understand through their work on Transformer Explainer. The free, online tool shows non-experts how ChatGPT, Claude, and other large language models (LLMs) process language.

Transformer Explainer is easy to use and runs on any web browser. It quickly went viral after its debut, reaching 150,000 users in its first three months. More than 563,000 people worldwide have used the tool so far.

Global interest in Transformer Explainer will continue when the team presents the tool at the 2026 Conference on Human Factors in Computing Systems (CHI 2026). CHI, the world’s most prestigious conference on human-computer interaction, will take place in Barcelona, April 13-17.

“There are moments when LLMs can seem almost like a person with their own will and personality, and that misperception has real consequences. For example, there have been cases where teenagers have made poor decisions based on conversations with LLMs,” said Ph.D. student Aeree Cho.

“Understanding that an LLM is fundamentally a model that predicts the probability distribution of the next token helps users avoid taking its outputs as absolute. What you put in shapes what comes out, and that understanding helps people engage with AI more carefully and critically.”

A transformer is a neural network architecture that transforms an input sequence into an output sequence. It does this by learning context and tracking mathematical relationships between sequence components. Text, audio, and images can all be processed as sequences, which is why transformers are common in generative AI models.

Transformer Explainer demystifies how transformers work. The platform uses visualization and interaction to show, step by step, how text flows through a model and produces predictions.

Using this approach, Transformer Explainer impacts the AI landscape in four main ways:

- It counters hype and misconceptions surrounding AI by showing how transformers work.

- It improves AI literacy among users by removing technical barriers and making it easier to start learning about AI.

- It expands AI education by helping instructors teach AI mechanisms without extensive setup or computing resources.

- It influences future development of AI tools and educational techniques by providing a blueprint for interpretable AI systems.

“When I first learned about transformers, I felt overwhelmed. A transformer model has many parts, each with its own complex math. Existing resources typically present all this information at once, making it difficult to see how everything fits together,” said Grace Kim, a dual B.S./M.S. computer science student.

“By leveraging interactive visualization, we use levels of abstraction to first show the big picture of the entire model. Then users click into individual parts to reveal the underlying details and math. This way, Transformer Explainer makes learning far less intimidating.”

Many users don’t know what transformers are or how they work. The Georgia Tech team found that people often misunderstand AI. Some label AI with human-like characteristics, such as creativity. Others even describe it as working like magic.

Furthermore, barriers make it hard for students interested in transformers to start learning. Tutorials tend to be too technical and overwhelm beginners with math and code. While visualization tools exist, these often target more advanced AI experts.

Transformer Explainer overcomes these obstacles through its interactive, user-focused platform. It runs a familiar GPT model directly in any web browser, requiring no installation or special hardware.

Users can enter their own text and watch the model predict the next word in real time. Sankey-style diagrams show how information moves through embeddings, attention heads, and transformer blocks.

The platform also lets users switch between high-level concepts and detailed math. By adjusting temperature settings, users can see how randomness affects predictions. This reveals how probabilities drive AI outputs, rather than creativity.
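The effect of temperature can be seen in a few lines of code. The sketch below is a simplified illustration, not code from Transformer Explainer: it applies the standard softmax-with-temperature formula to made-up scores for three candidate next words, and the function name `next_token_probabilities` is an assumption for this example.

```python
import math


def next_token_probabilities(logits, temperature=1.0):
    """Turn raw scores into probabilities, dividing by temperature first."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


# Made-up scores for three candidate next words.
logits = {"explore": 2.0, "see": 1.0, "dance": -1.0}

for t in (0.5, 1.0, 2.0):
    probs = next_token_probabilities(logits, t)
    print(t, {tok: round(p, 3) for tok, p in probs.items()})
```

Lower temperatures concentrate probability on the top-scoring word, while higher temperatures spread it across the alternatives, so the same model gives more varied output without any change in what it "knows."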

“Millions of people around the world interact with transformer-driven AI. We believe that it is crucial to bridge the gap between day-to-day user experience and the models' technical reality, ensuring these tools are not misinterpreted as human-like or seen as sentient,” said Ph.D. student Alex Karpekov.

“Explaining the architecture helps users recognize that language generated by models is a product of computation, leading to a more grounded engagement with the technology.”

Cho, Karpekov, and Kim led the development of Transformer Explainer. Ph.D. students Alec Helbling, Seongmin Lee, and Ben Hoover, along with alumni Zijie (Jay) Wang (Ph.D. ML-CSE 2024) and Minsuk Kahng (Ph.D. CS-CSE 2019), assisted on the project.

Professor Polo Chau supervised the group and their work. His lab focuses on data science, human-centered AI, and visualization for social good.

Acceptance at CHI 2026 follows the team’s best poster award at the 2024 IEEE Visualization Conference. This recognition from one of the top venues in visualization research highlights Transformer Explainer’s effectiveness in teaching how transformers work.

“Transformer Explainer has reached over half a million learners worldwide,” said Chau, a faculty member in the School of Computational Science and Engineering.

“I'm thrilled to see it extend Georgia Tech's mission of expanding access to higher education, now to anyone with a web browser.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Mar. 31, 2026

Voice-activated, conversational artificial intelligence (AI) agents must provide clear explanations for their suggestions, or older adults aren’t likely to trust them.

That’s one of the main findings from a study by AI Caring on what older adults expect from explainable AI (XAI).

AI Caring is one of three AI Institutes led by Georgia Tech and funded by the National Science Foundation (NSF). The institute supports AI research that benefits older adults and their caregivers.

Niharika Mathur, a Ph.D. candidate in the School of Interactive Computing, was the lead author of a paper based on the study. The paper will be presented in April at the 2026 ACM Conference on Human Factors in Computing Systems (CHI) in Barcelona.

Mathur worked with the Cognitive Empowerment Program at Emory University to interview 23 older adults who live alone and use voice-activated AI assistants like Amazon’s Alexa and Google Home.

Many of them told her they feel excluded from the design of these products.

“The assumption is that all people want interactions the same way and across all kinds of situations, but that isn’t true,” Mathur said. “How older people use AI and what they want from it are different from what younger people prefer.”

One example she gave is that young people tend to be informal when talking with AI. Older people, on the other hand, talk to the agent like they would a person.

“If older adults are talking to their family members about Alexa, they usually refer to Alexa as ‘she’ instead of ‘it,’” Mathur said. “They tend to humanize these systems a lot more than young people.”

Good Explanations

The study evaluated AI explanations that drew information from four sources of data:

- User history (past conversations with the agent)

- Environmental data (indoor temperature or the weather forecast)

- Activity data (how much time a user spends in different areas of the home)

- Internal reasoning (mathematical probabilities and likely outcomes)

Mathur said older users trust the agent more when it bases its explanations on data from the first three sources. However, internal reasoning creates skepticism.

Internal reasoning means the AI doesn’t have enough data from the other sources to give an explanation. It provides a percentage to reflect its confidence based on what it knows.

“The overwhelming response was negative toward confidence scores,” Mathur said. “If the AI says it’s 92% confident, older adults want to know what that’s based on.”

This is another example that Mathur said points to generational preferences.

“There’s a lot of explainable AI research that shows younger people like to see numbers in explanations, and they also tend to rely too much on explanations that contain numerical confidence. Older adults are the opposite. It makes them trust it less.”

Knowing the Context

Mathur found that in urgent situations, older users prefer the AI to be straightforward, while in casual settings, they want more conversation.

“How people interact with technological systems is grounded in what the stakes of the situation are,” she said. “If it had anything to do with their immediate sense of safety, they did not want conversational elaboration. They want the AI to be very direct and factual.”

Not Just Checking Boxes

Mathur said AI agents that interact with older adults are ideally constructed with a dual purpose. They should provide companionship and autonomy for the users while alleviating the burden of caretaking that is often placed on their family members.

Some studies have shown that engineers have tended to favor caretakers in the design of these tools. They prioritize daily tasks and routines, leaving some older adults to feel like they are a box to be checked.

“They’re not being thought of as consumers,” Mathur said. “A lot of products are being made for them but not with them.”

She also said psychological well-being is one of the most important outcomes these tools should produce.

Showing older adults that they are listened to can significantly help in gaining their trust. Some interviewees told Mathur they want agents who are deliberate about understanding their preferences and don’t dismiss their questions.

Meeting these needs reduces the likelihood that older adults will protest and come into conflict with family members.

“It highlights just how important well-designed explanations are,” she said. “We must go beyond a transparency checklist.”

News Contact

Nathan Deen

College of Computing

Georgia Tech

Mar. 24, 2026

The words on this page mean something because they are assembled in a particular order and follow the complex rules of grammar and syntax. Creating new chemical polymers follows a similar kind of structure, with rules about what elements and groups of atoms go together and how to assemble them to make sense.

Thinking about polymers in that way has led Georgia Tech materials scientists to create new generative artificial intelligence tools that are like Claude or ChatGPT for new materials.

These are the first foundational models for generative polymer design that have also been validated through physical experiments: users specify the properties they need in a polymer and the model will suggest a chemical structure.

Led by Regents’ Entrepreneur Rampi Ramprasad, the researchers described their latest model this month in the Nature journal npj Artificial Intelligence — including a test material they created and validated in the lab to prove the models work.

News Contact

Joshua Stewart

College of Engineering

Mar. 19, 2026

Robots are increasingly learning new skills by watching people. From folding laundry to handling food, many real-world, humanlike tasks are too nuanced to be efficiently programmed step by step.

With imitation learning, humans demonstrate a task and robots learn to copy what they see through cameras and sensors. Although it sits at the leading edge of robotics research, this approach has a major constraint: Robots can only work as fast as the people who taught them.

Now, Georgia Tech researchers have created a tool that smashes that speed barrier. The system allows robots to execute complex tasks significantly faster than human demonstrations while maintaining precision, control, and safety.

The team addresses a central challenge in modern robotics: how to combine the flexibility of learning from humans with the speed and reliability required for real-world deployment. The technology could lead to wider adoption of imitation learning in industrial and household applications and even enable robots to execute humanlike tasks better than ever before.

“The thing we’re trying to create — and I would argue industry is also trying to create — is a general-purpose robot that can do any task that human hands can do,” said Shreyas Kousik, assistant professor in the George W. Woodruff School of Mechanical Engineering and a co-lead author on the study. “To make that work outside the lab, speed really matters.”

The new tool, SAIL (Speed Adaptation for Imitation Learning), was born out of a cross-campus, interdisciplinary collaboration that brought together expertise in mechanical engineering, robotics systems, and machine learning. The research team includes Kousik; Benjamin Joffe, senior research scientist at the Georgia Tech Research Institute; and Danfei Xu, assistant professor in the School of Interactive Computing, along with graduate students and researchers from multiple labs.

Speed Without Sacrifice

Teaching robots to work faster than the speed of human demonstrations is challenging. Robots can behave differently at higher speeds, and small changes in the environment can cause errors.

“The challenge is that a robot is limited to the data it was trained on, and any changes in the environment can cause it to fail,” Kousik said.

SAIL addresses this challenge through a modular approach, with separate components working together to accelerate beyond the training data. The system keeps motions smooth at high speed, tracks movements accurately, adjusts speed dynamically based on task complexity, and schedules actions to account for hardware delays. This combination allows robots to move quickly while staying stable, coordinated, and precise.

“One of the gaps we saw was that our academic robotics systems could do impressive things, but they weren’t fast or robust enough for practical use,” Joffe said. “We wanted to study that gap carefully and design a system that addressed it end to end.”

He added, “The goal is not just to make robots faster, but to make them smart enough to know when speed helps and when it could cause mistakes.”

The team evaluated SAIL’s performance across 12 tasks, both in simulation and on two physical robot platforms. Tasks included stacking cups, folding cloth, plating fruit, packing food items, and wiping a whiteboard. In most cases, SAIL-enabled robots completed tasks three to four times faster than standard imitation-learning systems without losing accuracy.

One exception was the whiteboard-wiping task, where maintaining contact made high-speed execution difficult.

“Understanding where speed helps and where it hurts is critical,” Kousik said. “Sometimes slowing down is the right decision.”

While SAIL does not make robots universally adaptable on its own, it represents an important step toward robotic systems that can learn from humans without being constrained by human pace.

By showing how learned robotic behaviors can be accelerated safely and systematically, SAIL brings imitation learning closer to real-world use — where speed, precision, and reliability all matter.

Citation: Ranawaka Arachchige, et al. “SAIL: Faster-than-Demonstration Execution of Imitation Learning Policies,” Conference on Robot Learning (CoRL), 2025.

DOI: https://doi.org/10.48550/arXiv.2506.11948

Funding: The authors would like to acknowledge the State of Georgia and the Agricultural Technology Research Program at Georgia Tech for supporting the work described in this paper.
