Sep. 12, 2025

Robotic systems are currently deployed in sectors ranging from industrial manufacturing to healthcare to agriculture, adding benefits in production times, patient outcomes, and yields. This trend toward greater automation and human-robot collaborative work environments, while providing great opportunities, also highlights a critical gap in cybersecurity research. These systems rely on network communication to coordinate movement, meaning that security breaches could result in the robot acting in ways that may endanger people and property.

Current cybersecurity approaches have been shown to be insufficient in blocking sophisticated attacks aimed at networked robotic motion-control systems.

To address this gap, Jun Ueda, Professor and ASME Fellow in the George W. Woodruff School of Mechanical Engineering at Georgia Tech, has been awarded approximately $700,000 by the National Science Foundation to establish methods to enhance cybersecurity for networked motion-control systems. The research will focus on the unique geometric vulnerabilities in networked robotic systems and on stealthy false data injection attacks that exploit geometric coordinate transformations to maintain mathematical consistency in robotic dynamics while altering physical world behavior.

Using an interdisciplinary approach that will combine research methodology from system dynamics, control, communication, differential geometry and cybersecurity engineering, Ueda hopes to establish new mathematical tools for analyzing robotic security and develop safer networked robotic systems that successfully repel system intrusion, manipulation attacks, and attacks that mislead operators.

This article refers to NSF Program Foundational Research in Robotics (FRR) Award # 2112793

A Geometric Approach for Generalized Encrypted Control of Networked Dynamical Systems


Sep. 11, 2025

A recently awarded $20 million NSF Nexus Supercomputer grant to Georgia Tech and partner institutes promises to bring incredible computing power to the CODA building. But what makes this supercomputer different and how will it impact research in labs on campus, across disciplinary units, and across institutions?

Purpose Built for AI Discovery

Nexus is Georgia Tech’s next-generation supercomputer, replacing the HIVE. Most operational high-performance computing systems utilized for research were designed before the explosion in Machine Learning and AI. This revolution has already shown successes for scientific research and data analysis in many domains, but the compute power, complex connectivity, and data storage needs for these systems have limited their access to the academic research community. The Nexus supercomputer design process retained a robust HPC system as a base while integrating artificial intelligence, machine learning and large-scale data science analysis from the ground up.

Expert Support for Faculty and Researchers

The Institute for Data Engineering and Science (IDEaS) and the College of Computing house the Center for Artificial Intelligence in Science and Engineering (ARTISAN) group. This team has collective experience working with national, cloud, commercial, and institutional computing resources, and decades of experience building scientific tools that support both teaching and research faculty. Nexus is the next logical step, bringing together everything they’ve learned to build a national resource optimized for the future of AI-driven science.

Principal Research Scientist for the ARTISAN team, Suresh Marru, highlighted the need for this new resource: “AI is a core part of the Nexus vision. Today, researchers often spend more time setting up experiments, managing data, or figuring out how to run jobs on remote clusters than doing science. With Nexus, we’re flipping that script. By embedding AI into the platform, we help automate routine tasks, suggest optimal ways to run simulations, and even assist in generating input or analyzing results. This means researchers can move faster from question to insight. Instead of wrestling with infrastructure, they can focus on discovery.”

An Accessible AI Resource for GT & US Scientific Research

90% of Nexus capacity will be made available to the national research community through the NSF Advanced Computing Systems & Services (ACSS) program. Researchers from across the country, at universities, labs, and institutions of all sizes, will have access to this next-generation AI-ready supercomputer. For Georgia Tech research faculty and staff, the new system has multiple benefits:

- 10% of the time on the machine will be available for use by Georgia Tech researchers

- Nexus will allow GT researchers a chance to try out the latest hardware for AI computing

- Thanks to cyberinfrastructure tools from the ARTISAN group, Nexus will be easier to access than previous NSF supercomputers

Interim Executive Director of IDEaS and Regents' Professor David Sherrill notes, "Nexus brings Georgia Tech's leadership in research computing to a whole new level. It will be the first NSF Category I Supercomputer hosted on Georgia Tech's campus. The Nexus hardware and software will boost research in the foundations of AI, and applications of AI in science and engineering."

Sep. 02, 2025

In the morning, before you even open your eyes, your wearable device has already checked your vitals. By the time you brush your teeth, it has scanned your sleep patterns, flagged a slight irregularity, and adjusted your health plan. As you take your first sip of coffee, it’s already predicted your risks for the week ahead.

Georgia Tech researchers warn that this version of AI healthcare imagines a patient who is "affluent, able-bodied, tech-savvy, and always available." Those who don’t fit that mold, they argue, risk becoming invisible in the healthcare system.

The Ideal Future

In their study, published in the Proceedings of the ACM Conference on Human Factors in Computing Systems, the researchers analyzed 21 AI-driven health tools, ranging from fertility apps and wearable devices to diagnostic platforms and chatbots. They used sociological theory to understand the vision of the future these tools promote — and the patients they leave out.

“These systems envision care that is seamless, automatic, and always on,” said Catherine Wieczorek, a Ph.D. student in human-centered computing in the School of Interactive Computing and lead author of the study. “But they also flatten the messy realities of illness, disability, and socioeconomic complexity.”

Four Futures, One Narrow Lens

During their analysis, the researchers discovered four recurring narratives in AI-powered healthcare:

- Care that never sleeps. Devices track your heart rate, glucose levels, and fertility signals — all in real time. You are always being watched, because that’s framed as “care.”

- Efficiency as empathy. AI is faster, more objective, and more accurate. Unlike humans, it doesn’t get tired or biased. This pitch downplays the value of human judgment and connection.

- Prevention as perfection. A world where illness is avoided through early detection if you have the right sensors, the right app, and the right lifestyle.

- The optimized body. You’re not just healthy, you’re high-performing. The tech isn’t just treating you; it’s upgrading you.

“It’s like healthcare is becoming a productivity tool,” Wieczorek said. “You’re not just a patient anymore. You’re a project.”

Not Just a Tool, But a Teammate

This study also points to a critical transformation in which AI is no longer just a diagnostic tool; it’s a decision-maker. Described by the researchers as “both an agent and a gatekeeper,” AI now plays an active role in how care is delivered.

In some cases, AI systems are even named and personified, like Chloe, an IVF decision-support tool. “Chloe equips clinicians with the power of AI to work better and faster,” its promotional materials state. By framing AI this way — as a collaborator rather than just software — these systems subtly redefine who, or what, gets to be treated.

“When you give AI names, personalities, or decision-making roles, you’re doing more than programming. You’re shifting accountability and agency. That has consequences,” said Shaowen Bardzell, chair of Georgia Tech’s School of Interactive Computing and co-author of the study.

“It blurs the boundaries,” Wieczorek noted. “When AI takes on these roles, it’s reshaping how decisions are made and who holds authority in care.”

Calculated Care

Many AI tools promise early detection, hyper-efficiency, and optimized outcomes. But the study found that these systems risk sidelining patients with chronic illness, disabilities, or complex medical needs — the very people who rely most on healthcare.

“These technologies are selling worldviews,” Wieczorek explained. “They’re quietly defining who healthcare is for, and who it isn’t.”

By prioritizing predictive algorithms and automation, AI can strip away the context and humanity that real-world care requires.

“Algorithms don’t see nuance. It’s difficult for a model to understand how a patient might be juggling multiple diagnoses or understand what it means to manage illness, while also navigating other important concerns like financial insecurity or caregiving. They are predetermined inputs and outputs,” Wieczorek said. “While these systems claim to streamline care, they are also encoding assumptions about who matters and how care should work. And when those assumptions go unchallenged, the most vulnerable patients are often the ones left out.”

AI for ALL

The researchers argue that future AI systems must be developed in collaboration with those who don’t fit in the vision of a “perfect patient.”

“Innovation without ethics risks reinforcing existing inequalities. It’s about better tech and better outcomes for real people,” Bardzell said. “We’re not anti-innovation. But technological progress isn’t just about what we can do. It’s about what we should do — and for whom.”

Wieczorek and Bardzell aren’t trying to stop AI from entering healthcare. They’re asking AI developers to understand who they’re really serving.

Funding:

This work was supported by the National Science Foundation (Grant #2418059).

News Contact

Michelle Azriel, Sr. Writer-Editor

Aug. 20, 2025

Daniel Yue, assistant professor of IT Management at the Scheller College of Business, has been awarded the prestigious Best Dissertation Award by the Technology and Innovation Management Division of the Academy of Management. The recognition celebrates the most impactful doctoral research in the field of business and innovation.

Yue’s dissertation, developed during his Ph.D. at Harvard Business School, explores a paradox at the heart of the AI industry: why do firms openly share their innovations, like scientific knowledge, software, and models, despite the apparent lack of direct financial return? His work sheds light on the strategic and economic mechanisms that drive this openness, offering new frameworks for understanding how firms contribute to and benefit from shared technological progress.

“We typically think of firms as trying to capture value from their innovations,” Yue explained. “But in AI, we see companies freely publishing research and releasing open-source software. My dissertation investigates why this happens and what firms gain from it.”

News Contact

Kristin Lowe (She/Her)

Content Strategist

Georgia Institute of Technology | Scheller College of Business

kristin.lowe@scheller.gatech.edu

Aug. 25, 2025

Georgia Tech researchers have designed the first benchmark that tests how well existing AI tools can interpret advice from YouTube financial influencers, also known as finfluencers.

Lead author Michael Galarnyk, Ph.D. Machine Learning ’28, joined lead authors Veer Kejriwal, B.S. Computer Science ’25, and Agam Shah, Ph.D. Machine Learning ’26, along with co-authors Yash Bhardwaj, École Polytechnique, M.S. Trustworthy and Responsible AI ’27; Nicholas Meyer, B.S. Electrical and Computer Engineering ’22 and Quantitative and Computational Finance ’24; Anand Krishnan, Stanford University, B.S. Computer Science ’27; and Sudheer Chava, Alton M. Costley Chair and professor of Finance at Georgia Tech.

Aptly named VideoConviction, the multimodal benchmark included hundreds of video clips. Experts labelled each clip with the influencer’s recommendation (buy, sell, or hold) and how strongly the influencer seemed to believe in their advice, based on tone, delivery, and facial expressions. The goal? To see how accurately AI can pick up on both the message and the conviction behind it.

“Our work shows that financial reasoning remains a challenge for even the most advanced models,” said Michael Galarnyk, lead author. “Multimodal inputs bring some improvement, but performance often breaks down on harder tasks that require distinguishing between casual discussion and meaningful analysis. Understanding where these models fail is a first step toward building systems that can reason more reliably in high stakes domains.”

News Contact

Kristin Lowe (She/Her)

Content Strategist

Georgia Institute of Technology | Scheller College of Business

kristin.lowe@scheller.gatech.edu

Aug. 25, 2025

By Chris Gaffney, Managing Director, Georgia Tech Supply Chain and Logistics Institute | Supply Chain Advisor | Former Executive at Frito-Lay, AJC International, and Coca-Cola

Introduction

Artificial intelligence has entrenched itself in almost every aspect of the professional world. From copywriting tools to search engine optimization and image generation, professionals and laypeople alike use this new technology to streamline daily activities. But, before AI, there was high-level analytics and machine learning in supply chain. Analysts across the supply chain used machine learning to interpret high volumes of data and turn it into predictive algorithms for inventory planning, demand planning, and more. Now, AI is generating these analytics at a much faster, real-time pace.

This shift raises important questions. What does this mean for technology professionals in the supply chain world who once made a living doing these jobs? And what can we expect for aspiring supply chain pros or mid-career professionals who want to increase their value to the team in an age of accelerated technological advances?

The fact of the matter is that AI is now everybody’s job. Standing still will ensure that you get left behind by your peers or the talent pipeline from colleges and universities. The question then becomes, how can I upskill and use what I already know to add value to my role and ensure that my AI competencies allow me to compete in today’s supply chain workforce?

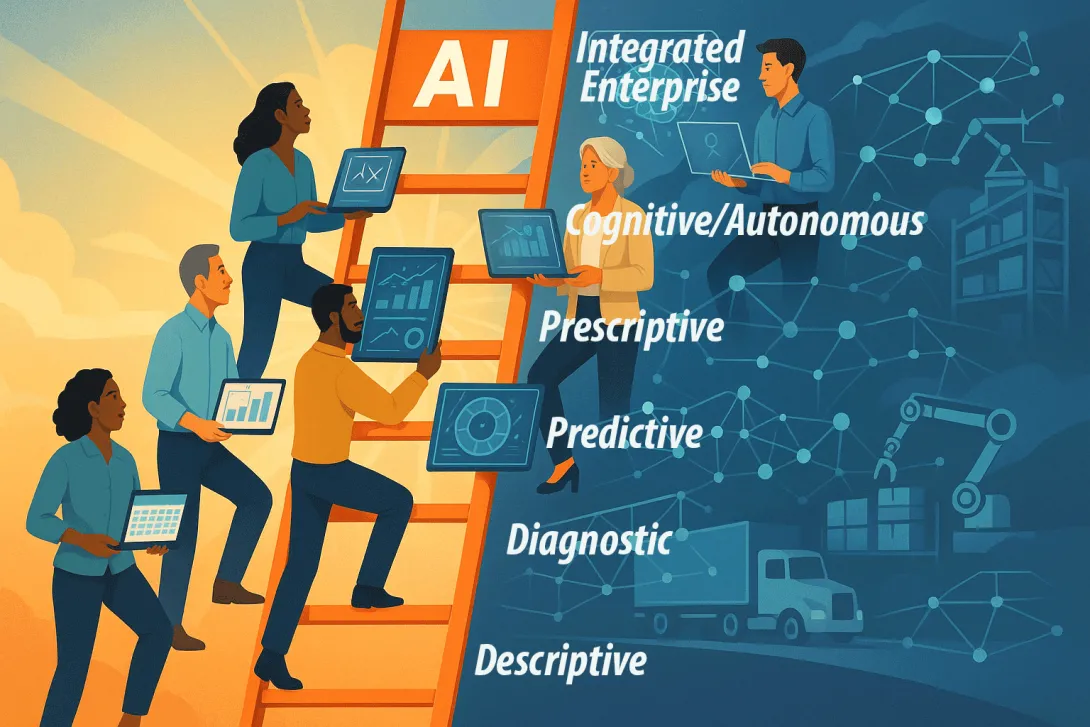

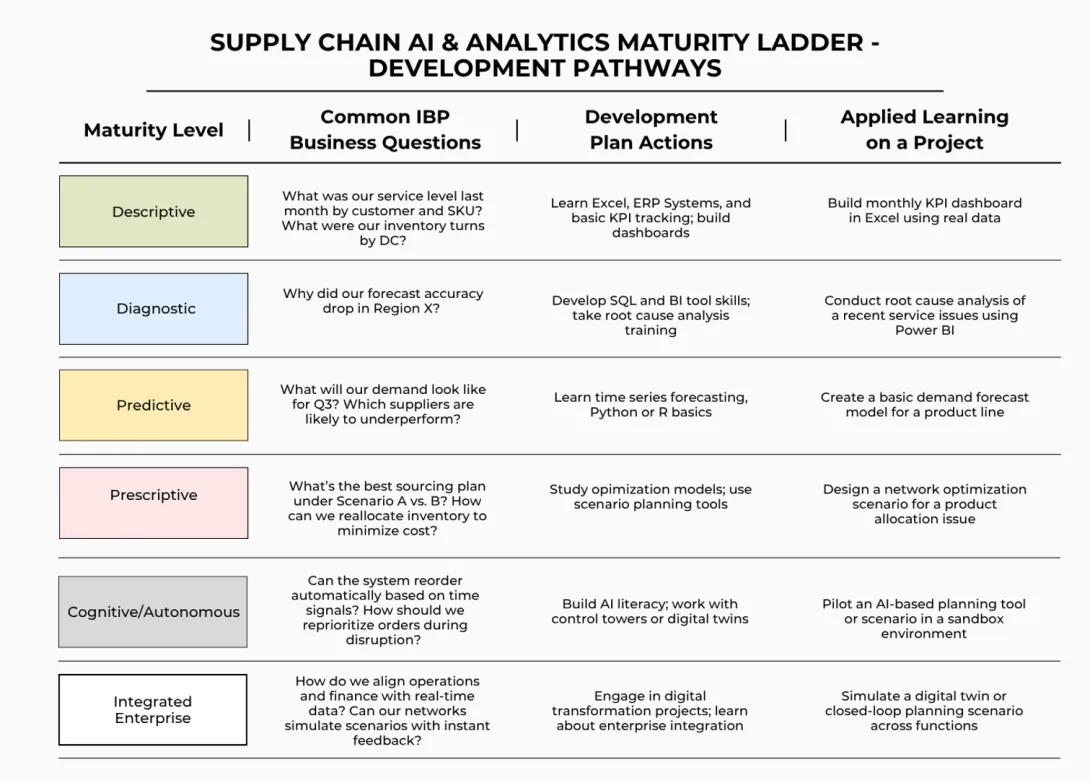

We’ll look at the ladder as a series of increasing levels of complexity and AI activity—what we’ll call ‘maturity levels’: descriptive, diagnostic, predictive, prescriptive, cognitive/autonomous, and integrated enterprise.

Some things to bear in mind as we progress through this topic:

- Everybody is somewhere on the ladder, so everyone has the opportunity to climb the ladder.

- Analytics are no longer just for specialists. AI allows analytics to be an access point to the ladder. You no longer have to rely on someone else higher up on the ladder, and it’s in your best interest to climb higher, regardless of your job description.

- There are lots of resources freely available to help you climb the ladder. In most companies, you can also find a mentor who is further along on the ladder and can help you upskill your operational knowledge and advance your capabilities.

We’re here to discuss how far you should climb so you can optimize your career opportunities and not be left behind.

How Did We Get Here?

In the field of supply chain, we’ve always been ahead of the curve when it comes to these types of innovations. Before AI, we were using machine learning and predictive analytics to enhance our understanding of real-time supply issues. We worked a lot on optimizations at Coke and started utilizing machine learning tactics almost 10 years ago. While I wasn’t the hands-on user of the technology, I took it upon myself to try and understand exactly what was happening and how it was working.

That was a large corporate machine–one of the biggest brands in the world–utilizing the latest in predictive analytics technology. And now we have a democratization of this technology being spread across industries. You no longer need to be part of such a high-powered team to make use of these tools.

We have now entered an era where artificial intelligence has become omnipresent across almost every supply chain practice and industry, as well as every other career discipline. The key is understanding the best practices for making use of AI in your field, and how you can add value and incorporate it into your everyday work life.

Descriptive Level: From Rearview Mirror to Forward Thinking Decisions

“If you have some proficiency in Excel, then you’re on the ladder.” - Chris Gaffney

The lowest rung on the AI ladder is the descriptive level. Excel knowledge and experience reside here and can be the access point for most people. This level helps us describe what is happening with numbers and data. Reporting dashboards can be crafted here, and we can run trend analysis using basic inference to see what is happening and where to make adjustments, if necessary.

Excel tells us what did happen - not what could happen. These are important functions, to be sure. However, they only look behind us. They tell us what and why. Today’s supply chain landscape requires tools that allow us to make decisions based on what could happen in the future. With descriptive tools alone, we don’t have the power to make proactive decisions, navigate uncertainty, or factor in variables of change.

Our competitive edge is sharpened by having the capability to shape the future, not just explain the past. In order to do so, we need to move up into predictive and prescriptive AI territory.

Up until very recently, this descriptive capability was enough. Analysts, planners, and buyers were all able to produce data that helped others to understand what was happening. The data then required synthesis and analysis. The whys and so whats were human functions performed by different team members and used to measure the efficacy of various inputs and outputs throughout the supply chain. As one moves up the chain of command, so to speak, the ability to interpret the data and findings becomes even more important. However, the number crunching and analytics were more siloed.

And now, everyone has access to AI’s ability to synthesize and analyze raw data. But very few “off-the-shelf tools” can answer the why, let alone the ‘what should we do about it’ questions. Planners and managers need to upskill and ensure that they are up to speed on the capabilities and deficiencies of these platforms and insert themselves and their skillsets to close those gaps.

Roles at this level:

- Transportation analysts

- Warehouse supervisors reviewing daily throughput metrics

- Demand planners tracking forecast accuracy from the last quarter

Working in hindsight by monitoring and measuring data is important, albeit limiting. Supply chain decision making that only looks backward falls short at a time when forward thinking is essential for future-proofing your supply chain organization. Staying here too long limits your ability to prevent problems before they escalate.

What to do next?

- Learn Power BI or Tableau for interactive dashboards

- Get comfortable using large data sets from your ERP or WMS

- Start asking, “why” and “so what”

Diagnostic Level - Information into Insight

“This is where you start to become more valuable because now you can help the team avoid repeat issues.”

So you’ve now measured what happened. The next logical question is why? Here’s where many companies fall short by relying on only internal historical data. The real learning happens when you bring in external variables like weather, economy, labor, or competitive actions. Diagnostics help uncover root causes and patterns across time and systems. What does this mean for you and the AI ladder?

This could mean combining two different datasets using SQL to pull deeper reports or identifying correlations between variables. You need to be able to get inside of your supply chain to see what’s really going on, much like a physician will draw blood or perform various scans to get a more vivid and comprehensive picture of what’s happening.
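As a rough sketch of the diagnostic step described above, the Python below joins an internal dataset with an external one on a shared key (the same idea as a SQL inner join) and checks how strongly the two move together. All figures, week labels, and function names are hypothetical and chosen only for illustration.

```python
from math import sqrt

# Hypothetical internal data: absolute forecast error (units) by week.
forecast_error = {
    "2025-W01": 120, "2025-W02": 80, "2025-W03": 200,
    "2025-W04": 60, "2025-W05": 180,
}
# Hypothetical external data: rainfall (mm) by week. W05 is missing here
# and W06 has no internal match, so neither survives the join.
rainfall_mm = {
    "2025-W01": 30, "2025-W02": 25, "2025-W03": 45,
    "2025-W04": 10, "2025-W06": 40,
}

def inner_join(left, right):
    """Keep only keys present in both datasets, like a SQL INNER JOIN."""
    return [(key, left[key], right[key]) for key in sorted(left) if key in right]

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

joined = inner_join(forecast_error, rainfall_mm)
errors = [row[1] for row in joined]
rain = [row[2] for row in joined]
r = pearson(errors, rain)
print(f"{len(joined)} matched weeks, correlation = {r:.2f}")
```

A strong correlation is a prompt to investigate, not proof of a root cause; business context still has to explain why the two series move together.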

Examples from the field:

- A demand planner diagnosing why forecasts were consistently off by adding external factors outside your control.

- A transportation analyst finding route disruptions correlated with labor strikes and weather trends, much like Waze.

What you can do

- Add layers of internal and external factors

- Use Power BI or Excel to show the impacts of external events

- Start to track leading indicators, not just lagging ones.

Predictive - Seeing What’s Coming

“Most of the tools we have heavily leverage your own history. But your ability to sell a product next year is different because you don’t control everything.”

Predictive analytics enables supply chain professionals to see trends, forecast disruptions and plan proactively.

As we mentioned earlier, most forecasting tools rely too much on internal history. Predictive power comes from adding things like economic trends, labor availability, weather, etc., to your forecasting models.

My first exposure to the broader umbrella of machine learning, falling under AI, was while working at Coke. Every night, our machines processed enormous volumes of data to track how much of each type—across countless product combinations—was being used. This data was being used to predict when the fountain machines would fail so that we could prepare a replacement without losing time or operational capacity. Basically, this meant we could allocate maintenance resources proactively instead of reactively.

Machine learning doesn’t have to be intimidating. In fact, machine learning was the #1 skill in supply chain job postings in 2024. Python and machine learning tools are much more accessible than they once were, and many professionals are teaching themselves the basics using widely available online resources. Again, the democratization of AI tools means everyone can level up a lot faster.

Roles Seeing This Shift

- Demand planners and sourcing managers are combining historical sales information with things like inflation, trade wars, and taste evolutions.

- Transportation teams are integrating weather trends and traffic data to reroute loads

What Can You Do:

- Learn the basics of Python’s forecasting libraries

- Pull in a single external variable, like weather or labor availability, into your demand forecast.

- Track model accuracy over time to see where it succeeds and, most importantly, fails.
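The three steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production forecasting method: the sales and temperature figures are invented, the model is a simple one-variable least-squares line, and the names `fit_line` and `mape` are hypothetical helpers rather than functions from any forecasting library.

```python
# Hypothetical weekly history: (average temperature in deg C, units sold).
history = [
    (18, 410), (21, 455), (25, 530), (19, 430),
    (28, 580), (23, 495), (30, 610), (26, 545),
]
# Hypothetical later weeks held back to check accuracy.
holdout = [(22, 480), (29, 590), (17, 400)]

def fit_line(points):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope

def mape(actuals, forecasts):
    """Mean absolute percentage error, in percent."""
    return 100 * sum(abs(a - f) / a for a, f in zip(actuals, forecasts)) / len(actuals)

intercept, slope = fit_line(history)
actuals = [units for _, units in holdout]

# History-only baseline: forecast every week as the historical average.
average_sales = sum(units for _, units in history) / len(history)
baseline = [average_sales] * len(holdout)

# Forecast that also uses the external variable (temperature).
with_weather = [intercept + slope * temp for temp, _ in holdout]

baseline_mape = mape(actuals, baseline)
weather_mape = mape(actuals, with_weather)
print(f"history-only MAPE: {baseline_mape:.1f}%")
print(f"with-weather MAPE: {weather_mape:.1f}%")
```

Recomputing the error on each new batch of weeks is one simple way to see where the model holds up and where it starts to fail.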

Prescriptive: Deciding What to Do About It

“We don’t want analytics experts. We want people who are applied analytics or applied AI experts.”

It’s not just identifying the risk. The key is choosing a more effective path forward. And this requires modeling scenarios in a way that lets you take action rather than just be an observer.

A lot of companies stop at prediction. The ones that get ahead of the pack are those that are able to simulate outcomes and use this logic in daily decisions. Just remember that context is everything. Those with very impressive technical skills can sometimes miss the mark because they don’t understand the business. There are also supply chain planners with moderate technical skills who make major contributions because they know what matters and where to apply it.
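To make "simulate outcomes" concrete, here is a small Monte Carlo sketch that compares several candidate order quantities against simulated demand and picks the one with the best average profit. Every number in it (cost, price, demand distribution, candidate quantities) is an assumption made up for the example, and the function names are hypothetical.

```python
import random

# Hypothetical economics for a single product.
UNIT_COST = 6.0
UNIT_PRICE = 10.0
CANDIDATE_ORDERS = [400, 450, 500, 550, 600]

def simulate_demand(rng, n_scenarios=5000):
    """Draw demand scenarios; assumes roughly normal demand (mean 500, std 60)."""
    return [max(0.0, rng.gauss(500, 60)) for _ in range(n_scenarios)]

def expected_profit(order_qty, demands):
    """Average profit over all scenarios; unsold units are treated as a total loss."""
    profits = [UNIT_PRICE * min(order_qty, d) - UNIT_COST * order_qty for d in demands]
    return sum(profits) / len(profits)

rng = random.Random(42)  # fixed seed so the run is repeatable
demands = simulate_demand(rng)
results = {qty: expected_profit(qty, demands) for qty in CANDIDATE_ORDERS}
best_order = max(results, key=results.get)

for qty, profit in results.items():
    marker = "  <- best" if qty == best_order else ""
    print(f"order {qty}: average profit {profit:,.0f}{marker}")
```

The business context lives in the assumptions: change the cost of unsold stock or the demand spread and the recommended order moves, which is why knowing what matters counts as much as the code.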

The supply chain AI ladder is crucial, but only as effective as the depth of the supply chain knowledge base.

Cognitive and Integrated: When AI Starts to Work With You

This is the very top of the ladder or the tip of the AI ladder iceberg, if you will. This is the realm of AI agents that are learning and acting in an intelligent and sometimes autonomous manner. The cognitive tier blends into the integrated enterprise, where systems and data are connected. Warehouses talk to the forecast, which communicates with sourcing, which can adjust production. This is kind of futuristic, but based on how AI has evolved, it will likely be ubiquitous within a couple of years.

How to Apply Cognitive and Integrated AI:

- Learn how to build a basic GenAI or logic-based agent using online tutorials or sandbox tools

- Make sure the AI agent’s work is sound before turning it loose on your business. The human element is still crucial in these cases.

Role of Leadership in Deploying the Supply Chain AI Ladder

“This can’t be a black box to you.”

Leaders need to know just enough about AI to advocate for it. If you’ve hired the right people, then you trust them to do the job that you hired them to do. If they’re telling you that AI tools will help them do their jobs better, then listen to them. Find out what your team needs and get them to explain to you how AI can unlock more benefits for your business.

Encourage them to pursue professional development courses and to experiment in a safe environment until they feel confident integrating the tools into regular operation.

Conclusion: Don’t Stand Still and Be Left Behind

The supply chain AI ladder is real, and it’s climbable. You are not too late to get on board and begin using AI to increase your personal value at your company. It doesn’t matter how old you are - whether you’re an entry-level professional with an MBA, a mid-career professional, or a seasoned C-suite executive. There is a place on the ladder for you.

Experience and operational knowledge are the most valuable assets that employees can bring to bear right now in this tech immersion context. Those who have been in the workforce for a few years are able to mix their experiential knowledge with the tools and assets available through AI to translate technology into real-world wins for their supply chain teams. Your value increases significantly if you pair your knowledge with proactive learning tools.

Take the time to self-assess and figure out where you are on the ladder.

Don’t try to jump too high up the ladder. Take it one rung at a time. Then reassess.

Commit to the 70/20/10 rule. 70% on-the-job learning, 20% learning from peers and mentors, and 10% formal training.

Apply what you’ve learned and stay curious. Just don’t get complacent. This is not the time to rest on your laurels because someone who is hungry for knowledge will be on your heels.

This content was developed in collaboration with SCM Talent Group, a supply chain recruiting and executive search firm.

Aug. 21, 2025

Georgia Tech and Shepherd Center recently awarded four seed grants totaling nearly $200,000 to researchers focusing on projects that will advance discoveries in neurorehabilitation, including acquired brain injury, spinal cord injury, multiple sclerosis, chronic pain, and other neurological conditions.

The Georgia Tech-Shepherd Center Seed Grant Program is part of an ongoing partnership between the two institutions that started in 2023 with the goal of advancing rehabilitative patient care and research.

“The seed grant program is intended to stimulate new interdisciplinary research collaborations by providing seed funding to obtain preliminary data or prototypes necessary for the submission of an external grant or industry opportunities,” says Deborah Backus, vice president of Research and Innovation at Shepherd Center. “As two leading research institutions, we know the potential for advancing rehabilitation therapies is even greater when we work together. We look forward to the solutions, treatments, and therapies that emerge from these initial seed grants.”

Experts from both institutions evaluated and scored seed grant applications based on the research’s innovation, approach, and potential for training opportunities, as well as its anticipated impact, prospects for commercial translation, and strategy for securing continued funding. This year, each awardee team received close to $50,000.

“We are very excited to launch this new seed grant program, which will spur ideas and propel research forward,” said Michelle LaPlaca, professor in the Coulter Department of Biomedical Engineering and the Georgia Tech lead of the Collaborative. “The complementary expertise of Georgia Tech and Shepherd Center researchers, combined with the motivation to find solutions for individuals with neurological injury and disability, is a winning formula for innovation.”

“Offering new hope for neurorehabilitation patients requires bringing together interdisciplinary researchers to explore new and creative ideas,” adds Chris Rozell, Julian T. Hightower Chaired professor in the School of Electrical and Computer Engineering and the inaugural executive director of the Institute for Neuroscience, Neurotechnology, and Society (INNS) at Georgia Tech. “I'm excited to see the talent at these world class institutions coming together to develop new solutions for these complex problems.”

This year’s seed grants were awarded to the following projects:

- Proof of Concept Development of the Recovery Cushion – Stephen Sprigle, professor, School of Industrial Design and School of Mechanical Engineering, Georgia Tech; Jennifer Cowhig, research physical therapist, Shepherd Center.

- Paving a Smooth Path from Hospital to Home: A Feasibility Study of an Integrated Smart Transitional Home Lab to Support Stroke Rehabilitation Patients’ Transition to Home – John Morris, senior clinical research scientist, Shepherd Center; Hui Cai, professor in the School of Architecture, executive director of the SimTigrate Design Center, Georgia Tech.

- A Comparative Analysis of Lower-Limb Exoskeleton Technology for Non-Ambulatory Individuals with Spinal Cord Injury – Maegan Tucker, assistant professor, School of Electrical and Computer Engineering and School of Mechanical Engineering, Georgia Tech; Nicholas Evans (AP 2023), clinical research scientist, Shepherd Center.

- Improving Accessibility and Precision in Neurorehabilitation at the Point of Care with AI-Driven Remote Therapeutic Monitoring Solutions – Brad Willingham, clinical research scientist, director of Multiple Sclerosis Research, Shepherd Center; May Dongmei Wang, professor, Wallace H. Coulter Department of Biomedical Engineering, Georgia Tech.

News Contact

Kerry Ludlam

Director of Communications

Shepherd Center

Audra Davidson

Research Communications Program Manager

Institute for Neuroscience, Neurotechnology, and Society

Aug. 11, 2025

Team Atlanta, a group of Georgia Tech students, faculty, and alumni, achieved international fame on Friday when they won DARPA’s AI Cyber Challenge (AIxCC) and its $4 million grand prize.

AIxCC was a two-year-long competition to create an artificial intelligence (AI)-enabled cyber reasoning system capable of autonomously finding and patching vulnerabilities.

“This is a once in a generation competition organized by DARPA about how to utilize recent advancements in AI to use in security related tasks,” said Georgia Tech Professor Taesoo Kim.

“As hackers we started this competition as AI skeptics, but now we truly believe in the potential of adopting large language models (LLM) when solving security problems.”

The Atlantis system was Team Atlanta’s submission. Atlantis is a fuzzer, an automated tool that finds vulnerabilities or bugs in software, which the team enhanced with several different types of LLMs.
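As a rough illustration of what a fuzzer does, the sketch below mutates a seed input at random and records any input that crashes a toy parser. It is not Atlantis or any part of it: `parse_record`, its planted bug, and the single-byte mutation strategy are all hypothetical, and no LLM is involved.

```python
import random

def parse_record(data: bytes) -> int:
    """Toy target with a planted bug: it trusts a length byte without bounds checking."""
    if len(data) < 2 or data[0] != 0x7E:
        return 0  # rejected as malformed
    length = data[1]
    payload = data[2:]
    if length == 0:
        return 0
    return payload[length - 1]  # IndexError when length exceeds the payload size

def mutate(seed: bytes, rng: random.Random) -> bytes:
    """Overwrite one randomly chosen byte of the seed with a random value."""
    buf = bytearray(seed)
    buf[rng.randrange(len(buf))] = rng.randrange(256)
    return bytes(buf)

def fuzz(target, seed: bytes, iterations: int = 2000, rng_seed: int = 0):
    """Feed mutated inputs to the target and collect the ones that raise."""
    rng = random.Random(rng_seed)
    crashes = []
    for _ in range(iterations):
        candidate = mutate(seed, rng)
        try:
            target(candidate)
        except Exception as exc:
            crashes.append((candidate, type(exc).__name__))
    return crashes

crashes = fuzz(parse_record, seed=bytes([0x7E, 3, 10, 20, 30]))
print(f"{len(crashes)} crashing inputs found")
```

Real fuzzers add coverage feedback, smarter mutations, and crash triage on top of this loop; a system of the kind the article describes goes further by also patching what it finds.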

While developing the system, Team Atlanta reported that the heat put out by the GPU rack was enough to roast marshmallows.

The team was composed of hackers, engineers, and cybersecurity researchers. The Georgia Tech alumni on the team also represented their employers, which include KAIST, POSTECH, and Samsung Research. Kim is also the vice president of Samsung Research.

News Contact

John Popham

Communications Officer II at the School of Cybersecurity and Privacy

Aug. 08, 2025

Research into tailored assistive and rehabilitative devices has seen recent advancements, but the goal remains out of reach due to the sparsity of data on how humans learn complex balance tasks. To address this gap, a collaborating team of interdisciplinary faculty from Florida State University and Georgia Tech has been awarded ~$798,000 by the NSF to launch a study to better understand human motor learning and human-robot interaction dynamics during the learning process.

Led by PI Taylor Higgins, Assistant Professor, FAMU-FSU Department of Mechanical Engineering, partnering with Co-PIs Shreyas Kousik, Assistant Professor, Georgia Tech, George W. Woodruff School of Mechanical Engineering, and Brady DeCouto, Assistant Professor, FSU Anne Spencer Daves College of Education, Health, and Human Sciences, the research will use participants’ acquisition of unicycle-riding skill to gain a better grasp of human motor learning in tasks requiring balance and complex movement in space. Although it might sound a bit odd, the fact that most people don’t know how to ride a unicycle, and the fact that it requires balance, mean that the data will cover the learning process from novice to skilled across the participant pool.

Using data acquired from human participants, the team will develop a “robotics assistive unicycle” that will be used in the training of the next pool of novice unicycle riders. The aim is to gauge whether, and how rapidly, human motor learning outcomes improve with the assistive unicycle. The participants who engage with the robotic unicycle will also give valuable insight into developing effective human-robot collaboration strategies.

The fact that deciding to get on a unicycle requires a bit of bravery might not be great for the participants, but it’s great for the research team. The project will also allow exploration into the interconnection between anxiety and human motor learning to discover possible alleviation strategies, thus increasing the likelihood of positive outcomes for future patients and consumers of these devices.

Author

-Christa M. Ernst

This Article Refers to NSF Award # 2449160


Sep. 19, 2025

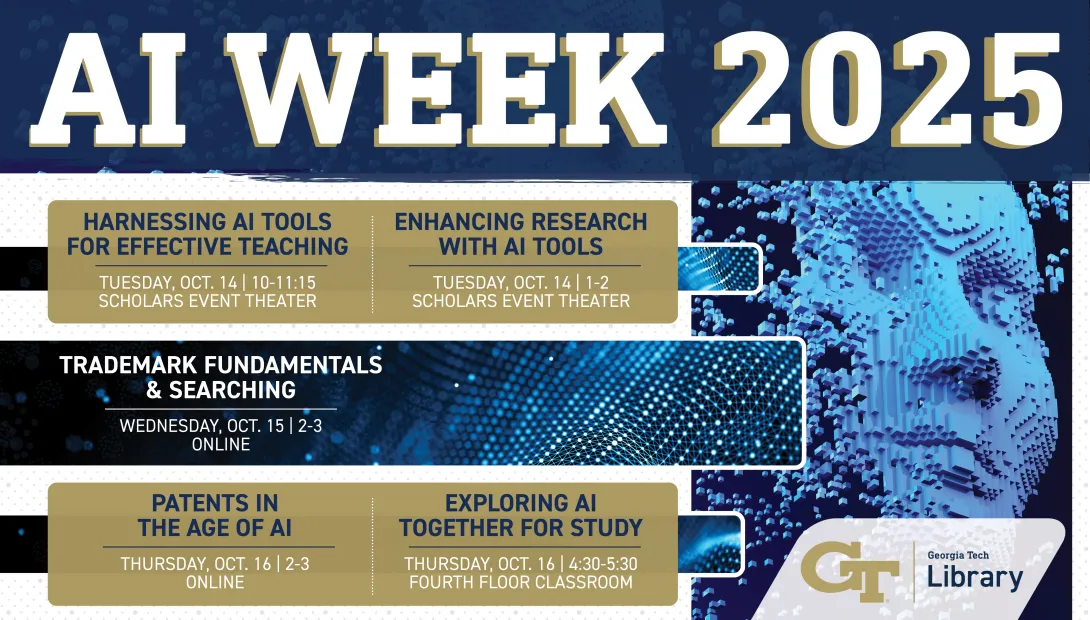

Join the Georgia Tech Library in person and virtually Tuesday, Oct. 14, through Thursday, Oct. 16, for our inaugural AI Week, a mix of panel discussions and seminars aimed at celebrating and investigating the myriad ways researchers, students, and faculty harness the burgeoning technology.

“We’re thrilled to bring this slate of events, discussions and learning opportunities to campus focused on the game-changing use of artificial intelligence happening across the Institute,” said Dean Leslie Sharp. “The Library has brought together industry experts, student practitioners and research faculty to offer a varied and intriguing set of learning opportunities for our community.”

AI Week 2025 will include five separate in-person and online events, including:

- Faculty Panel Discussion: Harnessing AI Tools to Make Teaching More Effective and Engaging

Oct. 14 | 10:30-11:15 a.m.

Scholars Event Network, first floor Price Gilbert

- Panel Discussion: Enhancing Research with AI Tools

Oct. 14 | 1-2 p.m.

Scholars Event Network, first floor Price Gilbert

- Trademark Fundamentals and Searching (ONLINE)

Oct. 15 | 2-3 p.m.

Online

- Patents in the Age of AI: Navigating the Changing Landscape (ONLINE)

Oct. 16 | 2-3 p.m.

Online

- Exploring AI Together for Study, Copilot and Firefly

Oct. 16 | 4:30-5:30 p.m.

Crosland Tower Fourth Floor Classroom
