Aug. 04, 2025

By Chris Gaffney, Managing Director, Georgia Tech Supply Chain and Logistics Institute | Supply Chain Advisor | Former Executive at Frito-Lay, AJC International, and Coca-Cola

A Personal Wake-Up Call

I’ve always considered myself a reasonably strong critical thinker—someone who asks good questions, challenges assumptions, and doesn’t adopt a viewpoint just because it’s popular. But a recent experience humbled me. I took an open-source critical thinking test and didn’t do nearly as well as I expected.

This led me down a deeper path of inquiry. I was already concerned about how two decades of social media have shaped the way we consume and respond to information—short, sensational content delivered by algorithm. And now, with the rapid rise of generative AI, I worry we may be trading our thinking for speed and scale.

I use AI tools daily, and I advocate for their use—especially in supply chain applications. But I’ve also come to believe this: if we’re not careful, we risk outsourcing the very thinking that makes us human and effective decision-makers.

Why Critical Thinking Matters More Than Ever—Especially in Supply Chain

Critical thinking isn’t just a defense mechanism—it’s a differentiator. In a world where AI can generate answers instantly, the professionals who ask the right questions will stand out.

Supply chain professionals operate in environments where second- and third-order consequences matter. We are called on to make decisions under uncertainty, weigh risks, balance competing priorities, and understand interdependencies.

Judgment—tempered by experience, structured analysis, and humility—is the edge. Tools can help you scale, but they cannot replace the human responsibility to challenge, reflect, and adjust.

What Is Critical Thinking?

Critical thinking is the ability to think clearly and rationally about what to do or believe. It involves:

- Questioning assumptions

- Evaluating evidence

- Recognizing biases (ours and others’)

- Drawing reasoned conclusions

- Reflecting on one’s own thought process

Said simply, it’s self-awareness of your thinking style—how you form your views, test them, and revise them when new evidence emerges.

It requires effort. It requires slowing down. It requires, at times, being wrong.

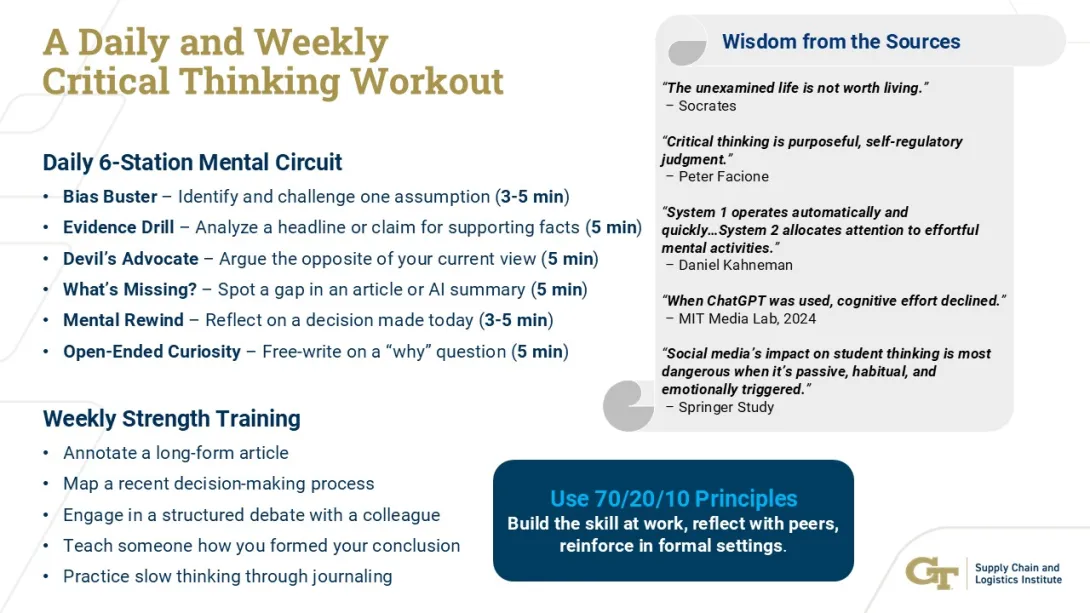

Facione, in his Delphi Report, defines it as "purposeful, self-regulatory judgment."

Kahneman reminds us that our brains are wired for shortcuts—“System 1” thinking is fast and efficient but often error-prone. True critical thinking requires “System 2” effort: slow, reflective, and disciplined.

Are We Losing It?

There’s growing evidence we are.

Social media echo chambers reduce exposure to opposing views. Short-form content conditions us to expect fast answers. And according to the MIT Media Lab (Kosmyna et al., 2024), students using ChatGPT retained less, showed reduced cognitive effort, and had lower originality.

“When ChatGPT was used, cognitive effort declined.”

And yet—this is not a moment for despair. It’s a call to discipline. Because critical thinking, practiced intentionally, can become a personal and professional superpower.

Applying Critical Thinking in Supply Chain Decisions

Supply chain professionals face complexity daily—inventory tradeoffs, supplier uncertainty, resource constraints, policy risk. Many of these decisions can’t be answered by tools alone—they require judgment. Critical thinking lives in that judgment.

Whether you're building a forecast, evaluating a supplier, responding to a disruption, or modeling risk exposure, structured thinking provides a path. The steps are familiar:

- Define the problem clearly

- Clarify what information is available—and what’s missing

- Analyze root causes or future implications

- Generate multiple options

- Establish decision criteria

- Choose a path—and test it before launch

- Monitor and adjust as feedback arrives

This process resembles A3 thinking or supply chain analytics. But what makes it powerful is doing it intentionally—even under pressure.

The best professionals I’ve worked with practice it on small decisions as well as large ones. They don’t confuse speed with clarity.

Practicing Critical Thinking When Using Generative AI

AI tools are powerful—but without deliberate use, they can dull our thinking. Here's how to make AI work with your brain—not instead of it:

- Document your assumptions before prompting

- Journal your intent: What are you trying to decide or explore?

- Ask AI to provide counterarguments or alternative views as well as sources for you to research and draw your own conclusions

- Look for what’s missing or oversimplified

- Summarize AI output in your own words

- Track and reflect on how AI influenced your decisions

Treat AI like a research assistant—not a strategist. Use it to extend your reach, not replace your reasoning.

Final Thought and Your Next Steps

Critical thinking is no longer optional. Not in business. Not in education. Not in leadership.

It is a skill. A discipline. And a mindset that pays dividends over a lifetime.

If you’ve read this far, take this challenge seriously:

- Write out how you form your opinions—on paper.

- Practice structured thinking on small problems weekly.

- Use AI with intention—never outsource your judgment.

- Teach someone else how you reached a conclusion.

- Be humble. Ask yourself: what if I’m wrong?

- Keep a thinking journal for 30 days.

The goal isn’t to be right all the time. It’s to be reflective, rigorous, open to challenge, and consistent over time. That’s what the world needs more of. That’s the edge AI can’t replicate.

So think before you automate.

And never stop questioning.

Jul. 31, 2025

Walk into any room Aleksandra Teng Ma’s been working in this summer, and you’ll probably hear a mix of experimental sounds, snippets of Amy Winehouse vocals, and the occasional Animal Crossing tune playing in the background. That’s just how her brain works—blending tech, artistry, and everyday play into something entirely her own.

Aleksandra is a master’s student in Music Technology at Georgia Tech, but “student” barely scratches the surface. This summer, she’s been everywhere—physically in Massachusetts and intellectually somewhere between a Pride performance and a human-AI jam session at MIT.

“I’m always with my microphone and MIDI keyboard,” she says, like it’s just second nature. “I love singing and coming up with tunes.”

Live from MIT — It’s Human + AI Jamming

Forget dusty textbooks and silent labs—Aleksandra’s research life is about real-time musical interactions between humans and AI. As a visiting researcher at MIT this summer, she’s digging into what it looks like when musicians "jam" with intelligent systems. Think futuristic band practice, but with algorithms joining in.

“It’s giving me a lot of exposure to co-design methodologies,” she explains, “and letting me observe how musicians respond to each other—and to AI.”

It’s not just code and theory, either. The insights come alive when she brings them to the stage. This summer, Aleksandra’s band performed at The Music Porch in Reading, MA for Pride Month. Their cover of Pink Pony Club turned into a moment she won’t forget.

“It was so fun seeing people—especially teenagers—singing and dancing together,” she says. “That’s one of those moments where I just thought, yep, this is why I picked music tech.”

From Winehouse Covers to Ableton Experiments

Despite her research chops, Aleksandra hasn’t lost touch with the joy of just making music. She sings and plays keyboard in a band, covers Amy Winehouse songs, and occasionally writes music just for fun. (Her dream studio partner? You guessed it: Amy herself.)

She’s also been expanding her technical toolkit this summer, diving deeper into sound design with Ableton and Serum.

“Still learning,” she says, “but I’m using them for sound design in songs—and loving it.”

And then there are the unexpected “whoa” moments. Like when she built a vocal patch for the Pixies’ Where Is My Mind? to use live during a performance.

“It was haunting,” she says. “And it worked so well live.”

Dream Tech and Georgia Tech

Ask Aleksandra what she’d invent if she could mash up two instruments, and she already has an idea:

“Automatic vocal effects through a microphone with a built-in amplifier,” she says, laughing. “Honestly, someone probably already made this, but I want it anyway.”

That kind of thinking is exactly what her time at Georgia Tech has sparked. Before the program, she saw music mostly through the lens of conventional instruments. Now? She’s all about how software and hardware can expand what music even is.

Her Summer, in Sound

If Aleksandra’s summer had a vibe, it’d be:

- A creek bubbling in the background

- A long, ghostly reverb trail on a siren vocal

- And the ever-cozy tones of Animal Crossing

Not exactly your typical lab soundtrack—but that’s the beauty of it.

This fall, she’s heading back to Georgia Tech after a gap year at Bose, ready to jump into research on multimodal music source separation (AKA teaching machines to pick apart and understand layers in music the way humans do).

And yes, she’ll still be singing.

Hits with Aleksandra

- Current summer jams: Rosebud by Oklou & the new Lorde album

- What people don’t “get” about her work: “How music signals work on a granular level”

Aleksandra Ma doesn’t just study music tech—she lives it. Whether she’s tweaking reverb patches, performing under porch lights, or teaching AI how to groove, she’s showing what it really means to be a 21st-century musician.

Jul. 25, 2025

As Georgia positions itself as a hub for digital infrastructure, communities across the state are facing a growing challenge: how to welcome the economic benefits of data centers while managing their significant environmental and infrastructure impacts. These facilities, essential for powering artificial intelligence, cloud computing, and everyday internet use, are also among the most resource-intensive buildings in the modern economy.

While companies like Microsoft and Google have pledged to reach net-zero emissions, experts say more transparency and smarter policy are needed to ensure that data center development aligns with community and environmental priorities. That means ensuring adequate energy infrastructure, investing in renewables, training local workers, and mitigating water and carbon impacts through innovation.

A New Kind of Energy Crunch

The rapid rise of AI is fueling explosive demand for computing power — and in turn, energy.

“The proliferation of AI workloads has significantly increased data center energy requirements,” says Divya Mahajan, assistant professor in the School of Electrical and Computer Engineering. “Large-scale AI training, especially for language models, leads to elevated and sustained power draw, often nearing the thermal and power envelopes of graphics processing unit systems.”

This sustained demand is particularly challenging in hot, humid regions like Georgia, where cooling systems must work harder. “Training these models can cause thermal instability that directly affects cooling efficiency and power provisioning,” Mahajan explains. “This amplifies reliance on external cooling infrastructure, increasing water consumption and grid strain.”

Environmental and Economic Pressure

“Each new data center could lead to greenhouse gas emissions equivalent to a small town,” says Marilyn Brown, Regents’ and Brook Byers Professor of Sustainable Systems in the School of Public Policy. “In Georgia, the growth of data centers has already led to plans for new gas plants and the extension of aging coal plants.”

There’s an environmental cost to this growth: electricity and water. A single large data center can consume up to 5 million gallons of water per day.

Rising demand has a price. “It’s simple supply and demand,” says Ahmed Saeed, assistant professor in the School of Computer Science. “As overall power demand increases, if supply doesn’t keep up, costs will rise and the most affected will be lower-income consumers.”

Still, experts are optimistic that policy and technology can help mitigate these impacts.

Innovation May Hold the Key

Despite the challenges, experts see opportunities for innovation. “Technologies like direct-to-chip cooling and liquid cooling are promising,” says Mahajan. “But they’re not yet widespread.”

Saeed notes that some companies are experimenting with radical ideas, such as Microsoft’s underwater Project Natick or data centers sited in Nordic countries, where ambient air can be used for cooling. These approaches challenge conventional infrastructure norms by placing servers underwater or in remote, cold regions. “These are exciting, but we need scalable solutions that work in places like Georgia,” he emphasizes.

What Communities Should Ask For

As communities compete to attract data centers, experts say they should push for commitments that go beyond job creation.

“Communities should ensure that their power infrastructure can handle the added load without compromising resilience or increasing costs,” Saeed advises. “They should also require that data centers use renewable energy or invest in local clean energy projects.”

Training and hiring local workers is another key benefit communities can demand. “Deployment and maintenance of data centers require skilled workers,” Saeed adds. “Operators should invest in technical training and hire locally.”

Policy Can Make the Difference

Stronger policy frameworks can ensure growth doesn’t come at the expense of Georgia’s most vulnerable communities. “We need more transparency from companies about their energy and water use,” says Brown. “And we need policies that prevent the costs of supporting large consumers from being passed on to residential ratepayers.”

Some states are already taking action. Texas passed a bill to give regulators more control over large power consumers. In Georgia, a bill that would have paused tax breaks for data centers until their community impact was assessed was vetoed — but experts say the conversation is far from over.

“Data centers are here to stay,” says Saeed. “The question is whether we can make them sustainable — before their footprint becomes too large to manage.”

Jul. 24, 2025

Computer vision enables AI to see the world. It’s already being used for self-driving vehicles, medical imaging, face recognition, and more.

Georgia Tech faculty and student experts advancing this field were in action in June at the globally renowned CVPR conference from IEEE and the Computer Vision Foundation. Georgia Tech was in the top 10% of all organizations for lead authors and the top 4% for number of papers. More than 2,000 organizations had research accepted into CVPR's main program.

Watch the video and hear from Tech experts about what’s new and what’s coming next. Featured students include College of Computing experts Fiona Ryan, Chengyue Huang, Brisa Maneechotesuwan, and Lex Whalen.

These computer vision researchers are showing how image and video data can extend AI capabilities.

HIGHLIGHTS:

- College of Computing faculty, from the Schools of Interactive Computing (IC) and Computer Science (CS), represented the majority of Tech's faculty in the CVPR papers program (8 of 10 faculty).

- IC faculty Zsolt Kira and Bo Zhu each coauthored an oral paper, a designation for the top 3% of accepted papers. IC faculty member Judy Hoffman coauthored two highlight papers, a designation for the top 20% of acceptances.

- Georgia Tech is in the top 10% of all organizations for number of first authors and the top 4% for number of papers. More than 2,000 organizations had research in the main program.

- Tech experts were on 30 research paper teams across 16 research areas. Topics with more than one Tech expert included:

• Image/video synthesis & generation

• Efficient and scalable vision

• Multi-modal learning

• Datasets and evaluation

• Humans: Face, body, gesture, etc.

• Vision, language, and reasoning

• Autonomous driving

• Computational imaging

News Contact

Joshua Preston

Communications Manager, Marketing and Research

College of Computing

jpreston7@gatech.edu

Jul. 16, 2025

The National Science Foundation (NSF) has awarded Georgia Tech and its partners $20 million to build a powerful new supercomputer that will use artificial intelligence (AI) to accelerate scientific breakthroughs.

Called Nexus, the system will be one of the most advanced AI-focused research tools in the U.S. Nexus will help scientists tackle urgent challenges such as developing new medicines, advancing clean energy, understanding how the brain works, and driving manufacturing innovations.

“Georgia Tech is proud to be one of the nation’s leading sources of the AI talent and technologies that are powering a revolution in our economy,” said Ángel Cabrera, president of Georgia Tech. “It’s fitting we’ve been selected to host this new supercomputer, which will support a new wave of AI-centered innovation across the nation. We’re grateful to the NSF, and we are excited to get to work.”

Designed from the ground up for AI, Nexus will give researchers across the country access to advanced computing tools through a simple, user-friendly interface. It will support work in many fields, including climate science, health, aerospace, and robotics.

“The Nexus system's novel approach combining support for persistent scientific services with more traditional high-performance computing will enable new science and AI workflows that will accelerate the time to scientific discovery,” said Katie Antypas, National Science Foundation director of the Office of Advanced Cyberinfrastructure. “We look forward to adding Nexus to NSF's portfolio of advanced computing capabilities for the research community.”

Nexus Supercomputer — In Simple Terms

- Built for the future of science: Nexus is designed to power the most demanding AI research — from curing diseases, to understanding how the brain works, to engineering quantum materials.

- Blazing fast: Nexus can crank out over 400 quadrillion operations per second — the equivalent of everyone in the world continuously performing 50 million calculations every second.

- Massive brain plus memory: Nexus combines the power of AI and high-performance computing with 330 trillion bytes of memory to handle complex problems and giant datasets.

- Storage: Nexus will feature 10 quadrillion bytes of flash storage, equivalent to about 10 billion reams of paper. Stacked, that’s a column reaching 500,000 km high — enough to stretch from Earth to the moon and a third of the way back.

- Supercharged connections: Nexus will have lightning-fast connections to move data almost instantaneously, so researchers do not waste time waiting.

- Open to U.S. researchers: Scientists from any U.S. institution can apply to use Nexus.
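The comparisons in the list above can be checked with a few lines of arithmetic. The sketch below is a rough back-of-envelope check, not an official calculation: the world population (about 8 billion), the thickness of a 500-sheet ream (about 5 cm), and the mean Earth-moon distance (about 384,400 km) are assumptions that do not appear in the article.

```python
# Back-of-envelope check of the Nexus comparisons.
# Figures quoted in the article:
OPS_PER_SECOND = 400e15   # "over 400 quadrillion operations per second"
FLASH_BYTES = 10e15       # "10 quadrillion bytes of flash storage"
REAMS = 10e9              # "about 10 billion reams of paper"

# Assumptions (NOT from the article):
WORLD_POPULATION = 8e9    # roughly 8 billion people
REAM_THICKNESS_M = 0.05   # a 500-sheet ream is roughly 5 cm thick
EARTH_MOON_KM = 384_400   # approximate mean Earth-moon distance

# Calculations per person per second if the work were shared by everyone.
ops_per_person = OPS_PER_SECOND / WORLD_POPULATION

# Bytes implied per ream by the storage comparison.
bytes_per_ream = FLASH_BYTES / REAMS

# Height of the stacked reams, converted from meters to kilometers.
stack_km = REAMS * REAM_THICKNESS_M / 1000

# How far back toward Earth the stack reaches after passing the moon.
fraction_of_return_trip = (stack_km - EARTH_MOON_KM) / EARTH_MOON_KM

print(f"Operations per person per second: {ops_per_person:,.0f}")
print(f"Bytes per ream: {bytes_per_ream:,.0f}")
print(f"Stack height: {stack_km:,.0f} km")
print(f"Fraction of the return trip covered: {fraction_of_return_trip:.2f}")
```

Under these assumptions the quoted figures are consistent with one another: each person would perform about 50 million calculations per second, and the paper stack would reach roughly 500,000 km, which is past the moon and about 30% of the way back.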

Why Now?

AI is rapidly changing how science is investigated. Researchers use AI to analyze massive datasets, model complex systems, and test ideas faster than ever before. But these tools require powerful computing resources that — until now — have been inaccessible to many institutions.

This is where Nexus comes in. It will make state-of-the-art AI infrastructure available to scientists all across the country, not just those at top tech hubs.

“This supercomputer will help level the playing field,” said Suresh Marru, principal investigator of the Nexus project and director of Georgia Tech’s new Center for AI in Science and Engineering (ARTISAN). “It’s designed to make powerful AI tools easier to use and available to more researchers in more places.”

Srinivas Aluru, Regents’ Professor and senior associate dean in the College of Computing, said, “With Nexus, Georgia Tech joins the league of academic supercomputing centers. This is the culmination of years of planning, including building the state-of-the-art CODA data center and Nexus’ precursor supercomputer project, HIVE.”

Like Nexus, HIVE was supported by NSF funding. Both Nexus and HIVE are supported by a partnership between Georgia Tech’s research and information technology units.

A National Collaboration

Georgia Tech is building Nexus in partnership with the National Center for Supercomputing Applications at the University of Illinois Urbana-Champaign, which runs several of the country’s top academic supercomputers. The two institutions will link their systems through a new high-speed network, creating a national research infrastructure.

“Nexus is more than a supercomputer — it’s a symbol of what’s possible when leading institutions work together to advance science,” said Charles Isbell, chancellor of the University of Illinois and former dean of Georgia Tech’s College of Computing. “I'm proud that my two academic homes have partnered on this project that will move science, and society, forward.”

What’s Next

Georgia Tech will begin building Nexus this year, with completion expected in spring 2026. Once Nexus is finished, researchers can apply for access through an NSF review process. Georgia Tech will manage the system, provide support, and reserve up to 10% of its capacity for its own campus research.

“This is a big step for Georgia Tech and for the scientific community,” said Vivek Sarkar, the John P. Imlay Dean of Computing. “Nexus will help researchers make faster progress on today’s toughest problems — and open the door to discoveries we haven’t even imagined yet.”

News Contact

Siobhan Rodriguez

Senior Media Relations Representative

Institute Communications

Jul. 14, 2025

By Chris Gaffney, Managing Director, Georgia Tech Supply Chain and Logistics Institute | Supply Chain Advisor | Former Executive at Frito-Lay, AJC International, and Coca-Cola

Every few weeks these days, a new AI breakthrough makes headlines. Models get sharper and more capable. Language tools get more fluent. Claims of agent breakthroughs and embedded autonomy in tools are everywhere.

And each time, the question resurfaces: What’s left for people to do as this wave progresses?

It’s a fair question. But from what I’ve seen throughout my career—from managing logistics in a Frito-Lay regional DC to transportation and distribution operations at AJC International and Coca-Cola, and now through executive education, consulting, and applied research at Georgia Tech—I believe we’re asking the wrong question.

Instead of asking what AI can do, we should be asking: Where is the human edge—and how do we keep it sharp?

1. Collaboration Across Boundaries Still Wins the Day

Whether in manufacturing, logistics, commercial and customer teams, or strategy, success still hinges on people working together—often across silos, systems, or supply chains. At Coca-Cola, some of the most impactful progress we made didn’t come from technology upgrades. It came from aligning teams that didn’t naturally collaborate—finance with planning, supply chain with sales, bottlers with company.

From what I see in my advisory work and interviews with supply chain leaders, that hasn’t changed. AI can improve visibility. It can suggest decisions. But it doesn’t build consensus, resolve conflicts, or create shared understanding. That’s human work—and it often makes the difference between potential and progress.

2. When the Plan Breaks, People Step Up

During my time in global logistics at AJC International, unexpected events were the norm: shipping delays, capacity shortages, regulatory changes. AI may help flag risks, but when the plan breaks, it’s still people who step in, prioritize under pressure, and find creative solutions.

This same theme came up in a recent SCM Talent podcast conversation. When I asked a senior supply chain leader what traits define her most effective team members, she didn’t hesitate:

“A drive for results. Problem solving. The ability to work in teams. And the ability to influence others.”

Those aren’t going out of style. They’re still what carries teams forward when the data model breaks or the shipment gets stuck.

The professionals I see excelling—especially in moments of disruption—aren’t just technical experts. They’re problem solvers who own the outcome and stay focused when others get stuck.

Drive, persistence, and adaptability aren’t things you automate. They’re human qualities that remain essential.

3. Hands-On Context Isn’t a Field Trip—It’s a Foundation

At Frito-Lay, I worked in a regional distribution center and breakbulk operation managing warehouse activities and dispatching drivers. Later, I spent a full year as an operations manager at one of our plants, where I led drivers and worked with plant warehouse teams and schedulers to ensure load readiness and on-time dispatch to local DCs.

Those weren’t just jobs—they were formative experiences. They taught me how decisions affect execution in the real world, and how the rhythm of operations shapes everything else in the supply chain.

That’s why I firmly believe professionals—especially early in their careers—should spend 3 to 5 years in front-line roles. No AI tool can replicate the kind of intuition you build by seeing how things work, where they break, and how people respond in real time. That foundation lasts an entire career.

4. Communication and Leadership Will Always Matter

In every role I’ve had—from the plant floor to corporate teams to Georgia Tech—I’ve seen that clear communication and authentic leadership are force multipliers. They carry more weight now, not less.

AI might help with drafting, summarizing, or visualizing, but it doesn’t earn trust. It doesn’t mentor a new team member or guide a group through a difficult change. That takes listening, emotional intelligence, and personal credibility.

Those leading change in today’s organizations—whether rolling out a new system or rebuilding after disruption—are the ones who can communicate with clarity and lead with steadiness. That’s not something AI can learn.

5. The Edge Is Where Humans Live

There’s a space at the boundary of every operation—the “edge”—where plans meet real-world variability. And that’s where humans remain essential.

Whether it’s spotting an issue before it escalates, reading between the lines of a conversation, or connecting seemingly unrelated problems across functions, that kind of judgment is rooted in experience. It can’t be downloaded or inferred from data alone.

In my work at Georgia Tech, across executive education, consulting, and applied research, I regularly see the difference it makes when decision-makers bring not just technical knowledge, but lived context from the field. That human edge is where resilience is built—and where strategy becomes reality.

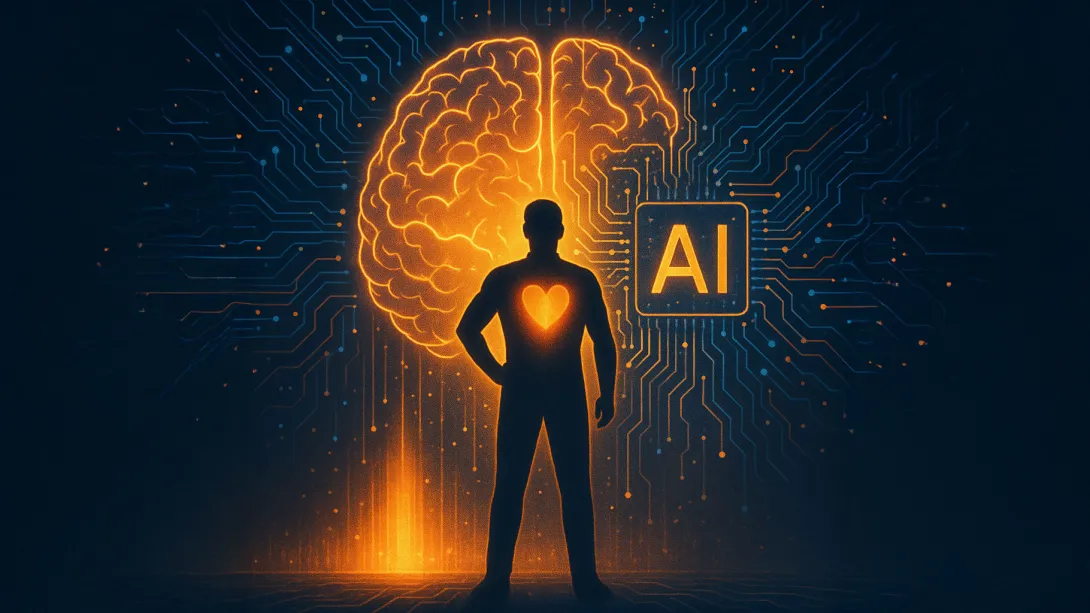

6. Humans and AI: Better Together

To be clear: this isn’t about rejecting AI. The smartest teams I work with aren’t afraid of it—they’re learning how to use it. AI tools can improve productivity, identify trends, and help people make better decisions. But they need to be paired with human insight.

AI suggests. People choose. AI speeds up planning. People keep it grounded. The professionals who combine digital fluency with interpersonal skill, operational awareness, and strategic judgment? Those are the ones who will lead in the next era.

So What Should You Do?

If you want to build a career that endures—and evolves—with AI, here are seven things I recommend:

- Invest in the front line. Not just a tour. Spend 3–5 years in a real operations or customer-facing role. It will shape how you lead for decades.

- Build bridges. Learn how sales thinks. Understand finance’s constraints. Connect systems, teams, and people.

- Volunteer when the extra project comes up. These stretch roles are often tied to strategic initiatives and senior leadership. Saying yes can accelerate learning and visibility—especially when others hesitate.

- Take roles at the intersections—not the cul-de-sacs. Look for positions that connect functions, partners, or ecosystems. Exposure to diverse perspectives sharpens insight and multiplies your value.

- Sharpen your communication. Speak with intent. Write with clarity. Listen deeply. These skills amplify everything else.

- Evolve with AI—or fall behind. You don’t need to code, but you do need to understand how AI is changing your domain. Through continuing education, hands-on learning, or professional development, stay curious and current.

- Never stop learning. At Georgia Tech, I see firsthand how ongoing learning—through executive education, research engagement, or new assignments—helps professionals lead through change. Keep asking: what haven’t I seen yet? Who could I learn from?

Final Thoughts

The future of work isn’t about humans vs. machines. It’s about people who can lead, decide, and connect—with AI as their force multiplier.

We may automate tasks. But judgment, trust, and empathy? Those are human domains. And in times of uncertainty, it’s the people who can navigate complexity, rally teams, and adapt with integrity who make the difference.

So yes, learn the tools. Embrace the change. But never underestimate the power of experience, context, and connection.

That’s your edge. And that’s not going anywhere.

Jul. 10, 2025

Georgia Tech has been recognized in a new IDC white paper, A Blueprint for AI‑Ready Campuses: Strategies from the Frontlines of Higher Education, as a national leader in deploying artificial intelligence across higher education. The report, published in partnership with Microsoft, highlights Georgia Tech’s comprehensive approach to integrating AI into teaching, research, and campus operations.

The Institute is one of only four U.S. universities featured in the report, joining Auburn University, Babson College, and the University of North Carolina at Chapel Hill.

“AI isn’t a single system or application—it’s a new foundation for how we work, teach, and learn,” said Leo Howell, Georgia Tech’s chief information security officer. “Our goal is to expose people to as many tools as possible, creating an ‘AI for All’ strategy that ensures everyone at Georgia Tech can leverage AI to enhance their work and learning experiences.”

Georgia Tech’s approach centers on a “persona-based model,” tailoring AI tools and resources to meet the needs of students, faculty, researchers, and administrators. That personalized approach, according to the report, is what makes Georgia Tech’s efforts both scalable and sustainable.

The white paper also emphasizes the importance of industry partnerships in Georgia Tech’s strategy. Through collaborations with Microsoft, OpenAI, and NVIDIA, the Institute is deploying advanced AI technologies while preparing students for the demands of an AI-driven workforce.

Georgia Tech’s success lies in its flexibility, the report notes. The Institute tests AI tools through targeted pilots, gathers user feedback, and rapidly iterates to improve outcomes. This adaptive mindset is recommended as a best practice for other institutions navigating their own AI transformation.

The full IDC white paper is available for download here.

News Contact

Breon Martin

AI Marketing Communications Manager

Jul. 03, 2025

As strange as it sounds, the key to understanding life’s origins might lie in artificial intelligence. At least, that is the idea behind a new approach being pursued by researchers at Georgia Tech.

School of Electrical and Computer Engineering (ECE) Assistant Professor Amirali Aghazadeh and Ph.D. student Daniel Saeedi have developed AstroAgents, an AI system that analyzes mass spectrometry data — detailed chemical compositions from meteorites and Earth soil samples — to generate novel hypotheses about the origins of life on the planet.

What sets AstroAgents apart is its use of agentic AI. Unlike traditional AI systems that perform fixed tasks, this agentic system is designed to pursue a scientific goal. It draws from astrobiology literature, interprets complex data, and proposes original ideas that researchers can investigate further.

Their paper, recently featured in the journal "Nature", is opening new possibilities for how scientists explore questions that have remained unanswered for decades.

In a special Q&A, Aghazadeh and Saeedi explain how AstroAgents analyzes space chemistry, what it’s revealing about the possible origins of life on Earth, and what they hope to explore next.

News Contact

Dan Watson

Jun. 27, 2025

A team of Georgia Tech graduate students is using artificial intelligence (AI) to help people with disabilities find their dream jobs.

Searching for the right job is stressful for most, but it can be overwhelming for people with disabilities. However, using an innovative approach, the student entrepreneurs created a customizable AI-powered "job coach" that connects people with accessible employment opportunities.

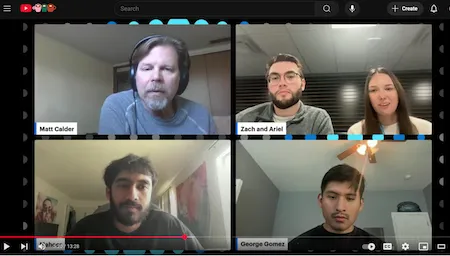

OMSCS students George Gomez, Ariel Magyar, Zachary Patrignani, and Maheer Sayeed created Interstellar Jobs as their entry for the March 2025 Microsoft Azure Innovation Challenge. The team beat over 70 international entries to secure first place and $10,000.

Interstellar Jobs uses information about job seekers' disabilities, job preferences, and other personal details to provide detailed coaching tips for specific jobs. The tips let job seekers know if they're a good fit for the position, what challenges they can expect, and what they can do to manage these challenges successfully.

The challenge, co-sponsored by TechBridge, required teams to create a functional proof of concept within a tight timeframe using AI, analytics, networking, and other Microsoft Azure Web Services.

Selecting which services to use was the starting point for most teams. In fact, Sayeed says most of the competition tried to use as many Azure services as possible for their projects.

"We didn't do that. We kept it simple," said Sayeed.

"Our mindset going into the challenge was that we'd find the problem first, and then we would look at the services we would use."

Their entrepreneurial approach led the team to develop Interstellar Jobs using just three Azure services. As an example of their approach, the team faced the challenge of addressing specific disabilities in relation to thousands of job listings.

Developers usually depend on drop-down menus when presenting an extensive list of options. However, this method might not cover all disabilities or could use outdated or overly broad language. It also wouldn't account for people with multiple or nuanced disabilities that don't fit neatly into a single category.

The Interstellar Jobs team opted for a blank field for users to list their disabilities.

"We kept it very open-ended for our users," said Sayeed.

The team used OpenAI Service to 'clean' entries on the backend, regardless of what users wrote in the blank field. This method ensures that users can always get a structured and actionable response from Interstellar Jobs.
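The article does not show how the team's backend cleaning works, so the following is only a minimal sketch of the general pattern: send the free-text entry to a language model, ask for JSON, and validate the reply before using it. Every name here (`build_prompt`, `clean_entry`, `fake_model`, and the `conditions` and `accommodations` fields) is hypothetical, and `fake_model` is a stand-in for a hosted model call rather than any real Azure or OpenAI API.

```python
import json


def build_prompt(free_text):
    # Hypothetical prompt; the team's actual wording is not given in the article.
    return (
        "Rewrite the user's description as JSON with keys "
        '"conditions" (list of strings) and "accommodations" (list of strings). '
        "User text: " + free_text
    )


def as_clean_list(value):
    # Accept only lists, and drop entries that are empty after trimming.
    if not isinstance(value, list):
        return []
    return [str(item).strip() for item in value if str(item).strip()]


def clean_entry(free_text, call_model):
    """Turn a free-text entry into a structured record.

    call_model is any function taking a prompt string and returning a string.
    """
    text = free_text.strip()
    raw = call_model(build_prompt(text))
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        data = {}  # Malformed reply: fall back to an empty structure.
    if not isinstance(data, dict):
        data = {}
    return {
        "conditions": as_clean_list(data.get("conditions")),
        "accommodations": as_clean_list(data.get("accommodations")),
        "original_text": text,  # Keep the user's own words alongside the structure.
    }


def fake_model(prompt):
    # Stand-in for a real model call, so the sketch runs offline.
    return '{"conditions": ["low vision"], "accommodations": ["screen reader"]}'


record = clean_entry("  I have low vision and use a screen reader ", fake_model)
print(record["conditions"], record["accommodations"])
```

Because the model's reply is validated instead of trusted, the caller always receives the same three fields, even when the reply is malformed. That is one plausible way to get a consistently structured response from an open-ended input field.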

"As a user, not having to pick from a drop-down menu just feels good," said Matt Calder, senior product marketing manager at Microsoft.

Calder hosts Microsoft DevRadio and recently interviewed the Interstellar Jobs team. "I like how your approach changes how people interact with the whole system. If you make something really usable, it's going to be accessible as well," said Calder.

Despite its success, the team has no immediate plans to expand Interstellar Jobs. Each member balances a full-time job and their studies in Georgia Tech's Online Master of Science in Computer Science (OMSCS) program.

"We gained so much about cloud development and Azure Web Services from the experience," said Sayeed. "We also learned the value of AI in these applications."

News Contact

Ben Snedeker, Communications Manager II

Georgia Tech College of Computing

Jun. 26, 2025

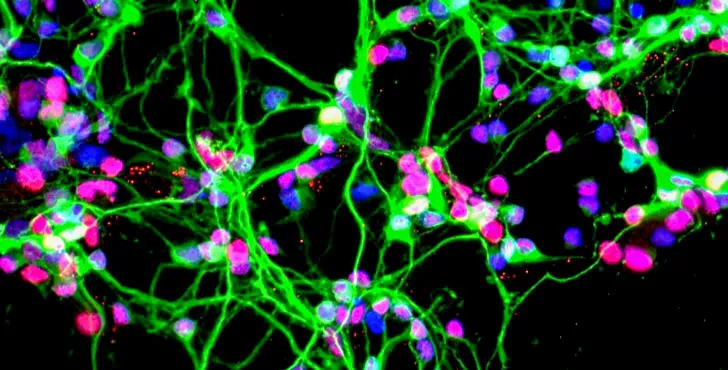

Researchers at Georgia Tech have taken a critical step forward in creating efficient, useful and brain-like artificial intelligence (AI). The key? A new algorithm that results in neural networks with internal structure more like the human brain.

The study, “TopoNets: High-Performing Vision and Language Models With Brain-Like Topography,” was awarded a spotlight at this year’s International Conference on Learning Representations (ICLR), a distinction given to only 2 percent of papers. The research was led by graduate student Mayukh Deb alongside School of Psychology Assistant Professor Apurva Ratan Murty.

Thirty-two of Tech’s computing, engineering, and science faculty represented the Institute at ICLR 2025, which is globally renowned for sharing cutting-edge research.

“We started with this idea because we saw that AI models are unstructured, while brains are exquisitely organized,” says first-author Deb. “Our models with internal structure showed more than a 20 percent boost in efficiency with almost no performance losses. And this is out-of-the-box — it’s broadly applicable to other models with no extra fine-tuning needed.”

For Murty, the research also underscores the importance of a rapidly growing field of research at the intersection of neuroscience and AI. “There's a major explosion in understanding intelligence right now,” he says. “The neuro-AI approach is exciting because it helps emulate human intelligence in machines, making AI more interpretable.”

“In addition to advancing AI, this type of research also benefits neuroscience because it informs a fundamental question: Why is our brain organized the way it is?” Deb adds. “Making AI more interpretable helps everyone.”

Brain-inspired blueprints

In the brain, neurons form topographic maps: neurons used for comparable tasks are closer together. The researchers applied this concept to AI by organizing how internal components (like artificial neurons) connect and process information.

This type of organization has been tried in the past but has been challenging, Murty says. “Historically, rules constraining how the AI could structure itself often resulted in lower-performing models. We realized that for this type of biophysical constraint, you simply can’t map everything — you need an algorithmic solution.”

“Our key insight was an algorithmic trick that gives the same structure as brains without enforcing things that models don't respond well to,” he adds. “That breakthrough was what Mayukh (Deb) worked on.”

The algorithm, called TopoLoss, uses a loss function to encourage brain-like organization in artificial neural networks, and it is compatible with many AI systems capable of understanding language and images.

“The resulting training method, TopoNets, is very flexible and broadly applicable,” Murty says. “You can apply it to contemporary models very easily, which is a critical advancement when compared to previous methods.”
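The article does not give the TopoLoss formula, so the sketch below is not the researchers' method. It only illustrates the general idea of using a loss term to encourage topographic organization, assuming a simple neighbor-smoothness penalty: units are laid out on a 2D grid, and the penalty grows when adjacent units have dissimilar weights. The function names and the `strength` parameter are hypothetical.

```python
def neighbor_smoothness_penalty(sheet):
    """Mean squared difference between weight vectors of adjacent grid units.

    sheet is a list of rows; each cell is a list of floats (one unit's weights).
    Lower values mean that nearby units are more alike.
    """
    rows, cols = len(sheet), len(sheet[0])
    total, pairs = 0.0, 0
    for r in range(rows):
        for c in range(cols):
            # Compare each unit with its right and lower neighbor only,
            # so every adjacent pair is counted exactly once.
            for dr, dc in ((0, 1), (1, 0)):
                rr, cc = r + dr, c + dc
                if rr < rows and cc < cols:
                    total += sum(
                        (a - b) ** 2 for a, b in zip(sheet[r][c], sheet[rr][cc])
                    )
                    pairs += 1
    return total / pairs


def total_loss(task_loss, sheet, strength=0.1):
    # The organizing term is added to the ordinary task loss, so training
    # trades off accuracy against spatial smoothness.
    return task_loss + strength * neighbor_smoothness_penalty(sheet)


# A smoothly organized 4x4 sheet: weights change gradually across the grid.
smooth = [[[float(r), float(c)] for c in range(4)] for r in range(4)]
# The same kind of values, arranged so that neighbors differ more.
scrambled = [[[float((3 * r) % 4), float((3 * c) % 4)] for c in range(4)] for r in range(4)]

print(neighbor_smoothness_penalty(smooth))
print(neighbor_smoothness_penalty(scrambled))
```

The scrambled sheet scores a higher penalty than the smooth one, so minimizing the combined loss pushes a network toward the smoother, map-like layout. A soft penalty of this kind also fits Murty's description of encouraging structure rather than enforcing hard rules.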

Neuro-AI innovations

Murty and Deb plan to continue refining and designing brain-inspired AI systems. “All parts of the brain have some organization — we want to expand into other domains,” Deb says. “On the neuroscience side of things, we want to discover new kinds of organization in brains using these topographic systems.”

Deb also cites possibilities in robotics, especially in situations like space exploration where resources are limited. “Imagine running a model inside a robot with limited power,” he says. “Structured models can help us achieve 80 percent of performance with just 20 percent of energy consumption, saving valuable energy and space. This is still experimental, but it's the direction we are interested in exploring.”

“This success highlights the potential of a new approach, designing systems that benefit both neuroscience and AI — and beyond,” Murty adds. “We can learn so much from the human brain, and this project shows that brain-inspired systems can help current AI be better. We hope our work stimulates this conversation.”
