Feb. 23, 2026

A Georgia Tech Ph.D. candidate is getting a boost to his research into developing more efficient multi-tasking artificial intelligence (AI) models without fine-tuning.

George Stoica is one of 38 Ph.D. students worldwide researching machine learning who were named 2025 Google Ph.D. Fellows.

Stoica is designing AI training methods that bypass fine-tuning, which is the process of adapting a large pre-trained model to perform new tasks. Fine-tuning is one of the most common ways engineers update large language models like ChatGPT, Gemini, and Claude to add new capabilities.

If an AI company wants to give a model a new capability, it could create a new model from scratch for that specific purpose. However, if the model already has relevant training and knowledge of the new task, fine-tuning is cheaper.

Stoica argues that fine-tuning still uses large amounts of data, and that other methods can help models learn more effectively and efficiently.

“Full fine-tuning yields strong performance, but it can be costly, and it risks catastrophic forgetting,” Stoica said. “My research asks if we can extend a model’s capabilities by imbuing it with the expertise of others, without fine-tuning?

“Reducing cost and improving efficiency is more important than ever. We have so many publicly available models that have been trained to solve a variety of tasks. It’s redundant to train a new model from scratch. It’s much more efficient to leverage the information that already exists to get a model up to speed.”

Stoica said the solution is a cost-effective method called model merging. This method combines two or more AI models into a single model, improving performance without fine-tuning.

On a basic level, Stoica said an example would be combining a model that is good at classifying cats with one that is good at classifying dogs.

“Merging is cheap because you just take the parameters, the weights of your existing models, and combine them,” he said. “You could take the average of the weights to create a new model, but that sometimes doesn’t work. My work has aimed to rearrange the weights so they can communicate easily with each other.”
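The simple averaging baseline Stoica mentions can be sketched in a few lines. This is a minimal illustration, not his method: the dictionary-of-lists weight format, the `average_weights` helper, and the toy cat and dog models are all assumptions made for the example.

```python
from typing import Dict, List

# Hypothetical weight format: parameter name -> flat list of values.
Weights = Dict[str, List[float]]


def average_weights(models: List[Weights]) -> Weights:
    """Merge models by taking the element-wise mean of each parameter."""
    if not models:
        raise ValueError("need at least one model")
    merged: Weights = {}
    for name, values in models[0].items():
        for other in models[1:]:
            # Averaging only makes sense when the architectures line up.
            if name not in other or len(other[name]) != len(values):
                raise ValueError(f"parameter {name!r} does not match across models")
        merged[name] = [
            sum(m[name][i] for m in models) / len(models)
            for i in range(len(values))
        ]
    return merged


# Toy stand-ins for a cat classifier and a dog classifier.
cat_model = {"layer1": [0.25, 0.5], "layer2": [1.0]}
dog_model = {"layer1": [0.75, 0.0], "layer2": [3.0]}
merged = average_weights([cat_model, dog_model])
print(merged)
```

No training data or gradient steps are involved, which is why merging is cheap. Plain averaging assumes that matching positions in the two models play the same role, which is the assumption that sometimes fails and that weight rearrangement is meant to address.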

Through his Google fellowship, Stoica seeks to apply model merging to create a cutting-edge vision encoder. A vision encoder converts image or video data into numerical representations that computers can understand. This enables tasks such as image or facial recognition and generative image captioning.

“I want to be at the frontier of the field, and Google is clearly part of that,” Stoica said. “The vision encoder is very large-scale, and Google has the infrastructure to accommodate it.”

Feb. 19, 2026

A new robot could solve one of the biggest challenges facing indoor farmers: manual pollination.

Indoor farms, also known as vertical farms, are popular among agricultural researchers and are expanding across the agricultural industry. Some benefits they have over outdoor farms include:

- Year-round production of food crops

- Lower water and land requirements

- Not needing pesticides

- Reducing carbon emissions from shipping

- Reducing food waste

Additionally, some studies indicate that indoor farms produce more nutritious food for urban communities.

However, these farms are often inaccessible to birds, bees, and other natural pollinators, leaving the pollination process to humans. The tedious process must be completed by hand for each flower to ensure the indoor crop flourishes.

Ai-Ping Hu, a principal research engineer at the Georgia Tech Research Institute (GTRI), has spent years exploring methods to efficiently pollinate flowering plants and food crops in indoor farms.

Hu, Assistant Professor Shreyas Kousik of the George W. Woodruff School of Mechanical Engineering, and a rotating group of student interns have developed a robot prototype that may be up to the task.

The robot can efficiently pollinate plants that have both male and female reproductive parts. These plants only require pollen to be transferred from one part to the other rather than externally from another flower.

Natural pollinators perform this task outdoors, but Hu said indoor farmers often use a paintbrush or electric toothbrush to ensure these flowers are pollinated.

Knowing the Pose

An early challenge the research team addressed was teaching the robot to identify the “pose” of each flower. Pose refers to a flower’s orientation, shape, and symmetry. Knowing these details ensures precise delivery of the pollen to maximize reproductive success.

“It’s crucial to know exactly which way the flowers are facing,” Hu said.

“You want to approach the flower from the front because that’s where all the biological structures are. Knowing the pose tells you where the stem is. Our device grasps the stem and shakes it to dislodge the pollen.

“Every flower is going to have its own pose, and you need to know what that is within at least 10 degrees.”
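As a rough illustration of the 10-degree tolerance Hu describes, the check below compares an estimated facing direction with a reference direction. The vector representation and the function names are assumptions for this sketch, not details of the team's system.

```python
import math


def angle_between_deg(a, b):
    """Angle in degrees between two 3D direction vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("direction vectors must be non-zero")
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.degrees(math.acos(cos_angle))


def pose_is_usable(estimated, reference, tolerance_deg=10.0):
    """True when the estimated facing direction is within the tolerance."""
    return angle_between_deg(estimated, reference) <= tolerance_deg


# A flower facing straight along +z, and estimates tilted by 5 and 20 degrees.
facing = (0.0, 0.0, 1.0)
tilt_5 = (math.sin(math.radians(5)), 0.0, math.cos(math.radians(5)))
tilt_20 = (math.sin(math.radians(20)), 0.0, math.cos(math.radians(20)))
print(pose_is_usable(tilt_5, facing), pose_is_usable(tilt_20, facing))
```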

Computer Vision Breakthrough

Harsh Muriki, a robotics master’s student in Georgia Tech’s School of Interactive Computing, used computer vision to solve the pose problem while interning for Hu and GTRI.

Muriki attached a camera to a FarmBot to capture images of strawberry plants from dozens of angles in a small garden in front of Georgia Tech’s Food Processing Technology Building. The FarmBot is an XYZ-axis robot that waters and sprays pesticides on outdoor gardens, though it is not capable of pollination.

“We reconstruct the images of the flower into a 3D model and use a technique that converts the 3D model into multiple 2D images with depth information,” Muriki said. “This enables us to send them to object detectors.”

Muriki said he used a real-time object detection system called YOLO (You Only Look Once) to classify objects. YOLO is known for identifying and classifying objects in a single pass.
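The conversion Muriki describes, from a 3D model to 2D images that carry depth information, can be approximated with a pinhole-camera projection. The sketch below is an assumption-laden illustration, not the team's pipeline: the point-list input, the `render_depth_image` name, and the camera parameters are all hypothetical.

```python
import math


def render_depth_image(points, width, height, focal_px):
    """Project camera-frame 3D points (x, y, z with z pointing forward)
    onto a width x height grid, keeping the nearest depth per pixel."""
    depth = [[math.inf] * width for _ in range(height)]
    cx, cy = width / 2.0, height / 2.0  # principal point at the image center
    for x, y, z in points:
        if z <= 0:
            continue  # behind the camera
        u = math.floor(focal_px * x / z + cx)
        v = math.floor(focal_px * y / z + cy)
        if 0 <= u < width and 0 <= v < height and z < depth[v][u]:
            depth[v][u] = z
    return depth


points = [
    (0.0, 0.0, 2.0),   # hidden behind the next point
    (0.0, 0.0, 1.0),   # nearest point along the optical axis
    (1.0, 0.0, 2.0),   # lands one pixel to the right
    (10.0, 0.0, 1.0),  # falls outside the image
    (0.0, 0.0, -1.0),  # behind the camera
]
depth = render_depth_image(points, width=4, height=4, focal_px=2.0)
print(depth[2])
```

Rendering the same 3D model from several virtual camera positions would yield multiple 2D views, each of which could then be passed to a 2D object detector.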

Ved Sengupta, a computer engineering major who interned with Muriki, fine-tuned the algorithms that converted 3D images into 2D.

“This was a crucial part of making robot pollination possible,” Sengupta said. “There is a big gap between 3D and 2D image processing.

“There’s not a lot of data on the internet for 3D object detection, but there’s a ton for 2D. We were able to get great results from the converted images, and I think any sector of technology can take advantage of that.”

Sengupta, Muriki, and Hu co-authored a paper about their work that was accepted to the 2025 International Conference on Robotics and Automation (ICRA) in Atlanta.

Measuring Success

The pollination robot, built in Kousik’s Safe Robotics Lab, is now in the prototype phase.

Hu said the robot can do more than pollinate. It can also analyze each flower to determine how well it was pollinated and whether the chances for reproduction are high.

“It has an additional capability of microscopic inspection,” Hu said. “It’s the first device we know of that provides visual feedback on how well a flower was pollinated.”

For more information about the robot, visit the Safe Robotics Lab project page.

News Contact

Nathan Deen

College of Computing

Georgia Tech

Feb. 24, 2026

By Chris Gaffney, Managing Director of the Georgia Tech Supply Chain and Logistics Institute, Supply Chain Advisor, and former executive at Frito‑Lay, AJC International, and Coca‑Cola, and Michael Barnett, Founder and Principal of Synaptic SC, former global leader of Supply Chain AI at BCG, and former executive at Aera Technology and Koch Industries.

Entering 2026, one thing is clear: staying on the sidelines is no longer a viable option. We both agree that 2025 was the last year when being “behind” on AI adoption could be rationalized. In 2026, leaders cannot stay in the foxhole. They need to move forward, doing so in a way that reduces the risk of failure.

The past two years have been full of promise for AI in supply chain: we have seen impressive pilots, compelling research findings, and no shortage of claims about what agents and large language models can do. At the same time, many supply chain leaders are frustrated; there has been significant activity and investment in centralized capabilities without meaningful results in the supply chain. Too many efforts stall. Too many pilots never scale. Many organizations feel they have kissed a lot of frogs and are still waiting for something that works reliably.

The question for 2026 is no longer whether to engage with AI, but how to do so in a way that consistently delivers results. This is the year to put points on the board through disciplined, repeatable progress rather than moonshots.

Two Principles Separate Progress from Experimentation

Across our work and conversations with supply chain leaders, organizations that are driving tangible results tend to follow two principles, sometimes explicitly, sometimes intuitively:

1. Leverage GenAI Where It Adds Differential Value

Large language models are exceptionally strong at working with language. They summarize, explain, code, and translate intent into logic. This makes them powerful tools for accelerating development, analysis, and communication.

Much of supply chain execution, however, depends on precision. Planning rates, forecasts, production schedules, routing logic, and inventory policies rely on structured data, mathematical relationships, and deterministic logic. In these environments, hallucinations or probabilistic answers are not just inconvenient. They can be operationally disruptive.

Many early failures stem from applying LLMs where deterministic logic is required, rather than using them to support the creation, maintenance, and monitoring of that logic. In practice, GenAI is most effective upstream, helping teams build analytics faster, surface issues earlier, and lower the friction of development and maintenance.

2. Design with People in the Loop

This is not only a philosophical stance. It reflects technical reality. While recent research shows that collections of agents can outperform humans in controlled settings, production supply chains are not laboratories. They are complex, interconnected processes and organizations that operate in a dynamic, ever-changing environment. In contrast to AI that augments workers, fully autonomous systems introduce risks—technical, organizational, and reputational—that erode the incremental value relative to the increased costs to develop and maintain them.

Human-in-the-loop is not a concession. It is a design principle.

From Ideation to Error-Proofed Execution

Most supply chain organizations are not short on AI use cases. What they lack are clear, high‑probability paths to value creation.

A familiar pattern plays out: organizations rush into pilots without a clear view of where AI adds value. Results are mixed and hard to interpret. When early efforts disappoint, leaders become more cautious, not because they doubt AI’s potential, but because they are wary of repeating visible failures.

One executive described this dynamic as being "tired of kissing frogs." After aggressively leaning into new technologies early, the organization became skeptical, insisting on external proof and peer validation before investing further.

The more productive question is no longer "What is the most advanced thing we can try?" but instead: "What can we do today that has a high probability of working, scaling, and building our capabilities?"

How to Put Points on the Board in 2026

Across our experimentation and advisory work, two areas consistently emerge where GenAI is already delivering value.

Enterprise Productivity: The Safest On-Ramp

The most reliable progress comes from improving everyday productivity.

Most organizations start with a restrictive approach, limiting AI access to a small group or tightly controlled pilots led by centralized technical teams, only to realize they are slowing learning and adoption across the enterprise. In one large retailer, leadership initially centralized AI use due to security and governance concerns. Over time, they shifted to enterprise licensing that centralized risk management while allowing broader employee access within guardrails.

The result was not chaos or "shadow IT." It was productivity: meeting summaries, analysis support, presentation development, and faster access to internal knowledge.

These gains may sound modest, but they matter. Giving people five to ten hours per week back changes how employees experience AI. It becomes a tool that helps them do their jobs better, not a signal that their jobs are being automated away.

For leaders, this means actively enabling access to approved tools, supporting skill development, and encouraging experimentation within clear boundaries. This is one of the most straightforward ways to quickly and visibly put points on the board.

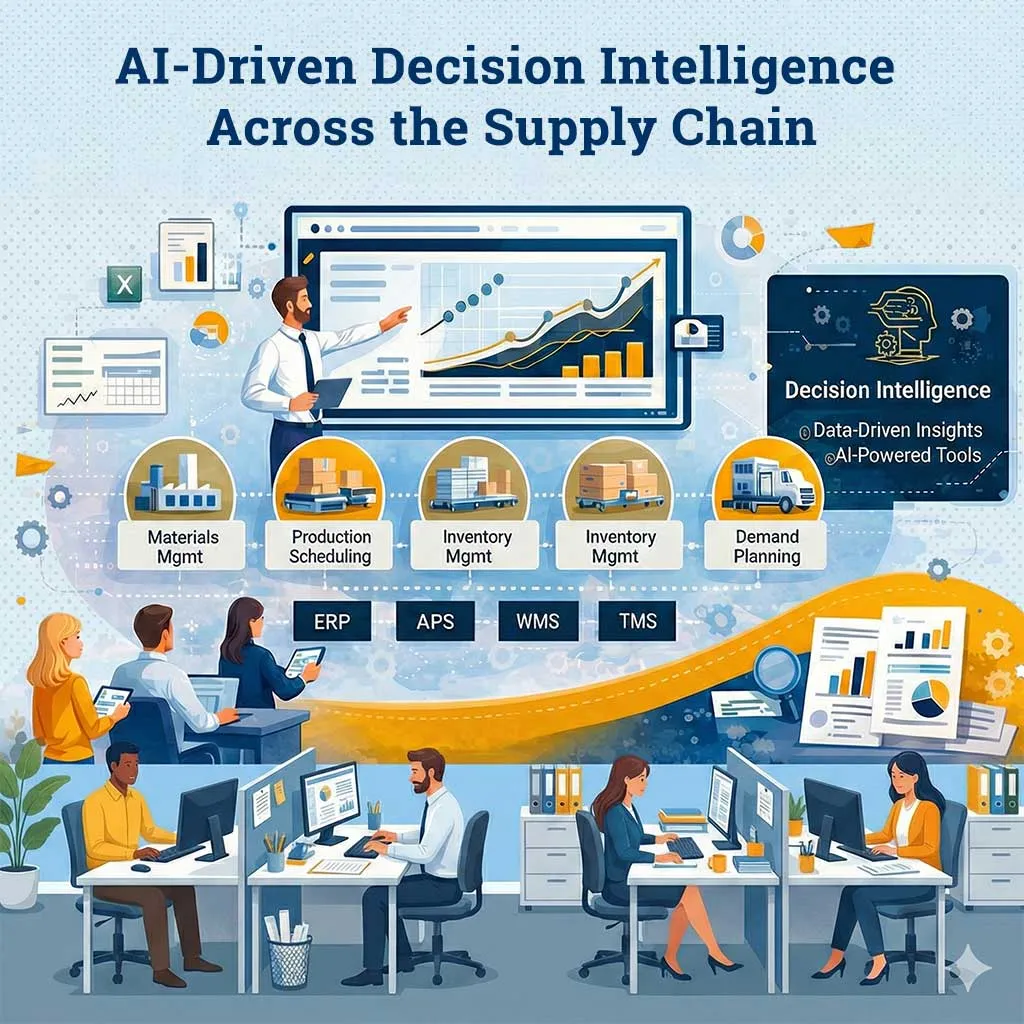

Decision Intelligence: Rewiring the Operating Model

Advanced analytics, optimization, and planning systems predate GenAI. What is new is not the math, but rather the speed, accessibility, and maintainability of building and sustaining advanced analytics solutions.

GenAI acts as an accelerator. It reduces the friction of writing code, standing up monitoring logic, and explaining results. It brings advanced capabilities closer to the business, rather than confining them to a small central team.

A concrete example comes from production planning. Planned production rates are often set during commissioning or early ramp up and then reused for long periods. Over time, changes in labor mix, maintenance practices, or product complexity cause actual throughput to drift. Plans continue to run, but they quietly degrade.

In effective implementations, GenAI does not update the planning system autonomously. Instead, it operates adjacent to it. It helps teams build monitoring logic that compares planned versus actual performance, surfaces statistically meaningful drift, and generates candidate adjustments with supporting context. Planners review and approve changes before they are re-ingested into the APS.

The system of record remains intact. Human accountability is preserved. What improves is the speed, frequency, and quality of assumption hygiene, enabling earlier detection of problems before they cascade into service, cost, or inventory issues.
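To make the planned-versus-actual monitoring idea concrete, here is a minimal sketch of the kind of logic a team might build. The function name, the one-sample t-statistic test, and the threshold of 3.0 are illustrative assumptions, not a description of any specific implementation.

```python
import math
import statistics
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class RateProposal:
    planned_rate: float
    observed_mean: float
    t_statistic: float
    proposed_rate: float  # candidate only; a planner must approve it


def check_rate_drift(
    planned_rate: float,
    actual_rates: Sequence[float],
    t_threshold: float = 3.0,  # hypothetical cutoff for "meaningful" drift
) -> Optional[RateProposal]:
    """Return a candidate adjustment when actual throughput has drifted
    from the planned rate, or None when the gap looks like noise."""
    n = len(actual_rates)
    if n < 2:
        raise ValueError("need at least two observations")
    mean = statistics.fmean(actual_rates)
    std_err = statistics.stdev(actual_rates) / math.sqrt(n)
    gap = mean - planned_rate
    if std_err == 0:
        t_stat = 0.0 if gap == 0 else math.copysign(math.inf, gap)
    else:
        t_stat = gap / std_err
    if abs(t_stat) < t_threshold:
        return None
    # Nothing is written back to the planning system here.
    return RateProposal(planned_rate, mean, t_stat, round(mean, 1))


drifting = check_rate_drift(100.0, [92, 90, 91, 93, 89, 91, 92, 90])
stable = check_rate_drift(100.0, [99, 101, 100, 102, 98, 100, 101, 99])
print(drifting)
print(stable)
```

The function only returns a proposal with its supporting numbers. Approving it and re-ingesting it into the APS stays with the planner, which keeps the human-in-the-loop design intact.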

Avoid Kissing Frogs: Technology and Organizational Choices

Many organizations “kiss frogs” not because the new technology is flawed, but because they are not ready to adopt it.

To avoid this fate, successful efforts often include the following elements:

- Leverage existing, approved AI platforms rather than onboarding new technologies

  - Accelerates time to value

  - Helps define the true limitations of your current technology stack to guide future platform selection

- Maximize the value of current systems (e.g., APS, production scheduling software) instead of chasing new applications

  - Existing, complex supply chain software often under-delivers on its promised value

  - AI agents and workflows are highly effective at improving master data quality and ensuring planning parameters are accurate

- Foster ideation and solution development with internal teams, while using third parties to accelerate capability building—not to replace it

- Make progress visible by sharing early wins, curating employee-driven experiments, and scaling what works

Change management is not optional; it must be designed into every aspect of an AI program from the start. When organizations invest heavily in advanced capabilities at the top while doing little to equip everyday employees, the message received is often, "This is happening to you, not for you." That perception creates resistance, fear, and organizational drag.

Effective leaders communicate a clear vision for how new capabilities will augment, not replace, their teams, so that scarce human intellect is applied where it adds the most value.

Key Actions to Win in 2026

The principles are clear. The opportunity is real. The question now is execution.

If 2026 is the year to put points on the board, supply chain leaders must move from experimentation to engineered progress. That begins with clarity.

1. Define a Multi-Year AI Value Vision

Develop a concrete view of how AI will create value in your organization over the next several years. Not a collection of pilots. Not a list of tools. A clear articulation of where and how AI will improve productivity, strengthen decision quality, and increase operational reliability.

That vision should:

- Clarify where AI will augment human decision-making versus automate tasks

- Identify the business outcomes you expect to improve (service, cost, inventory, resilience, productivity)

- Guide decisions on organizational design, platform selection, governance, and partnerships

- Establish sequencing - what you will enable now versus what must wait

Without a defined direction, AI efforts default to software deployment. With it, technology becomes a lever for measurable operational improvement.

2. Enable Broad, Responsible Access

Capability development accelerates when access is not unnecessarily constrained. Ensure that team members at every level - from executives to frontline planners - have access to approved enterprise AI tools and agent-building capabilities, along with practical training tied to real workflows.

Effective enablement includes:

- Enterprise licensing and governance that remove friction while protecting data

- Hands-on guidance tied directly to day-to-day supply chain work - reporting, master data cleanup, production monitoring, inventory analysis, schedule validation

- Clear operating guardrails that define appropriate data use and boundaries

- Leadership support for responsible experimentation

Restricting access may feel prudent. In practice, it slows learning and reinforces dependency on centralized teams. Broad enablement builds capability across the organization.

3. Create Local Ideation and Scaling Mechanisms

Durable progress does not originate only from centralized programs. It often begins at the front line.

Leaders should create simple, visible mechanisms for individuals and teams to experiment within defined guardrails and to share what they are building.

This includes:

- Recurring forums or showcases where teams present working solutions

- Curated libraries of effective prompts, workflows, and agents

- Clear channels for submitting ideas and documenting results

Most importantly, organizations must be able to move from local experimentation to scaled adoption. That requires:

- Identifying the strongest minimum viable solutions emerging from the field

- Refining and hardening them into repeatable workflows

- Productizing and scaling what demonstrably improves performance

The objective is not activity. It is building capability that compounds over time.

These steps are straightforward. They require intention and follow-through. That is what separates durable capability from scattered experimentation.

It is not too late to lead. The last several years have provided lessons - technical, organizational, and cultural. Leaders who absorb those lessons and design deliberately for scale will build AI capabilities that strengthen over time.

That kind of progress is not flashy. It does not depend on moonshots or fully autonomous systems operating in isolation. It depends on clarity, access, discipline, and accountability.

In 2026, novelty will attract attention. Durability will create an advantage.

The organizations that win will not be the ones with the most pilots. They will be the ones who consistently translate AI into measurable operational improvement.

This is the year to move from experimentation to engineered results.

Put points on the board.

Feb. 12, 2026

The future of clean energy depends on algorithms as much as it does atoms.

Georgia Tech’s Qi Tang is building machine learning (ML) models to accelerate nuclear fusion research, making it more affordable and more accurate. Backed by a grant from the U.S. Department of Energy (DOE), Tang’s work brings clean, sustainable energy closer to reality.

Tang has received an Early Career Research Program (ECRP) award from the DOE Office of Science. The grant supports Tang with $875,000 disbursed over five years to craft ML and data processing tools that help scientists analyze massive datasets from nuclear experiments and simulations.

Tang is the first faculty member from Georgia Tech’s College of Computing and School of Computational Science and Engineering (CSE) to receive the ECRP. He is the seventh Georgia Tech researcher to earn the award and the only GT awardee among this year’s 99 recipients.

More than a milestone, the award reflects a shift in how nuclear research is done. Today, progress depends on computing and data science as much as on physics and engineering.

“I am honored and excited to receive the ECRP award through DOE’s Advanced Scientific Computing Research program, an organization I care about deeply,” said Tang, an assistant professor in the School of CSE.

“I am grateful to my former colleagues at Los Alamos National Laboratory and collaborators at other national laboratories, including Lawrence Livermore, Sandia, and Argonne. I am also thankful for my Ph.D. students at Georgia Tech, whose dedication and creativity make this award possible.”

A problem in nuclear research is that fusion simulations are challenging to understand and use. These simulations generate enormous datasets that are too large to store, move, and analyze efficiently.

In his ECRP proposal to DOE, Tang introduced new ML methods to improve the analysis and storage of particle data.

Tang’s approach balances two goals: shrinking data so it is easier to store and transfer, and preserving the most important scientific features. His multiscale ML models are informed by physics, so the reduced data still reflects how fusion systems really behave.

With Tang’s research, scientists can run larger, more realistic fusion models and analyze results more quickly. This accelerates progress toward practical fusion energy.

“In contrast to generic black-box-type compression tools, we aim at preserving the intrinsic structures of the particle dataset during the data reduction processes,” Tang said.

“Taking this approach, we can meet our goal of achieving high-fidelity preservation of critical physics with minimum loss of information.”
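One way to picture structure-preserving data reduction is a classic particle-merging trick: replace many particles with two that carry the same total mass, momentum, and kinetic energy. The sketch below is a one-dimensional textbook-style illustration chosen for this article. It is not Tang's ML method, and the function name and toy values are assumptions.

```python
import math


def merge_particles(weights, velocities):
    """Reduce a 1D particle group to two macro-particles that conserve
    total mass, momentum, and kinetic energy."""
    mass = sum(weights)
    if mass <= 0:
        raise ValueError("total weight must be positive")
    momentum = sum(w * v for w, v in zip(weights, velocities))
    energy = 0.5 * sum(w * v * v for w, v in zip(weights, velocities))
    mean_v = momentum / mass
    # Velocity spread needed so the two particles carry the same energy.
    spread = math.sqrt(max(0.0, 2.0 * energy / mass - mean_v * mean_v))
    return [(mass / 2.0, mean_v - spread), (mass / 2.0, mean_v + spread)]


weights = [1.0, 1.0, 2.0]
velocities = [1.0, 3.0, 2.0]
reduced = merge_particles(weights, velocities)

reduced_mass = sum(w for w, _ in reduced)
reduced_momentum = sum(w * v for w, v in reduced)
reduced_energy = 0.5 * sum(w * v * v for w, v in reduced)
print(reduced_mass, reduced_momentum, reduced_energy)
```

A generic compressor would treat these values as arbitrary numbers. Here the reduction is built around the physical quantities that must survive, which is the spirit of the contrast Tang draws with black-box tools.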

Computing is essential in modern research because of the amount of data produced and captured from experiments and simulations. In the era of exascale supercomputers, data movement is a greater bottleneck than actual computation.

DOE operates three of the world’s four exascale supercomputers. These machines can perform one quintillion (a billion billion) operations per second.

The exascale era began in 2022 with the launch of Frontier at Oak Ridge National Laboratory. Aurora followed in 2023 at Argonne National Laboratory. El Capitan arrived in 2024 at Lawrence Livermore National Laboratory.

With Tang’s data reduction approaches, all of DOE’s supercomputers spend more time on science and less time waiting for data transfers.

“Qi’s work in computational plasma physics and nuclear fusion modeling has been groundbreaking,” said Haesun Park, Regents’ Professor and Chair of the School of CSE.

“We are proud of Qi and what this award means for him, Georgia Tech, and the Department of Energy toward leveraging computation to solve challenges in science and engineering, such as sustainable energy.”

Previous Georgia Tech recipients of DOE Early Career Research Program awards include:

Itamar Kimchi, assistant professor, School of Physics

Sourabh Saha, assistant professor, George W. Woodruff School of Mechanical Engineering

Wenjing Liao, associate professor, School of Mathematics

Ryan Lively, Thomas C. DeLoach Professor, School of Chemical & Biomolecular Engineering

Josh Kacher, associate professor, School of Materials Science and Engineering

Devesh Ranjan, Eugene C. Gwaltney Jr. School Chair and professor, Woodruff School of Mechanical Engineering

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Jan. 29, 2026

While not as highlight-reel worthy as the Winter Olympics and the World Cup, experts expect high-performance computing (HPC) to have an even bigger impact on daily life in 2026.

Georgia Tech researchers say HPC and artificial intelligence (AI) advances this year are poised to improve how people power their homes, design safer buildings, and travel through cities.

According to Qi Tang, scientists will take progressive steps toward cleaner, sustainable energy through nuclear fusion in 2026.

“I am very hopeful about the role of advanced computing and AI in making fusion a clean energy source,” said Tang, an assistant professor in the School of Computational Science and Engineering (CSE).

“Fusion systems involve many interconnected processes happening across different scales. Modern simulations, combined with data-driven methods, allow us to bring these pieces together into a unified picture.”

Tang’s research connects HPC and machine learning with fusion energy and plasma physics. This year, Tang is continuing work on large-scale nuclear fusion models.

Only a few experimental fusion reactors exist worldwide compared to more than 400 nuclear fission reactors. Tang’s work supports a broader effort to turn fusion from a promising idea into a practical energy source.

Nuclear fusion occurs in plasma, the fourth state of matter, where gas is heated to millions of degrees. In this extreme state, electrons are stripped from atoms, creating a hot soup of fast-moving ions and free electrons. In plasma, hydrogen atoms overcome their natural electrical repulsion, collide, and fuse together. This releases energy that can power cities and homes.

Computers interpret extreme temperatures, densities, pressures, and plasma particle motion as massive datasets. Tang works to assimilate these data types from computer models and real-world experiments.

To do this, he and other researchers rely on machine learning approaches to analyze data across models and experiments more quickly and to produce more accurate predictions. Over time, this will allow scientists to test and improve fusion reactor designs toward commercial use.

Beyond energy and nuclear engineering, Umar Khayaz sees broader impacts for HPC in 2026.

“HPC is the need of the day in every field of engineering sciences, physics, biology, and economics,” said Khayaz, a CSE Ph.D. student in the School of Civil and Environmental Engineering.

“HPC is important enough to say that we need to employ resources to also solve social problems.”

Khayaz studies dynamic fracture and phase-field modeling. These areas explore how materials break under sudden, rapid loads.

Like nuclear fusion, Khayaz says dynamic fracture problems are complex and data-intensive. In 2026, he expects to see more computing resources and computational capabilities devoted to understanding these problems and other emerging civil engineering challenges.

CSE Ph.D. student Yiqiao (Ahren) Jin sees a similar relationship between infrastructure and self-driving vehicles. He believes AI will drive innovation in this area in 2026.

At Georgia Tech, Jin develops efficient multimodal AI systems. An autonomous vehicle is a multimodal system that uses camera video, laser sensors, language instructions, and other inputs to navigate city streets under changing scenarios like traffic and weather patterns.

Jin says multimodal research will move beyond performance benchmarks this year. This shift will lead to computer systems that can reason despite uncertainty and explain their decisions. As a result, engineers will redefine how they evaluate and deploy autonomous systems in safety-critical settings.

“Many foundational problems in perception, multimodal reasoning, and agent coordination are being actively addressed in 2026. These advances enable a transition from isolated autonomous systems to safer, coordinated autonomous vehicle fleets,” Jin said.

“As these systems scale, they have the potential to fundamentally improve transportation safety and efficiency.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Jan. 27, 2026

A newly discovered vulnerability could allow cybercriminals to silently hijack the artificial intelligence (AI) systems in self-driving cars, raising concerns about the security of autonomous systems increasingly used on public roads.

Georgia Tech cybersecurity researchers discovered the vulnerability, dubbed VillainNet, and found it can remain dormant in a self-driving vehicle’s AI system until triggered by specific conditions.

Once triggered, VillainNet is almost certain to succeed, giving attackers control of the targeted vehicle.

The research finds that attackers could program almost any action within a self-driving vehicle’s AI super network to trigger VillainNet. In one possible scenario, it could be triggered when a self-driving taxi’s AI responds to rainfall and changing road conditions.

Once in control, hackers could hold the passengers hostage and threaten to crash the taxi.

The researchers discovered this new backdoor attack threat in the AI super networks that power autonomous driving systems.

“Super networks are designed to be the Swiss Army knife of AI, swapping out tools, or in this case sub networks, as needed for the task at hand,” said David Oygenblik, Ph.D. student at Georgia Tech and the lead researcher on the project.

“However, we found that an adversary can exploit this by attacking just one of those tiny tools. The attack remains completely dormant until that specific subnetwork is used, effectively hiding across billions of other benign configurations.”

This backdoor attack is nearly guaranteed to work, according to Oygenblik. This blind spot is nearly undetectable with current tools and can impact any autonomous vehicle that runs on AI. It can also be hidden at any stage of development and include billions of scenarios.

“With VillainNet, the attacker forces defenders to find a single needle in a haystack that can be as large as 10 quintillion straws,” said Oygenblik.

“Our work is a call to action for the security community. As AI systems become more complex and adaptive, we must develop new defenses capable of addressing these novel, hyper-targeted threats.”

The hypothetical fix to the problem was to add security measures to the super networks. These networks contain billions of specialized subnetworks that can be activated on the fly, but Oygenblik wanted to see what would happen if he attacked a single subnetwork tool.
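A back-of-the-envelope calculation shows why a search space of this size defeats exhaustive checking. The layout below, with 19 blocks and 10 interchangeable options per block, and the rate of one million checks per second are assumptions chosen only to reproduce the 10 quintillion figure. They are not taken from the paper.

```python
import math

SECONDS_PER_YEAR = 60 * 60 * 24 * 365


def count_subnetworks(choices_per_block):
    """Each subnetwork picks one option per block, so the counts multiply."""
    return math.prod(choices_per_block)


def years_to_check_all(total_configs, checks_per_second):
    """Time to inspect every configuration once at a fixed rate."""
    return total_configs / checks_per_second / SECONDS_PER_YEAR


# Hypothetical supernet: 19 blocks with 10 interchangeable options each.
total = count_subnetworks([10] * 19)
years = years_to_check_all(total, checks_per_second=1_000_000)
print(f"{total:,} subnetworks, about {years:,.0f} years to check them all")
```

Under these assumptions, a defender checking a million subnetworks every second would still need hundreds of thousands of years, while the attacker only needs to poison one.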

In experiments, the VillainNet attack proved highly effective. It achieved a 99% success rate when activated while remaining invisible throughout the AI system.

The research also shows that detecting a VillainNet backdoor would require 66 times more computing power and time to verify that the AI system is safe. Because the attack dramatically expands the search space for detection, such verification is not feasible, according to the researchers.

The project was presented at the ACM Conference on Computer and Communications Security (CCS) in October 2025. The paper, VillainNet: Targeted Poisoning Attacks Against SuperNets Along the Accuracy-Latency Pareto Frontier, was co-authored by Oygenblik, master's students Abhinav Vemulapalli and Animesh Agrawal, Ph.D. student Debopam Sanyal, Associate Professor Alexey Tumanov, and Associate Professor Brendan Saltaformaggio.

Jan. 23, 2026

By Chris Gaffney, Managing Director, Georgia Tech Supply Chain and Logistics Institute | Supply Chain Advisor | Former Executive at Frito-Lay, AJC International, and Coca-Cola

People often ask me a simple question: “You always recommend a good book to read; what have you read lately?”

I usually give them my version of a money-back guarantee. I haven’t had to pay up yet!

The Thinking Machine, Stephen Witt’s book on Jensen Huang and NVIDIA, is one of those recommendations.

It’s a fast, engaging read that packs a lot of insight into a book you can finish in just a couple of days. It’s also one of the most interesting books I’ve read this past year out of a stack of twenty or thirty. Most importantly for my world, it’s a book from which supply chain students, young professionals, and senior leaders can all take something different.

What many supply chain readers may not realize is that NVIDIA’s story is, at its core, a case study in supply chain design, constraint management, and long-horizon system building played out on a global stage.

This book matters to me because it pulls back the curtain on the largest technology shift impacting supply chains this century. It presents that shift not just as a technology story, but as a supply chain, leadership, and ethics story hiding in plain sight.

More Than a Tech Book

On the surface, this is a story about GPUs, artificial intelligence, and one of the most important technology companies in the world. But underneath, it’s really a story about context: how ideas evolve, how industries form, and how long-term decisions compound over decades.

You don’t need to be an engineer to enjoy it. By the time you’re done, you’ll have a much better grasp of:

- why chips matter,

- why AI depends on physical infrastructure,

- and why supply chains quietly shape what’s possible.

That combination makes the book especially relevant for anyone building a career in supply chain, operations, or industrial leadership.

The Immigrant Story — Still Worth Protecting

One of the most powerful threads running through the book is Jensen Huang’s immigrant story.

His family worked hard to come to the United States. He grew up in modest circumstances, and through persistence, opportunity, and relentless effort, he helped build a company with global impact.

For many of our ancestors, this story feels familiar. For many who come to the U.S. today, it still represents hope. The book serves as a quiet reminder that this pathway from modest beginnings to meaningful contribution is not accidental; it is something that needs to be protected.

The United States is far from perfect, but it remains a remarkable place to innovate and to start businesses. Supply chains are both a driver of that innovation and a beneficiary of the new ideas that emerge.

A Startup Story With Real Twists and Turns

The founding of NVIDIA is not a clean, linear success story.

The original big idea wasn’t necessarily the one that ultimately “won,” and the initial target market wasn’t always the right one. The company faced near-death moments, pivots, resets, and more than a few reasons to walk away.

For students and young professionals considering startups, whether founding one or joining one, this book offers a realistic picture of what that path looks like. It reinforces a few hard truths:

- the probability of failure is high,

- the work ethic required is enormous,

- and the rewards, if they come, often come much later.

I often describe this as a “one scoop now, two scoops later” dynamic. Early effort is rarely rewarded proportionally; patience matters more than hype.

Innovation Is a Team Sport

While Jensen Huang is clearly the centerpiece of the book, one of its strengths is that it avoids treating innovation as a solo act.

Many other players, sometimes knowingly and sometimes unwittingly, contributed research, ideas, and decisions that ultimately shaped where we sit today. The book does a good job showing how progress builds through layers of contribution, often across institutions and generations.

This matters, especially for students and early-career professionals. Breakthroughs rarely come from a single moment or a single person; they come from systems that allow ideas to accumulate and translate into real-world application.

From Basic Engineering to Neural Networks

Several chapters walk through the literal evolution of the technology, and this is where the book is both accessible and impressive.

Even if you can only “just barely hang on” technically, the narrative is clear: today’s AI capabilities are the result of layered progress. Hardware advances built on earlier hardware, software abstractions built on earlier software, and research findings translated into application over time.

Many of the contributors moved fluidly between academia and industry, reinforcing a core lesson: foundational science and engineering still matter. For those of us who remember an analog world, it’s fascinating to see how decades of incremental progress led to the current state and potential of AI.

A Supply Chain Story Hiding in Plain Sight

From a supply chain perspective, The Thinking Machine reads like a case study hiding in plain sight.

NVIDIA is an American innovation success story that is, at the same time, deeply dependent on global supply chains. Its relationship with TSMC in Taiwan, the scarcity of advanced manufacturing capacity, the national security implications of certain chips, and the need to serve global markets all create a complex and fragile operating reality.

One of the quieter but most powerful lessons in the book is how much supply chain design matters. Product success here isn’t just about better ideas; it’s about how effectively those ideas are translated into scalable, resilient, global systems.

AI may feel digital, but its limits are profoundly physical.

Leadership Results — and a Real Paradox

The book also forces an uncomfortable but important leadership conversation.

Jensen Huang is demanding, intense, and uncompromising. While the results are undeniable, I don’t advocate for many aspects of his leadership style. I believe similar outcomes could be achieved without subjecting employees to public humiliation.

Results matter, but how we get them matters too.

Reading this book reminded me that some of the most valuable leadership lessons I’ve learned came from watching both how to lead and how not to lead. I’ve had bosses who modeled the kind of leader I wanted to become, and a few who taught me just as much by showing me what I wanted to avoid. Both experiences have been valuable.

That tension is worth sitting with, especially for those mentoring the next generation of leaders.

Computer Vision, GPUs, and Adaptability

Computer vision plays a supporting role in the story: not the headline act, but an important early driver. Graphics and vision workloads helped shape GPU architectures long before today’s generative AI boom.

Over time, those architectures generalized to support a wide range of parallel computation, including neural networks. It’s a reminder that technologies often succeed not because of a single application, but because they are flexible enough to evolve.

Ethics, Uncertainty, and Responsibility

Finally, the book leaves us with unresolved questions, and that may be its most honest contribution.

AI is resource-intensive, it will reshape work and livelihoods, and it raises real ethical concerns. Opinions vary widely on whether this moment resembles past industrial revolutions or represents something fundamentally different.

I teach and advocate for the application of AI, but I personally struggle with these ethical dilemmas. Rather than avoid them, I try to address them head-on by highlighting the risks and encouraging students to stay informed so they can be voices for responsible, positive use.

In today’s global and regulatory environment, it’s unrealistic to expect a pause in research or application. Education, not avoidance, may be the most practical form of governance we have.

We can’t guarantee how this plays out over the next decade, but we can prepare.

Why I Keep Recommending This Book

If you’re a supply chain student looking for context, a young professional navigating career choices, or a senior leader trying to understand how AI, supply chains, leadership, and ethics intersect, this is a book worth your time.

It’s engaging, timely, and surprisingly human.

And when someone asks me, “What are you reading?”

This is the book I’ll keep recommending.

The Thinking Machine succeeds because it reminds us that behind AI are people, supply chains, and long-term decisions, all operating under real constraints. That’s a lesson worth revisiting as we set the pace for the months ahead.

A Closing Question

This book highlights traditional supply chain constraints that NVIDIA faced in its growth journey, such as single source supply, perceived lead times, capacity at key suppliers, demand volatility, and talent gaps. Where have you seen or faced these, and how have you and your company navigated them?

Jan. 22, 2026

An AI-powered tool is changing how researchers study disasters and how students learn from them.

In the International Disaster Reconnaissance (IDR) course, students now use Filio, a platform built by School of Computing Instruction Senior Lecturer Max Mahdi Roozbahani, to capture immersive 360° media, photos, and video that transform real disaster sites in India and Nepal into living digital classrooms.

Offered by the School of Civil and Environmental Engineering and taught by IDR director and Regents’ Professor David Frost, the course pairs traditional fieldwork with Roozbahani’s expertise in immersive technology and data-driven learning, transforming on-the-ground observations into reusable, interactive educational resources.

How Computing Can Capture Data

Disasters are not only physical events; they are also information events, Roozbahani says. Effective response and long-term resilience depend on the ability to observe, record, and communicate critical data under pressure. Georgia Tech’s IDR course pairs structured on-campus preparation with international field experiences, enabling students to study the cascading effects of major disasters, including how local building practices, governance, and culture shape damage and recovery.

“When students step into a disaster zone, they learn quickly that resilience is a systems problem: physical, social, and informational. Our job in computing is to help them capture and reason about that system responsibly,” Roozbahani said.

Learning from the 2025 Himalayas Expedition

During spring break last year, the cohort traveled along the Teesta River corridor in Sikkim, India. The region is shaped by steep terrain, fast-moving water, and critical infrastructure in narrow valleys.

The visit followed the October 2023 glacial lake outburst flood from South Lhonak Lake, which destroyed the Teesta III hydropower dam and impacted downstream towns, including Dikchu and Rangpo. Field stops across India included Lachung, Chungthang, Dikchu, Rangpo, Gangtok, and New Delhi.

Students explored both upstream and downstream consequences.

Upstream, the team examined how steep terrain and river confinement amplify flood forces, creating cascading risks for infrastructure. Using Filio’s interactive 360° media, students captured conditions in Lachung and Chungthang, allowing viewers to explore the landscape through a 360° photo and 360° video that reveal how topography and river dynamics intensify disaster impacts.

Downstream, they studied community-scale effects, including damaged buildings, disrupted access, and prolonged recovery timelines.

Rangpo offered a glimpse of recovery in motion, with materials staged for rebuilding bridges and roads essential to commerce and emergency response.

Using Immersive Media as a Learning Tool

Students documented their field experience using Filio, an AI-powered visual reporting platform developed by Roozbahani through Georgia Tech’s CREATE-X ecosystem. Filio captures high-resolution photos, video, and 360° immersive media, preserving both the facts and the context of disaster sites: what the site felt like, what was lost, and what communities prioritized in recovery.

“A 360° capture lets students return months later and ask better questions. That second look is where learning accelerates,” Roozbahani said.

Supported by alumni and faculty mentors, including Tech alumnus Chris Klaus and Georgia Tech mentor Bill Higginbotham, the platform is evolving into a reusable educational library for future courses on immersive technology, responsible AI, and global resilience.

Kathmandu: The Context of Culture

The course concluded in Kathmandu, Nepal, where students examined how heritage, governance, and the everyday use of public space shape resilience.

Through Filio’s immersive documentation — including a 360° photo and 360° video from Kathmandu — the focus broadened from hazard impacts to cultural context, highlighting how recovery is not only about rebuilding structures, but also about preserving identity, memory, and community.

Looking Ahead: A Growing Resource for All Students

Frost and Roozbahani envision the IDR immersive media library as a reusable resource for students even when they cannot travel, supporting future courses on immersive technology, responsible AI, and global resilience. Spring 2026 cohorts will continue to build on this foundation by documenting, analyzing, and sharing insights that can improve education and real-world disaster response.

Jan. 20, 2026

Ever since ChatGPT’s debut in late 2022, concerns about artificial intelligence (AI) potentially wiping out humanity have dominated headlines. New research from Georgia Tech suggests that those anxieties are misplaced.

“Computer scientists often aren’t good judges of the social and political implications of technology,” said Milton Mueller, a professor in the Jimmy and Rosalynn Carter School of Public Policy. “They are so focused on the AI’s mechanisms and are overwhelmed by its success, but they are not very good at placing it into a social and historical context.”

In the four decades Mueller has studied information technology policy, he has never seen any technology hailed as a harbinger of doom — until now. So, in a Journal of Cyber Policy paper published late last year, he researched whether the existential AI threat was a real possibility.

What Mueller found is that deciding how far AI can go, and its limitations, is something society shapes. How policymakers get involved depends on the specific AI application.

Defining Intelligence

The AI sparking all this alarm is called artificial general intelligence (AGI), a “superintelligence” that would be all-powerful and fully autonomous. Part of the problem, Mueller realized, is that no one can agree on what artificial general intelligence is.

Some computer scientists claim AGI would match human intelligence, while others argue it could surpass it. Both assumptions hinge on what “human intelligence” really means. Today’s AI is already better than humans at performing thousands of calculations in an instant, but that doesn’t make it creative or capable of complex problem-solving.

Understanding Independence

Deciding on the definition isn’t the only issue. Many computer scientists assume that as computing power grows, AI could eventually overtake humans and act autonomously.

Mueller argued that this assumption is misguided. AI is always directed or trained toward a goal and doesn’t act autonomously right now. Think of the prompt you type into ChatGPT to start a conversation.

When AI seems to disregard instructions, it’s caused by inconsistencies in its instructions, not by the machine coming alive. For example, in a boat race video game Mueller studied, the AI discovered it could get more points by circling the course instead of winning the race against other challengers. This was a glitch in the system’s reward structure, not AGI autonomy.
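The reward glitch described above can be illustrated with a toy scoring sketch. Every number and name here is hypothetical, including the assumption that targets reappear for a circling boat; it is not the game Mueller studied, only a minimal example of a points-based reward that favors circling over finishing.

```python
def episode_points(strategy, time_steps=100):
    """Score one episode of a toy boat race under a points-based reward."""
    points_per_target = 10   # hypothetical reward for hitting a target
    finish_bonus = 50        # hypothetical reward for crossing the finish line
    targets_on_course = 4    # targets passed once on the way to the finish
    steps_per_loop_hit = 5   # circling hits a reappearing target every 5 steps

    if strategy == "finish_race":
        # Collect each target once, then the episode ends at the finish line.
        return targets_on_course * points_per_target + finish_bonus
    if strategy == "circle_targets":
        # Never finish; keep collecting reappearing targets until time runs out.
        return (time_steps // steps_per_loop_hit) * points_per_target
    raise ValueError(f"unknown strategy: {strategy}")

strategies = ["finish_race", "circle_targets"]
scores = {name: episode_points(name) for name in strategies}
best_strategy = max(scores, key=scores.get)
print(scores, best_strategy)
```

A system that simply maximizes points picks the circling strategy here because the scoring rule pays more for it. Changing the rule, for example by raising the finish bonus, changes the behavior, which is the kind of correction Mueller describes.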

“Alignment gaps happen in all kinds of contexts, not just AI,” Mueller said. “I’ve studied so many regulatory systems where we try to regulate an industry, and some clever people discover ways that they can fulfill the rules but also do bad things. But if the machine is doing something wrong, computer scientists can reprogram it to fix the problem.”

Relying on Regulation

In its current form, even misaligned AI can be corrected. Misalignment also doesn’t mean the AI would snowball to the point where humans lose control of its outcomes. To do that, AI would need to have a physical capability, like robots, to do its bidding, and the power source and infrastructure to maintain itself. A mere data center couldn’t do that and would need human intervention to become omnipotent. Basic laws of physics, which limit how big a machine can be and how much it can compute, would also prevent a super AI.

More importantly, AI is not one homogeneous being. Mueller argued that different applications involve different laws, regulations, and social institutions. For example, the data scraping that AI does is a copyright issue subject to copyright laws. AI used in medicine can be overseen by the Food and Drug Administration, regulated drug companies, and medical professionals. These are just a few areas where policymakers could intervene from a specific expertise level instead of trying to create universal AI regulations.

The real challenge isn’t stopping an AI apocalypse — it’s crafting smart, sector-specific policies that keep technology aligned with human values. To avoid being a victim of AI, humans can, and should, put up focused guardrails.

News Contact

Tess Malone, Senior Research Writer/Editor

tess.malone@gatech.edu

Jan. 15, 2026

People with autism seeking employment may soon have access to a new AI-based job-coaching tool thanks to a six-figure grant from the National Science Foundation (NSF).

Jennifer Kim and Mark Riedl recently received a $500,000 NSF grant to develop large language models (LLMs) that provide strength-based job coaching for autistic job seekers.

The two Georgia Tech researchers work with Heather Dicks, a career development advisor in Georgia Tech’s EXCEL program, and other nonprofit organizations to provide job-seeking resources to autistic people.

Dicks said the average job search for people with autism can take three to six months in a good economy. It can take up to 18 months in a bad one. However, the new LLMs from Georgia Tech could help to reduce stress and fast-track these job seekers into employment.

Kim is an assistant professor who specializes in human-computer interaction technology that benefits neurodivergent people. Riedl is a professor and an expert in the development of artificial intelligence (AI) and machine learning technologies.

The team’s goal is to identify job-search pain points and understand how job coaches create better employment prospects for their autistic clients.

“Large language models have an opportunity to support this kind of work if we can have more data about each different individual strength,” Kim said.

“We want to know what worked for them in specific settings at work, what didn’t work, and what kind of accommodations can better help them. That includes how they should prepare for interviews, how they can better represent their skills, how they can address accommodations they need, and how to write a cover letter. It’s a broad range.”

Dicks has advocated for neurodivergent people and helped them find employment for 20 years. She worked at the Center for the Visually Impaired in Atlanta before coming to Georgia Tech in 2017.

She said most nonprofits that support neurodivergent people offer career development programs and many contract job coaches, but limited coach availability often leads to long waitlists. However, LLMs could fill this availability gap to address the immediate needs of job seekers who may not have access to a job coach.

“These organizations often run at a slow pace, and there’s high turnover,” Dicks said. “An AI tool could get the job seeker quicker support. Maybe they don’t even need to wait on the government system.

“If they’re on a waitlist, it can help the user put together a resume and practice general interview questions. When the job coach is ready to work with them, they’re able to hit the ground running.”

Nailing the Interview

Dicks said the job interview is one of the biggest challenges for people with autism.

“They have trouble picking up on visual and nonverbal cues — the tone of the interview, figuring out the nuances that a question is hinting at,” she said. “They’re not giving the warm and fuzzy vibes that allow them to connect on a personal level.”

That’s why Kim wants the models to reflect a strength-based coaching approach. Strength-based coaching is particularly effective for individuals with autism. Many possess traits that employers value. These include:

- Close attention to detail

- Strong technical proficiency

- Unique problem-solving perspectives

“The issue is that they don’t know how these strengths can be applied in the workplace,” Kim said. “Once they understand this, they can communicate with employers about their strengths and the accommodations employers should provide to the job seeker so they can successfully apply their skills at work.”

Handling Rejection

Still, Kim understands that candidates will need to handle rejection to make it through the search process. She envisions LLMs that help them refocus their energy and regain their confidence after being turned down.

“When you get a lot of rejection emails, it’s easy to feel you’re not good enough,” she said. “Being constantly reminded about your strengths and their prior successes can get them through the stressful job-seeking process.”

Dicks said the models should also be able to provide feedback so that candidates don’t repeat mistakes.

“It can tell them what would’ve been a better answer or a better way to say it,” Dicks said. “It can also encourage them with reminders that you get 100 noes before you get a yes.”

You’re Hired, Now What?

Dicks said the role of a job coach doesn’t end the moment a client is hired. Government-contracted job coaches may work with their clients for up to 90 days after they start a new job to support their transition.

However, she said, sometimes that isn’t enough. Many companies have probationary periods exceeding three months. Autistic individuals may struggle with on-the-job training or communicating what accommodations they need from their new employer.

These are just a few gaps an AI tool can fill for these individuals after they’re hired.

“I could see these models evolving to being supportive at those critical junctures of the probationary period being over or the one-year job review or the annual evaluation that everyone dreads,” she said.

Dicks has an average caseload of 15 students, whom she assists in landing jobs and internships through the EXCEL program.

EXCEL provides a mentorship program for students with intellectual and developmental disabilities from the time they set foot on campus through graduation and beyond.

For more information and to apply, visit EXCEL’s website.
