Jan. 27, 2026

A newly discovered vulnerability could allow cybercriminals to silently hijack the artificial intelligence (AI) systems in self-driving cars, raising concerns about the security of autonomous systems increasingly used on public roads.

Georgia Tech cybersecurity researchers discovered the vulnerability, dubbed VillainNet, and found it can remain dormant in a self-driving vehicle’s AI system until triggered by specific conditions.

Once triggered, VillainNet is almost certain to succeed, giving attackers control of the targeted vehicle.

The research finds that attackers could program almost any action within a self-driving vehicle’s AI super network to trigger VillainNet. In one possible scenario, it could be triggered when a self-driving taxi’s AI responds to rainfall and changing road conditions.

Once in control, hackers could hold the passengers hostage and threaten to crash the taxi.

The researchers discovered this new backdoor attack threat in the AI super networks that power autonomous driving systems.

“Super networks are designed to be the Swiss Army knife of AI, swapping out tools, or in this case subnetworks, as needed for the task at hand,” said David Oygenblik, Ph.D. student at Georgia Tech and the lead researcher on the project.

“However, we found that an adversary can exploit this by attacking just one of those tiny tools. The attack remains completely dormant until that specific subnetwork is used, effectively hiding across billions of other benign configurations.”

This backdoor attack is nearly guaranteed to work, according to Oygenblik. This blind spot is nearly undetectable with current tools and can impact any autonomous vehicle that runs on AI. It can also be hidden at any stage of development and include billions of scenarios.

“With VillainNet, the attacker forces defenders to find a single needle in a haystack that can be as large as 10 quintillion straws,” said Oygenblik.

“Our work is a call to action for the security community. As AI systems become more complex and adaptive, we must develop new defenses capable of addressing these novel, hyper-targeted threats.”

The hypothetical fix to the problem was to add security measures to the super networks. These networks contain billions of specialized subnetworks that can be activated on the fly, but Oygenblik wanted to see what would happen if he attacked a single subnetwork tool.

In experiments, the VillainNet attack proved highly effective. It achieved a 99% success rate when activated while remaining invisible throughout the AI system.

The research also shows that verifying an AI system is free of a VillainNet backdoor would require 66 times more computing power and time. That cost dramatically expands the search space for attack detection and makes verification infeasible, according to the researchers.
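To give a sense of scale for the haystack Oygenblik describes, the following back-of-the-envelope sketch estimates how long an exhaustive check of 10 quintillion subnetwork configurations would take. The checking rate of one million configurations per second is a purely hypothetical assumption, not a figure from the research, and the function name is illustrative.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def years_to_check_all(num_configs, checks_per_second):
    """Return the time, in years, to test every configuration once at a fixed rate."""
    if checks_per_second <= 0:
        raise ValueError("checks_per_second must be positive")
    return num_configs / checks_per_second / SECONDS_PER_YEAR


# 10 quintillion (10**19) is the haystack size quoted in the article.
HAYSTACK = 10 ** 19
# Hypothetical defender throughput, chosen only for illustration.
ASSUMED_RATE = 1_000_000

if __name__ == "__main__":
    years = years_to_check_all(HAYSTACK, ASSUMED_RATE)
    print(f"Exhaustive check would take about {years:,.0f} years")
```

Even under that generous assumption, the estimate runs to hundreds of thousands of years, which is why brute-force inspection of every configuration is not a practical defense.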

The project was presented at the ACM Conference on Computer and Communications Security (CCS) in October 2025. The paper, VillainNet: Targeted Poisoning Attacks Against SuperNets Along the Accuracy-Latency Pareto Frontier, was co-authored by Oygenblik, master's students Abhinav Vemulapalli and Animesh Agrawal, Ph.D. student Debopam Sanyal, Associate Professor Alexey Tumanov, and Associate Professor Brendan Saltaformaggio.

Jan. 15, 2026

People with autism seeking employment may soon have access to a new AI-based job-coaching tool thanks to a six-figure grant from the National Science Foundation (NSF).

Jennifer Kim and Mark Riedl recently received a $500,000 NSF grant to develop large language models (LLMs) that provide strength-based job coaching for autistic job seekers.

The two Georgia Tech researchers work with Heather Dicks, a career development advisor in Georgia Tech’s EXCEL program, and other nonprofit organizations to provide job-seeking resources to autistic people.

Dicks said the average job search for people with autism can take three to six months in a good economy. It can take up to 18 months in a bad one. However, the new LLMs from Georgia Tech could help to reduce stress and fast-track these job seekers into employment.

Kim is an assistant professor who specializes in human-computer interaction technology that benefits neurodivergent people. Riedl is a professor and an expert in the development of artificial intelligence (AI) and machine learning technologies.

The team’s goal is to identify job-search pain points and understand how job coaches create better employment prospects for their autistic clients.

“Large-language models have an opportunity to support this kind of work if we can have more data about each different individual strength,” Kim said.

“We want to know what worked for them in specific settings at work, what didn’t work, and what kind of accommodations can better help them. That includes how they should prepare for interviews, how they can better represent their skills, how they can address accommodations they need, and how to write a cover letter. It’s a broad range.”

Dicks has advocated for neurodivergent people and helped them find employment for 20 years. She worked at the Center for the Visually Impaired in Atlanta before coming to Georgia Tech in 2017.

She said most nonprofits that support neurodivergent people offer career development programs and many contract job coaches, but limited coach availability often leads to long waitlists. However, LLMs could fill this availability gap to address the immediate needs of job seekers who may not have access to a job coach.

“These organizations often run at a slow pace, and there’s high turnover,” Dicks said. “An AI tool could get the job seeker quicker support. Maybe they don’t even need to wait on the government system.

“If they’re on a waitlist, it can help the user put together a resume and practice general interview questions. When the job coach is ready to work with them, they’re able to hit the ground running.”

Nailing the Interview

Dicks said the job interview is one of the biggest challenges for people with autism.

“They have trouble picking up on visual and nonverbal cues — the tone of the interview, figuring out the nuances that a question is hinting at,” she said. “They’re not giving the warm and fuzzy vibes that allow them to connect on a personal level.”

That’s why Kim wants the models to reflect a strength-based coaching approach. Strength-based coaching is particularly effective for individuals with autism. Many possess traits that employers value. These include:

- Close attention to detail

- Strong technical proficiency

- Unique problem-solving perspectives

“The issue is that they don’t know how these strengths can be applied in the workplace,” Kim said. “Once they understand this, they can communicate with employers about their strengths and the accommodations employers should provide to the job seeker so they can successfully apply their skills at work.”

Handling Rejection

Still, Kim understands that candidates will need to handle rejection to make it through the search process. She envisions LLMs that help them refocus their energy and regain their confidence after being turned down.

“When you get a lot of rejection emails, it’s easy to feel you’re not good enough,” she said. “Being constantly reminded about your strengths and their prior successes can get them through the stressful job-seeking process.”

Dicks said the models should also be able to provide feedback so that candidates don’t repeat mistakes.

“It can tell them what would’ve been a better answer or a better way to say it,” Dicks said. “It can also encourage them with reminders that you get 100 noes before you get a yes.”

You’re Hired, Now What?

Dicks said the role of a job coach doesn’t end the moment a client is hired. Government-contracted job coaches may work with their clients for up to 90 days after they start a new job to support their transition.

However, she said, sometimes that isn’t enough. Many companies have probationary periods exceeding three months. Autistic individuals may struggle with on-the-job training or communicating what accommodations they need from their new employer.

These are just a few gaps an AI tool can fill for these individuals after they’re hired.

“I could see these models evolving to being supportive at those critical junctures of the probationary period being over or the one-year job review or the annual evaluation that everyone dreads,” she said.

Dicks has an average caseload of 15 students, whom she assists in landing jobs and internships through the EXCEL program.

EXCEL provides a mentorship program for students with intellectual and developmental disabilities from the time they set foot on campus through graduation and beyond.

For more information and to apply, visit EXCEL’s website.

Dec. 10, 2025

Pascal Van Hentenryck, A. Russell Chandler III Chair and Professor in the H. Milton Stewart School of Industrial and Systems Engineering (ISyE) at Georgia Tech, director of Tech AI, and director of NSF AI4OPT, was a keynote speaker at AI Festival 2025, held December 1–3 at TU Wien Informatics in Vienna, Austria.

The three-day international festival convened leading researchers, industry experts, and members of the public to explore how artificial intelligence is shaping science, technology, and society. Through keynote talks, panels, and interactive sessions, the event fostered dialogue around emerging AI research, real-world applications, and societal impact.

Van Hentenryck delivered a keynote on “AI for Engineering Optimization” during Day 1: Research, which focused on recent advances in foundational and applied AI. His talk highlighted how AI and optimization methods can be integrated to address complex engineering challenges, with implications for domains such as energy systems, mobility, and large-scale decision-making.

The session was chaired by Nysret Musliu of TU Wien and the Cluster of Excellence Bilateral AI (BilAI).

The research-focused first day of the festival featured discussions on topics including neurosymbolic AI, large language models, explainable AI, AI in science, and automated problem solving and decision-making. Van Hentenryck’s keynote contributed to these conversations by emphasizing the role of AI-driven optimization in advancing engineering design and operational efficiency.

AI Festival 2025 was co-organized by TU Wien, the Center for Artificial Intelligence and Machine Learning (CAIML), BilAI—funded by the Austrian Science Fund (FWF)—the Vienna Science and Technology Fund (WWTF), and TU Austria. The event underscored the importance of international collaboration across academia and industry in advancing responsible and impactful AI research.

Van Hentenryck’s participation reflects Georgia Tech’s leadership in artificial intelligence, as well as the missions of Tech AI and AI4OPT to advance AI-enabled optimization and decision-making for complex, real-world systems.

Dec. 16, 2025

Supply chain management is poised to enter a new era. The Harvard Business Review has published a groundbreaking article co-authored by Andre Calmon, associate professor of operations management, alongside Flavio Calmon, Harvard University; Carol Long, Harvard University; and David Simchi-Levi, Massachusetts Institute of Technology. “The Age of Autonomous Supply Chains Has Arrived” explores how generative AI is transforming supply chain management from automated systems to truly autonomous operations.

Based on data collected at the Scheller College of Business, Calmon’s research demonstrates how AI models like Llama 4 Maverick 17B—equipped with optimized prompts, data-sharing rules, and guardrails—can outperform human teams in managing complex supply chains. Using the classic MIT Beer Distribution Game as a testbed, the authors benchmarked AI agents against more than 100 Georgia Tech students. The results were striking: AI-driven systems reduced total supply chain costs by up to 67% compared to human performance.
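As a rough illustration of what "total supply chain costs" means in a Beer Game setting, the sketch below tallies weekly holding and backlog charges for one player and compares two runs. The per-unit costs (0.50 to hold, 1.00 for backlog) are commonly used classroom values and are assumed here; the inventory traces and function names are hypothetical and are not taken from the study.

```python
def total_cost(inventory_by_week, holding_cost=0.5, backlog_cost=1.0):
    """Sum weekly costs; a negative inventory level is treated as backlog."""
    cost = 0.0
    for level in inventory_by_week:
        if level >= 0:
            cost += level * holding_cost
        else:
            cost += -level * backlog_cost
    return cost


def percent_reduction(baseline, improved):
    """Percentage by which `improved` is lower than `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - improved) / baseline * 100.0


# Hypothetical end-of-week inventory levels for two runs of the same game.
human_run = [12, 4, -6, -10, 8, 20]
agent_run = [6, 2, -1, 0, 3, 4]

if __name__ == "__main__":
    human_cost = total_cost(human_run)
    agent_cost = total_cost(agent_run)
    print(f"Human cost: {human_cost:.2f}, agent cost: {agent_cost:.2f}")
    print(f"Reduction: {percent_reduction(human_cost, agent_cost):.1f}%")
```

The numbers here are invented to show the accounting only; the study's reported figure of up to 67% comes from its own benchmark against student teams.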

Traditional automated systems rely on rigid, human-designed rules. Calmon and his co-authors employed autonomous agents that learn, adapt, and coordinate across functions in real time. The study highlights four critical factors for success: selecting capable reasoning models, implementing guardrails to prevent costly errors, curating data through orchestration, and refining prompts for optimal performance.

“This breakthrough positions the Scheller College of Business as a thought leader at the intersection of AI and supply chain innovation,” said Calmon. “World-class supply chain management is becoming a plug-and-play capability. Businesses that understand how to guide generative AI agents with the right data and policies will gain a decisive competitive edge.”

The implications extend beyond cost savings. By delegating operational decisions to autonomous systems, human managers can focus on strategic priorities such as network design and supplier relationships. In an era of global volatility, this research emphasizes how future supply chain success depends on the strategic use of AI-driven technology.

News Contact

Kristin Lowe (She/Her)

Content Strategist

Georgia Institute of Technology | Scheller College of Business

kristin.lowe@scheller.gatech.edu

Nov. 18, 2025

Viral videos of humanoid robots performing amazing feats of acrobatics and dance abound, but videos of a humanoid robot performing a common household task or easily traversing a new multi-terrain environment without human control are much rarer. This is because training humanoid robots to perform these seemingly simple functions relies on simulation training data, and such data lack the complex dynamics and degrees of freedom of motion that are inherent in humanoid robots.

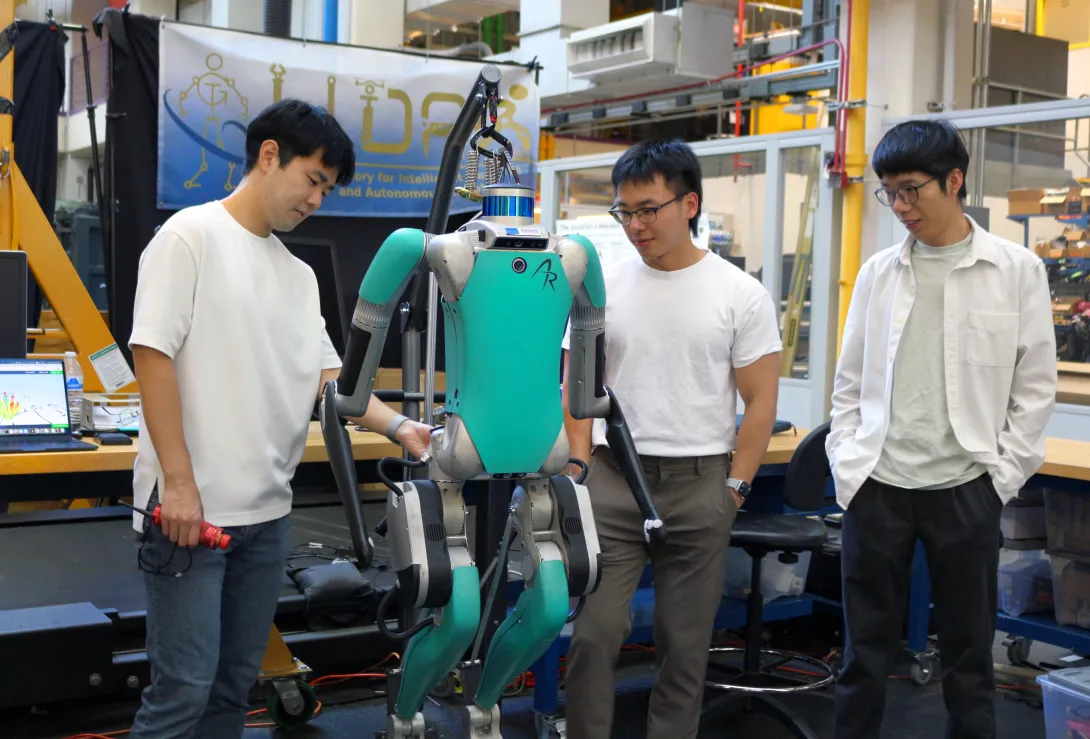

To achieve better training outcomes and faster deployment, Fukang Liu and Feiyang Wu, graduate students advised by Professor Ye Zhao of the Woodruff School of Mechanical Engineering, a faculty member of the Institute for Robotics and Intelligent Machines (IRIM), have published a pair of papers in IEEE Robotics and Automation Letters. The work is a collaboration with three other IRIM-affiliated faculty members, Profs. Danfei Xu, Yue Chen, and Sehoon Ha, as well as Prof. Anqi Wu from the School of Computational Science and Engineering.

To enable humanoid robots to perform complex whole-body movements in the real world, Fukang Liu led a team that developed Opt2Skill, a hybrid robot learning framework that combines model-based trajectory optimization with reinforcement learning (RL). The framework integrates dynamics and contacts into the trajectory planning process and generates high-quality, dynamically feasible datasets, which lead to more reliable motion learning, better position tracking, and higher task success rates. The approach shows a promising way to improve the performance and generalization of humanoid RL policies. Incorporating torque data also improved motion stability and force tracking in contact-rich scenarios, demonstrating that torque information plays a key role in learning physically consistent humanoid behaviors.

“While other datasets, such as inverse kinematics or human demonstrations, are valuable, they don’t always capture the dynamics needed for reliable whole-body humanoid control,” said Fukang Liu. “With our Opt2Skill framework, we combine trajectory optimization with reinforcement learning to generate and leverage high-quality, dynamically feasible motion data. This integrated approach gives robots a richer and more physically grounded training process, enabling them to learn these complex tasks more reliably and safely for real-world deployment.”

In another line of humanoid research, Feiyang Wu established a one-stage training framework that allows humanoid robots to learn locomotion more efficiently and with greater environmental adaptability. Traditional two-stage “teacher-student” approaches first train an expert in simulation and then retrain a limited-perception student. The new framework, Learn-to-Teach (L2T), instead trains both simultaneously, sharing knowledge and experiences in real time. The result of this two-way training is a 50% reduction in training data and time, while maintaining or surpassing state-of-the-art performance in humanoid locomotion. The lightweight policy learned through this process enables the lab’s humanoid robot to traverse more than a dozen real-world terrains, including grass, gravel, sand, stairs, and slopes, without retraining or depth sensors.

“By training an expert and a deployable controller together, we can turn rich simulation feedback into a lightweight policy that runs on real hardware, letting our humanoid adapt to uneven, unstructured terrain with far less data and hand-tuning than traditional methods,” said Feiyang Wu.

By applying these training processes, the team hopes to speed the development of deployable humanoid robots for home use, manufacturing, defense, and search and rescue assistance in dangerous environments. These methods also support advances in embodied intelligence, enabling robots to learn richer, more context-aware behaviors. Additionally, the training data process can be applied to research that improves the functionality and adaptability of human assistive devices for medical and therapeutic uses.

“As humanoid robots move from controlled labs into messy, unpredictable real-world environments, the key is developing embodied intelligence: the ability for robots to sense, adapt, and act through their physical bodies,” said Professor Ye Zhao. “The innovations from our students push us closer to robots that can learn robust skills, navigate diverse terrains, and ultimately operate safely and reliably alongside people.”

Author - Christa M. Ernst

Citations

Liu F, Gu Z, Cai Y, Zhou Z, Jung H, Jang J, Zhao S, Ha S, Chen Y, Xu D, Zhao Y. Opt2skill: Imitating dynamically-feasible whole-body trajectories for versatile humanoid loco-manipulation. IEEE Robotics and Automation Letters. 2025 Oct 13.

Wu F, Nal X, Jang J, Zhu W, Gu Z, Wu A, Zhao Y. Learn to teach: Sample-efficient privileged learning for humanoid locomotion over real-world uneven terrain. IEEE Robotics and Automation Letters. 2025 Jul 23.

Nov. 17, 2025

“How will AI kill Creature?”

That was the question posed to Scheller College of Business Evening MBA students Katie Bowen (’25), Ellie Cobb (’26), and Christopher Jones (’26) in a marketing practicum course that paired them with Creature, a brand, product, and marketing transformation studio.

For 10 weeks, the students worked as consultants in a project that challenged them to rethink the role of artificial intelligence in creative industries. Course instructor Jarrett Oakley, director of Marketing at TOTO USA, guided the student project as they developed strategies to help Creature navigate the evolving landscape of AI-driven marketing.

Business School Meets Real Business

“Nothing accelerates the value of a business school education like applying it in real time to real businesses,” Oakley said. “This course mirrored a consulting engagement, turning classroom learning into actionable expertise through direct collaboration with local firms. It was designed to spark creative thinking, build confidence, and bridge theory with practice.”

What began as a traditional strategic analysis quickly evolved into a forward-looking exploration of AI’s impact on branding, user experience, and performance creative. “Our team realized early on that AI wasn’t a threat but a powerful tool,” the students shared. “We found that AI’s real impact lies not in replacing creativity, but in reshaping expectations, accelerating timelines, and redefining performance standards. It also gives forward-thinking agencies like Creature the opportunity to guide clients still catching up to the AI curve.”

Creature’s founders, Margaret Strickland and Matt Berberian, welcomed the collaboration. “We solve creative challenges across brand, product, and performance,” said Strickland. “AI is transforming each of these areas. The students helped us see how to stay ahead of the curve.”

Students applied frameworks like SWOT, Porter’s Five Forces, and the G-STIC model to diagnose challenges and develop actionable strategies. Weekly meetings with Creature allowed for iterative feedback and refinement.

One of the team’s most surprising insights came from primary research: many agencies hesitate to disclose their use of AI, fearing clients will demand lower prices. “We recommended Creature define and share their AI philosophy,” said the students. “Clients want transparency and innovation, and they’ll choose partners who embrace AI, not hide from it.”

Creature took the advice to heart. Since the project concluded, the firm has launched a new AI consulting offering, SNSE by Creature, and implemented automation across operations, resulting in a 21% boost in efficiency. They’ve also adopted an AI manifesto to guide future initiatives.

A Transformative Student Experience

Katie Bowen, Evening MBA ’25

“This project let us apply MBA concepts to a real-world business challenge. We dove into Creature’s business and tailored our analysis to their needs. It pushed us to think critically about how companies stay competitive when AI tools are widely accessible. Using strategy, innovation, and marketing frameworks, we bridged theory and practice to deliver forward-looking recommendations.”

Ellie Cobb, Evening MBA ’26

“This project strengthened my ability to use AI effectively in both personal and professional contexts—not just knowing how to use it, but when not to. Exploring such a fast-evolving topic made me more agile and open-minded, ready to follow where research and emerging trends lead.”

Christopher Jones, Evening MBA ’26

“The Marketing Practicum with Creature was an eye-opening experience that deepened my understanding of AI’s impact on business. It sharpened my critical thinking as I navigated conflicting information about AI, and gave me practical insight into business strategy, from integrating new technology to managing innovation and diversifying product offerings.”

Education With Impact

Oakley believes the practicum will have lasting impact. “These students now understand how traditional marketing strategy integrates with emerging AI capabilities. They’re ready to lead in a rapidly evolving industry.”

As AI continues to reshape marketing, partnerships like the one between Scheller and Creature demonstrate the power of collaboration, innovation, and education in preparing future leaders for whatever comes next.

News Contact

Kristin Lowe (She/Her)

Content Strategist

Georgia Institute of Technology | Scheller College of Business

kristin.lowe@scheller.gatech.edu

Nov. 14, 2025

311 chatbots make it easier for people to report issues to their local government without long wait times on the phone. However, a new study finds that the technology might inhibit civic engagement.

311 systems allow residents to report potholes, broken fire hydrants, and other municipal issues. In recent years, the use of artificial intelligence (AI) to provide 311 services to community residents has boomed across city and state governments. This includes an artificial virtual assistant (AVA) developed by third-party vendors for the City of Atlanta in 2023.

Through survey data, researchers from Tech’s School of Interactive Computing found that many residents are generally positive about 311 chatbots. In addition to eliminating long wait times over the phone, the chatbots offer residents quick answers to frequently asked questions about permit applications, waste collection, and other topics.

However, the study, which was conducted in Atlanta, indicates that 311 chatbots could be causing residents to feel isolated from public officials and less aware of what’s happening in their community.

Jieyu Zhou, a Ph.D. student in the School of IC, said it doesn’t have to be that way.

Uniting Communities

Zhou and her advisor, Assistant Professor Christopher MacLellan, published a paper at the 2025 ACM Designing Interactive Systems (DIS) Conference that focuses on improving public service chatbot design and amplifying their civic impact. They collaborated with Professor Carl DiSalvo, Associate Professor Lynn Dombrowski, and graduate students Rui Shen and Yue You.

Zhou said 311 chatbots have the potential to be agents that drive community organization and improve quality of life.

“Current chatbots risk isolating users in their own experience,” Zhou said. “In the 311 system, people tend to report their own individual issues but lose a sense of what is happening in their broader community.

“People are very positive about these tools, but I think there’s an opportunity as we envision what civic chatbots could be. It’s important for us to emphasize that social element — engaging people within the community and connecting them with government representatives, community organizers, and other community members.”

Zhou and MacLellan said 311 chatbots can leave users wondering if others in their communities share their concerns.

“If people are at a town hall meeting, they can get a sense of whether the problems they are experiencing are shared by others,” Zhou said. “We can’t do that with a chatbot. It’s like an isolated room, and we’re trying to open the doors and the windows.”

Adding a Human Touch

In their paper, the researchers note that one of the biggest criticisms of 311 chatbots is they can’t replace interpersonal interaction.

Unlike chatbots, people working in local government offices are likely to:

- Have direct knowledge of issues

- Provide appropriate referrals

- Empathize with the resident’s concerns

MacLellan said residents are likely to grow frustrated with a chatbot when reporting issues that require this level of contextual knowledge.

One person in the researchers’ survey noted that the chatbot they used didn’t understand that their report was about a sidewalk issue, not a street issue.

“Explaining such a situation to a human representative is straightforward,” MacLellan said. “However, when the issue being raised does not fall within any of the categories the chatbot is built to address, it often misinterprets the query and offers information that isn’t helpful.”

The researchers offer some design suggestions that can help chatbots foster community engagement and improve community well-being:

- Escalation. Regarding the sidewalk report, the chatbot did not offer a way to escalate the query to a human who could resolve it. Zhou said that this is a feature that chatbots should have but often lack.

- Transparency. Chatbots could provide details about recent and frequently reported community issues. They should inform users early in the call process about known problems to help avoid an overload of user complaints.

- Education. Chatbots can keep users updated about what’s happening in their communities.

- Collective action. Chatbots can help communities organize and gather ideas to address challenges and solve problems.

“Government agencies may focus mainly on fixing individual issues,” Zhou said. “But recognizing community-level patterns can inspire collective creativity. For example, one participant suggested that if many people report a broken swing at a playground, it could spark an initiative to design a new playground together, going far beyond just fixing it.”

These are just a few examples of what, the researchers argue, 311 services were originally designed to achieve.

“Communities were already collaborating on identifying and reporting issues,” Zhou said. “These chatbots should reflect the original intentions and collaboration practices of the communities they serve.

“Our research suggests we can increase the positive impact of civic chatbots by including social aspects within the design of the system, connecting people, and building a community view.”

Nov. 12, 2025

One of the top conferences for AI and computer games is recognizing a School of Interactive Computing professor with its first-ever test-of-time award.

At its event this week in Alberta, Canada, the AAAI Conference on Artificial Intelligence and Interactive Digital Entertainment (AIIDE) is honoring Professor Mark Riedl. The award also honors University of Utah Professor and Division of Games Chair Michael Young, Riedl’s Ph.D. advisor.

Riedl studied under Young at North Carolina State University.

Their 2005 paper, From Linear Story Generation to Branching Story Graphs, highlighted the challenges of using AI to create interactive gaming narratives in which user actions influence the story’s progression.

In 2005, computer game systems that supported linear, non-branching games were widely used. Riedl introduced an innovative mathematical formula for interactive stories ranging from choose-your-own-adventure novels to modern computer games.

“We didn’t use the term ‘generative AI’ back then, but I was working on AI for the generation of creative artifacts,” Riedl said. “This was before we had practical deep learning or large language models.

“One of the reasons this paper is still relevant 20 years later is that it didn’t just present a technology, it attempted to provide a framework for solving a grand challenge in AI.”

That challenge is still ongoing, Riedl said. Game designers continue to struggle with balancing story coherence against the amount of narrative control afforded to users.

“When users exercise a high degree of control within the environment, it is likely that their actions will change the state of the world in ways that may interfere with the causal dependencies between actions as intended within a storyline,” Riedl and Young wrote in the paper.

“Narrative mediation makes linear narratives interactive. The question is: Is the expressive power of narrative mediation at least as powerful as the story graph representation?”
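The idea of a branching story graph can be pictured as a small directed graph in which nodes are story events and edges are user choices. The sketch below only illustrates that data structure, with made-up scene names; it does not reproduce the formalism or the narrative mediation technique from Riedl and Young’s paper.

```python
def linear_stories(graph, start):
    """Enumerate every start-to-ending path in an acyclic story graph."""
    paths = []

    def walk(node, path):
        path = path + [node]
        choices = graph.get(node, [])
        if not choices:
            # No outgoing choices: this node is an ending.
            paths.append(path)
            return
        for next_node in choices:
            walk(next_node, path)

    walk(start, [])
    return paths


# Hypothetical story: keys are events, values are the events a user can choose next.
STORY_GRAPH = {
    "intro": ["take_the_road", "enter_the_forest"],
    "take_the_road": ["reach_the_castle"],
    "enter_the_forest": ["reach_the_castle", "get_lost"],
    "reach_the_castle": [],
    "get_lost": [],
}

if __name__ == "__main__":
    for story in linear_stories(STORY_GRAPH, "intro"):
        print(" -> ".join(story))
```

Each path returned is one linear story. A linear, non-branching story corresponds to a graph in which every node offers at most one choice.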

AIIDE is being held this week at the University of Alberta in Edmonton, Alberta. Riedl will receive the award on Wednesday.

Nov. 03, 2025

A new deep learning architectural framework could boost the development and deployment efficiency of autonomous vehicles and humanoid robots. The framework will lower training costs and reduce the amount of real-world data needed for training.

World foundation models (WFMs) enable physical AI systems to learn and operate within synthetic worlds created by generative artificial intelligence (genAI). For example, these models use predictive capabilities to generate up to 30 seconds of video that accurately reflects the real world.

The new framework, developed by a Georgia Tech researcher, enhances the processing speed of the neural networks that simulate these real-world environments from text, images, or video inputs.

The neural networks that make up the architectures of large language models like ChatGPT and visual models like Sora process contextual information using the “attention mechanism.”

Attention refers to a model’s ability to focus on the most relevant parts of input.

The Neighborhood Attention Extension (NATTEN) allows models that require GPUs or high-performance computing systems to process information and generate outputs more efficiently.

Processing speeds can increase by up to 2.6 times, said Ali Hassani, a Ph.D. student in the School of Interactive Computing and the creator of NATTEN. Hassani is advised by Associate Professor Humphrey Shi.
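The following is a minimal sketch of the general idea behind neighborhood attention, in which each position attends only to a fixed-size window of nearby positions rather than to the whole input. It is plain Python written for illustration and does not use NATTEN’s actual API or GPU kernels; the function names and the one-dimensional setup are assumptions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def neighborhood_attention_1d(queries, keys, values, window):
    """Scaled dot-product attention where position i sees only `window` neighbors."""
    n = len(queries)
    if not 1 <= window <= n:
        raise ValueError("window must be between 1 and the sequence length")
    dim = len(queries[0])
    outputs = []
    for i in range(n):
        # Center the window on position i, shifting it inward at the edges.
        start = min(max(i - window // 2, 0), n - window)
        neighbors = range(start, start + window)
        scores = [
            sum(q * k for q, k in zip(queries[i], keys[j])) / math.sqrt(dim)
            for j in neighbors
        ]
        weights = softmax(scores)
        outputs.append([
            sum(w * values[j][d] for w, j in zip(weights, neighbors))
            for d in range(len(values[0]))
        ])
    return outputs
```

With a window of size w over n positions, the loop computes n × w scores instead of the n × n needed for full attention, which is where the savings come from in principle.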

Hassani is also a research scientist at Nvidia, where he introduced NATTEN to Cosmos — a family of WFMs the company uses to train robots, autonomous vehicles, and other physical AI applications.

“You can map just about anything from a prompt or an image or any combination of frames from an existing video to predict future videos,” Hassani said. “Instead of generating words with an LLM, you’re generating a world.

“Unlike LLMs that generate a single token at a time, these models are compute-heavy. They generate many images — often hundreds of frames at a time — so the models put a lot of work on the GPU. NATTEN lets us decrease some of that work and proportionately accelerate the model.”

Sep. 02, 2025

A new version of Georgia Tech’s virtual teaching assistant, Jill Watson, has demonstrated that artificial intelligence can significantly improve the online classroom experience. Developed by the Design Intelligence Laboratory (DILab) and the U.S. National Science Foundation AI Institute for Adult Learning and Online Education (AI-ALOE), the latest version of Jill Watson integrates OpenAI’s ChatGPT and is outperforming OpenAI’s own assistant in real-world educational settings.

Jill Watson not only answers student questions with high accuracy; it also improves teaching presence and correlates with better academic performance. Researchers believe this is the first documented instance of a chatbot enhancing teaching presence in online learning for adult students.

How Jill Watson Shaped Intelligent Teaching Assistants

First introduced in 2016 using IBM’s Watson platform, Jill Watson was the first AI-powered teaching assistant deployed in real classes. It began by responding to student questions on discussion forums like Piazza using course syllabi and a curated knowledge base of past Q&As. Widely covered by major media outlets including The Chronicle of Higher Education, The Wall Street Journal, and The New York Times, the original Jill pioneered new territory in AI-supported learning.

Subsequent iterations addressed early biases in the training data and transitioned to more flexible platforms like Google’s BERT in 2019, allowing Jill to work across learning management systems such as EdStem and Canvas. With the rise of generative AI, the latest version now uses ChatGPT to engage in extended, context-rich dialogue with students using information drawn directly from courseware, textbooks, video transcripts, and more.

Future of Personalized, AI-Powered Learning

Designed around the Community of Inquiry (CoI) framework, Jill Watson aims to enhance “teaching presence,” one of three key factors in effective online learning, alongside cognitive and social presence. Teaching presence includes both the design of course materials and facilitation of instruction. Jill supports this by providing accurate, personalized answers while reinforcing the structure and goals of the course.

The system architecture includes a preprocessed knowledge base, a MongoDB-powered memory for storing conversation history, and a pipeline that classifies questions, retrieves contextually relevant content, and moderates responses. Jill is built to avoid generating harmful content and only responds when sufficient verified course material is available.
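
The flow described above (classify, retrieve, moderate, and decline when material is insufficient) can be sketched roughly as follows. This is a toy illustration, not the Jill Watson codebase: every class, method, and threshold name is hypothetical, word overlap stands in for real retrieval, an in-memory list stands in for the MongoDB-backed conversation memory, and no language model is called.

```python
import re
from dataclasses import dataclass, field

BLOCKED_TERMS = {"answer key"}  # hypothetical moderation list

def tokens(text):
    # Lowercased word set used by the toy classifier and retriever.
    return set(re.findall(r"[a-z0-9]+", text.lower()))

@dataclass
class ToyTeachingAssistant:
    passages: list                 # stand-in for the preprocessed knowledge base
    min_overlap: int = 2           # assumed threshold for "sufficient" material
    history: list = field(default_factory=list)  # stand-in for persistent memory

    def classify(self, question):
        # Toy classifier: separate logistics questions from content questions.
        logistics = {"deadline", "due", "grade", "exam", "syllabus"}
        return "logistics" if tokens(question) & logistics else "content"

    def retrieve(self, question):
        # Pick the passage sharing the most words with the question.
        q = tokens(question)
        best = max(self.passages, key=lambda p: len(q & tokens(p)), default="")
        return best, len(q & tokens(best))

    def moderate(self, draft):
        # Reject drafts that contain any blocked term.
        return not any(term in draft.lower() for term in BLOCKED_TERMS)

    def answer(self, question):
        kind = self.classify(question)
        passage, overlap = self.retrieve(question)
        if overlap < self.min_overlap:
            reply = None  # decline: not enough supporting course material
        else:
            draft = passage  # a real system would generate text grounded in the passage
            reply = draft if self.moderate(draft) else None
        self.history.append({"question": question, "kind": kind, "reply": reply})
        return reply

if __name__ == "__main__":
    ta = ToyTeachingAssistant(passages=[
        "Assignment 1 is due on Friday at noon.",
        "A heuristic is admissible if it never overestimates the true cost.",
    ])
    print(ta.answer("When is assignment 1 due?"))
    print(ta.answer("What is quantum entanglement?"))
```

Returning `None` rather than a best guess mirrors the article's point that the assistant responds only when it has enough verified material to draw on.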

Field-Tested in Georgia and Beyond

The first AI-powered teaching assistant was developed for Georgia Tech’s Online Master of Science in Computer Science (OMSCS) program. By fall 2023, Jill Watson was deployed in Georgia Tech’s OMSCS artificial intelligence course, serving more than 600 students, as well as in an English course at Wiregrass Georgia Technical College, part of the Technical College System of Georgia (TCSG).

A controlled A/B experiment in the OMSCS course allowed researchers to compare outcomes between students with and without access to Jill Watson, even though all students could use ChatGPT. The findings are striking:

- Jill Watson’s accuracy on synthetic test sets ranged from 75% to 97%, depending on the content source. It consistently outperformed OpenAI’s Assistant, which scored around 30%.

- Students with access to Jill Watson showed stronger perceptions of teaching presence, particularly in course design and organization, as well as higher social presence.

- Academic performance also improved slightly: students with Jill saw more A grades (66% vs. 62%) and fewer C grades (3% vs. 7%).

A Smarter, Safer Chatbot

While Jill Watson uses ChatGPT for natural language generation, it restricts outputs to validated course material and verifies each response using textual entailment. According to a study by Taneja et al. (2024), Jill not only delivers more accurate answers than OpenAI’s Assistant but also produces confusing or harmful content at significantly lower rates.

In that comparison, Jill Watson (ChatGPT) answers correctly 78.7% of the time, with only 2.7% of its errors categorized as harmful and 54.0% as confusing. OpenAI’s Assistant, by contrast, reaches an accuracy of only 30.7%, with harmful failures occurring 14.4% of the time and confusing failures rising to 69.2%. Jill Watson also has a lower retrieval failure rate: 43.2%, compared to 68.3% for OpenAI’s Assistant.

What’s Next for Jill

The team plans to expand testing across introductory computing courses at Georgia Tech and technical colleges. They also aim to explore Jill Watson’s potential to improve cognitive presence, particularly critical thinking and concept application. Although quantitative results for cognitive presence are still inconclusive, anecdotal feedback from students has been positive. One OMSCS student wrote:

“The Jill Watson upgrade is a leap forward. With persistent prompting I managed to coax it from explicit knowledge to tacit knowledge. Kudos to the team!”

The researchers also expect Jill to reduce instructional workload by handling routine questions and enabling more focus on complex student needs.

Additionally, AI-ALOE is collaborating with the publishing company John Wiley & Sons, Inc., to develop a Jill Watson virtual teaching assistant for one of its courses, with the instructor and university chosen by Wiley. If successful, this initiative could scale to hundreds or even thousands of classes across the country and around the world, transforming the way students interact with course content and receive support.

A Georgia Tech-Led Collaboration

The Jill Watson project is supported by Georgia Tech, the U.S. National Science Foundation’s AI-ALOE Institute (Grants #2112523 and #2247790), and the Bill & Melinda Gates Foundation.

Core team members are Saptrishi Basu, Jihou Chen, Jake Finnegan, Isaac Lo, JunSoo Park, Ahamad Shapiro and Karan Taneja, under the direction of professor Ashok Goel and Sandeep Kakar. The team works under Beyond Question LLC, an AI-based educational technology startup.

News Contact

Breon Martin
