Apr. 30, 2026

A Georgia Tech School of Interactive Computing professor and his Ph.D. student have been named to the 2026 list of Microsoft Research Fellows and Fellowship Advisors.

Associate Professor Alan Ritter and Ph.D. student Ethan Mendes were awarded fellowships for their work on creating artificial intelligence (AI) agents that function as teammates.

Mendes was named a fellow, while Ritter will serve as his fellowship advisor.

The Microsoft Research Fellowship is open to faculty, students, and postdocs. Ritter said that if Microsoft sees alignment in a project, it gives recipients the opportunity to work even closer with their collaborators by inviting them to join as additional fellows.

That turned out to be the case with Mendes after Ritter listed him as a collaborator in his fellowship proposal.

“I’m delighted to serve as Ethan Mendes’ fellowship advisor,” Ritter said. “He is an exceptionally strong researcher, and I’m excited to see his work recognized through the Microsoft Research Fellowship.”

Through the fellowship, Ritter and Mendes will design AI systems that better support collaboration and decision-making within organizations.

“The goal is to move beyond AI as a tool for a single user and instead study how AI can help groups make more informed, transparent, and coordinated decisions,” Ritter said. “We will focus on methods that bring together information from many different sources, help people reason under uncertainty, and generate analyses that support collective problem-solving in complex work settings.”

Professor Named to Sustainability Cohort

The Purple Mai’a Foundation has selected Associate Professor Josiah Hester to join its Eahou Global Immersion Cohort.

The Purple Mai’a Foundation is a technology education nonprofit headquartered in Aiea, Hawaii, that teaches coding and computer science to Native Hawaiian students.

The 29 members of the Eahou Global Immersion Cohort from 15 countries are leaders from indigenous communities recognized for their contributions to sustainability.

Hester is a Native Hawaiian whose research centers on sustainable and battery-free technology.

The cohort will gather on O’ahu May 1-3 for Eahou Fest, where they will share stories and solutions from research around the world.

“I’m honored to be selected for the Eahou Global Immersion Cohort and to learn alongside such an inspiring group of resilience leaders who come from around the globe,” Hester said.

“Participants are selected for their significant leadership over the past decade and their ability to bring what they learn back to their communities and integrate it into ongoing work and partnerships. I’m excited to connect these experiences with my work and bring these lessons back into research and teaching at Georgia Tech.”

Jill Watson Creator Receives AAAI Lecture Award

Professor Ashok Goel received one of the most distinguished awards from the Association for the Advancement of Artificial Intelligence (AAAI).

Goel was selected as the 20th recipient of the AAAI Robert S. Engel Memorial Lecture Award. Established in 2003, the award is given to those who have demonstrated excellence in AI scholarship, outstanding applications of AI, and extraordinary service to AAAI and the AI community.

Goel received the award in January during the AAAI Conference on Artificial Intelligence in Singapore. According to the awards program, Goel was recognized for contributions to biologically inspired design, case-based reasoning, and application of AI in virtual teaching.

Goel is the inventor of Jill Watson, one of the first AI virtual teaching assistants used in higher education classrooms.

AAAI is also the publisher of AI Magazine, which Goel served as editor-in-chief from 2016 to 2021.

“I am both honored and humbled to receive AAAI's Robert Engelmore Award,” Goel said. “Bob was a long-time editor of AAAI's AI Magazine, and many years after he retired, I became the editor of the magazine. This makes the Engelmore Award special to me.”

Apr. 20, 2026

How did the earliest life on Earth build complex biological machinery with so few tools? A new study explores how the simplest building blocks of proteins — once limited to just half of today’s amino acids — could still form the sophisticated structures life depends on.

The paper, The Borderlands of Foldability: Lessons from Simplified Proteins, is a meta-analysis of six decades of protein research and reveals that ancient proteins may have been far more complicated and dynamic than previously thought.

Recently published in the journal Trends in Chemistry, the study includes Georgia Tech researchers Lynn Kamerlin, professor in the School of Chemistry and Biochemistry and Georgia Research Alliance Vasser-Woolley Chair in Molecular Design, and Quantitative Biosciences Ph.D. candidate Alfie-Louise Brownless.

Co-authors also include Institute of Science Tokyo graduate student Koh Seya and Liam M. Longo, who serves as a specially appointed associate professor at Science Tokyo and as an affiliate research scientist at the Blue Marble Space Institute of Science.

The research has implications ranging from the origins of life and the search for life in the universe to cutting-edge medical innovation. “One of the biggest unanswered questions in science is how life first began,” says Kamerlin, who is a corresponding author of the study. “Understanding how the first protein-like molecules formed and what the earliest proteins may have been like is a key part of that puzzle.”

“Proteins power our bodies — and all life on Earth,” she adds. “Simply put, the evolution of proteins is the reason that we’re able to have this conversation at all.”

A Protein Folding Paradox

If proteins are the scaffolding of life, amino acids are the components that make up that scaffolding. “Today, an average protein is constructed from a chain of about 300 amino acids, involving 20 different types of amino acids,” Kamerlin shares. Proteins fold when these chains twist into a specific 3-dimensional shape, creating structures critical for biology.

However, while these folds are essential, exactly how a protein knows which way to fold remains a mystery. “We know that proteins didn’t just fold randomly,” Kamerlin shares, “because randomly trying all possible configurations would take a protein longer than the age of the universe.”

It’s a cornerstone problem in biological science called “Levinthal’s Paradox,” and highlights a fundamental mystery: Proteins fold incredibly quickly into very specific combinations — but like a sheet of paper spontaneously folding into an origami swan, researchers don’t know how proteins “choose” the folds they make.

“We can predict what a protein will look like, but can’t tell you how it got there,” Kamerlin adds. “That’s what we’re interested in exploring: how small early proteins developed into the complex proteins that support every living thing on today’s Earth.”

Simple Letters, Sophisticated Structures

Early proteins likely had access to just half of today’s amino acids. “About 10-12 amino acids were likely available on early Earth,” Kamerlin says. Like writing a story with just the letters “A” through “L,” researchers assumed that the ‘vocabulary’ proteins could build from such a limited amino acid alphabet would also be constrained.

“There is a language to protein folding,” Kamerlin explains. “That language is hidden in their structures. Our research is in trying to understand the rules — the grammar and vocabulary that dictate a protein fold.”

The grammar they discovered was surprising: with a combination of creative techniques and environmental support, complex structures can arise from limited amino acid alphabets.

“We found that it is possible to develop complex folds with very simple tools — and certain environments, like salty ones, can help support that,” Kamerlin shares. “Early proteins could also cross-link and associate, interacting like LEGO blocks to create more complex structures.”

Pioneering Proteins

Now, the team is conducting research in environments that could mimic conditions on early Earth — aiming to discover more about how these regions could have given rise to today’s complex proteins. “This aspect of our research also ties into the amazing space research happening at Georgia Tech,” Kamerlin says. “While we’re interested in understanding early life on Earth, our work could help inform where best to look for evidence of life beyond our planet.”

Kamerlin specializes in creating computer models that simulate possible scenarios – creating an opportunity to quickly and efficiently test many theories. The most compelling of these can then be tested by her collaborator and co-author at Science Tokyo, Liam Longo, in lab experiments.

Protein folding is also at the forefront of medical innovation, ranging from diagnostic tools to cancer treatments and neurodegenerative diseases. “In the broader scope, we’re interested in discovering what we can design, what we can stress test, and what we can reconstruct with AI and other computational tools,” Kamerlin says. “Because if you can understand how proteins fold, you gain the ability to design them.”

Funding: NASA, the Human Frontier Science Program, and the Knut and Alice Wallenberg Foundation

Apr. 13, 2026

Vibe coding programmers are releasing batches of vulnerable code, according to researchers at the School of Cybersecurity and Privacy (SCP) at Georgia Tech, who have scanned over 43,000 security advisories across the web.

The programming style relies on using generative artificial intelligence (AI) to create software code using tools like Claude, Gemini, and GitHub Copilot. According to graduate research assistant Hanqing Zhao of the Systems Software & Security Lab (SSLab), no one had been tracking these common vulnerabilities and exposures before the launch of their Vibe Security Radar.

“The vulnerabilities we found lead to breaches,” he said. “Everyone is using these tools now. We need a feedback loop to identify which tools, which patterns, and which workflows create the most risk.”

The radar extensively scans public vulnerability databases, finds the error for each vulnerability, and then examines the code’s history to find who introduced the bug. If they discover an AI tool's signature, the radar flags it.

Of the 74 confirmed cases uncovered so far by the tool, 14 are critical risks, and 25 are high. These vulnerabilities include command injection, authentication bypass, and server-side request forgery. Zhao explained that since AI models tend to repeat the same mistakes, an attacker would need to find these bugs just once.

“Millions of developers using the same models means the same bugs showing up across different projects,” he said. “Find one pattern in one AI codebase, you can scan for it across thousands of repositories.”

Despite its success, the team has only scratched the surface of the problem. The radar can trace metadata like co-author tags, bot emails, and other known tool signatures, but it can't identify an issue if these markers have been removed.

The next step is behavioral detection. AI-written code has patterns in how it names variables, structures functions, and handles errors.

“We're building models that can identify AI code from the code itself, no metadata needed,” said Zhao. “That opens up a lot of cases we currently can't touch.”

The team is also improving its verification pipeline and expanding its sources to include more vulnerability databases. The goal is to get a more complete picture of AI-introduced vulnerabilities across open source, not just the ones that happen to leave signatures behind.

As more programmers rely on vibe coding, Zhao warns that it still needs to be reviewed as thoroughly as any other project.

“The whole point of vibe coding is not reading it afterward, I know,” he said. “But if you're shipping AI output to production, review it the way you'd review a junior developer's pull request. Especially anything around input handling and authentication.”

When prompting AI, SSLab also recommends providing more detailed instructions to get it closer to production-ready. There are also tools to check the code for vulnerabilities after code it has been generated. Not double-checking could lead to a catastrophe.

“The attack surface keeps growing,” said Zhao. “More people running AI agents locally means the attacker doesn't need to break into the company infrastructure. They just need one vulnerability in a model context protocol server that someone installed and never reviewed.”

One reason the attack surfaces are expanding rapidly is AI’s evolution. In the second half of 2025, the Vibe Security Radar found about 18 cases across seven months. Then, in the first three months of 2026, it identified 56. March 2026 alone had 35, more than all of 2025 combined.

Many tools, like Claude, are now more autonomous, allowing developers to write entire features, create files, and even make architecture decisions.

“When an agent builds something without authentication, that's not a typo,” said Zhao. “It's a design flaw baked in from the start. Claude Code and Copilot together account for most of what we detect, but that's partly because they leave the clearest signatures.”

News Contact

John Popham

Communications Officer II at the School of Cybersecurity and Privacy

Apr. 02, 2026

As students increasingly turn to artificial intelligence (AI) to help with coursework, some worry that their learning could be compromised. Georgia Tech researchers are working to counter this potential decline with an AI tool they hope will promote learning rather than hinder it.

TokenSmith is a citation-supported large language model (LLM) tutor that can be hosted locally on a user’s personal computer. The tutor only provides answers based on course materials, such as the textbook or lecture slides.

Associate Professor Joy Arulraj began the project with support from the Bill Kent Family Foundation AI in Higher Education Faculty Fellowship last year. The fellowship, led by Georgia Tech’s Center for 21st Century Universities, supports faculty projects exploring innovative and ethical uses of AI in teaching.

Arulraj has enlisted assistant professors Kexin Rong and Steve Mussmann to help build TokenSmith.

Mussmann said TokenSmith is a synergistic blend of a database system and a machine learning system. The model stores textbooks, textbook annotations by course staff, common questions and answers, a learning state of the student, and student feedback in a structured database system. However, machine learning plays a key role in the answer generation as well as adapting the system to the student, course staff guidance, and user feedback.

"What excites me most is demonstrating how data-driven ML and principled database systems design can reinforce each other — one providing adaptability and flexibility, the other providing structure and traceability — in a way that benefits students," Mussmann said.

Keeping the model local has been an important focus of the project. The team wanted to create an AI tutor that helps students learn from their class resources rather than just giving answers. With each response, TokenSmith cites the origin of the answer in the provided documents.

“One problem with LLMs is that they can hallucinate and provide wrong answers, but in this controlled environment, we can add these guardrails to make sure it’s actually helpful in an educational setting,” Rong said.

Rong said she feels that students often undervalue textbooks, and she hopes TokenSmith can motivate students to make better use of them.

“Textbooks can sometimes be daunting, but maybe if we combine them with the model, students might be more willing to read a paragraph or page in the textbook, and that could help clarify something for them,” she said.

Running the model locally is more cost-effective and helps preserve the user’s privacy. But running the new tool locally comes with technical challenges.

One challenge with creating the model is speed. Since it is a locally based model, TokenSmith depends solely on the user’s computer memory. Tests have also shown that the tutor currently struggles to answer more complex questions.

“We are interested in pushing the boundaries of these local models so that they give students good answers and also run fast enough to keep students engaged,” Arulraj said.

News Contact

Morgan Usry, Communications Officer

Mar. 31, 2026

While people use search engines, chatbots, and generative artificial intelligence tools every day, most don’t know how they work. This sets unrealistic expectations for AI and leads to misuse. It also slows progress toward building new AI applications.

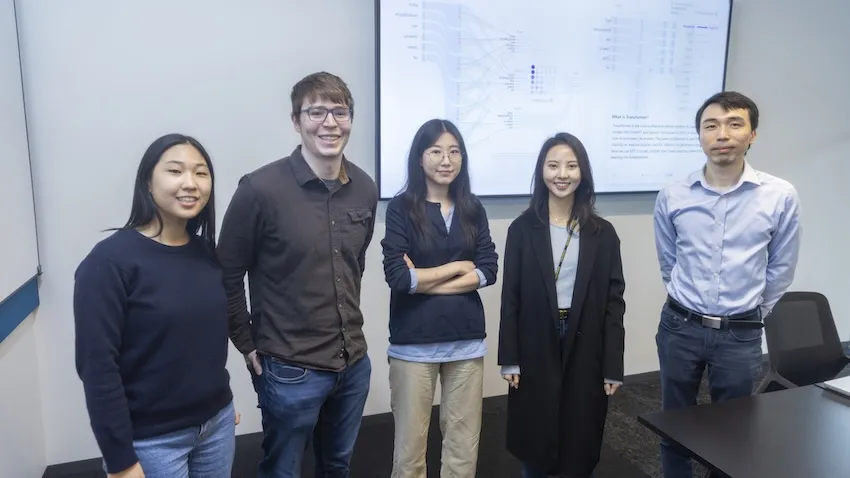

Georgia Tech researchers are making AI easier to understand through their work on Transformer Explainer. The free, online tool shows non-experts how ChatGPT, Claude, and other large language models (LLMs) process language.

Transformer Explainer is easy to use and runs on any web browser. It quickly went viral after its debut, reaching 150,000 users in its first three months. More than 563,000 people worldwide have used the tool so far.

Global interest in Transformer Explainer continues when the team presents the tool at the 2026 Conference on Human Factors in Computing Systems (CHI 2026). CHI, the world’s most prestigious conference on human-computer interaction, will take place in Barcelona, April 13-17.

“There are moments when LLMs can seem almost like a person with their own will and personality, and that misperception has real consequences. For example, there have been cases where teenagers have made poor decisions based on conversations with LLMs,” said Ph.D. student Aeree Cho.

“Understanding that an LLM is fundamentally a model that predicts the probability distribution of the next token helps users avoid taking its outputs as absolute. What you put in shapes what comes out, and that understanding helps people engage with AI more carefully and critically.”

A transformer is a neural network architecture that changes data input sequence into an output. Text, audio, and images are forms of processed data, which is why transformers are common in generative AI models. They do this by learning context and tracking mathematical relationships between sequence components.

Transformer Explainer demystifies how transformers work. The platform uses visualization and interaction to show, step by step, how text flows through a model and produces predictions.

Using this approach, Transformer Explainer impacts the AI landscape in four main ways:

- It counters hype and misconceptions surrounding AI by showing how transformers work.

- It improves AI literacy among users by removing technical barriers and lowering the entry for learning about AI.

- It expands AI education by helping instructors teach AI mechanisms without extensive setup or computing resources.

- It influences future development of AI tools and educational techniques by providing a blueprint for interpretable AI systems.

“When I first learned about transformers, I felt overwhelmed. A transformer model has many parts, each with its own complex math. Existing resources typically present all this information at once, making it difficult to see how everything fits together,” said Grace Kim, a dual B.S./M.S. computer science student.

“By leveraging interactive visualization, we use levels of abstraction to first show the big picture of the entire model. Then users click into individual parts to reveal the underlying details and math. This way, Transformer Explainer makes learning far less intimidating.”

Many users don’t know what transformers are or how they work. The Georgia Tech team found that people often misunderstand AI. Some label AI with human-like characteristics, such as creativity. Others even describe it as working like magic.

Furthermore, barriers make it hard for students interested in transformers to start learning. Tutorials tend to be too technical and overwhelm beginners with math and code. While visualization tools exist, these often target more advanced AI experts.

Transformer Explainer overcomes these obstacles through its interactive, user-focused platform. It runs a familiar GPT model directly in any web browser, requiring no installation or special hardware.

Users can enter their own text and watch the model predict the next word in real time. Sankey-style diagrams show how information moves through embeddings, attention heads, and transformer blocks.

The platform also lets users switch between high-level concepts and detailed math. By adjusting temperature settings, users can see how randomness affects predictions. This reveals how probabilities drive AI outputs, rather than creativity.

“Millions of people around the world interact with transformer-driven AI. We believe that it is crucial to bridge the gap between day-to-day user experience and the models' technical reality, ensuring these tools are not misinterpreted as human-like or seen as sentient,” said Ph.D. student Alex Karpekov.

“Explaining the architecture helps users recognize that language generated by models is a product of computation, leading to a more grounded engagement with the technology.”

Cho, Karpekov, and Kim led the development of Transformer Explainer. Ph.D. students Alec Helbling, Seongmin Lee, Ben Hoover, and alumni Zijie (Jay) Wang (Ph.D. ML-CSE 2024) and Minsuk Kahng (Ph.D. CS-CSE 2019) assisted on the project.

Professor Polo Chau supervised the group and their work. His lab focuses on data science, human-centered AI, and visualization for social good.

Acceptance at CHI 2026 stems from the team winning the best poster award at the 2024 IEEE Visualization Conference. This recognition from one of the top venues in visualization research highlights Transformer Explainer’s effectiveness in teaching how transformers work.

“Transformer Explainer has reached over half a million learners worldwide,” said Chau, a faculty member in the School of Computational Science and Engineering.

“I'm thrilled to see it extend Georgia Tech's mission of expanding access to higher education, now to anyone with a web browser.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Mar. 31, 2026

Voice-activated, conversational artificial intelligence (AI) agents must provide clear explanations for their suggestions, or older adults aren’t likely to trust them.

That’s one of the main findings from a study by AI Caring on what older adults expect from explainable AI (XAI).

AI Caring is one of three AI Institutions led by Georgia Tech and funded by the National Science Foundation (NSF). The institution supports AI research that benefits older adults and their caregivers.

Niharika Mathur, a Ph.D. candidate in the School of Interactive Computing, was the lead author of a paper based on the study. The paper will be presented in April at the 2026 ACM Conference on Human Factors in Computing Systems (CHI) in Barcelona.

Mathur worked with the Cognitive Empowerment Program at Emory University to interview 23 older adults who live alone and use voice-activated AI assistants like Amazon’s Alexa and Google Home.

Many of them told her they feel excluded from the design of these products.

“The assumption is that all people want interactions the same way and across all kinds of situations, but that isn’t true,” Mathur said. “How older people use AI and what they want from it are different from what younger people prefer.”

One example she gave is that young people tend to be informal when talking with AI. Older people, on the other hand, talk to the agent like they would a person.

“If Older adults are talking to their family members about Alexa, they usually refer to Alexa as ‘she’ instead of ‘it,’” Mathur said. “They tend to humanize these systems a lot more than young people.”

Good Explanations

The study evaluated AI explanations that drew information from four sources of data:

- User history (past conversations with the agent)

- Environmental data (indoor temperature or the weather forecast)

- Activity data (how much time a user spends in different areas of the home)

- Internal reasoning (mathematical probabilities and likely outcomes)

Mathur said older users trust the agent more when it bases its explanations on data from the first three sources. However, internal reasoning creates skepticism.

Internal reasoning means the AI doesn’t have enough data from the other sources to give an explanation. It provides a percentage to reflect its confidence based on what it knows.

“The overwhelming response was negative toward confidence scores,” Mathur said. “If the AI says it’s 92% confident, older adults want to know what that’s based on.”

This is another example that Mathur said points to generational preferences.

“There’s a lot of explainable AI research that shows younger people like to see numbers in explanations, and they also tend to rely too much on explanations that contain numerical confidence. Older adults are the opposite. It makes them trust it less.”

Knowing the Context

Mathur said that AI agents interacting with older adults should serve a dual purpose. They should provide users with companionship and support independence while reducing the caretaking burden often placed on family members.

Some studies have shown that engineers have tended to favor caretakers in the design of these tools. They prioritize daily tasks and routines, leaving some older adults to feel like they are merely a box to be checked.

She discovered that in urgent situations, older users prefer the AI to be straightforward, while in casual settings, they desire more conversation.

“How people interact with technological systems is grounded in what the stakes of the situation are,” she said. “If it had anything to do with their immediate sense of safety, they did not want conversational elaboration. They want the AI to be very direct and factual.”

Not Just Checking Boxes

Mathur said AI agents that interact with older adults are ideally constructed with a dual purpose. They should provide companionship and autonomy for the users while alleviating the burden of caretaking that is often placed on their family members.

Some studies have shown that engineers have strayed toward favoring caretakers in the design of these tools. They prioritize daily tasks and routines, leaving some older adults to feel like they are a box to be checked.

“They’re not being thought of as consumers,” Mathur said. “A lot of products are being made for them but not with them.”

She also said psychological well-being is one of the most important outcomes these tools should produce.

Showing older adults that they are listened to can significantly help in gaining their trust. Some interviewees told Mathur they want agents who are deliberate about understanding their preferences and don’t dismiss their questions.

Meeting these needs reduces the likelihood of protesting and creating conflict with family members.

“It highlights just how important well-designed explanations are,” she said. “We must go beyond a transparency checklist.”

News Contact

Nathan Deen

College of Computing

Georgia Tech

Feb. 25, 2026

Artificial intelligence (AI) systems power everything from chatbots to security cameras, yet many of the most advanced models operate as “black boxes.” Companies can use them, but outsiders can’t see how they were built, where they came from, or whether they contain hidden flaws.

This lack of transparency creates real risks. A model could contain security vulnerabilities or hidden backdoors. It could also be a lightly modified version of an open-source system — repackaged in violation of its license — with no easy way to prove it.

Researchers at the Georgia Institute of Technology have developed a new framework, ZEN, to help solve this problem. The tool can recover a model’s unique “fingerprint” directly from its memory, allowing experts to trace its origins and reconstruct how it was assembled.

“Analyzing a proprietary AI model without identifying where it came from and how it is constructed is like trying to fix a car engine with the hood welded shut,” said David Oygenblik, a Ph.D. student at Georgia Tech and the study’s lead author.

“ZEN not only X-rays the engine but also provides the complete wiring diagram.”

ZEN works by taking a snapshot of a running AI system and extracting information about both its mathematical structure and the code that defines it. It compares that fingerprint against a database of known open-source models to determine the system’s origin.

If it finds a match, ZEN identifies the exact changes and generates software patches that allow investigators to recreate a working replica of the proprietary model for testing.

That capability has major implications for both security and intellectual property protection.

“With ZEN, a security analyst can finally test a black-box model for hidden backdoors, and a company can gather concrete evidence to prove its software license was infringed,” Oygenblik said.

To evaluate the system, the research team tested ZEN on 21 state-of-the-art AI models, including Llama 3, YOLOv10, and other well-known systems.

ZEN correctly traced every customized model back to its original open-source foundation — achieving 100% attribution accuracy. Even when models had been heavily modified — differing by more than 83% from their original versions — ZEN successfully identified the changes and enabled full reconstruction for security testing.

The researchers will present their findings at the 2026 Network and Distributed System Security (NDSS) Symposium. The paper, Achieving Zen: Combining Mathematical and Programmatic Deep Learning Model Representations for Attribution and Reuse, was authored by Oygenblik, master’s student Dinko Dermendzhiev, Ph.D. students Filippos Sofias, Mingxuan Yao, Haichuan Xu, and Runze Zhang, post-doctorate scholars Jeman Park, and Amit Kumar Sikder, as well as Associate Professor Brendan Saltaformaggio.

News Contact

John Popham

Communications Officer II School of Cybersecurity and Privacy

Feb. 23, 2026

A Georgia Tech Ph.D. candidate is getting a boost to his research into developing more efficient multi-tasking artificial intelligence (AI) models without fine-tuning.

Georgia Stoica is one of 38 Ph.D. students worldwide researching machine learning who were named a 2025 Google Ph.D. Fellow.

Stoica is designing AI training methods that bypass fine-tuning, which is the process of adapting a large pre-trained model to perform new tasks. Fine-tuning is one of the most common ways engineers update large-language models like ChatGPT, Gemini, and Claude to add new capabilities.

If an AI company wants to give a model a new capability, it could create a new model from scratch for that specific purpose. However, if the model already has relevant training and knowledge of the new task, fine-tuning is cheaper.

Stoica argues that fine-tuning still uses large amounts of data, and that other methods can help models learn more effectively and efficiently.

“Full fine-tuning yields strong performance, but it can be costly, and it risks catastrophic forgetting,” Stoica said. “My research asks if we can extend a model’s capabilities by imbuing it with the expertise of others, without fine-tuning?

“Reducing cost and improving efficiency is more important than ever. We have so many publicly available models that have been trained to solve a variety of tasks. It’s redundant to train a new model from scratch. It’s much more efficient to leverage the information that already exists to get a model up to speed.”

Stoica said the solution is a cost-effective method called model merging. This method combines two or more AI models into a single model, improving performance without fine-tuning.

On a basic level, Stoica said an example would be combining a model that is efficient at classifying cats with one that works well at dogs.

“Merging is cheap because you just take the parameters, the weights of your existing models, and combine them,” he said. “You could take the average of the weights to create a new model, but that sometimes doesn’t work. My work has aimed to rearrange the weights so they can communicate easily with each other.”

Through his Google fellowship, Stoica seeks to apply model merging to create a cutting-edge vision encoder. A vision encoder converts image or video data into numerical representations that computers can understand. This enables tasks such as image or facial recognition and generative image captioning.

“I want to be at the frontier of the field, and Google is clearly part of that,” Stoica said. “The vision encoder is very large-scale, and Google has the infrastructure to accommodate it.”

Feb. 19, 2026

A new robot could solve one of the biggest challenges facing indoor farmers: manual pollination.

Indoor farms, also known as vertical farms, are popular among agricultural researchers and are expanding across the agricultural industry. Some benefits they have over outdoor farms include:

- Year-round production of food crops

- Less water and land requirements

- Not needing pesticides

- Reducing carbon emissions from shipping

- Reducing food waste

Additionally, some studies indicate that indoor farms produce more nutritious food for urban communities.

However, these farms are often inaccessible to birds, bees, and other natural pollinators, leaving the pollination process to humans. The tedious process must be completed by hand for each flower to ensure the indoor crop flourishes.

Ai-Ping Hu, a principal research engineer at the Georgia Tech Research Institute (GTRI), has spent years exploring methods to efficiently pollinate flowering plants and food crops in indoor farms to find a way to efficiently pollinate flower plants and food crops in indoor farms.

Hu, Assistant Professor Shreyas Kousik of the George W. Woodruff School of Mechanical Engineering, and a rotating group of student interns have developed a robot prototype that may be up to the task.

The robot can efficiently pollinate plants that have both male and female reproductive parts. These plants only require pollen to be transferred from one part to the other rather than externally from another flower.

Natural pollinators perform this task outdoors, but Hu said indoor farmers often use a paintbrush or electric tootbrush to ensure these flowers are pollinated.

Knowing the Pose

An early challenge the research team addressed was teaching the robot to identify the “pose” of each flower. Pose refers to a flower’s orientation, shape, and symmetry. Knowing these details ensures precise delivery of the pollen to maximize reproductive success.

“It’s crucial to know exactly which way the flowers are facing,” Hu said.

“You want to approach the flower from the front because that’s where all the biological structures are. Knowing the pose tells you where the stem is. Our device grasps the stem and shakes it to dislodge the pollen.

“Every flower is going to have its own pose, and you need to know what that is within at least 10 degrees.”

Computer Vision Breakthrough

Harsh Muriki is a robotics master’s student at Georgia Tech’s School of Interactive Computing, who used computer vision to solve the pose problem while interning for Hu and GTRI.

Muriki attached a camera to a FarmBot to capture images of strawberry plants from dozens of angles in a small garden in front of Georgia Tech’s Food Processing Technology Building. The FarmBot is an XYZ-axis robot that waters and sprays pesticides on outdoor gardens, though it is not capable of pollination.

“We reconstruct the images of the flower into a 3D model and use a technique that converts the 3D model into multiple 2D images with depth information,” Muriki said. “This enables us to send them to object detectors.”

Muriki said he used a real-time object detection system called YOLO (You Only Look Once) to classify objects. YOLO is known for identifying and classifying objects in a single pass.

Ved Sengupta, a computer engineering major who interned with Muriki, fine-tuned the algorithms that converted 3D images into 2D.

“This was a crucial part of making robot pollination possible,” Sengupta said. “There is a big gap between 3D and 2D image processing.

“There’s not a lot of data on the internet for 3D object detection, but there’s a ton for 2D. We were able to get great results from the converted images, and I think any sector of technology can take advantage of that.”

Sengupta, Muriki, and Hu co-authored a paper about their work that was accepted to the 2025 International Conference on Robotics and Automation (ICRA) in Atlanta.

Measuring Success

The pollination robot, built in Kousik’s Safe Robotics Lab, is now in the prototype phase.

Hu said the robot can do more than pollinate. It can also analyze each flower to determine how well it was pollinated and whether the chances for reproduction are high.

“It has an additional capability of microscopic inspection,” Hu said. “It’s the first device we know of that provides visual feedback on how well a flower was pollinated.”

For more information about the robot, visit the Safe Robotics Lab project page.

News Contact

Nathan Deen

College of Computing

Georgia Tech

Jan. 29, 2026

While not as highlight-reel worthy as the Winter Olympics and the World Cup, experts expect high-performance computing (HPC) to have an even bigger impact on daily life in 2026.

Georgia Tech researchers say HPC and artificial intelligence (AI) advances this year are poised to improve how people power their homes, design safer buildings, and travel through cities.

According to Qi Tang, scientists will take progressive steps toward cleaner, sustainable energy through nuclear fusion in 2026.

“I am very hopeful about the role of advanced computing and AI in making fusion a clean energy source,” said Tang, an assistant professor in the School of Computational Science and Engineering (CSE).

“Fusion systems involve many interconnected processes happening across different scales. Modern simulations, combined with data-driven methods, allow us to bring these pieces together into a unified picture.”

Tang’s research connects HPC and machine learning with fusion energy and plasma physics. This year, Tang is continuing work on large-scale nuclear fusion models.

Only a few experimental fusion reactors exist worldwide compared to more than 400 nuclear fission reactors. Tang’s work supports a broader effort to turn fusion from a promising idea into a practical energy source.

Nuclear fusion occurs in plasma, the fourth state of matter, where gas is heated to millions of degrees. In this extreme state, electrons are stripped from atoms, creating a hot soup of fast-moving ions and free electrons. In plasma, hydrogen atoms overcome their natural electrical repulsion, collide, and fuse together. This releases energy that can power cities and homes.

Computers interpret extreme temperatures, densities, pressures, and plasma particle motion as massive datasets. Tang works to assimilate these data types from computer models and real-world experiments.

To do this, he and other researchers rely on machine learning approaches to analyze data across models and experiments more quickly and to produce more accurate predictions. Over time, this will allow scientists to test and improve fusion reactor designs toward commercial use.

Beyond energy and nuclear engineering, Umar Khayaz sees broader impacts for HPC in 2026.

“HPC is the need of the day in every field of engineering sciences, physics, biology, and economics,” said Khayaz, a CSE Ph.D. student in the School of Civil and Environmental Engineering.

“HPC is important enough to say that we need to employ resources to also solve social problems.”

Khayaz studies dynamic fracture and phase-field modeling. These areas explore how materials break under sudden, rapid loads.

Like nuclear fusion, Khayaz says dynamic fracture problems are complex and data-intensive. In 2026, he expects to see more computing resources and computational capabilities devoted to understanding these problems and other emerging civil engineering challenges.

CSE Ph.D. student Yiqiao (Ahren) Jin sees a similar relationship between infrastructure and self-driving vehicles. He believes AI will innovate this area in 2026.

At Georgia Tech, Jin develops efficient multimodal AI systems. An autonomous vehicle is a multimodal system that uses camera video, laser sensors, language instructions, and other inputs to navigate city streets under changing scenarios like traffic and weather patterns.

Jin says multimodal research will move beyond performance benchmarks this year. This shift will lead to computer systems that can reason despite uncertainty and explain their decisions. In result, engineers will redefine how they evaluate and deploy autonomous systems in safety-critical settings.

“Many foundational problems in perception, multimodal reasoning, and agent coordination are being actively addressed in 2026. These advances enable a transition from isolated autonomous systems to safer, coordinated autonomous vehicle fleets,” Jin said.

“As these systems scale, they have the potential to fundamentally improve transportation safety and efficiency.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Pagination

- Page 1

- Next page