Mar. 11, 2026

A new study by EPIcenter affiliate Jamal Mamkhezri examines how public preferences for solar‑energy policy have shifted over a six‑year period in New Mexico, offering one of the first long‑term repeated cross‑section analyses of willingness to pay (WTP) for renewable‑energy attributes. Using identical discrete choice experiment (DCE) tasks from surveys conducted in 2017 and 2023, Professor Mamkhezri evaluates how households value increases in Renewable Portfolio Standards (RPS), changes in rooftop versus utility‑scale solar shares, monthly credit‑banking rules, water usage in electricity generation, and smart‑meter information delivery options.

Across more than 1,100 combined respondents, the study uncovers selective temporal stability in energy preferences. Some attributes—such as support for higher RPS targets, reductions in water use, and preferences for online smart‑meter information—remain relatively stable over time. In contrast, others shift considerably: WTP for increasing the rooftop solar share declines by more than 40%, while WTP to protect monthly credit banking rises more than 200%, reflecting heightened awareness of net‑metering debates and rapid growth in rooftop solar adoption.
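
WTP figures like these are derived from the DCE estimates. In the textbook conditional logit setup, marginal WTP for an attribute is its utility coefficient divided by the negative of the cost coefficient. The sketch below assumes that simple specification; the study may use a richer model, such as mixed logit. The coefficients are invented for illustration and are not taken from the paper, and `wtp` and `pct_change` are hypothetical helper names.

```python
def wtp(beta_attr: float, beta_cost: float) -> float:
    """Marginal willingness to pay for a one-unit increase in an attribute.

    Assumes a linear-in-parameters conditional logit utility
    U = beta_cost * cost + beta_attr * attr + ..., so the cost change that
    leaves utility unchanged is -beta_attr / beta_cost (same units as cost).
    """
    if beta_cost == 0:
        raise ValueError("cost coefficient must be nonzero")
    return -beta_attr / beta_cost


def pct_change(old: float, new: float) -> float:
    """Percentage change in WTP from one survey wave to the next."""
    return (new - old) / abs(old) * 100.0


# Illustrative coefficients only (NOT estimates from the study).
wtp_wave1 = wtp(beta_attr=0.50, beta_cost=-0.10)  # about 5.0 per unit
wtp_wave2 = wtp(beta_attr=0.28, beta_cost=-0.10)  # about 2.8 per unit
print(f"change between waves: {pct_change(wtp_wave1, wtp_wave2):.0f}%")
```

A negative cost coefficient (higher bills lower utility) yields a positive WTP, and comparing WTP across waves, rather than raw coefficients, sidesteps differences in the overall scale of the two models.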

Importantly, the study reveals that environmental attitudes, measured through New Ecological Paradigm (NEP) scores, once strongly predicted preferences for rooftop solar and smart‑meter technologies in 2017, but these relationships fade or even reverse by 2023—signaling a shift as these technologies transition from niche, identity‑driven goods to mainstream infrastructure. Meanwhile, environmental attitudes continue to robustly shape preferences for RPS increases and water‑use reductions in both survey waves.

News Contact

Gil Gonzalez, EPIcenter.

Mar. 24, 2026

A recent review by EPIcenter faculty affiliate Constance Crozier (School of Industrial and Systems Engineering, Georgia Institute of Technology) and Matthew Liska (School of Physics, Georgia Institute of Technology) explores the growing role of data centers in providing grid flexibility (the ability to shift or reduce electricity use in response to grid conditions) as renewable energy penetration and AI-driven computing demand surge. The authors highlight that data centers, particularly those supporting high-performance computing and AI workloads, are projected to consume nearly 10% of U.S. electricity by the end of the decade, presenting both challenges and opportunities for grid stability.

The paper examines various strategies for enhancing the flexibility of data center energy use. One approach is to use backup power systems, such as uninterruptible power supplies, to support the grid during emergencies. Another involves rerouting computing jobs to data centers in other locations to balance energy demand. The authors also discuss smart scheduling techniques that shift workloads to off-peak hours, reducing strain on the grid. Additionally, they highlight lowering CPU (central processing unit) and GPU (graphics processing unit) clock rates to limit power consumption when needed. Finally, the paper suggests pre-cooling data center equipment to reduce the energy required for cooling during peak demand periods. Notably, experimental evidence shows that underclocking GPUs can cut power consumption by 40% with only a 22% performance loss, suggesting that such demand-response interventions are technically feasible.
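
The 40% and 22% figures also say something about total energy, not just peak power. Under the simplifying assumption that a 22% performance loss means a fixed job runs 1/0.78 times as long at a steady, reduced power draw, the energy per job works out as below. `relative_energy_per_job` is a hypothetical helper, and real GPU power draw is not perfectly constant over a job.

```python
def relative_energy_per_job(power_cut: float, perf_loss: float) -> float:
    """Energy for a fixed job after underclocking, relative to baseline.

    power_cut: fractional drop in power draw (0.40 means 40% lower).
    perf_loss: fractional drop in throughput (0.22 means 22% slower), so a
    fixed amount of work takes 1 / (1 - perf_loss) times as long.
    Assumes power stays constant at the reduced level for the whole job.
    """
    if not (0 <= power_cut < 1 and 0 <= perf_loss < 1):
        raise ValueError("fractions must be in [0, 1)")
    relative_power = 1 - power_cut
    relative_runtime = 1 / (1 - perf_loss)
    return relative_power * relative_runtime


ratio = relative_energy_per_job(power_cut=0.40, perf_loss=0.22)
print(f"runtime: {1 / (1 - 0.22):.2f}x baseline")
print(f"energy per job: {ratio:.3f} of baseline")
```

Under those assumptions, peak draw falls 40% while the job runs about 28% longer and uses roughly 23% less energy in total.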

Despite these technical options, the authors find that real-world cost considerations and reliability concerns limit widespread adoption. Data center operators generally do not change their behavior in response to electricity prices, as job revenue far outweighs energy costs under normal conditions. For example, a GPU rented at $2 per hour consumes only $0.04 worth of electricity at average prices, making curtailment unattractive except during extreme price spikes. Surveys indicate that operators are reluctant to compromise reliability or deploy backup systems for ancillary services. Consequently, price-based incentives alone are unlikely to drive meaningful flexibility.
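
The $2 versus $0.04 comparison can be turned into a rough breakeven point. Assuming electricity cost scales linearly with price and that paused work is simply lost rather than rescheduled, pausing a GPU-hour only pays once electricity costs about 50 times its average price. The helper name is hypothetical; the two inputs are the figures quoted in the review.

```python
def breakeven_price_multiple(revenue_per_hour: float, energy_cost_per_hour: float) -> float:
    """Multiple of the average electricity price at which the electricity
    saved by pausing one GPU-hour equals the rental revenue lost.

    Assumes energy cost scales linearly with price and that paused work
    is forfeited, not deferred to a cheaper hour.
    """
    if energy_cost_per_hour <= 0:
        raise ValueError("energy cost must be positive")
    return revenue_per_hour / energy_cost_per_hour


revenue = 2.00      # $ per GPU-hour (figure quoted in the review)
energy_cost = 0.04  # $ of electricity per GPU-hour at average prices (quoted)

print(f"electricity as share of revenue: {energy_cost / revenue:.0%}")
print(f"breakeven: {breakeven_price_multiple(revenue, energy_cost):.0f}x the average price")
```

If paused work can instead be rescheduled, the lost revenue shrinks and the breakeven multiple drops, which is one reason scheduling-based flexibility may be easier to unlock than outright curtailment.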

Read more on the EPIcenter Webpage

Listen to a podcast on the research here

News Contact

Gilbert Gonzalez, EPIcenter

Mar. 31, 2026

While people use search engines, chatbots, and generative artificial intelligence tools every day, most don’t know how they work. This sets unrealistic expectations for AI and leads to misuse. It also slows progress toward building new AI applications.

Georgia Tech researchers are making AI easier to understand through their work on Transformer Explainer. The free, online tool shows non-experts how ChatGPT, Claude, and other large language models (LLMs) process language.

Transformer Explainer is easy to use and runs on any web browser. It quickly went viral after its debut, reaching 150,000 users in its first three months. More than 563,000 people worldwide have used the tool so far.

Global interest in Transformer Explainer continues as the team prepares to present the tool at the 2026 Conference on Human Factors in Computing Systems (CHI 2026). CHI, the world’s most prestigious conference on human-computer interaction, will take place in Barcelona, April 13-17.

“There are moments when LLMs can seem almost like a person with their own will and personality, and that misperception has real consequences. For example, there have been cases where teenagers have made poor decisions based on conversations with LLMs,” said Ph.D. student Aeree Cho.

“Understanding that an LLM is fundamentally a model that predicts the probability distribution of the next token helps users avoid taking its outputs as absolute. What you put in shapes what comes out, and that understanding helps people engage with AI more carefully and critically.”

A transformer is a neural network architecture that converts an input sequence into an output sequence. Text, audio, and images can all be processed as sequences, which is why transformers are common in generative AI models. Transformers learn context by tracking mathematical relationships between the components of a sequence.
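
The mechanism transformers use to track relationships between sequence components is attention. Below is a minimal pure-Python sketch of scaled dot-product attention on a toy three-token sequence. It is a simplification: real transformers first multiply each token embedding by learned query, key, and value matrices and run many attention heads in parallel, whereas this sketch uses the toy vectors directly. The numbers and function names are hypothetical.

```python
import math


def softmax(xs):
    """Convert a list of scores into probabilities that sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over a short sequence.

    Each token's query is compared with every token's key; the softmaxed
    scores say how much each position draws on every other position, and
    the output is the matching weighted mix of the value vectors.
    """
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        mixed = [sum(w * v[j] for w, v in zip(weights, values))
                 for j in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Toy 2-dimensional vectors for a 3-token sequence (hypothetical numbers).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, w = attention(x, x, x)  # self-attention: all three roles use the same tokens
for row in w:
    print([round(p, 2) for p in row])
```

Each printed row is one token's attention weights over the whole sequence; these per-token weight patterns are the kind of information attention visualizations display.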

Transformer Explainer demystifies how transformers work. The platform uses visualization and interaction to show, step by step, how text flows through a model and produces predictions.

Using this approach, Transformer Explainer impacts the AI landscape in four main ways:

- It counters hype and misconceptions surrounding AI by showing how transformers work.

- It improves AI literacy among users by removing technical hurdles and lowering the barrier to entry for learning about AI.

- It expands AI education by helping instructors teach AI mechanisms without extensive setup or computing resources.

- It influences future development of AI tools and educational techniques by providing a blueprint for interpretable AI systems.

“When I first learned about transformers, I felt overwhelmed. A transformer model has many parts, each with its own complex math. Existing resources typically present all this information at once, making it difficult to see how everything fits together,” said Grace Kim, a dual B.S./M.S. computer science student.

“By leveraging interactive visualization, we use levels of abstraction to first show the big picture of the entire model. Then users click into individual parts to reveal the underlying details and math. This way, Transformer Explainer makes learning far less intimidating.”

Many users don’t know what transformers are or how they work. The Georgia Tech team found that people often misunderstand AI. Some label AI with human-like characteristics, such as creativity. Others even describe it as working like magic.

Furthermore, barriers make it hard for students interested in transformers to start learning. Tutorials tend to be too technical and overwhelm beginners with math and code. While visualization tools exist, these often target more advanced AI experts.

Transformer Explainer overcomes these obstacles through its interactive, user-focused platform. It runs a familiar GPT model directly in any web browser, requiring no installation or special hardware.

Users can enter their own text and watch the model predict the next word in real time. Sankey-style diagrams show how information moves through embeddings, attention heads, and transformer blocks.

The platform also lets users switch between high-level concepts and detailed math. By adjusting the temperature setting, users can see how randomness affects predictions. This reveals that AI outputs are driven by probabilities rather than creativity.
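
The idea that an LLM predicts a probability distribution over the next token, and that temperature reshapes that distribution, can be sketched in a few lines. This assumes the common convention of dividing the model's raw scores (logits) by the temperature before a softmax; the tokens and scores are made up, and the function names are hypothetical rather than Transformer Explainer's code.

```python
import math
import random


def next_token_probs(logits, temperature=1.0):
    """Turn raw scores (logits) into a next-token probability distribution.

    Dividing by the temperature before the softmax sharpens the
    distribution when temperature < 1 and flattens it when temperature > 1.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {tok: s / temperature for tok, s in logits.items()}
    m = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - m) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def sample(probs, rng):
    """Draw one token at random, weighted by its probability."""
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


# Hypothetical scores a model might assign after the prompt "The sky is".
logits = {"blue": 3.0, "clear": 2.0, "falling": 0.5}
for t in (0.5, 1.0, 2.0):
    probs = next_token_probs(logits, t)
    print(t, {tok: round(p, 2) for tok, p in probs.items()})

rng = random.Random(0)  # seeded so the draw is repeatable
print(sample(next_token_probs(logits, 1.0), rng))
```

At low temperature almost all probability lands on "blue"; at high temperature less likely continuations get sampled more often, with no creativity involved beyond the arithmetic.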

“Millions of people around the world interact with transformer-driven AI. We believe that it is crucial to bridge the gap between day-to-day user experience and the models' technical reality, ensuring these tools are not misinterpreted as human-like or seen as sentient,” said Ph.D. student Alex Karpekov.

“Explaining the architecture helps users recognize that language generated by models is a product of computation, leading to a more grounded engagement with the technology.”

Cho, Karpekov, and Kim led the development of Transformer Explainer. Ph.D. students Alec Helbling, Seongmin Lee, Ben Hoover, and alumni Zijie (Jay) Wang (Ph.D. ML-CSE 2024) and Minsuk Kahng (Ph.D. CS-CSE 2019) assisted on the project.

Professor Polo Chau supervised the group and their work. His lab focuses on data science, human-centered AI, and visualization for social good.

Acceptance at CHI 2026 stems from the team winning the best poster award at the 2024 IEEE Visualization Conference. This recognition from one of the top venues in visualization research highlights Transformer Explainer’s effectiveness in teaching how transformers work.

“Transformer Explainer has reached over half a million learners worldwide,” said Chau, a faculty member in the School of Computational Science and Engineering.

“I'm thrilled to see it extend Georgia Tech's mission of expanding access to higher education, now to anyone with a web browser.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Mar. 31, 2026

Voice-activated, conversational artificial intelligence (AI) agents must provide clear explanations for their suggestions, or older adults aren’t likely to trust them.

That’s one of the main findings from a study by AI Caring on what older adults expect from explainable AI (XAI).

AI Caring is one of three AI Institutes led by Georgia Tech and funded by the National Science Foundation (NSF). The institute supports AI research that benefits older adults and their caregivers.

Niharika Mathur, a Ph.D. candidate in the School of Interactive Computing, was the lead author of a paper based on the study. The paper will be presented in April at the 2026 ACM Conference on Human Factors in Computing Systems (CHI) in Barcelona.

Mathur worked with the Cognitive Empowerment Program at Emory University to interview 23 older adults who live alone and use voice-activated AI assistants like Amazon’s Alexa and Google Home.

Many of them told her they feel excluded from the design of these products.

“The assumption is that all people want interactions the same way and across all kinds of situations, but that isn’t true,” Mathur said. “How older people use AI and what they want from it are different from what younger people prefer.”

One example she gave is that young people tend to be informal when talking with AI. Older people, on the other hand, talk to the agent like they would a person.

“If older adults are talking to their family members about Alexa, they usually refer to Alexa as ‘she’ instead of ‘it,’” Mathur said. “They tend to humanize these systems a lot more than young people.”

Good Explanations

The study evaluated AI explanations that drew information from four sources of data:

- User history (past conversations with the agent)

- Environmental data (indoor temperature or the weather forecast)

- Activity data (how much time a user spends in different areas of the home)

- Internal reasoning (mathematical probabilities and likely outcomes)

Mathur said older users trust the agent more when it bases its explanations on data from the first three sources. However, internal reasoning creates skepticism.

The AI falls back on internal reasoning when it doesn’t have enough data from the other sources to give an explanation. Instead, it provides a percentage reflecting its confidence based on what it knows.

“The overwhelming response was negative toward confidence scores,” Mathur said. “If the AI says it’s 92% confident, older adults want to know what that’s based on.”

This is another example that Mathur said points to generational preferences.

“There’s a lot of explainable AI research that shows younger people like to see numbers in explanations, and they also tend to rely too much on explanations that contain numerical confidence. Older adults are the opposite. It makes them trust it less.”

Knowing the Context

She discovered that in urgent situations, older users prefer the AI to be straightforward, while in casual settings, they desire more conversation.

“How people interact with technological systems is grounded in what the stakes of the situation are,” she said. “If it had anything to do with their immediate sense of safety, they did not want conversational elaboration. They want the AI to be very direct and factual.”

Not Just Checking Boxes

Mathur said AI agents that interact with older adults are ideally constructed with a dual purpose. They should provide companionship and autonomy for the users while alleviating the burden of caretaking that is often placed on their family members.

Some studies have shown that engineers have tended to favor caregivers in the design of these tools. They prioritize daily tasks and routines, leaving some older adults feeling like they are merely a box to be checked.

“They’re not being thought of as consumers,” Mathur said. “A lot of products are being made for them but not with them.”

She also said psychological well-being is one of the most important outcomes these tools should produce.

Showing older adults that they are being heard can go a long way toward earning their trust. Some interviewees told Mathur they want agents that are deliberate about understanding their preferences and don’t dismiss their questions.

Meeting these needs makes older adults less likely to protest and come into conflict with family members.

“It highlights just how important well-designed explanations are,” she said. “We must go beyond a transparency checklist.”

News Contact

Nathan Deen

College of Computing

Georgia Tech

Mar. 30, 2026

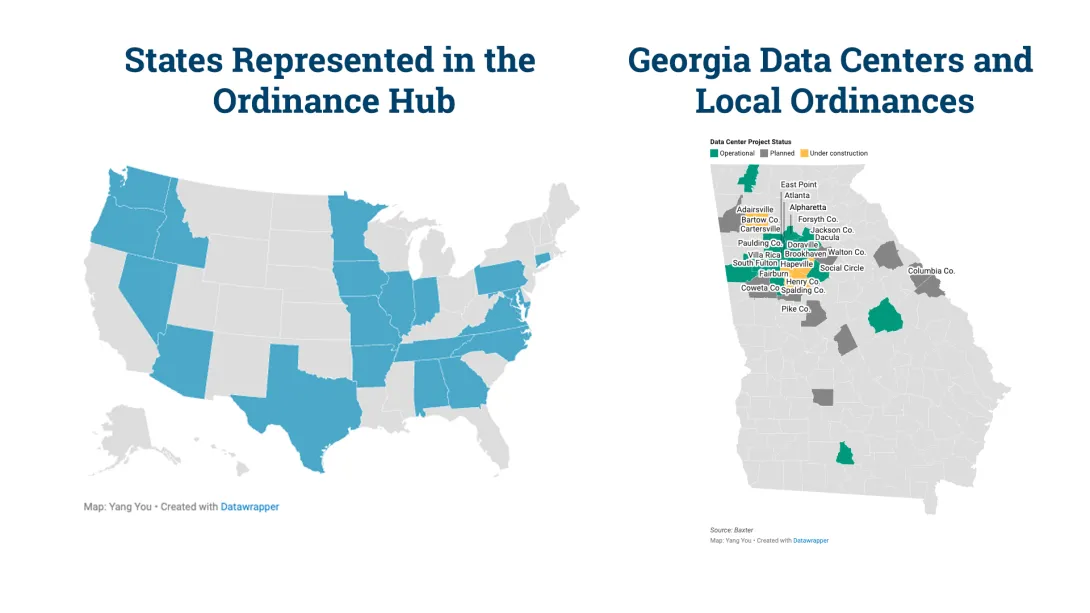

The Energy Policy and Innovation Center (EPIcenter) at Georgia Tech has launched an interactive tool to help communities navigate the dynamic land-use and policy landscape surrounding data center development: the Georgia Data Center Ordinance Hub.

As new data centers continue to be built and proposed in Georgia, counties and municipalities across the state are considering how to guide this growth. EPIcenter’s data center dashboard provides policymakers, planners, researchers, and community stakeholders with a centralized resource to better understand how data center regulations are being developed and applied across Georgia and the U.S.

“Our Data Center Hub provides Georgia communities with a one-stop shop to understand how their neighbors are managing land-use regulations for data centers,” said Laura Taylor, director of EPIcenter. “It brings together clear, accessible information to help jurisdictions plan when data center growth occurs in their area.”

The dashboard is organized around five thematic areas commonly addressed in data center land-use regulations: Site Planning and Building Design, Infrastructure and Utilities, Environmental and Community Protections, Public Safety and Security, and Lifecycle Governance. Within each theme, users can explore specific regulatory topics and access the relevant ordinances enacted by Georgia communities.

To build the dashboard, EPIcenter researchers conducted a comprehensive review of municipal codes across the state.

“We reviewed municipal codes for about 180 cities and counties across Georgia and identified ordinances that specifically address data center development,” said Yang You, the EPIcenter research associate who developed the project. “In total, we found 19 data center-specific topics that ordinances tend to cover. We analyzed ordinances across jurisdictions and organized their provisions into topics such as building placement, setbacks, infrastructure, and environmental considerations to make it easier to compare how different jurisdictions regulate data centers.”

You added that the dashboard also incorporates examples from outside of Georgia. By gathering ordinances from other states and pairing them with Georgia-specific examples, EPIcenter aims to provide a clear framework to help communities efficiently address data center land-use regulation.

The Georgia Data Center Ordinance Hub is available through the Energy Policy and Innovation Center website.

News Contact

Priya Devarajan || SEI Communications Program Manager

Mar. 25, 2026

Whether it’s a fire or a flood, a ship’s crew can only rely on itself and its training in emergencies at sea. The same is true for crews facing digital threats on oil tankers, cargo ships, and other commercial vessels.

New cybersecurity research from the Georgia Institute of Technology, however, revealed that crews aboard commercial vessels were often unprepared to manage cyberattacks because of systemic training gaps.

The findings are based on interviews conducted by researchers with more than 20 officer-level mariners to assess the maritime industry’s readiness to handle cybersecurity attacks at sea.

“Historically, cybersecurity research has focused heavily on cyber-physical systems like cars, factories, and industrial plants, but ships have largely been overlooked,” said Anna Raymaker, Ph.D. student and lead researcher.

“That gap is concerning when more than 90% of the world’s goods travel by sea. Recent incidents, from GPS spoofing to ships linked to subsea cable disruptions, show that maritime systems are increasingly part of the global cyber threat landscape.”

The researchers proposed four practical strategies to strengthen maritime cyber defenses and close the training gaps. Their findings were presented recently at the ACM SIGSAC Conference on Computer and Communications Security (CCS).

1. Make Cybersecurity Training Actually Maritime

Many of those interviewed for the study described current cybersecurity training as “boilerplate” — generic modules that don’t reflect real shipboard risks.

Researchers recommend:

- Role-specific instruction: Navigation officers should learn to detect and identify GPS spoofing. Engineers should focus on vulnerabilities in remotely monitored systems.

- Bridging IT and Operational Technology: Crews need to understand how attacks on IT systems can trigger physical consequences in operational technology — including collisions, groundings, or explosions.

- Hands-on delivery: Replace passive PowerPoints with drills and in-person exercises that build muscle memory.

- Accessible standards: Training must account for the wide range of educational backgrounds across crews and be standardized across ranks.
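
One simple cue a navigation officer can check for GPS spoofing is whether consecutive position fixes imply a speed the ship cannot reach. The sketch below is a hypothetical illustration of that plausibility check, not the researchers’ method: it uses the haversine great-circle formula with a mean Earth radius of 6,371 km (about 3,440 nautical miles), and the function names, track, and speed limit are invented. A careful spoofer can drift the fake position gradually, so a check like this catches only crude jumps.

```python
import math

EARTH_RADIUS_NM = 3440.065  # mean Earth radius (6,371 km) in nautical miles


def haversine_nm(lat1, lon1, lat2, lon2):
    """Great-circle distance between two positions, in nautical miles."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_NM * math.asin(math.sqrt(a))


def flag_implausible_jumps(fixes, max_speed_knots):
    """Return indices of fixes implying the ship moved faster than it can.

    fixes: list of (timestamp_seconds, lat_deg, lon_deg) in time order.
    """
    flagged = []
    for i in range(1, len(fixes)):
        t0, lat0, lon0 = fixes[i - 1]
        t1, lat1, lon1 = fixes[i]
        hours = (t1 - t0) / 3600
        if hours <= 0:
            flagged.append(i)  # non-increasing timestamps are suspicious too
            continue
        speed_knots = haversine_nm(lat0, lon0, lat1, lon1) / hours
        if speed_knots > max_speed_knots:
            flagged.append(i)
    return flagged


# Hypothetical track: two fixes one minute apart, then a ~30 nm "teleport".
track = [(0, 36.0, -5.0), (60, 36.005, -5.0), (120, 36.5, -5.0)]
print(flag_implausible_jumps(track, max_speed_knots=25))  # prints [2]
```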

2. Move Beyond “Call IT”

At sea, crews can’t simply escalate a cyber incident to a shore-based IT department and wait. Operational resilience requires onboard readiness.

Researchers recommend:

- Vessel-specific response plans: Ships need clear, actionable protocols for threats such as AIS jamming or radar manipulation.

- Military-style drills: Adopting EMCON (Emission Control) exercises, used by the U.S. Military Sealift Command, can train crews to operate safely without electronic systems.

- Stronger connectivity controls: High-bandwidth satellite systems like Starlink introduce new risks. Clear policies and network segregation are essential to prevent new entry points for attackers.

Related Article: When GPS lies at sea: How electronic warfare is threatening ships and their crews by Anna Raymaker

3. Create Unified, Ship-Specific Regulations

Maritime cybersecurity regulations are often reactive and fragmented. Researchers argue the industry needs a cohesive, domain-specific framework.

Key recommendations include:

- A unified global model: Like the energy sector’s NERC CIP standards, a maritime framework could mandate baseline controls such as encryption, network segmentation, and anonymous incident reporting.

- Rules built for real crews: Regulations designed for large naval operations don’t translate well to smaller merchant or research vessels. Standards must reflect actual shipboard conditions.

- Future-proofing requirements: Autonomous ships and remotely operated vessels expand the cyber-physical attack surface. Regulations must proactively address these emerging technologies.

4. Invest in Maritime-Specific Cyber Research

Finally, the researchers stress that long-term resilience requires deeper technical research focused on maritime systems.

Priority areas include:

- Real-time intrusion detection systems tailored to shipboard protocols.

- Proactive security risk assessments of interconnected onboard systems.

- Cyber-physical modeling to better understand cascading failures in complex maritime environments.
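
Shipboard navigation equipment commonly exchanges data as NMEA 0183 sentences, which carry a two-hex-digit checksum: the XOR of every character between the leading `$` (or `!`) and the `*`. Validating it is one of the most basic rules an onboard monitor could apply, and it also shows why dedicated intrusion detection is needed: the checksum catches corruption, but anyone can recompute it, so it offers no authentication. The function names below are hypothetical, and this is an illustrative building block, not a system proposed in the paper.

```python
from functools import reduce


def nmea_checksum(body: str) -> str:
    """XOR of every character between the leading '$'/'!' and the '*'."""
    return f"{reduce(lambda acc, ch: acc ^ ord(ch), body, 0):02X}"


def nmea_sentence_ok(sentence: str) -> bool:
    """True if the sentence is well formed and its checksum matches.

    Note: a matching checksum proves integrity against accidental errors
    only; an attacker can forge a sentence with a valid checksum.
    """
    sentence = sentence.strip()
    if len(sentence) < 4 or sentence[0] not in "$!" or "*" not in sentence:
        return False
    body, _, given = sentence[1:].rpartition("*")
    return given.upper() == nmea_checksum(body)


# Build a sentence with a correct checksum, then tamper with one digit.
body = "GPGLL,4916.45,N,12311.12,W,225444,A"
good = f"${body}*{nmea_checksum(body)}"
bad = good.replace("4916.45", "4916.46")
print(nmea_sentence_ok(good), nmea_sentence_ok(bad))
```

Because any single-character change alters the XOR, the tampered copy fails validation, but a spoofer who recomputes the checksum would pass, which is where behavioral checks and protocol-aware intrusion detection come in.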

The Bottom Line

Cyber threats at sea are no longer hypothetical. Mariners report real-world incidents ranging from GPS spoofing to ransomware that disrupts global trade.

“Through our interviews with mariners, I saw firsthand how much dedication and pride they take in their work,” said Raymaker. “Our goal is for this research to serve as a call to action for researchers, policymakers, and industry to invest more attention in maritime cybersecurity and support the people who risk their lives every day to keep global trade, food, and energy moving.”

A Sea of Cyber Threats: Maritime Cybersecurity from the Perspective of Mariners was presented at CCS 2025. It was written by Raymaker and her colleagues, Ph.D. students Akshaya Kumar, Miuyin Yong Wong, and Ryan Pickren; Research Scientist Animesh Chhotaray, Associate Professor Frank Li, Associate Professor Saman Zonouz, and Georgia Tech Provost and Executive Vice President for Academic Affairs Raheem Beyah.

News Contact

John Popham

Communications Officer II School of Cybersecurity and Privacy

Mar. 18, 2026

After watching hundreds of mosquitoes buzzing around one of their colleagues and collecting 20 million data points, Georgia Tech and Massachusetts Institute of Technology researchers have created a mathematical model that predicts how and where female mosquitoes will fly to feast on humans.

The new study is the first to visualize mosquito flight patterns and provides hard data for improving capture and control strategies. In addition to being a nuisance, mosquitoes transmit diseases such as malaria, yellow fever, and Zika, which cause more than 700,000 deaths every year.

“It’s like a crowded bar,” said David Hu, a professor in Georgia Tech’s George W. Woodruff School of Mechanical Engineering and the School of Biological Sciences, with an adjunct appointment in the School of Physics. “Customers aren’t there because they followed each other into the bar. They’re attracted by the same cues: drinks, music, and the atmosphere. The same is true of mosquitoes. Rather than following the leader, the insect follows the signals and happens to arrive at the same spot as the others. They’re good copies of each other.”

Read more and watch:

Georgia Tech College of Engineering newsroom and The Conversation

News Contact

Jason Maderer (maderer@gatech.edu)

Mar. 17, 2026

The building blocks of proteins, amino acids are essential for all living things. Twenty different amino acids build the thousands of proteins that carry out biological tasks. While some are made naturally in our bodies, others are absorbed through the food we eat.

Amino acids are also commercially important: they are manufactured and added to pharmaceuticals, dietary supplements, cosmetics, animal feeds, and industrial chemicals. Their production, however, is energy intensive, leading to greenhouse gas emissions, resource consumption, and pollution.

A landmark new system developed at Georgia Tech could lead to an alternative: a commercially scalable, environmentally sustainable method for amino acid production that is carbon negative, consuming more carbon than it emits.

The breakthrough builds on a method that the team pioneered in 2024 and solves a key issue – increasing efficiency to an unprecedented 97% and reducing the bioprocess cost by over 40%. It’s the highest reported conversion of CO2 equivalents into amino acids using any synthetic biology system to date.

Published in the journal ACS Synthetic Biology, the study, “Cell-Free-Based Thermophilic Biocatalyst for the Synthesis of Amino Acids From One-Carbon Feedstocks,” was led by Bioengineering Ph.D. student Ray Westenberg and Professor Pamela Peralta-Yahya, who holds joint appointments in the School of Chemistry and Biochemistry and School of Chemical and Biomolecular Engineering. The team also included Shaafique Chowdhury (Ph.D. ChBE 25) and Kimberly Wennerholm (ChBE 23); alongside University of Washington collaborators Ryan Cardiff, then a Ph.D. student and now a Chain Reaction Innovations Fellow at Argonne National Laboratory, and Charles W. H. Matthaei Endowed Professor in Chemical Engineering James M. Carothers; in addition to Pacific Northwest National Laboratory Synthetic Biology Team Leader Alexander S. Beliaev.

“This work shifts the narrative from simply reducing carbon emissions to actually consuming them to create value,” says Peralta-Yahya. “We are taking low-cost carbon sources and building essential ingredients in a truly carbon-negative process that is efficient, effective, and scalable.”

Heat-Loving Organisms

The work builds on the cell-free technology the team used in their earlier study. “Previously, we discovered that a system that uses the machinery of cells, without using actual living cells, could be used to create amino acids from carbon dioxide,” Peralta-Yahya explains. “But to create a commercially viable system, we needed to increase the system’s efficiency and reduce the cost.”

The team discovered that bits of leftover cells were consuming starting materials, and — like a machine with unnecessary gears or parts — this limited the system’s efficiency. To optimize their “machine,” the team would need to remove the extra background machinery.

“Leftover cell parts were using key resources without helping produce the amino acids we were looking for,” says Peralta-Yahya. “We knew that heating the system could be one way to purify it because heat can denature these components.”

The challenge was in how to protect the essential system components from the high temperatures, she adds. “We wondered if introducing enzymes produced by a heat-loving bacterium, Moorella thermoacetica, might protect our system, while still allowing us to denature and remove that inefficient background machinery.”

The results were astounding: after introducing the enzymes, heating and “cleaning” the system, and letting it cool to room temperature, synthesis of the amino acids serine and glycine leaped to 97% yield — nearly three times that of the team’s previous system.

Scaling for Sustainability

To make the system viable for large-scale use, the team also needed to reduce costs. “One of the most costly components in this system is the cofactor tetrahydrofolate (THF),” Peralta-Yahya shares. “Reducing the amount of THF needed to start the process was one way to make the system more inexpensive and ultimately more commercially viable.”

By linking reaction steps so waste from one step fueled the next, the team devised a method to recycle THF within the system that reduces the amount of THF needed by five-fold — lowering bioprocessing costs by 42%.

“This decrease in cost and increase in yield is a critical step forward in creating a method with real potential for use in industry and manufacturing,” Peralta-Yahya says. “This system could pave the way for moving this carbon-negative technology out of the lab and onto the continuous, industrial scale.”

Funding: The Advanced Research Projects Agency-Energy (ARPA-E); U.S. Department of Energy; and the U.S. Department of Energy, Office of Science, Biological and Environmental Research Program.

Mar. 27, 2026

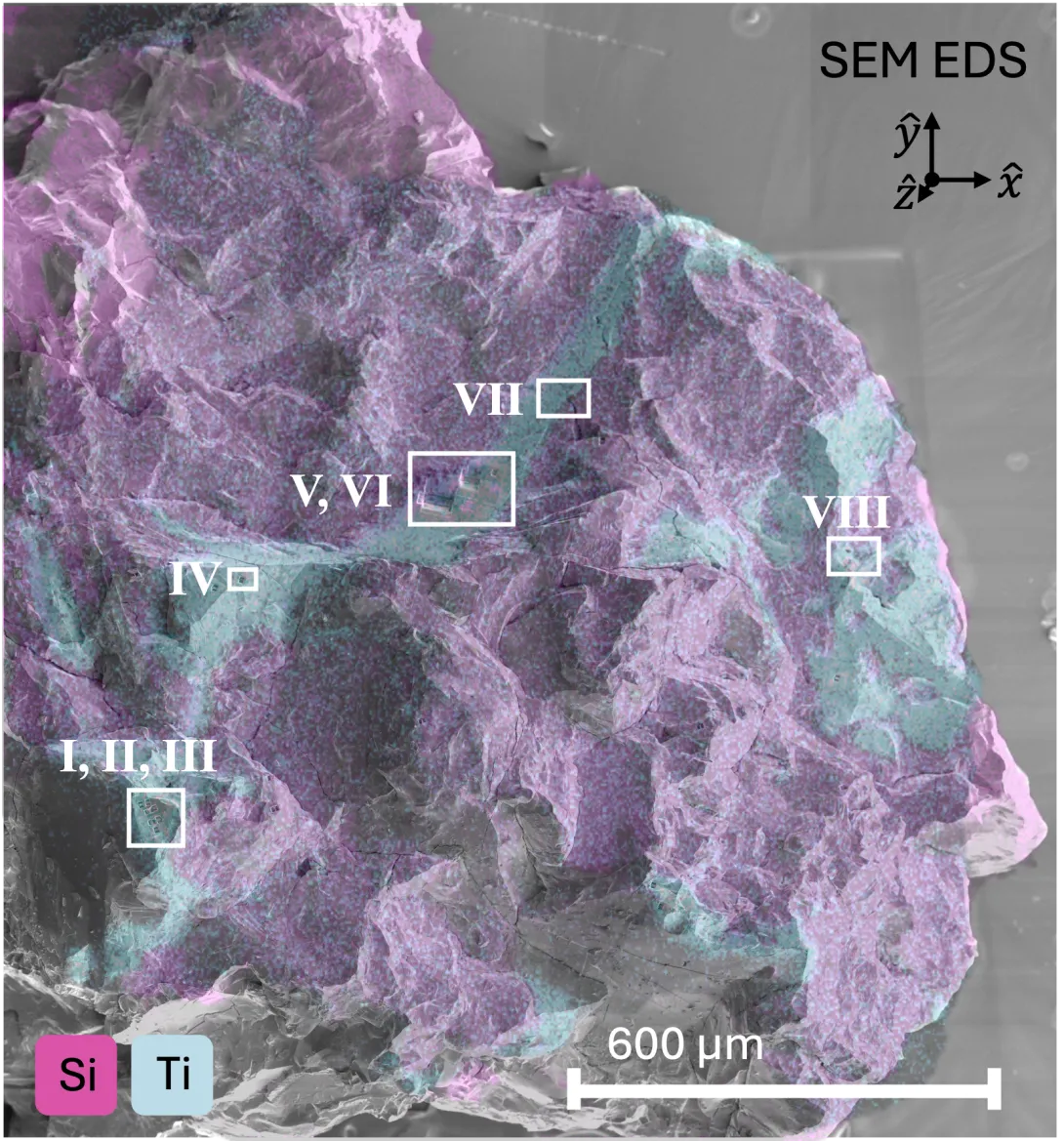

A chemical signature hidden in a 3.8‑billion‑year‑old lunar rock is offering new insights into the availability of oxygen within the young Moon.

Published today in the journal Nature Communications, the paper “Trivalent Titanium in High-Titanium Lunar Ilmenite” confirms titanium in a reduced, trivalent state in a black, metal-rich lunar mineral called ilmenite. It’s a state only possible in low-oxygen environments, conditions researchers refer to as “reducing.”

“Models have suggested that these reducing conditions may have varied at different locations and times across the surface of the Moon,” says lead author Advik Vira, a graduate student in the School of Physics who recently earned his doctoral degree. “We hope our microscopy technique can be a valuable step in mapping and understanding the Moon’s 4.5-billion-year history.”

The team anticipates that their technique could be used on many of the lunar samples collected more than 50 years ago by the Apollo missions in addition to the Apollo Next Generation Samples — a group of lunar samples that have been stored under pristine conditions — and new samples from the planned Artemis missions, with Artemis II slated for launch this spring. The technique might also be applicable to samples collected from the far side of the Moon and returned in 2024 by the Chang’e-6 mission.

“The Moon holds clues not only to its own past, but also to the earliest eras of Earth’s evolution — history that has long since been erased from our planet,” Vira says. “This study is a step toward understanding the history of both and a reminder that there is still so much left to learn from the lunar rocks we’ve brought back to Earth.”

The School of Physics research team included corresponding authors Vira and Professor Phillip First; in addition to graduate student Roshan Trivedi; undergraduate students Gabriella Dotson, Keyes Eames, Dean Kim, and Emma Livernois; and Professor Zhigang Jiang, along with Institute for Matter and Systems Materials Characterization Facility Senior Research Scientist Mengkun Tian; School of Chemistry and Biochemistry Senior Research Scientist Brant Jones and Thom Orlando, Regents' Professor in the School of Chemistry and Biochemistry with a joint appointment in the School of Physics.

The Georgia Tech team was joined by Addis Energy Senior Geochemist Katherine Burgess; Macalester College Assistant Professor of Geology Emily First; along with Lawrence Berkeley National Laboratory Research Scientist Harrison Lisabeth, Senior Scientist Nobumichi Tamura, and Postdoctoral Fellow Tyler Farr, who recently earned a Ph.D. from Georgia Tech’s George W. Woodruff School of Mechanical Engineering.

CLEVER research

The investigation began with a dark gray rock called a lunar basalt. It formed when ancient magma erupted onto the Moon’s surface; as the magma cooled, minerals crystallized, preserving key information in their structures. Billions of years later, the rock was brought to Earth by the 1972 Apollo 17 mission. A small piece is now stored at Georgia Tech’s Center for Lunar Environment and Volatile Exploration Research (CLEVER), a NASA Solar System Exploration Research Virtual Institute (SSERVI) center led by Orlando.

As a NASA virtual institute, CLEVER supports researchers exploring lunar conditions and developing tools for the upcoming crewed Artemis missions, and it provided the lunar samples for this research. CLEVER also plays a critical role in training the next generation of planetary researchers: both Vira and Farr earned their Ph.D.s while on the CLEVER team.

“At CLEVER, we are very interested in understanding the impacts of space weathering,” Vira says. “We implemented modern sample preparation and advanced microscopy techniques to image samples at the atomic level, and were curious to apply them more broadly to the collection of Apollo rocks in the Orlando Lab. This sample caught our attention.”

“When we imaged an ilmenite crystal from the lunar basalt, what struck us first was how uniform and perfect the crystal structure was,” he recalls. “We found no defects from space weathering and instead saw an undamaged, pristine crystal — undisturbed for 3.8 billion years.”

To investigate further, the team analyzed small chips of the rock with Burgess, a member of the RISE2 SSERVI team and then a geologist at the U.S. Naval Research Laboratory. Using state-of-the-art electron microscopy and spectroscopy techniques, Vira determined the oxidation states of the elements present in the ilmenite.

In spectroscopy measurements, each element leaves a distinct ‘signature,’ Vira explains. “When we brought our results back to Georgia Tech’s Materials Characterization Facility, Mengkun (Tian) noticed something unusual: the signature showed titanium might be present in the trivalent state.”

The presence of trivalent titanium had long been suspected in this lunar mineral. The team was intrigued.

A new window into old rocks

With funding from Georgia Tech’s Center for Space Technology and Research (CSTAR), Vira returned to the U.S. Naval Research Laboratory to analyze additional samples. The results confirmed that more titanium was present than the mineral’s formula (FeTiO₃) predicts — indicating a portion of the titanium present was trivalent.
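Why does extra titanium point to the trivalent state? In ideal ilmenite, iron is Fe²⁺ and titanium is Ti⁴⁺, so the cation charges (+2 and +4) exactly balance three O²⁻ ions (−6). The short sketch below illustrates that charge-balance reasoning. It is not the study's method, which relied on electron spectroscopy. It assumes, for illustration only, two cations per three oxygens, all iron as Fe²⁺, and any titanium beyond a 1:1 Ti/Fe ratio compensated by Ti³⁺. The function name and its Ti/Fe ratio input are hypothetical.

```python
def ti3_per_formula_unit(ti_per_fe: float) -> float:
    """Hypothetical helper: estimate Ti3+ per FeTiO3 formula unit from a Ti/Fe atomic ratio.

    Simplifying assumptions (illustrative, not from the study):
      - two cations per three oxygens (Fe + Ti = 2),
      - all iron is Fe2+,
      - total cation charge balances three O2- ions (+6).
    """
    if ti_per_fe <= 0:
        raise ValueError("Ti/Fe ratio must be positive")
    # Fe + Ti = 2 and Ti / Fe = r  =>  Fe = 2 / (1 + r), Ti = 2 - Fe
    fe = 2.0 / (1.0 + ti_per_fe)
    ti = 2.0 - fe
    # Charge balance: 2*Fe + 4*Ti4 + 3*Ti3 = 6, with Ti4 + Ti3 = Ti
    # => 2*Fe + 4*Ti - Ti3 = 6  =>  Ti3 = 2*Fe + 4*Ti - 6
    ti3 = 2.0 * fe + 4.0 * ti - 6.0
    # A ratio at or below 1:1 needs no Ti3+ under these assumptions
    return max(ti3, 0.0)


# Stoichiometric ilmenite (Ti/Fe = 1) needs no Ti3+; a 10% titanium excess does.
print(ti3_per_formula_unit(1.0))
print(ti3_per_formula_unit(1.1))
```

Under these assumptions, a Ti/Fe ratio of 1.1 works out to 2/21 (about 0.095) Ti³⁺ per formula unit, so roughly one titanium atom in eleven would be trivalent.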

“That led me to place our measurements in terms of the broader geological context,” Vira shares. Working with Emily First, Vira explored how ilmenite with trivalent titanium could help reconstruct the nature of ancient magmas from the Moon, especially the chemical availability of oxygen.

“Because its location on the Moon was noted during the Apollo mission, we know exactly where this rock is from, and we can determine how old the rock is,” he explains. “When coupled with our trivalent titanium measurements, we can use that information to estimate the reducing conditions for this specific region at the specific time our rock formed.”

If the upcoming Artemis missions return samples suitable for the team’s technique, these rocks could provide a new window into ancient lunar geology. The research also highlights that many lunar samples already on Earth could be reexamined to look for trivalent titanium.

“There is still so much to learn from the lunar samples we have already brought to Earth,” Vira says. “It’s a testament to the long-term value of each sample return mission. As technology continues to advance, this type of work will continue to give us critical insights into our planet and our place in the universe for years to come.”

DOI: 10.1038/s41467-026-69770-w

Funding: This work was directly supported by the NASA SSERVI under CLEVER. Researchers were also supported by the NASA RISE2 SSERVI and the Heising-Simons Foundation. Funding for collaborations between the U.S. Naval Research Laboratory and Georgia Tech for the investigation of lunar minerals was provided by the Georgia Tech Center for Space Technology and Research. Sample preparation was performed at the Georgia Tech Institute for Matter and Systems, which is supported by the National Science Foundation. This work utilized the resources of the Advanced Light Source, a user facility supported by the U.S. Department of Energy, Office of Science, Office of Basic Energy Sciences, and was supported in part by previous breakthroughs obtained through the Laboratory Direct.

Mar. 06, 2026

Georgia Tech Energy Day returns this year on March 19 with an expanded focus and new collaborative momentum. Cohosted by the Georgia Tech Institute for Matter and Systems (IMS) and the Strategic Energy Institute (SEI), with plenary session support from the Energy Policy and Innovation Center, Energy Day 2026 convenes leaders from academia, industry, and government, along with students, to address the challenges of meeting the rapidly growing electricity demand driven by artificial intelligence (AI) and high-performance computing.

Set in the heart of Tech Square on the Georgia Tech campus, this year’s event explores how energy systems, materials, technologies, supply chains, and policy must evolve in response to AI’s accelerating impact. As digital infrastructure expands and computation intensifies, the need for reliable, resilient, and sustainable power has never been more urgent.

“Energy Day reflects Georgia Tech’s strength in connecting world-class research in materials and components with the infrastructure and partnerships needed to translate discovery into scalable energy technologies that serve industry, society, and the future economy,” said Eric Vogel, executive director of the IMS and the Hightower Professor in Materials Science and Engineering.

Energy Day 2026 also marks an important milestone with the introduction of its first group of corporate sponsors: GE Vernova, Southern Company, Georgia Power, ExxonMobil, Southwire Spark, Gems Setra, and Tektronix. Their support reflects a shared commitment to advancing energy solutions.

“Tektronix is excited to be part of Energy Day because advancing the future of energy starts with precise measurement and trusted insights,” said Christopher Bohn, president of Tektronix. “From power electronics and high-voltage systems to grid-scale renewables and AI-driven control technologies, the breakthroughs discussed here directly align with the innovations we support through our products and solutions. Collaborating with Georgia Tech allows us to engage early with emerging research and the next generation of engineers, critical collaborators in building a cleaner, smarter, and more resilient energy ecosystem.”

The keynote address will be delivered by Vanessa Z. Chan, a nationally recognized leader at the intersection of innovation, commercialization, and emerging technologies. Chan will provide insights on accelerating technological discovery, emphasizing how AI is transforming energy and materials design. She will discuss how commercialization strategies must rapidly evolve across multidisciplinary energy domains from grid modernization to advanced batteries and clean manufacturing.

Building on the themes introduced in the keynote, the program transitions into a fireside chat with Tim Lieuwen, Georgia Tech's executive vice president for research, featuring Amit Kulkarni and Jim Walsh. Kulkarni is vice president of Product Management and Strategy for the Gas Power business within GE Vernova, where he oversees the world’s largest portfolio of power generation equipment. Walsh, vice president of GE Vernova’s Consulting Services, leads teams providing innovative solutions across the full spectrum of power generation, delivery, and utilization.

Next comes a policy-focused panel that will explore the surge in power demand driven by AI, how the United States is addressing today’s most urgent energy challenges, and the long-term implications of today’s decisions for a sustainable energy future. Bringing together leading voices in U.S. environmental and energy policy, the panel features Joe Aldy of Harvard University and former special assistant to the president for Energy and Environment; Al McGartland of New York University’s Institute for Policy Integrity and former Environmental Protection Agency lead economist and director of the National Center for Environmental Economics; and Kevin Rennert, fellow and director of the Comprehensive Climate Strategies Program at Resources for the Future and former staff member on the U.S. Senate Committee on Energy and Natural Resources.

The second panel focuses on critical materials — the foundation of advanced energy systems and digital technologies. As AI, data centers, and advanced energy technologies drive demand for critical materials, securing them now requires integration and coordination across the entire value chain. Panelists include Rachel Galloway, British consul general in Atlanta; Vijay Murugesan, head of Materials Intelligence and Digital Innovation at Amazon; Colin Spellmeyer, executive strategic sourcing leader at GE Vernova; Charles Sims, Tennessee Valley Authority Distinguished Professor of Energy and Environmental Policy at the University of Tennessee; and Nortey Yeboah, principal engineer at Southern Company. Together, they will offer perspectives on the policy and economic frameworks shaping the energy supply chain, from developing raw resources to manufacturing the technologies essential to future energy systems.

In the afternoon, participants can dive deeper into specialized topics through three focused technical tracks.

- “Meeting the Demand for Power” will examine how emerging technologies, advanced nuclear systems, and renewable integration can work together to deliver reliable, resilient electricity.

- “Data Center Infrastructure and Resources” will explore innovations in thermal management technologies, energy-efficient computing, and the broader resource impacts of expanding digital infrastructure.

- “Grid Technologies and Markets” will highlight strategies for strengthening grid capacity, incorporating demand-side management, and optimizing carbon performance as energy systems evolve.

“Meeting the rapidly rising electricity demand driven by AI requires bold ideas, coordinated action, and research that moves at the speed of innovation,” said Yuanzhi Tang, executive director of the SEI. “Energy Day 2026 brings together the people and expertise needed to shape resilient, sustainable energy systems for the future. At Georgia Tech, we see this event as a catalyst for new partnerships, new solutions, and a shared commitment to strengthening the nation’s energy foundation.”

Energy Day 2026 is designed for researchers advancing emerging energy technologies, policymakers navigating shifting regulatory and geopolitical landscapes, industry professionals seeking insight into emerging tools and supply chains, and students preparing to enter one of the most consequential sectors of the decade. It also welcomes anyone interested in AI, sustainability, electrification, and critical materials.

Join us to explore the future of energy. To learn more and register, visit: Energy Day 2026.

News Contact

Priya Devarajan | Communications Program Manager
