Mar. 30, 2026

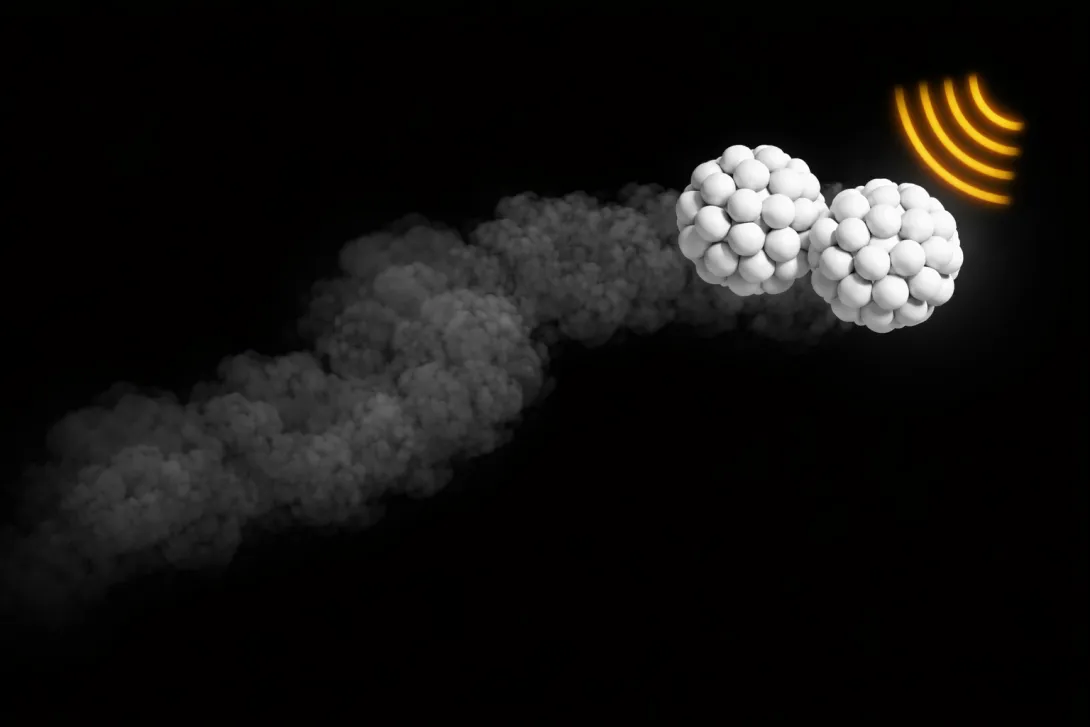

Georgia Tech researchers have created swarms of tiny robotic particles that move and self-organize using only mechanical design — no electronics, software, or sensors. By encoding behavior in each particle’s shape, the team can control how the swarm spreads and reconfigures, with potential applications in medicine and space.

Mar. 25, 2026

Georgia Tech has announced the recipients of the 2026 Institute Research Awards, honoring faculty, staff, and research teams whose work has made significant scientific, technological, and societal impact. Presented by the Office of the Executive Vice President for Research, the awards recognize excellence across six categories spanning innovation, mentorship, collaboration, engagement, and research program development and impact. This year’s honorees reflect the breadth of Georgia Tech’s research enterprise — from foundational discovery to commercialization and community partnerships — and will be recognized at the Faculty and Staff Honors Luncheon on April 24.

Mar. 19, 2026

Robots are increasingly learning new skills by watching people. From folding laundry to handling food, many real-world, humanlike tasks are too nuanced to be efficiently programmed step by step.

With imitation learning, humans demonstrate a task and robots learn to copy what they see through cameras and sensors. While at the leading edge of robotics research, this approach is limited by a major constraint: Robots can only work as fast as the people who taught them.

Now, Georgia Tech researchers have created a tool that smashes that speed barrier. The system allows robots to execute complex tasks significantly faster than human demonstrations while maintaining precision, control, and safety.

The team addresses a central challenge in modern robotics: how to combine the flexibility of learning from humans with the speed and reliability required for real-world deployment. The technology could lead to wider adoption of imitation learning in industrial and household applications and even enable robots to execute humanlike tasks better than ever before.

“The thing we’re trying to create — and I would argue industry is also trying to create — is a general-purpose robot that can do any task that human hands can do,” said Shreyas Kousik, assistant professor in the George W. Woodruff School of Mechanical Engineering and a co-lead author on the study. “To make that work outside the lab, speed really matters.”

The new tool, SAIL (Speed Adaptation for Imitation Learning), was born out of a cross-campus, interdisciplinary collaboration that brought together expertise in mechanical engineering, robotics systems, and machine learning. The research team includes Kousik; Benjamin Joffe, senior research scientist at the Georgia Tech Research Institute; and Danfei Xu, assistant professor in the School of Interactive Computing, along with graduate students and researchers from multiple labs.

Speed Without Sacrifice

Teaching robots to work faster than the speed of human demonstrations is challenging. Robots can behave differently at higher speeds, and small changes in the environment can cause errors.

“The challenge is that a robot is limited to the data it was trained on, and any changes in the environment can cause it to fail,” Kousik said.

SAIL addresses this challenge through a modular approach, with separate components working together to accelerate beyond the training data. The system keeps motions smooth at high speed, tracks movements accurately, adjusts speed dynamically based on task complexity, and schedules actions to account for hardware delays. This combination allows robots to move quickly while staying stable, coordinated, and precise.
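One of the ideas above, adjusting speed based on task complexity, can be illustrated with a toy retiming routine. This is a minimal sketch, not SAIL's actual method: the function name `retime_demo`, the per-segment `complexity` scores, the `threshold`, and the `max_speedup` value are all hypothetical stand-ins chosen for illustration.

```python
def retime_demo(timestamps, complexity, max_speedup=4.0, threshold=0.5):
    """Compress a demonstration's timeline, segment by segment.

    timestamps: times (seconds) of the demonstrated waypoints.
    complexity: one hypothetical score in [0, 1] per segment between
                consecutive waypoints (so len(timestamps) - 1 values).
    Segments scoring below `threshold` are sped up by `max_speedup`;
    the rest keep the original demonstration pace.
    """
    if len(complexity) != len(timestamps) - 1:
        raise ValueError("need exactly one complexity score per segment")

    new_times = [timestamps[0]]
    for i, score in enumerate(complexity):
        dt = timestamps[i + 1] - timestamps[i]
        speedup = 1.0 if score >= threshold else max_speedup
        new_times.append(new_times[-1] + dt / speedup)
    return new_times


# Three one-second segments; only the middle one is treated as delicate.
fast = retime_demo([0.0, 1.0, 2.0, 3.0], [0.1, 0.9, 0.1])
print(fast)
```

In this toy case the easy segments shrink to a quarter of their duration while the delicate middle segment keeps its original one-second length.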

“One of the gaps we saw was that our academic robotics systems could do impressive things, but they weren’t fast or robust enough for practical use,” Joffe said. “We wanted to study that gap carefully and design a system that addressed it end to end.”

He added, “The goal is not just to make robots faster, but to make them smart enough to know when speed helps and when it could cause mistakes.”

The team evaluated SAIL’s performance across 12 tasks, both in simulation and on two physical robot platforms. Tasks included stacking cups, folding cloth, plating fruit, packing food items, and wiping a whiteboard. In most cases, SAIL-enabled robots completed tasks three to four times faster than standard imitation-learning systems without losing accuracy.

One exception was the whiteboard-wiping task, where maintaining contact made high-speed execution difficult.

“Understanding where speed helps and where it hurts is critical,” Kousik said. “Sometimes slowing down is the right decision.”

While SAIL does not make robots universally adaptable on its own, it represents an important step toward robotic systems that can learn from humans without being constrained by human pace.

By showing how learned robotic behaviors can be accelerated safely and systematically, SAIL brings imitation learning closer to real-world use — where speed, precision, and reliability all matter.

Citation: Ranawaka Arachchige, et al. “SAIL: Faster-than-Demonstration Execution of Imitation Learning Policies,” Conference on Robot Learning (CoRL), 2025.

DOI: https://doi.org/10.48550/arXiv.2506.11948

Funding: The authors would like to acknowledge the State of Georgia and the Agricultural Technology Research Program at Georgia Tech for supporting the work described in this paper.

Feb. 19, 2026

A new robot could solve one of the biggest challenges facing indoor farmers: manual pollination.

Indoor farms, also known as vertical farms, are popular among agricultural researchers and are expanding across the agricultural industry. Some benefits they have over outdoor farms include:

- Year-round production of food crops

- Lower water and land requirements

- No need for pesticides

- Reduced carbon emissions from shipping

- Reduced food waste

Additionally, some studies indicate that indoor farms produce more nutritious food for urban communities.

However, these farms are often inaccessible to birds, bees, and other natural pollinators, leaving the pollination process to humans. The tedious process must be completed by hand for each flower to ensure the indoor crop flourishes.

Ai-Ping Hu, a principal research engineer at the Georgia Tech Research Institute (GTRI), has spent years exploring methods to efficiently pollinate flowering plants and food crops in indoor farms.

Hu, Assistant Professor Shreyas Kousik of the George W. Woodruff School of Mechanical Engineering, and a rotating group of student interns have developed a robot prototype that may be up to the task.

The robot can efficiently pollinate plants that have both male and female reproductive parts. These plants only require pollen to be transferred from one part to the other rather than externally from another flower.

Natural pollinators perform this task outdoors, but Hu said indoor farmers often use a paintbrush or electric toothbrush to ensure these flowers are pollinated.

Knowing the Pose

An early challenge the research team addressed was teaching the robot to identify the “pose” of each flower. Pose refers to a flower’s orientation, shape, and symmetry. Knowing these details ensures precise delivery of the pollen to maximize reproductive success.

“It’s crucial to know exactly which way the flowers are facing,” Hu said.

“You want to approach the flower from the front because that’s where all the biological structures are. Knowing the pose tells you where the stem is. Our device grasps the stem and shakes it to dislodge the pollen.

“Every flower is going to have its own pose, and you need to know what that is within at least 10 degrees.”
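The 10-degree tolerance Hu mentions can be expressed as an angle check between an estimated flower-facing direction and a reference direction. The sketch below covers only the orientation part of pose and assumes both directions are given as 3D vectors. The function names and the vector representation are illustrative assumptions, not the team's code.

```python
import math


def angle_between_deg(a, b):
    """Angle in degrees between two non-zero 3D direction vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("direction vectors must be non-zero")
    # Clamp to [-1, 1] so rounding error cannot push acos out of its domain.
    cos_angle = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.degrees(math.acos(cos_angle))


def pose_within_tolerance(estimated, reference, tol_deg=10.0):
    """True if the estimated facing direction is within tol_deg of the reference."""
    return angle_between_deg(estimated, reference) <= tol_deg


# A direction tilted 5 degrees away from the reference passes the check.
tilted = (math.sin(math.radians(5)), 0.0, math.cos(math.radians(5)))
print(pose_within_tolerance(tilted, (0.0, 0.0, 1.0)))
```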

Computer Vision Breakthrough

Harsh Muriki, a robotics master’s student in Georgia Tech’s School of Interactive Computing, used computer vision to solve the pose problem while interning for Hu at GTRI.

Muriki attached a camera to a FarmBot to capture images of strawberry plants from dozens of angles in a small garden in front of Georgia Tech’s Food Processing Technology Building. The FarmBot is an XYZ-axis robot that waters and sprays pesticides on outdoor gardens, though it is not capable of pollination.

“We reconstruct the images of the flower into a 3D model and use a technique that converts the 3D model into multiple 2D images with depth information,” Muriki said. “This enables us to send them to object detectors.”
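The conversion Muriki describes, turning a 3D model into 2D images that carry depth information, can be sketched with a basic pinhole projection. This is a minimal illustration for one virtual camera view, not the team's pipeline. It assumes the 3D model is a list of points already expressed in the camera frame (x right, y down, z forward), and the focal length and image size are arbitrary example values.

```python
import math


def render_depth_image(points, width, height, focal_px):
    """Project camera-frame 3D points into a 2D depth image.

    Each pixel stores the depth (z) of the nearest point that lands on it,
    or math.inf where no point projects.
    """
    cx, cy = width / 2.0, height / 2.0  # principal point at the image center
    depth = [[math.inf] * width for _ in range(height)]
    for x, y, z in points:
        if z <= 0:
            continue  # point is behind the camera
        u = math.floor(focal_px * x / z + cx)
        v = math.floor(focal_px * y / z + cy)
        if 0 <= u < width and 0 <= v < height:
            depth[v][u] = min(depth[v][u], z)  # keep the closest surface
    return depth


# Two points on the optical axis: the nearer one (z = 1.0) wins the pixel.
image = render_depth_image(
    [(0.0, 0.0, 2.0), (0.0, 0.0, 1.0), (0.5, 0.0, 1.0)],
    width=5, height=5, focal_px=2.0,
)
print(image[2])
```

Repeating the projection from several virtual viewpoints would give multiple 2D images of the same model, each of which could then be passed to a 2D object detector.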

Muriki said he used a real-time object detection system called YOLO (You Only Look Once) to classify objects. YOLO is known for identifying and classifying objects in a single pass.

Ved Sengupta, a computer engineering major who interned with Muriki, fine-tuned the algorithms that converted 3D images into 2D.

“This was a crucial part of making robot pollination possible,” Sengupta said. “There is a big gap between 3D and 2D image processing.

“There’s not a lot of data on the internet for 3D object detection, but there’s a ton for 2D. We were able to get great results from the converted images, and I think any sector of technology can take advantage of that.”

Sengupta, Muriki, and Hu co-authored a paper about their work that was accepted to the 2025 International Conference on Robotics and Automation (ICRA) in Atlanta.

Measuring Success

The pollination robot, built in Kousik’s Safe Robotics Lab, is now in the prototype phase.

Hu said the robot can do more than pollinate. It can also analyze each flower to determine how well it was pollinated and whether the chances for reproduction are high.

“It has an additional capability of microscopic inspection,” Hu said. “It’s the first device we know of that provides visual feedback on how well a flower was pollinated.”

For more information about the robot, visit the Safe Robotics Lab project page.

News Contact

Nathan Deen

College of Computing

Georgia Tech

Jan. 05, 2026

University research drives U.S. innovation, and Georgia Institute of Technology is leading the way.

The latest Higher Education Research and Development (HERD) Survey from the National Science Foundation (NSF) places Georgia Tech as No. 2 nationally for federally sponsored research expenditures in 2024. This is Georgia Tech’s highest-ever ranking from the NSF HERD survey and a 70% increase over the Institute's 2019 numbers.

In total expenditures from all externally funded dollars (including the federal government, foundations, industry, etc.), Georgia Tech is ranked at No. 6.

Tech remains ranked No. 1 among universities without a medical school — a major accomplishment, as medical schools account for a quarter of all research expenditures nationally.

“Georgia Tech’s rise to No. 2 in federally sponsored research expenditures reflects the extraordinary talent and commitment of our faculty, staff, students, and partners. This achievement demonstrates the confidence federal agencies have in our ability to deliver transformative research that addresses the nation’s most critical challenges,” said Tim Lieuwen, executive vice president for Research.

Overall, the state of Georgia maintained its No. 8 position in university research and development, and for the first time, the state topped the $4 billion mark in research expenditures. Georgia Tech provides $1.5 billion, the largest state university contribution. In the last five years, federal funding for higher education research in the state of Georgia has grown an astounding 46% — 10 points higher than the U.S. rate.

Lieuwen said, “Georgia Tech is proud to lead the state in research contributions, helping Georgia surpass the $4 billion mark for the first time. Our work doesn’t just advance knowledge — it saves lives, creates jobs, and strengthens national security. This growth reflects our commitment to drive innovation that benefits Georgia, our country, and the world.”

About the NSF HERD Survey

The NSF HERD Survey is an annual census of U.S. colleges and universities that expended at least $150,000 in separately accounted for research and development (R&D) in the fiscal year. The survey collects information on R&D expenditures by field of research and source of funds and also gathers information on types of research, expenses, and headcounts of R&D personnel.

About Georgia Tech's Research Enterprise

The research enterprise at Georgia Tech is led by the Executive Vice President for Research, Tim Lieuwen, and directs a portfolio of research, development, and sponsored activities. This includes leadership of the Georgia Tech Research Institute (GTRI), the Enterprise Innovation Institute, 11 interdisciplinary research institutes (IRIs), Office of Commercialization, Office of Corporate Engagement, plus research centers, and related research administrative support units. Georgia Tech routinely ranks among the top U.S. universities in volume of research conducted.

News Contact

Angela Ayers

Assistant Vice President of Research Communications

Georgia Tech

Dec. 09, 2025

When we check the weather forecast, that information comes from satellites. When we FaceTime a friend, that call could come via satellites. From cellphone networks to national security systems, satellites are vital to our connected globe. Yet regulating how satellites function across borders is almost as complicated as the technology that launches them into space. Researchers in Georgia Tech’s Space Research Institute are shaping how satellites operate, both scientifically and politically.

Nov. 19, 2025

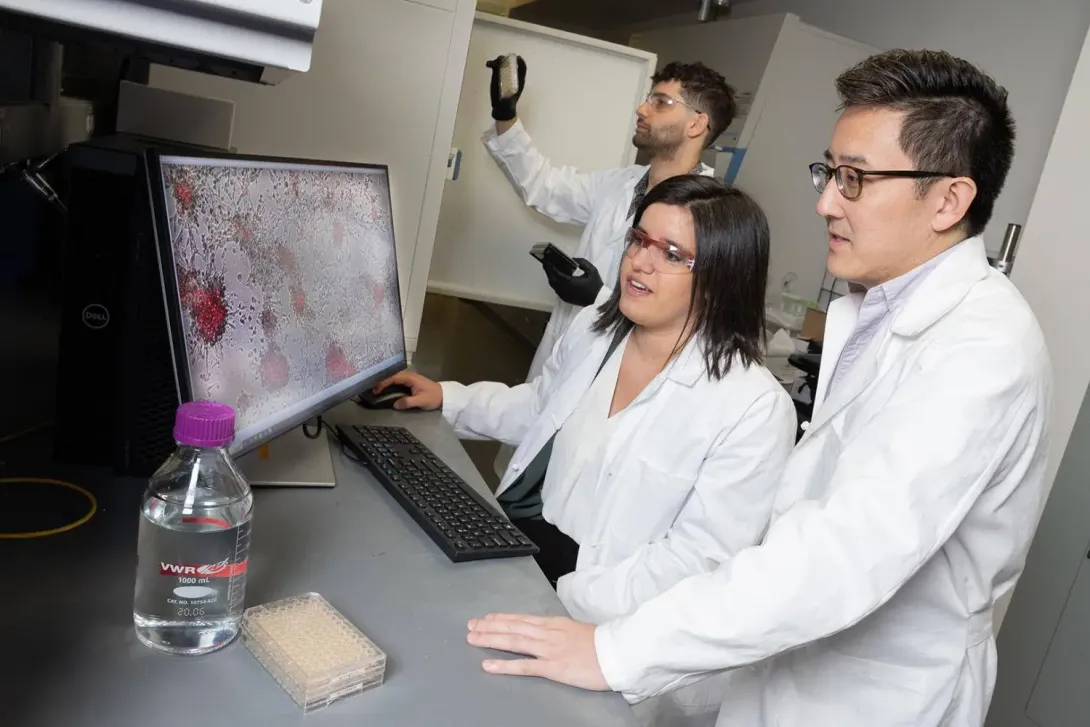

To make useful wearable robotic devices that can help stroke patients or people with amputated limbs, the computer brains driving the systems must be trained. That takes time and money — lots of time and money. And researchers need specially equipped labs to collect mountains of human data for training.

Even when engineers have a working device and brain, called a controller, changes and improvements to the exoskeleton system typically mean data collection and training start all over again. The process is expensive and makes bringing fully functional exoskeletons or robotic limbs into the real world largely impractical.

Not anymore, thanks to Georgia Tech engineers and computer scientists.

They’ve created an artificial intelligence tool that can turn huge amounts of existing data on how people move into functional exoskeleton controllers. No data collection, retraining, or hours upon hours of additional lab time are required for each specific device.

Their approach has produced an exoskeleton brain that offers meaningful assistance across a huge range of hip and knee movements and works as well as the best controllers currently available. Their work was published Nov. 19 in Science Robotics.

News Contact

Joshua Stewart

College of Engineering

Nov. 20, 2025

Georgia Institute of Technology has been ranked 7th in the world in the 2026 Times Higher Education Interdisciplinary Science Rankings, in association with Schmidt Science Fellows. This designation underscores Georgia Tech’s leadership in research that solves global challenges.

“Interdisciplinary research is at the heart of Georgia Tech’s mission,” said Tim Lieuwen, executive vice president for Research. “Our faculty, students, and research teams work across disciplines to create transformative solutions in areas such as healthcare, energy, advanced manufacturing, and artificial intelligence. This ranking reflects the strength of our collaborative culture and the impact of our research on society.”

As a top R1 research university, Georgia Tech is shaping the future of basic and applied research by pursuing inventive solutions to the world’s most pressing problems. Whether discovering cancer treatments or developing new methods to power our communities, work at the Institute focuses on improving the human condition.

Teams from all seven Georgia Tech colleges, 11 interdisciplinary research institutes, the Georgia Tech Research Institute, Enterprise Innovation Institute, and hundreds of research labs and centers work together to transform ideas into real results.

News Contact

Angela Ayers

Nov. 18, 2025

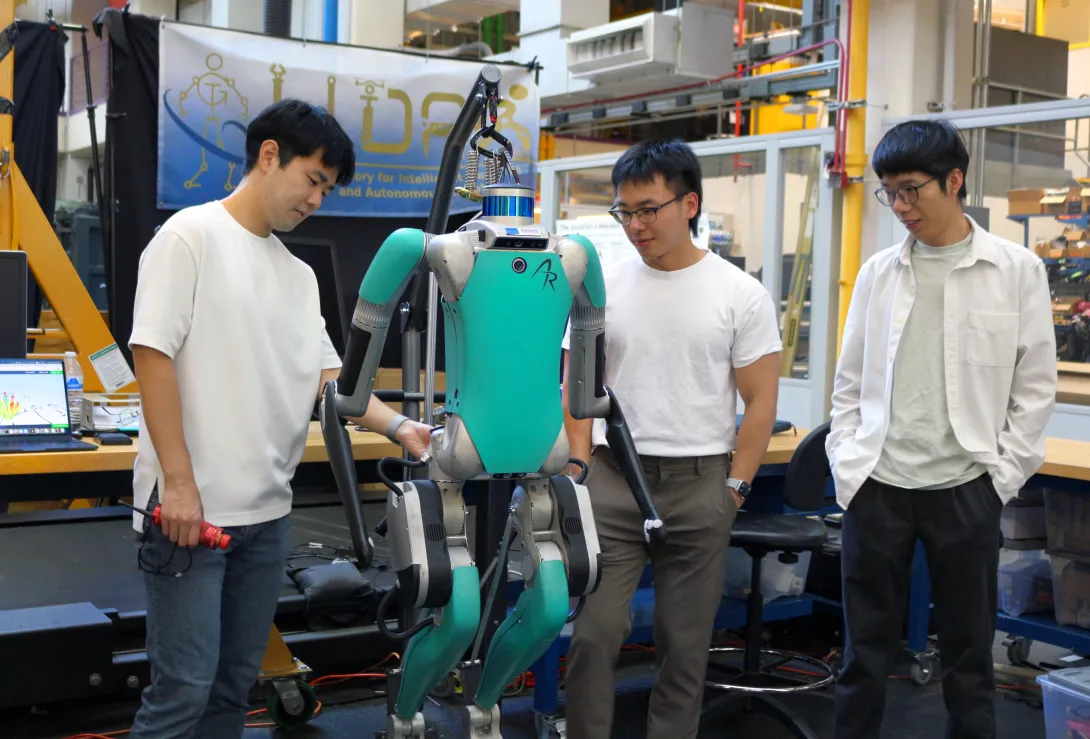

Viral videos abound of humanoid robots performing amazing feats of acrobatics and dance, but videos of a humanoid robot easily performing a common household task or traversing a new multi-terrain environment without human control are much rarer. This is because training humanoid robots to perform these seemingly simple functions relies on simulation training data that lack the complex dynamics and degrees of freedom of motion inherent in humanoid robots.

To achieve better training outcomes with faster deployment results, Fukang Liu and Feiyang Wu, graduate students under Professor Ye Zhao of the Woodruff School of Mechanical Engineering, a faculty member of the Institute for Robotics and Intelligent Machines (IRIM), have published a pair of papers in IEEE Robotics and Automation Letters. The work is a collaboration with three other IRIM-affiliated faculty members, Profs. Danfei Xu, Yue Chen, and Sehoon Ha, as well as Prof. Anqi Wu from the School of Computational Science and Engineering.

To enable humanoid robots to perform complex whole-body movements in the real world, Liu led a team that developed Opt2Skill, a hybrid robot learning framework that combines model-based trajectory optimization with reinforcement learning (RL). The framework integrates dynamics and contacts into the trajectory planning process and generates high-quality, dynamically feasible datasets, which result in more reliable motion learning and improved position tracking and task success rates. The approach shows a promising way to improve the performance and generalization of humanoid RL policies using dynamically feasible motion datasets. Incorporating torque data also improved motion stability and force tracking in contact-rich scenarios, demonstrating that torque information plays a key role in learning physically consistent humanoid behaviors.

“While other datasets, such as inverse kinematics or human demonstrations, are valuable, they don’t always capture the dynamics needed for reliable whole-body humanoid control,” said Fukang Liu. “With our Opt2Skill framework, we combine trajectory optimization with reinforcement learning to generate and leverage high-quality, dynamically feasible motion data. This integrated approach gives robots a richer and more physically grounded training process, enabling them to learn these complex tasks more reliably and safely for real-world deployment.”

In another line of humanoid research, Wu established a one-stage training framework that allows humanoid robots to learn locomotion more efficiently and with greater environmental adaptability. Traditional two-stage “teacher-student” approaches first train an expert in simulation and then retrain a limited-perception student. The new framework, Learn-to-Teach (L2T), instead trains both simultaneously, sharing knowledge and experiences in real time. The result of this two-way training is a 50% reduction in training data and time, while maintaining or surpassing state-of-the-art performance in humanoid locomotion. The lightweight policy learned through this process enables the lab’s humanoid robot to traverse more than a dozen real-world terrains, including grass, gravel, sand, stairs, and slopes, without retraining or depth sensors.

“By training an expert and a deployable controller together, we can turn rich simulation feedback into a lightweight policy that runs on real hardware, letting our humanoid adapt to uneven, unstructured terrain with far less data and hand-tuning than traditional methods.” - Feiyang Wu

By applying these training processes, the team hopes to speed the development of deployable humanoid robots for home use, manufacturing, defense, and search and rescue assistance in dangerous environments. These methods also support advances in embodied intelligence, enabling robots to learn richer, more context-aware behaviors. Additionally, the training data process can be applied to research to improve the functionality and adaptability of human assistive devices for medical and therapeutic uses.

“As humanoid robots move from controlled labs into messy, unpredictable real-world environments, the key is developing embodied intelligence: the ability for robots to sense, adapt, and act through their physical bodies,” said Professor Ye Zhao. “The innovations from our students push us closer to robots that can learn robust skills, navigate diverse terrains, and ultimately operate safely and reliably alongside people.”

Author - Christa M. Ernst

Citations

Liu F, Gu Z, Cai Y, Zhou Z, Jung H, Jang J, Zhao S, Ha S, Chen Y, Xu D, Zhao Y. Opt2skill: Imitating dynamically-feasible whole-body trajectories for versatile humanoid loco-manipulation. IEEE Robotics and Automation Letters. 2025 Oct 13.

Wu F, Nal X, Jang J, Zhu W, Gu Z, Wu A, Zhao Y. Learn to teach: Sample-efficient privileged learning for humanoid locomotion over real-world uneven terrain. IEEE Robotics and Automation Letters. 2025 Jul 23.


Sep. 18, 2025

Maintaining balance while walking may seem automatic — until suddenly it isn’t. Gait impairment, or difficulty with walking, is a major liability for stroke and Parkinson’s patients. Not only do gait issues slow a person down, but they are also one of the top causes of falls. And solutions are often limited to time-intensive and costly physical therapy.

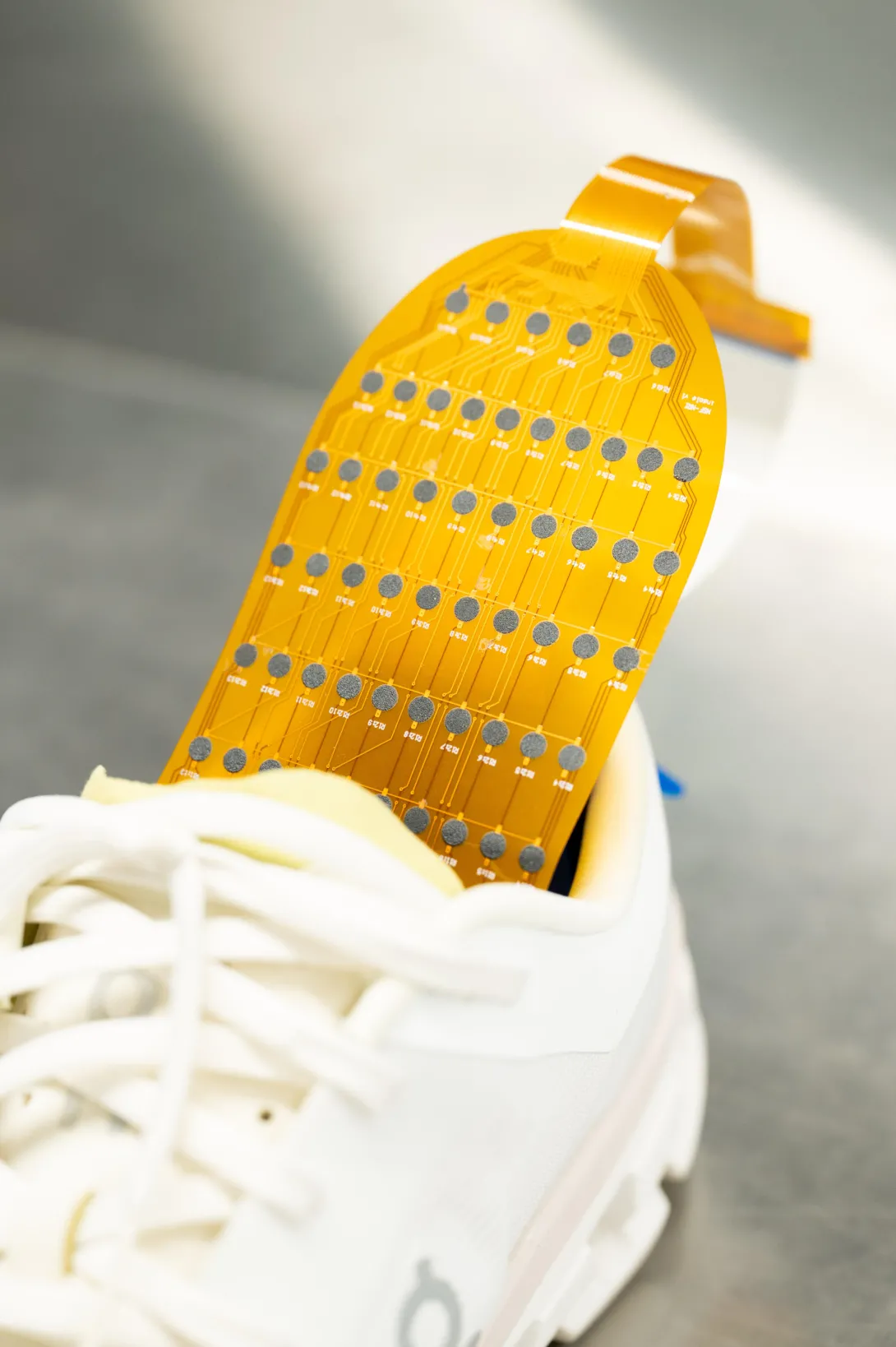

A new wearable electronic device that can be inserted inside any shoe may be able to address this challenge. The device, developed by Georgia Tech researchers, is made of more than 170 thin, flexible sensors that measure foot pressure, a key metric for determining whether someone is off-balance. The sensors collect pressure data, which the researchers could eventually use to predict which changes lead to falls.
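To show how an array of pressure readings can be reduced to a single balance-related number, the sketch below computes a center of pressure, the pressure-weighted average of the sensor positions. This is an assumption-laden illustration rather than the researchers' analysis: the sensor coordinates, units, and the `center_of_pressure` function are hypothetical, and the article does not say which metrics the team derives from the data.

```python
def center_of_pressure(readings):
    """Pressure-weighted centroid of insole sensor readings.

    readings: iterable of (x_mm, y_mm, pressure) tuples, one per sensor,
              with hypothetical sensor positions in millimeters.
    Returns (x_mm, y_mm), or None when no pressure is registered.
    """
    readings = list(readings)
    total = sum(p for _, _, p in readings)
    if total <= 0:
        return None  # foot is off the ground or sensors read zero
    cop_x = sum(x * p for x, _, p in readings) / total
    cop_y = sum(y * p for _, y, p in readings) / total
    return (cop_x, cop_y)


# More load on the sensor at x = 10 mm pulls the center toward it.
print(center_of_pressure([(0.0, 0.0, 1.0), (10.0, 0.0, 3.0)]))
```

Tracking how such a point shifts from step to step is one simple way a stream of readings from many sensors could be summarized before any fall-prediction model is applied.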

The researchers presented their work in the paper, “Flexible Smart Insole and Plantar Pressure Monitoring Using Screen-Printed Nanomaterials and Piezoresistive Sensors.” It was the cover paper in the August edition of ACS Applied Materials & Interfaces.

Pressure Points

Smart footwear isn’t new, but making it both functional and affordable has been nearly impossible. W. Hong Yeo’s lab has made its reputation on creating malleable medical devices. The researchers rely on screen-printing, a common commercial practice in electronics manufacturing, to produce sensors. They realized they could apply this printing technique to address walking difficulties.

“Screen-printing is advantageous for developing medical devices because it's low-cost and scalable,” said Yeo, the Peterson Professor and Harris Saunders Jr. Professor in the George W. Woodruff School of Mechanical Engineering. “So, when it comes to thinking about commercialization and mass production, screen-printing is a really good platform because it's already been used in the electronics industry.”

Making the device accessible to the everyday user was paramount for Yeo’s team. A key innovation was making sure the wearable is thin enough to be comfortable for the wearer and easy to integrate with other assistive technologies. The device uses Bluetooth, enabling a smartphone to collect data and offer the future possibility of integrating with existing health monitoring applications.

Possibilities for real-world adaptation are promising, thanks to these innovations. Lightweight and small, the wearable could be paired with robotics devices to help stroke and Parkinson’s patients and the elderly walk. The high number of sensors could make it easier for researchers to apply a machine learning algorithm that could predict falls. The device could even enable professional athletes to analyze their performance.

Regardless of how the device is used, Yeo intends to keep its cost under $100. So far, with funding from the National Science Foundation, the researchers have tested the device on healthy subjects. They hope to expand the study to people with gait impairments and, eventually, make the device commercially available.

“I'm trying to bridge the gap between the lack of available devices in hospitals or medical practices and the lab-scale devices,” Yeo said. “We want these devices to be ready now — not in 10 years.”

With its low-cost, wireless design and potential for real-time feedback, this smart insole could transform how we monitor and manage walking difficulties — not just in clinical settings, but in everyday life.

News Contact

Tess Malone, Senior Research Writer/Editor

tess.malone@gatech.edu


![Hong Yeo holds the wearable electronic device made of more than 170 thin, flexible sensors that measure foot pressure, a key metric for determining whether someone is off-balance. Photos by Joya Chapman.](/sites/default/files/styles/wide/public/news/2026-04/DSC_0589.jpeg.webp?itok=7llp2Ils)