May 22, 2026

Moving a new idea from a research lab to production remains one of industry’s toughest challenges. But at the Georgia Tech Manufacturing Institute (GTMI), which leads the nation in translating research into technologies that shape the future of U.S. manufacturing, that gap is being closed by design. This effort was on full display during AMPF Week, a two-day celebration marking the official opening of the newly renovated Georgia Tech Advanced Manufacturing Pilot Facility (AMPF).

May 11, 2026

The energy shock is already widely understood. What is not yet widely understood is what comes after it — and why a diplomatic deal, when it comes, will not be the end of the story.

By Chris Gaffney, Managing Director of the Georgia Tech Supply Chain and Logistics Institute and a former Vice President of Global Strategic Supply Chain at The Coca-Cola Company.

Three weeks ago, I started hearing from contacts in my network. Senior supply chain executives, people who have managed through COVID and the Suez Canal blockage, were expressing concern. The kind of concern that doesn’t make it into earnings calls or press releases. The kind that shows up in private conversations between people who actually move goods around the world for a living.

Their worry wasn't about crude oil prices. Crude oil prices are now widely discussed. Their worry was about what happens after crude oil prices. About the plastic in your water bottle, the fertilizer going into this year's corn crop, the engine oil in your car, the polyester in your running shoes.

Those conversations sent me back to the data. The geopolitical crisis and the energy shock are now well-documented in mainstream reporting. What is less discussed, and what my conversations with experienced practitioners suggested was being systematically underestimated, is the operational cascade downstream of that energy shock. I wanted to answer a specific question: given that the Strait has been effectively closed since February 28, what aspects of the downstream impact are already locked in regardless of a diplomatic solution, and what is still unfolding? Could I use publicly available data, straightforward analytical tools, and accessible modeling to produce a defensible, quantified view of that question?

The answer, after several weeks of work, is yes. And what the analysis shows is more operationally significant than most of the public commentary has yet captured.

Start with what is already true.

The International Energy Agency (IEA) has described this as one of the largest supply disruptions in the history of the global oil market. Flows through the Strait fell from roughly 20 million barrels per day before the conflict to low single-digit levels in March and early April. Asian crude stocks dropped 31 million barrels in March alone, with further declines expected through April. Refinery runs in Asia were cut by around 6 million barrels per day. Middle distillate prices in Singapore hit all-time highs.

But energy prices, as alarming as they are, are the visible part of this problem. The less visible part is what those commodities become.

Naphtha, a petroleum derivative most people have never heard of, is the feedstock for the polyester in your clothing, the polyethylene terephthalate (PET) in your water bottle, the polypropylene in your food packaging, the polyvinyl chloride (PVC) in your plumbing. Roughly 80 percent of the naphtha imported into Asia comes from the Middle East. South Korean petrochemical plants were running at 60 to 70 percent of capacity by late April. Japanese crackers at 65 to 75 percent. The IEA confirmed it in plain language: Asian petrochemical plants curtailed operating rates as feedstock supply dried up.

Liquefied petroleum gas (LPG), the cooking gas that 60 percent of Indian households depend on for daily meals, was the first fuel to be rationed. Queues formed as deliveries were delayed. This reflected physical supply constraints alongside severe price pressure.

Fertilizer prices hit 49 percent above last year's levels by April, according to DTN data. Corn planting intentions dropped 3.5 percent. The math on that is straightforward: the food prices that result from this spring’s planting decisions will show up at the grocery store in 2027. The disruption has a long tail, and most of that tail is still ahead of us.

The question isn’t whether this will affect what you pay for everyday goods. It already is. The question is how far the cascade goes and how long it lasts.

Here is what the modeling shows.

Working from publicly available IEA, U.S. Energy Information Administration (EIA), and commodity price data, I built a scenario model that tracks 12 commodity-region pairs through a 300-day simulation horizon. I then ran that model over 1,500 times with slightly varying assumptions to produce a range of outcomes rather than a single point estimate. That range is more honest than a single number, because the genuine uncertainty in this situation deserves to be represented.
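To make the approach concrete, here is a minimal Python sketch of this kind of Monte Carlo run for a single commodity-region pair. It is not the model from the report: the function names, the starting inventory range, the import-dependency range, and the reopening window are all hypothetical placeholders chosen for illustration. Only the 300-day horizon, the 1,500 runs, and the five-week-to-seven-month recovery range echo figures quoted in this article.

```python
import random
import statistics


def days_until_shortage(stock_days, import_share, reopen_day, recovery_days, horizon=300):
    """Return the first day inventory cover reaches zero, or None if it never does.

    stock_days    -- starting inventory, in days of normal consumption (hypothetical)
    import_share  -- fraction of supply that normally transits the Strait (hypothetical)
    reopen_day    -- day on which the Strait reopens (hypothetical)
    recovery_days -- days for disrupted supply to ramp back to normal after reopening
    """
    cover = stock_days
    for day in range(1, horizon + 1):
        if day <= reopen_day:
            # While the Strait is closed, the disrupted share of supply is missing.
            supply = 1.0 - import_share
        else:
            # After reopening, the disrupted share ramps back linearly.
            ramp = min(1.0, (day - reopen_day) / recovery_days)
            supply = (1.0 - import_share) + import_share * ramp
        # Demand is one unit per day, so cover changes by (supply - 1).
        cover += supply - 1.0
        if cover <= 0:
            return day
    return None


def run_simulation(runs=1500, seed=0):
    """Repeat the depletion model with randomly varied assumptions."""
    rng = random.Random(seed)
    results = []
    for _ in range(runs):
        results.append(
            days_until_shortage(
                stock_days=rng.uniform(30, 60),        # illustrative range
                import_share=rng.uniform(0.6, 0.9),    # illustrative range
                reopen_day=rng.randint(60, 180),       # illustrative range
                recovery_days=rng.uniform(35, 210),    # five weeks to seven months
            )
        )
    return results


results = run_simulation()
shortage_days = sorted(d for d in results if d is not None)
share_short = len(shortage_days) / len(results)
deciles = statistics.quantiles(shortage_days, n=10)

print(f"Runs ending in shortage: {share_short:.0%}")
print(f"Day of shortage, 10th to 90th percentile: {deciles[0]:.0f} to {deciles[-1]:.0f}")
print(f"Median day of shortage: {statistics.median(shortage_days):.0f}")
```

Reporting a percentile band rather than one number is the point of the exercise: the spread across runs shows how sensitive the outcome is to assumptions that nobody can pin down precisely.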

Three findings stand out.

First: a diplomatic deal today would be unlikely to quickly reverse what has already happened. This is the finding that surprised me most, and it held across almost every simulation. The high-import-dependency commodities have already depleted enough inventory that functional shortage is already embedded in the near-term outlook regardless of when the Strait reopens. The diplomatic question determines how long the pain lasts and how severe the recovery will be. For consumers, this means the effects may show up long after the headlines fade, in the form of higher prices, product shortages, and delays in everything from clothing and packaging to fertilizer-dependent food production.

Second: Europe's most visible supply chain story, airlines canceling flights, is a price story, not a physical shortage story. The IEA documents approximately six weeks of European jet fuel supply. Airlines are grounding aircraft because fuel has doubled in price, not because airports are running dry. Meanwhile, Asian petrochemical plants are curtailing because feedstock physically stopped arriving. These two situations look similar in the headlines. They require completely different responses. For consumers, the difference matters because one problem mainly makes travel and goods more expensive, while the other can interrupt the actual production of the products modern life depends on.

Third: the recovery will be harder and longer than most public commentary assumes. S&P Global estimates five weeks to seven months for full supply normalization after a reopening, depending on infrastructure damage. Mine clearance alone requires 60 to 90 days of sustained operations before commercial vessels can transit safely. Insurance premiums will not normalize until underwriters see months of safe transit. And when supply does restart, suppressed demand returns simultaneously with a supply base that is still rebuilding. The EIA's 2027 demand forecast of 1.6 million barrels per day growth (nearly three times the depressed 2026 rate) makes this concrete. We have seen this pattern before. COVID demonstrated it at scale. The bullwhip effect, applied to a supply-side energy shock, produces a second dislocation on the back side of the crisis.

What this means for your grocery bill, your gas tank, and your business.

The analysis maps 36 supply chain pathways from raw commodity to consumer shelf across 15 product categories. Here are three examples that are or will be visible to you.

Take construction materials. PVC pipe, insulation, and window profiles all begin with petrochemical feedstocks moving through the Gulf region. PVC resin prices in India rose nearly 80 percent in March. Since PVC pipe is largely PVC resin, the pass-through to construction costs is immediate and difficult to absorb. The result is likely to show up in higher prices for building materials, repairs, and infrastructure projects long before most consumers connect the cause.

The same pattern is unfolding in synthetic motor oil. Shell's Pearl Gas-to-Liquid facility in Qatar — one of the world's most important sources of premium Group III base oil — was taken offline by missile strikes. Producers in Bahrain and the UAE have declared force majeure. Roughly 40 percent of global Group III supply is now offline or unable to ship. For consumers, that eventually means higher oil-change costs, more expensive industrial lubricants, and added operating costs moving quietly through trucking, aviation, manufacturing, and delivery networks.

Food arrives later, but it arrives. Fertilizer prices are already sharply elevated, and planting decisions are being made right now under those conditions. The agricultural calendar creates a lag most consumers do not see. Disruptions this spring can become higher grocery prices many months from now. That is not speculation. It is simply how agricultural supply chains work.

We tend to underestimate the breadth and duration of these events while they are happening, and overestimate how quickly things return to normal after they appear to resolve.

What we did, and why it matters how we did it.

Every number in this analysis traces to a cited source. Where data was insufficient and judgment was required, those judgment calls are labeled as such. The model is not a black box. It is a documented, reproducible simulation that any researcher can run independently.

I also used AI — specifically Claude by Anthropic — as a partner to help analyze and build this work. While I provided the analytical framework, the practitioner judgments, and the validation of assumptions, the AI assisted with drafting, building models, computation, and data synthesis. This collaboration is fully detailed in the paper.

This represents a new way of performing analytical work. The results are significant: a quantified, sourced, and reproducible analysis of a complex disruption in the actual world. What usually takes a traditional research team months was completed in weeks. That speed is vital when a situation is still unfolding.

The larger point.

Seventy-two days in, the global supply chain community is navigating a disruption that has no precise historical parallel. The 1973 OAPEC embargo lasted months and produced lasting structural change in how the world consumes energy. The 1990 Gulf War shock was brief enough that it produced relatively mild downstream consequences. The 2022 European energy crisis showed us what happens when industrial feedstock costs become uneconomic for months at a time: capacity comes offline, and some of it does not come back for a long time.

The 2026 Hormuz closure is now 72 days old. It has already lasted longer than the 1990 Gulf War shock. It is approaching the territory where the worse historical outcomes become the more relevant comparators. Every additional week of closure moves the probability distribution toward the scenarios that produced lasting structural damage.

Both public and private entities may be underestimating the magnitude of what recovery will require. Restoring normal supply chain function after an event of this scale and duration is not a matter of reopening a waterway. It is a matter of rebuilding inventory buffers, restarting industrial capacity, normalizing insurance markets, reestablishing commercial relationships, and managing the demand surge that hits simultaneously with the supply restart. The organizations that are planning for that recovery now will be materially better positioned than those that wait.

The people I talked to three weeks ago were right to be concerned. Their concern was based on experience and instinct and what they were seeing in their own business. Our work over the past weeks validates their perspective.

An enduring diplomatic solution is the essential precondition for any of this to improve. Without it, the cascade continues. With it, the hard work of recovery begins. Either way, the time to understand the full scope of what is in motion is now and not after the headlines move on.

Editor’s note:

View the related report: technical analysis, scenario modeling, Monte Carlo simulation methodology, consumer impact assessment.

Apr. 29, 2026

The Georgia Tech Manufacturing Institute (GTMI) has selected Aaron P. Stebner as its new associate director, expanding the institute’s leadership as it scales advanced manufacturing research, infrastructure, and industry engagement.

Stebner is the Eugene C. Gwaltney Jr. Chair in Manufacturing and a professor in the George W. Woodruff School of Mechanical Engineering, with a joint appointment in the School of Materials Science and Engineering. He also serves as a James R. and Sarah R. Borders Faculty Fellow, founding director of Georgia Artificial Intelligence Manufacturing (Georgia AIM), and executive director of Georgia Tech’s Professional Master’s in Manufacturing Leadership program.

In his role as associate director, Stebner will lead operations, engagement and continued growth of the Advanced Manufacturing Pilot Facility (AMPF), a cornerstone of Georgia Tech’s manufacturing ecosystem. The facility brings together artificial intelligence, automation, robotics, and digital manufacturing to accelerate materials discovery and manufacturing innovation at pilot scale.

The AMPF is evolving into what Georgia Tech leaders describe as a national first: a university-based, self-driving manufacturing facility that allows new technologies to be invented, tested and de-risked before they reach full-scale production. Backed by more than $80 million in federal, state, and private investment, the facility serves as a shared-use platform for industry, startups, researchers, and government partners.

“AMPF is a national user facility and a blended industry-academia, human-AI environment where new manufacturing discoveries are made ready for industry adoption,” Stebner said. “By integrating AI-enabled systems, real-time automation and pilot-scale validation, we’re helping shorten the timeline from discovery to deployment.”

Stebner’s research and leadership sit at the intersection of artificial intelligence, manufacturing, materials, and mechanics, with an emphasis on intelligent and adaptive manufacturing systems. His work spans advanced alloys, additive manufacturing, autonomous experimentation, and data-driven process design, with applications across aerospace, automotive, biomedical, energy and industrial sectors.

Under his leadership, the AMPF is expected to continue expanding as a national collaboration hub for academia, industry, and government. The facility supports pilot-scale testing of emerging technologies, workforce development and applied research aimed at strengthening U.S. manufacturing competitiveness and economic resilience.

Stebner’s appointment also strengthens the alignment between GTMI, Georgia AIM and Georgia Tech’s broader research enterprise, integrating AI-driven research, translational infrastructure, and industry partnerships into a cohesive model for manufacturing innovation.

“With Aaron’s experience building forward-looking manufacturing programs and leading large, interdisciplinary teams, GTMI is well positioned to accelerate the impact of the AMPF and related initiatives,” said Tom Kurfess, executive director of GTMI.

News Contact

Yanet Chernet

Communications Officer

Apr. 30, 2026

By Chris Gaffney, Managing Director of the Georgia Tech Supply Chain and Logistics Institute, Supply Chain Advisor, and former executive at Frito‑Lay, AJC International, and Coca‑Cola.

In this issue:

- The real blind spot in analytics teams

- Three failures where the model was “right” and the decision was wrong

- A five-question checklist to run before anything goes to leadership

A Subtle but Growing Concern

Over the past several months, I have had conversations with senior leaders at several large, well-established supply chain organizations with strong teams responsible for Integrated Business Planning (IBP) and supply chain network design and optimization.

These teams are technically strong. They know how to build models. They are comfortable with large data sets. Many are now incorporating AI tools into their workflows.

But the same concern keeps surfacing across those conversations:

The analytical capability is improving—but the decision-making discipline around it is not keeping pace.

Analysts move quickly to building models without fully defining the business problem. Assumptions are not always surfaced or challenged. Outputs are evaluated mathematically, not operationally. And recommendations are not always translated into real-world implications.

Leaders are concerned about this and are looking for ways to address it. I share their concern because I have been in their shoes.

What the Experience Taught Us

Earlier in my career, across different roles at Coca-Cola, we did not formally teach critical thinking. We learned it through experience and often through mistakes. Three situations shaped how I think about this today.

Powerade: When the Model Works but the Thinking Doesn’t

While working with optimization groups at Coca-Cola North America, we overbuilt capacity for Powerade. The model did exactly what it was supposed to do. The problem was upstream of the model.

We took the demand forecast at face value. At the time, we deferred to the brand teams without interrogating their assumptions. We never asked what was driving the projected volume—whether the competitive dynamics supported it, whether the channel assumptions were realistic, whether pricing and distribution plans were grounded, whether overall market growth would materialize as projected.

The consequence was idle capacity, production lines that were purchased and never installed, write-offs, and a fundamental change to our process. Going forward, brand and supply chain teams were both required to sign off on future business cases. The model was technically correct. The thinking around the model had not been.

Little Rock: When Feasibility Isn’t Reality

Later, within Coca-Cola Supply, we made a network decision to close a plant in Little Rock. On paper, the remaining system had the capacity to absorb the volume. The model said so.

What the model assessed was production capacity based on rated line speeds. What it did not account for was dock and storage capacity at peak, or the practical limitations of standing up a new shift at the receiving plants. Those constraints were real. They were also invisible in the model.

In the short term, we had to source suboptimally from other plants, which directly undermined the business case we had built to justify the closure. The math was right. The operational validation was incomplete.

Mini Cans: When the Thinking Matches the Model

By the time I led the National Product Support Group, we had evolved. Decisions like the launch of mini cans required cross-functional alignment, scenario-based thinking, and a clear understanding of how demand would actually be generated across channels and routes to market.

We got that one right, not because the model was more sophisticated, but because the discipline around the model was stronger. We had learned, the hard way, to ask the questions the model could not ask for itself.

Most of the Work Is Outside the Model

There is a line I first heard from Chris Janke: "Most of the work is outside the model." He may have learned it from someone else; I don’t know the original source, but it is the framing that has stayed with me. With the advances in data and machine learning we have seen over the past decade, the share of the work that sits outside the model may be closer to 75 percent today.

We are better than ever at collecting and cleansing large data sets, processing high volumes of information, and identifying mathematical errors. But the most important work still happens outside the model: defining the right business question, building meaningful scenarios, interpreting outputs in real-world terms, and stress-testing the assumptions that drive the recommendation.

Janke captured this precisely in documenting his own experience with a modeling error that illustrated the point. An analyst had validated the math on a labor cost model—everything checked out numerically. But when the output was translated into real-world terms, it implied production workers earning roughly $300,000 per year while working approximately 60 hours total annually. The math was internally consistent. The result was operationally impossible. The question that should have been asked early: does this make sense in the context of how the business actually operates? It was not asked until after the analysis was complete.
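The check that was missing can be written in a few lines. The sketch below is illustrative only: the function names and the plausibility thresholds are hypothetical, not taken from Janke's account. It converts the model's output into an implied hourly wage and flags results that no real operation could produce.

```python
def implied_hourly_wage(annual_pay, annual_hours):
    """Translate annual figures into the hourly rate they imply."""
    return annual_pay / annual_hours


def plausibility_flags(annual_pay, annual_hours,
                       max_hourly=150.0, min_hours=1000.0, max_hours=3000.0):
    """Return a list of operational red flags.

    The thresholds are hypothetical defaults; a real team would set them
    from its own payroll and scheduling data.
    """
    flags = []
    hourly = implied_hourly_wage(annual_pay, annual_hours)
    if hourly > max_hourly:
        flags.append(f"implied wage of ${hourly:,.0f}/hour exceeds ${max_hourly:,.0f}/hour")
    if not (min_hours <= annual_hours <= max_hours):
        flags.append(f"{annual_hours:,.0f} annual hours is outside {min_hours:,.0f} to {max_hours:,.0f}")
    return flags


# The figures from the labor cost example: about $300,000 per year for about 60 hours.
flags = plausibility_flags(300_000, 60)
for flag in flags:
    print("CHECK:", flag)
```

With the figures from the example, the implied wage is $5,000 per hour, so both checks fire. The arithmetic is trivial; what matters is running it before the analysis goes forward.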

The discipline to ask that question is not a modeling skill. It is a critical thinking skill.

Where the Breakdown Happens

Before the Model: Skipping the Hard Questions

A common pattern today is that analysts move quickly to building the model. The harder and more important step of defining the business decision before the model is built gets compressed or skipped entirely. The questions that require that step are not complicated, but they take time and engagement to answer well:

- What business decision are we actually trying to make?

- What scenarios matter, and why?

- What does success look like—not mathematically, but operationally?

- What constraints are real versus assumed?

These questions are not as clean as coding a model. They require conversations with people who understand the constraints, not just the data. That is part of why they get skipped.

After the Model: Mistaking Mathematical Accuracy for Business Validity

This is where more serious errors occur. Model issues can usually be fixed with more time. Misinterpretation of output leads to bad decisions that are much harder to unwind.

The Powerade and Little Rock situations both illustrate this. In each case, the model was not wrong in any technical sense. What was missing was the translation layer, where someone asks, “What changes on a Tuesday night shift, at Plant B, when demand spikes 12 percent?”

That translation layer does not happen automatically. It has to be built into how teams work. And it is exactly the discipline that gets squeezed when organizations reward speed and analytical sophistication above everything else.

What Critical Thinking Actually Means in Supply Chain

Critical thinking in supply chain is not skepticism for its own sake, and it is not a soft skill that sits alongside the analytical work. It is a discipline applied to decisions and not just to models. The word itself points to what we mean: kritikos, the Greek root, means skilled in judging, able to discern*. That is the right definition for our purposes.

It means asking whether the right question is being answered before investing in answering it well. It means making the assumptions that drive a recommendation visible and testable. It means translating analytical output into operational consequence: what actually changes, for whom, at what cost, and under what conditions the answer flips.

That discipline shows up or breaks down at four specific moments:

- Before the model is built: Is the business question defined precisely enough to model?

- While the model is running: Are the assumptions embedded in the data realistic and challenged?

- When the output is ready: Does this result make sense in how the business actually operates?

- Before the recommendation goes forward: Have we planned for how this will be received, and by whom?

When these moments are skipped because of time pressure, overconfidence in tools, or a culture that rewards analytical speed over decision rigor, the gap between analysis and action grows. The Powerade and Little Rock situations were both failures at these moments, not failures of the models themselves.

*DeCesare, M. (2009). Casting a critical glance at teaching “critical thinking.” Pedagogy and the Human Sciences, 1(1), 73–77.

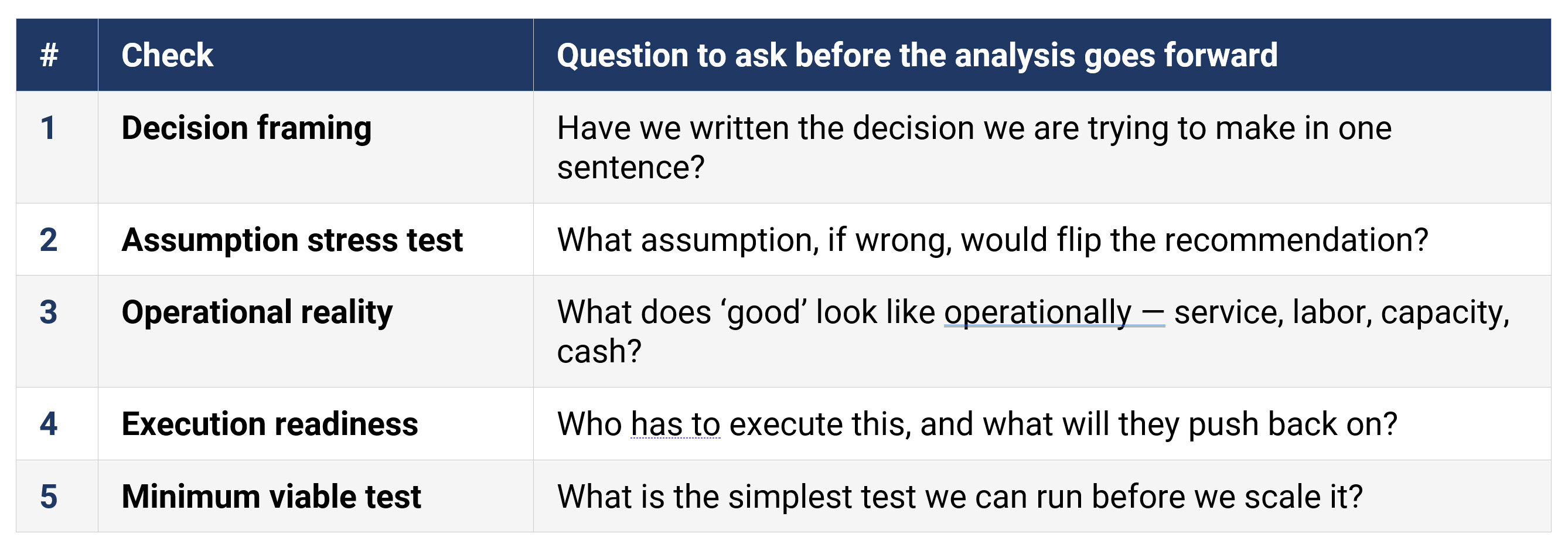

A Five-Question Diagnostic

Before an analysis or recommendation moves forward, teams should be able to answer five questions clearly. If any of them cannot be answered, the analysis is not ready—regardless of how strong the model is.

Figure 1: A Five-Question Diagnostic (accessible version)

These are questions that should have specific, grounded answers before a recommendation reaches leadership. If the team cannot answer question two (what assumption would flip the result), then the recommendation rests on unexamined ground. If question four cannot be answered, the change management work has not started yet.

In the Powerade situation, questions one and two were the misses. In Little Rock, it was question three. The models were not the problem. The diagnostic would have surfaced both gaps before the decisions were made.

This Gap Is Well Documented

What I am describing from my own experience is consistent with what the research shows.

A long-running finding in operations research is that many models are built and comparatively few actually drive decisions, and the breakdown is organizational, not technical. A widely cited review in the European Journal of Operational Research frames this as an implementation problem rooted in how models are connected (or not connected) to the people and processes that own the decision.

Professional credentialing bodies have recognized the same gap. The INFORMS Certified Analytics Professional blueprint explicitly lists business problem framing, stakeholder analysis, and business case development as core analytics competencies—not optional additions. The signal is clear: being analytically strong is necessary but not sufficient.

On the training side, a field study published in the European Journal of Operational Research tested the effects of structured decision training across roughly 1,000 decision makers and analysts. The results showed measurable improvement in proactive decision-making skills and decision satisfaction. The gap is real, and it is addressable. It is a training and design issue, not a talent issue.

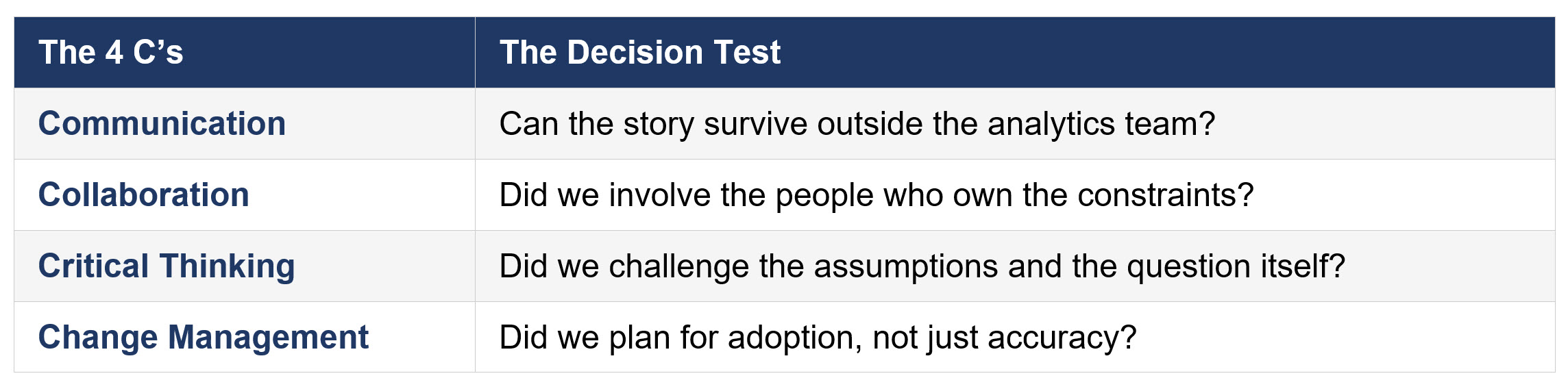

The 4 C’s: A Decision-Focused Framework

At Georgia Tech SCL, we organize this thinking around what we call the 4 C’s. These soft skills play a key role in the decision process. Each one asks a specific question about whether the decision, not just the analysis, was made well.

Figure 2: The 4 C’s: A Decision-Focused Framework (accessible version)

Notice what this framework does not include: model accuracy, data quality, or visualization quality. Those matter, and they are inputs to the decision. But a team can have a perfect model, a clean dataset, and a compelling dashboard and still fail all four of these tests.

The Powerade situation failed the Collaboration test: the supply chain team did not sufficiently interrogate the brand team’s assumptions. Little Rock failed the Critical Thinking test: the right question was not asked about what the model was not capturing. In both cases, the Communication and Change Management failures followed directly from those upstream gaps.

When all four are present, analysis becomes a decision. When one or more is missing, both the analysis and its translation into a solid recommendation are at risk.

Where to Start

This topic keeps coming up in conversations with companies, in work with practitioners, and in what we hear from students as they move into industry roles.

The tools are not the problem. AI-assisted analytics, optimization models, and advanced forecasting are real assets. But tools amplify the thinking behind them. Weak decision discipline combined with better tools is just a faster path to the wrong answer.

If this shows up in your org, try the five-question diagnostic on your next recommendation before it hits leadership. If it surfaces gaps you cannot close quickly, SCL can help. We are building workshops and courseware on decision-focused critical thinking, and we will cover this in our June Lunch and Learn.

Questions or comments? Reach out to SCL.

Apr. 26, 2026

Georgia Artificial Intelligence in Manufacturing, or Georgia AIM, has received one of the highest research awards at the Georgia Institute of Technology, the Outstanding Achievement in Research Program Impact.

The award was announced March 25, 2026, and is one of six Institute Research Awards given by Georgia Tech’s Office of the Executive Vice President for Research. The portfolio of awards honors achievements in research engagement, innovation, faculty advising, and impact.

Georgia AIM is a statewide coalition led by the Georgia Tech Enterprise Innovation Institute (EI2) and the Georgia Tech Manufacturing Institute (GTMI) to develop and deploy AI talent and innovation in manufacturing. The Georgia AIM coalition includes dozens of universities, technical colleges, nonprofits, and economic development organizations.

“It is an incredible experience to collaborate with technology and economic development leaders around the state to lead the nation and the world in AI for manufacturing,” said Aaron Stebner, Georgia AIM co-director and the Eugene C. Gwaltney Jr. Chair in Manufacturing at Georgia Tech.

“We are truly honored to receive this recognition from our peers at Georgia Tech,” said Tom Kurfess, GTMI Executive Director and HUSCO/Ramirez Distinguished Chair in Fluid Power and Motion Control.

Georgia AIM was initiated in 2021 by Stebner, EI2 Vice President David Bridges, Kurfess, Georgia AIM managing director and GTMI deputy director Steven Ferguson, and Georgia Tech executive director for strategic partnerships George White. The coalition received an initial $500,000 planning grant from the U.S. Economic Development Administration (EDA), followed by $65 million in additional EDA grants. With additional federal, state, and private sector support, funding now totals more than $100 million to enact projects across the state.

The Georgia AIM coalition counts many achievements on and off campus, including:

- Supporting collaborations for more than thirty-five faculty, fifty research faculty and professionals, ten post docs, eighty graduate research assistants, one hundred and fifty undergraduate research assistants, and dozens of staff at Georgia Tech.

- Transforming the Georgia Tech Advanced Manufacturing Pilot Facility into a national user facility for research and development to invent, test, derisk, and mature AI manufacturing and materials technologies.

- Building a manufacturing commercialization pipeline that links faculty research, student innovation, startups, and corporate partners to introduce AI manufacturing innovations to regional and national economies.

- Launching workforce development programs that provide new opportunities and career paths for thousands of students, spanning K-12 engagement, technical apprenticeships and credentials, and professional education.

- Providing STEM experiences including AI coding camps, robotics competitions, and advanced manufacturing competitions to thousands of students across Georgia.

- Producing 21 peer-reviewed journal articles, 5 peer-reviewed conference proceedings, 5 National Academies workshop presentations, 5 keynote/plenary presentations, more than 200 conference presentations and posters, 13 invention disclosures, 7 provisional patents, and 2 full patents filed to date, with dozens more in process.

“Georgia AIM proves that innovation scales when built alongside workforce,” said Ferguson. “We built a seamless pipeline from education to industry, ensuring talent is ready to deploy AI in real manufacturing environments on day one.”

“The impact of Georgia AIM is grounded in collaboration — universities, industry, nonprofits and communities working together to shape the future of advanced manufacturing in Georgia,” said Bridges. “This recognition underscores what a coordinated statewide effort can accomplish.”

Because research covers a range of activities — from research and development to commercialization and public impacts — the annual awards recognize the many facets of work in this area. The peer-driven nomination process emphasizes measurable contributions and leadership across disciplines.

“The strength of Georgia Tech’s research enterprise begins with the talented people who push discovery forward every day,” said Tim Lieuwen, executive vice president for Research. “Congratulations to this year’s honorees, who demonstrate what it means to turn bold ideas into real-world impact, advancing knowledge from fundamental science to commercial and community applications. With these awards, we celebrate their leadership, creativity, and dedication to serving the public good.”

Read more about this year’s Institute Research Award winners.

News Contact

Yanet Chernet

Communications Officer

Apr. 15, 2026

Generative artificial intelligence (AI) is best known for creating images and text. Now, it is helping industries make better planning decisions.

Georgia Tech researchers have created a new AI model for decision-focused learning (DFL), called Diffusion-DFL. Recent tests showed it makes more accurate decisions than current approaches.

Along with optimizing industrial output, Diffusion-DFL lowers costs and reduces risk. Experiments also showed that it performs well across different fields.

Diffusion-DFL doesn’t just surpass current methods; it also predicts more accurately as problem sizes grow. The model requires less computing power despite these high-performance marks, making it more accessible to smaller enterprises.

Diffusion-DFL runs on diffusion models, the same technology that powers DALL-E and other AI image generators. It is the first DFL framework based on diffusion models.

“Anyone who makes high-stakes decisions under uncertainty, including supply chain managers, energy operators, and financial planners, benefits from Diffusion-DFL,” said Zihao Zhao, a Georgia Tech Ph.D. student who led the project.

“Instead of optimizing around a single forecast, the model evaluates many possible scenarios, so decisions account for real-world risk and become more robust.”

To test Diffusion-DFL, the team ran experiments based on real-world settings, including:

- Factory manufacturing to meet product demand

- Power grid scheduling to meet energy demand

- Stock market portfolio optimization

In each case, Diffusion-DFL made more accurate decisions than current methods. It also performed better as problems became larger and more complex. These results confirm the model’s ability to make important decisions in real-world scenarios with noisy data and uncertainty.

The experiments also show that Diffusion-DFL is practical, not just accurate. Training diffusion models is expensive, so the team developed a way to reduce memory use. This cut training costs by more than 99.7%. As a result, Diffusion-DFL can reach more researchers and practitioners.

“Our score-function estimator cuts GPU memory from over 60 gigabytes to 0.13 with almost no loss in decision quality, reducing the requirement for massive computing resources,” Zhao said. “I hope this expands Diffusion-DFL into other domains, like healthcare, where decisions must be made quickly under complex uncertainty.”
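The memory figures Zhao quotes can be checked against the "more than 99.7%" reduction with a few lines of arithmetic. This is only a sanity check, assuming both figures are in gigabytes and treating "over 60" as a lower bound of exactly 60; the variable names are illustrative.

```python
# Figures quoted in the article; both are assumed to be in gigabytes.
memory_before_gb = 60.0  # "over 60 gigabytes", so treated as a lower bound
memory_after_gb = 0.13

# Relative reduction in GPU memory use.
reduction = (memory_before_gb - memory_after_gb) / memory_before_gb
print(f"GPU memory reduction: at least {reduction:.2%}")
```

Because the starting figure is a lower bound, the true reduction would be slightly larger than the value printed.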

Beyond decision-making applications, Diffusion-DFL marks a shift in DFL techniques and in the broader use of generative AI models.

In supply chain management, planners estimate future demand before deciding how much product to stock. In this DFL problem, engineers align ML models with predetermined decision objectives, like minimizing risk or reducing costs.

One flaw of existing DFL methods is that they optimize around a single, deterministic prediction of an uncertain future.

Diffusion-DFL takes a different approach. Instead of making a single guess, it determines a range of possible outcomes. This leads to decisions based on many likely scenarios, rather than on a single assumed future.
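The difference between planning around one forecast and planning around many scenarios can be illustrated with a toy stocking problem. The sketch below is not the researchers' method: the demand scenarios, the per-unit costs, and the function names are all hypothetical, and a fixed list stands in for the samples a diffusion model would generate.

```python
from statistics import mean

# Hypothetical demand scenarios (units). In Diffusion-DFL these would be
# samples from the diffusion model; a fixed list stands in for them here.
scenarios = [80, 90, 100, 110, 150]

# Hypothetical per-unit costs: running short hurts more than overstocking.
COST_UNDERSTOCK = 3.0
COST_OVERSTOCK = 1.0


def cost(order_qty, demand):
    """Cost of one stocking decision under one realized demand."""
    shortage = max(demand - order_qty, 0)
    surplus = max(order_qty - demand, 0)
    return COST_UNDERSTOCK * shortage + COST_OVERSTOCK * surplus


def expected_cost(order_qty):
    """Average cost of a stocking decision across all scenarios."""
    return mean(cost(order_qty, d) for d in scenarios)


# Single-forecast decision: stock exactly the average predicted demand.
point_decision = mean(scenarios)

# Scenario-based decision: pick the quantity with the lowest average cost.
# For this piecewise-linear cost, a best quantity always lies at a scenario value.
scenario_decision = min(scenarios, key=expected_cost)

print("single forecast:", point_decision, expected_cost(point_decision))
print("many scenarios: ", scenario_decision, expected_cost(scenario_decision))
```

With these made-up numbers, the scenario-based choice stocks more than the average forecast because shortages are costlier than surplus, and its average cost across the scenarios is lower.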

To do this, the framework uses diffusion models. These generative AI models create high-quality data such as images, text, and audio.

The forward diffusion process adds noise to data step by step until only noise remains. Models trained on this process learn to reverse it. This means they can start from noise and generate meaningful samples that resemble their training examples.
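A minimal sketch of the forward (noising) half of this process is shown below. It uses the standard closed form from denoising diffusion models, in which a data point is blended with Gaussian noise according to a schedule. The linear schedule, the step count, and the sample data point are assumptions for illustration; the article does not say which settings Diffusion-DFL uses.

```python
import math
import random

NUM_STEPS = 1000
# Assumed linear noise schedule (a common choice, not taken from the article).
betas = [1e-4 + (0.02 - 1e-4) * t / (NUM_STEPS - 1) for t in range(NUM_STEPS)]

# alpha_bar[t] is the squared weight left on the original data after step t.
alpha_bar = []
running = 1.0
for beta in betas:
    running *= 1.0 - beta
    alpha_bar.append(running)


def noised(x0, t, rng):
    """Sample the noisy version of x0 at step t in one shot."""
    signal = math.sqrt(alpha_bar[t])
    noise = math.sqrt(1.0 - alpha_bar[t])
    return [signal * x + noise * rng.gauss(0.0, 1.0) for x in x0]


rng = random.Random(0)
x0 = [1.0, -0.5, 0.25]  # hypothetical clean data point
for t in (0, 499, 999):
    print(t, round(math.sqrt(alpha_bar[t]), 4), noised(x0, t, rng))
```

The printed signal weight starts near 1 and falls toward 0, so the final sample is almost pure noise. A trained diffusion model learns to run these steps in reverse.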

Real-world data is often noisy and uncertain. Traditional DFL methods struggle in these conditions, but diffusion models are designed to handle them.

Because of this, Diffusion-DFL can explore many possible outcomes and choose better actions. Like image-generation AI, the model works well with complex data from different sources. This enables its use across different industries.

“Diffusion models have achieved significant success in generative AI and image synthesis, but our work shows their potential extends far beyond that,” said Kai Wang, an assistant professor in the School of Computational Science and Engineering (CSE).

“What makes Diffusion-DFL unique is that the specific downstream application guides how the model learns to handle uncertainty.

“Whether we are scheduling energy for power grids, balancing risk in financial portfolios, or developing early warning systems in healthcare, we can explicitly train these highly expressive models to navigate the unique complexities of each domain.”

Zhao and Wang collaborated with Caltech Ph.D. candidate Christopher Yeh and Harvard University postdoctoral fellow Lingkai Kong on Diffusion-DFL. Kong earned his Ph.D. in CSE from Georgia Tech in 2024.

Wang will present Diffusion-DFL on behalf of the group at the upcoming International Conference on Learning Representations (ICLR 2026). Occurring April 23-27 in Rio de Janeiro, ICLR is one of the world’s most prestigious conferences dedicated to artificial intelligence research.

“ICLR is the perfect stage for Diffusion-DFL because it brings together the exact community that needs to see the bridge between generative modeling and high-stakes decision-making for real-world applications,” Wang said.

“Presenting Diffusion-DFL allows us to challenge the traditional training framework of diffusion models. It’s about sparking a broader conversation on how we can align the training objectives of generative AI directly with actual, downstream decision-making needs.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Apr. 09, 2026

A new study conducted by researchers with the Georgia Tech Supply Chain and Logistics Institute shows that the Port of Savannah is the most cost-effective and reliable gateway for cargo destined for Atlanta, Memphis, and Nashville. According to the research, shippers can save more than $1,000 per container by routing freight through Savannah instead of West Coast ports, when evaluating full end-to-end supply chain costs and transit reliability.

The study emphasizes that gateway decisions should not be based solely on ocean rates or sailing time. While trans-Pacific routes to the West Coast are shorter at sea, researchers found that congestion, cargo rehandling, and inland transportation complexity often introduce delays and variability. In contrast, Savannah's efficient port operations, on-terminal rail service, and direct interstate access help offset longer ocean voyages with faster inland movement and greater predictability.

Researchers analyzed vessel and inland transportation data from ten Asian ports to the three Southeastern markets. Their findings showed that Savannah's reliable port processing and inland logistics significantly reduce congestion exposure and transit variability, making it a more dependable gateway for shippers seeking consistent delivery performance.

The study was conducted by Georgia Tech faculty and PhD students at the Institute's Physical Internet Center and reinforces previous Atlanta-focused research demonstrating similar benefits of East Coast routing. The findings support the growing role of the Port of Savannah as a strategic gateway for U.S. supply chains serving inland Southeast markets.

Read the original press release from the Georgia Ports Authority here:

Georgia Tech research shows East Coast gateway best choice for Atlanta, Memphis and Nashville

News Contact

info@scl.gatech.edu

Apr. 10, 2026

Chris Gaffney, Managing Director of the Georgia Tech Supply Chain and Logistics Institute (SCL), was featured in a recent Atlanta News First segment examining how a potential conflict involving Iran could impact fuel prices and broader transportation costs.

Drawing on his expertise in supply chain economics and transportation systems, Gaffney discussed how disruptions in global energy markets can ripple through logistics networks, ultimately affecting consumers and businesses across Georgia and the Southeast.

Read the full Atlanta News First article and watch the related video: Experts Warn War With Iran Could Raise Costs, Georgia Fuel Prices Leading the Way

News Contact

info@scl.gatech.edu

Apr. 01, 2026

Manufacturing is undergoing a significant transformation as artificial intelligence reshapes how industrial systems operate, adapt, and scale. The H. Milton Stewart School of Industrial and Systems Engineering (ISyE) has launched its Manufacturing and AI Initiative, which brings together faculty expertise in statistics, optimization, data science, and systems engineering to address emerging challenges and opportunities in modern manufacturing.

ISyE researchers are applying AI to complex manufacturing environments, including multistage production systems, asset management, quality improvement, and human‑centered manufacturing. Faculty leaders emphasize the importance of contextualizing large volumes of manufacturing data so AI can support reliable decision‑making, efficient operations, and sustainable outcomes. At the same time, the initiative acknowledges challenges such as data integration, system complexity, and the need to balance automation with human involvement. Together, these efforts position ISyE at the forefront of shaping AI‑powered manufacturing systems that are innovative, resilient, and socially responsible.

Read the full article in ISyE Magazine

News Contact

Annette Filliat, ISyE Communications Writer

Mar. 25, 2026

Whether it’s a fire or a flood, a ship’s crew can only rely on itself and its training in emergencies at sea. The same is true for crews facing digital threats on oil tankers, cargo ships, and other commercial vessels.

New cybersecurity research from the Georgia Institute of Technology, however, revealed that crews aboard commercial vessels were often not adequately prepared to manage cyberattacks effectively due to systemic training gaps.

The findings are based on the researchers’ interviews with more than 20 officer-level mariners, conducted to assess the maritime industry’s readiness to handle cyberattacks at sea.

“Historically, cybersecurity research has focused heavily on cyber-physical systems like cars, factories, and industrial plants, but ships have largely been overlooked,” said Anna Raymaker, Ph.D. student and lead researcher.

“That gap is concerning when more than 90% of the world’s goods travel by sea. Recent incidents, from GPS spoofing to ships linked to subsea cable disruptions, show that maritime systems are increasingly part of the global cyber threat landscape.”

The researchers proposed four practical strategies to strengthen maritime cyber defenses and close the training gaps. Their findings were presented recently at the ACM SIGSAC Conference on Computer and Communications Security (CCS).

1. Make Cybersecurity Training Actually Maritime

Many of those interviewed for the study described current cybersecurity training as “boilerplate” — generic modules that don’t reflect real shipboard risks.

Researchers recommend:

- Role-specific instruction: Navigation officers should learn to detect and identify GPS spoofing. Engineers should focus on vulnerabilities in remotely monitored systems.

- Bridging IT and Operational Technology: Crews need to understand how attacks on IT systems can trigger physical consequences in operational technology — including collisions, groundings, or explosions.

- Hands-on delivery: Replace passive PowerPoints with drills and in-person exercises that build muscle memory.

- Accessible standards: Training must account for the wide range of educational backgrounds across crews and be standardized across ranks.

2. Move Beyond “Call IT”

At sea, crews can’t simply escalate a cyber incident to a shore-based IT department and wait. Operational resilience requires onboard readiness.

Researchers recommend:

- Vessel-specific response plans: Ships need clear, actionable protocols for threats such as AIS jamming or radar manipulation.

- Military-style drills: Adopting EMCON (Emission Control) exercises, which are used by the U.S. Military Sealift Command, can train crews to operate safely without electronic systems.

- Stronger connectivity controls: High-bandwidth satellite systems like Starlink introduce new risks. Clear policies and network segregation are essential to prevent new entry points for attackers.

Related Article: When GPS lies at sea: How electronic warfare is threatening ships and their crews by Anna Raymaker

3. Create Unified, Ship-Specific Regulations

Maritime cybersecurity regulations are often reactive and fragmented. Researchers argue the industry needs a cohesive, domain-specific framework.

Key recommendations include:

- A unified global model: Like the energy sector’s NERC CIP standards, a maritime framework could mandate baseline controls such as encryption, network segmentation, and anonymous incident reporting.

- Rules built for real crews: Regulations designed for large naval operations don’t translate well to smaller merchant or research vessels. Standards must reflect actual shipboard conditions.

- Future-proofing requirements: Autonomous ships and remotely operated vessels expand the cyber-physical attack surface. Regulations must proactively address these emerging technologies.

4. Invest in Maritime-Specific Cyber Research

Finally, the researchers stress that long-term resilience requires deeper technical research focused on maritime systems.

Priority areas include:

- Real-time intrusion detection systems tailored to shipboard protocols.

- Proactive security risk assessments of interconnected onboard systems.

- Cyber-physical modeling to better understand cascading failures in complex maritime environments.

The Bottom Line

Cyber threats at sea are no longer hypothetical. Mariners report real-world incidents ranging from GPS spoofing to ransomware that disrupts global trade.

“Through our interviews with mariners, I saw firsthand how much dedication and pride they take in their work,” said Raymaker. “Our goal is for this research to serve as a call to action for researchers, policymakers, and industry to invest more attention in maritime cybersecurity and support the people who risk their lives every day to keep global trade, food, and energy moving.”

A Sea of Cyber Threats: Maritime Cybersecurity from the Perspective of Mariners was presented at CCS 2025. It was written by Raymaker and her colleagues: Ph.D. students Akshaya Kumar, Miuyin Yong Wong, and Ryan Pickren; Research Scientist Animesh Chhotaray; Associate Professor Frank Li; Associate Professor Saman Zonouz; and Georgia Tech Provost and Executive Vice President for Academic Affairs Raheem Beyah.

News Contact

John Popham

Communications Officer II, School of Cybersecurity and Privacy
