Aug. 11, 2025

Team Atlanta, a group of Georgia Tech students, faculty, and alumni, achieved international fame on Friday when they won DARPA’s AI Cyber Challenge (AIxCC) and its $4 million grand prize.

AIxCC was a two-year competition to create a cyber reasoning system that uses artificial intelligence (AI) to autonomously find and patch vulnerabilities.

“This is a once in a generation competition organized by DARPA about how to utilize recent advancements in AI to use in security related tasks,” said Georgia Tech Professor Taesoo Kim.

“As hackers we started this competition as AI skeptics, but now we truly believe in the potential of adopting large language models (LLM) when solving security problems.”

The Atlantis system was Team Atlanta’s submission. Atlantis is built on a fuzzer, automated software that finds vulnerabilities or bugs, which the team enhanced with several different types of LLMs.
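For readers unfamiliar with fuzzing, the idea can be illustrated with a minimal sketch. This is not Atlantis or any part of Team Atlanta's code; the target function `parse_record`, its planted bug, and the helper names `mutate` and `fuzz` are all hypothetical, chosen only to show the loop of mutating an input, running the program, and watching for a crash.

```python
import random


def parse_record(data: bytes) -> int:
    """Hypothetical toy target with a planted bug: it trusts a length byte."""
    if len(data) < 2 or data[0] != 0x7F:
        return 0  # not the expected format, so the input is ignored
    declared_len = data[1]
    payload = data[2:]
    # Planted bug: indexes by the declared length without checking it
    # against the actual payload size.
    return payload[declared_len - 1] if declared_len else 0


def mutate(seed: bytes, rng: random.Random) -> bytes:
    """Overwrite one randomly chosen byte of the seed with a random value."""
    buf = bytearray(seed)
    buf[rng.randrange(len(buf))] = rng.randrange(256)
    return bytes(buf)


def fuzz(target, seed: bytes, iterations: int = 2000, rng_seed: int = 0):
    """Return the first mutated input that makes target raise, or None."""
    rng = random.Random(rng_seed)
    for _ in range(iterations):
        candidate = mutate(seed, rng)
        try:
            target(candidate)
        except Exception:
            return candidate  # a crashing input is a bug report
    return None


# Start from a well-formed record and let the fuzzer look for a crash.
crash = fuzz(parse_record, seed=bytes([0x7F, 2, 10, 20]))
print("crashing input:", crash)
```

Real fuzzers add coverage feedback, smarter mutation strategies, and crash triage on top of this loop; according to the team, LLMs were one of the enhancements Atlantis layered onto that foundation.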

While developing the system, Team Atlanta reported that the GPU rack put out enough heat to roast marshmallows.

The team was composed of hackers, engineers, and cybersecurity researchers. The Georgia Tech alumni on the team also represented their employers, which include KAIST, POSTECH, and Samsung Research. Kim is also the vice president of Samsung Research.

News Contact

John Popham

Communications Officer II at the School of Cybersecurity and Privacy

Jul. 22, 2025

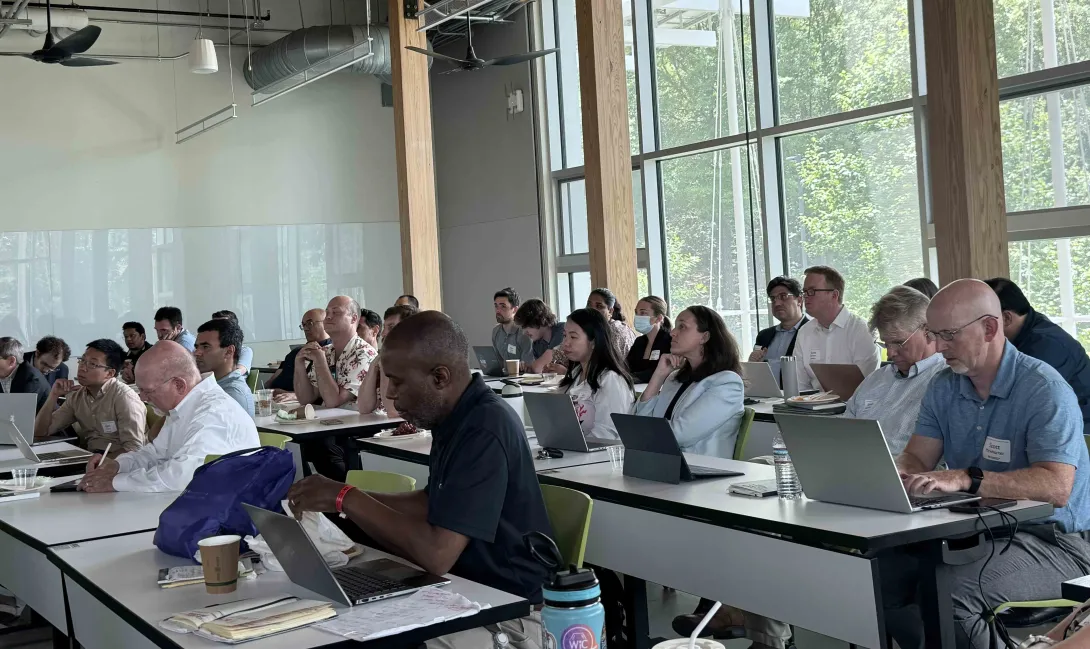

In June, the Strategic Energy Institute (SEI) hosted the Energy and National Security Summer Cohort Meeting, which convened seed grant awardees from the Energy and National Security Initiative. A partnership between SEI and the Georgia Tech Research Institute (GTRI), the initiative provides research support through a seed grant program that launched last summer.

“As national security needs rapidly evolve, Georgia Tech is leveraging its research ecosystem and seed funding programs to accelerate the development of transformational technologies and strategies that strengthen national resilience,” said Christine Conwell, interim executive director of SEI. “We designed this seed grant program to tackle pressing national security priorities of today, such as threats to the grid, nuclear security, supply chain resilience, and renewable integration.”

The event began with an introduction from John Tien, SEI distinguished external fellow, professor of the practice, and former deputy secretary for the Department of Homeland Security, who addressed the evolving and multifaceted challenges facing energy, national security, and policy today. Tien’s talk emphasized the importance of early, strategic research investments in driving sustainable progress and long-term solutions.

The seed grant awardees then presented the initial progress of their research projects through lightning talks and a Q&A session. The research projects included:

- Energy Infrastructure Security and Risk Assessment Through Interactive Wargaming.

- Evaluating Energy Storage Materials, Supplies, and Systems in the Context of National Security Requirements.

- Nanostructured Sensors for Monitoring of Nuclear Fuel Cycle.

- Resilient Critical Infrastructures via Provable Secure Control Algorithms.

- Robust Energy Systems Planning by Way of Novel Systems Engineering (RESPoNSE).

- SPARC: Severe-Weather Predictive Analytics and Resilient Communication.

- The Strategic Mineral Economy: Challenges and Opportunities for Critical Resources.

“That critical intersection between energy and national security is where both risk and opportunity lie. To mitigate those risks and take advantage of the opportunities, our project teams have developed research topic areas that align with the U.S. Department of Energy's nine pillars for American energy dominance and security, as well as ongoing U.S. Department of Defense priorities,” said Tien.

The meeting showcased Georgia Tech’s collaborative and forward-looking research at the intersection of energy and national security, aimed at shaping a more secure and resilient energy future.

Written by: Katie Strickland & Priya Devarajan

News Contact

Priya Devarajan | SEI Communications Program Manager

May. 14, 2025

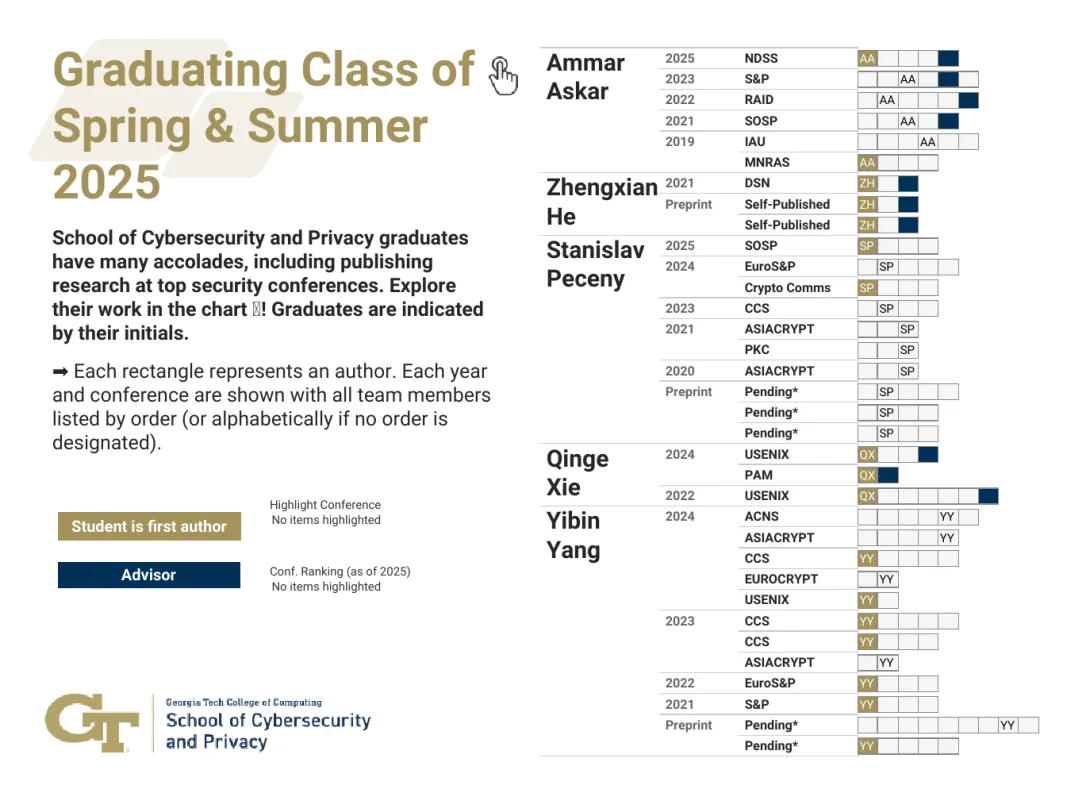

The School of Cybersecurity and Privacy at Georgia Tech is proud to recognize the accomplishments of five doctoral students who finished their doctoral programs in Spring 2025. These scholars have advanced critical research in software security, cryptography, and privacy, collectively publishing 34 papers, most of which appear in top-tier venues.

Ammar Askar developed new tools for software security in multi-language systems, including a concolic execution engine powered by large language models. He highlighted DEFCON 2021, which he attended with the Systems Software and Security Lab (SSLab), as a favorite memory.

Zhengxian He persevered through the pandemic to lead a major project with an industry partner, achieving strong research outcomes. He will be joining Amazon and fondly remembers watching sunsets from the CODA building.

Stanislav Peceny focused on secure multiparty computation (MPC), designing high-performance cryptographic protocols that improve efficiency by up to 1000x. He’s known for his creativity in both research and life, naming avocado trees after famous mathematicians and enjoying research discussions on the CODA rooftop.

Qinge Xie impressed faculty with her adaptability across multiple domains. Her advisor praised her independence and technical range, noting her ability to pivot seamlessly between complex research challenges.

Yibin Yang contributed to the advancement of zero-knowledge proofs and MPC, building toolchains that are faster and more usable than existing systems. His work earned a Distinguished Paper Award at ACM CCS 2023, and he also served as an RSAC Security Scholar. Yang enjoyed teaching and engaging with younger students, especially through events like Math Kangaroo.

Faculty mentors included Regents’ Entrepreneur Mustaque Ahamad, Professors Taesoo Kim and Vladimir Kolesnikov, and Assistant Professor Frank Li, who played vital roles in guiding the graduates’ research journeys.

Learn more about the graduates and their mentors on the 2025 Ph.D. graduate microsite.

News Contact

JP Popham, Communications Officer II

College of Computing | School of Cybersecurity and Privacy

Feb. 14, 2025

Men and women in California put their lives on the line when battling wildfires every year, but there is a future where machines powered by artificial intelligence are on the front lines, not firefighters.

However, this new generation of self-thinking robots would need security protocols to ensure they aren’t susceptible to hackers. Before such robots can be integrated into society, they must come with assurances that they will behave safely around humans.

It raises the question: can you guarantee the safety of something that doesn’t exist yet? That is what Assistant Professor Glen Chou hopes to accomplish by developing algorithms that will enable autonomous systems to learn and adapt while acting with safety and security assurances.

He plans to launch research initiatives, in collaboration with the School of Cybersecurity and Privacy and the Daniel Guggenheim School of Aerospace Engineering, to secure this new technological frontier as it develops.

“To operate in uncertain real-world environments, robots and other autonomous systems need to leverage and adapt a complex network of perception and control algorithms to turn sensor data into actions,” he said. “To obtain realistic assurances, we must do a joint safety and security analysis on these sensors and algorithms simultaneously, rather than one at a time.”

This end-to-end method would proactively look for flaws in the robot’s systems rather than wait for them to be exploited. This would lead to intrinsically robust robotic systems that can recover from failures.

Chou said this research will be useful in other domains, including advanced space exploration. If a space rover is sent to one of Saturn’s moons, for example, it needs to be able to act and think independently of scientists on Earth.

Aside from fighting fires and exploring space, this technology could perform maintenance in nuclear reactors, automatically maintain the power grid, and make autonomous surgery safer. It could also bring assistive robots into the home, enabling higher standards of care.

This is a challenging domain where safety, security, and privacy concerns are paramount due to frequent, close contact with humans.

This work will start in the newly established Trustworthy Robotics Lab at Georgia Tech, which Chou directs. He and his Ph.D. students will design principled algorithms that enable general-purpose robots and autonomous systems to operate capably, safely, and securely with humans while remaining resilient to real-world failures and uncertainty.

Chou earned dual bachelor’s degrees, one in electrical engineering and computer sciences and one in mechanical engineering, from the University of California, Berkeley in 2017, and a master’s and a Ph.D. in electrical and computer engineering from the University of Michigan in 2019 and 2022, respectively. He was a postdoc at the MIT Computer Science & Artificial Intelligence Laboratory prior to joining Georgia Tech in November 2024. He is a recipient of National Defense Science and Engineering Graduate and NSF Graduate Research fellowships and was named a Robotics: Science and Systems Pioneer in 2022.

News Contact

John (JP) Popham

Communications Officer II

College of Computing | School of Cybersecurity and Privacy

Nov. 25, 2024

Cybersecurity researchers have discovered new vulnerabilities that could provide criminals with wireless access to the computer systems in automobiles, aircraft, factories, and other cyber-physical systems.

The computers used in vehicles and other cyber-physical systems rely on a specialized internal network to communicate commands between electronics. Because this communication takes place internally, it was traditionally assumed that attackers could only influence the network through physical access.

In collaboration with Hyundai, researchers from Georgia Tech’s Cyber-Physical Systems Security Research Lab (CPSec) observed that threat models used to evaluate the security of these technologies were outdated.

The team, led by Ph.D. student Zhaozhou Tang, found that vehicle technology advancements allowed attackers to launch new attacks, improve existing attacks, and circumvent current defense systems.

For example, Tang’s findings included the possibility for attackers to remotely compromise the computers used in cars and aircraft through Wi-Fi, cellular, Bluetooth, and other wireless channels.

“Our job was to thoroughly review existing information and find ways to protect against these attacks,” he said. “We found new threats and proposed a defense system that can protect against the new and old attacks.”

In response to their findings, the team developed ERACAN, the first comprehensive defense system against this new generation of attackers. Designed to detect new and old attacks, ERACAN can deploy defenses when necessary.

The system also classifies the attacks it reacts to, providing security experts with the tools for detailed analysis. It has a detection rate of 100% for all attacks launched by conventional methods and detects enhanced threat models 99.7% of the time.

The project received a distinguished paper award at the 2024 ACM Conference on Computer and Communications Security (CCS 24) held in Salt Lake City. Tang presented the paper at the October conference.

“This was Zhaozhou’s first paper in his Ph.D. program, and he deserves recognition for his groundbreaking work on automotive cybersecurity,” said Saman Zonouz, associate professor in the School of Cybersecurity and Privacy and the School of Electrical and Computer Engineering.

The U.S. Department of Homeland Security has designated the transportation sector as one of the nation’s 16 critical infrastructure sectors. Ensuring its security is vital to national security and public safety.

“Modern vehicles, which rely heavily on controller area networks for essential operations, are integral components of this infrastructure,” said Zonouz. “With the increasing sophistication of cyberthreats, safeguarding these systems has become critical to ensuring the resilience and security of transportation networks.”

This paper introduced to the scientific community the first comprehensive defense system to address advanced threats targeting vehicular controller area networks.

The CPSec team is putting the technology it has developed into practice in collaboration with Hyundai America Technical Center, Inc., which sponsors the work. Tang hopes ERACAN’s success will raise awareness of these new threats in the research community and industry.

“It will help them build future defenses,” he said. “We have demonstrated the best practice to defend against these attacks.”

Tang received his bachelor’s degree at Georgia Tech, where he first performed security-related work for the automobile industry. While working with Zonouz on his master’s degree, he decided to change course and pursue research initiatives like vehicle security in a Ph.D. program.

“It is interesting how it came full circle,” he said. “I will continue on this path of automobile security throughout my Ph.D.”

The paper, ERACAN: Defending Against an Emerging CAN Threat Model, was written by Zhaozhou Tang; Khaled Serag from the Qatar Computing Research Institute; Saman Zonouz; Berkay Celik and Dongyan Xu from Purdue University; and Raheem Beyah, professor and dean of the College of Engineering. The CPSec Lab is a collaboration between the School of Cybersecurity and Privacy and the School of Electrical and Computer Engineering.

News Contact

John Popham

Communications Officer II

School of Cybersecurity and Privacy

Oct. 18, 2024

The U.S. Department of Energy (DOE) has awarded Georgia Tech researchers a $4.6 million grant to develop improved cybersecurity protection for renewable energy technologies.

Associate Professor Saman Zonouz will lead the project and leverage the latest artificial intelligence (AI) technology to create Phorensics. The new tool will anticipate cyberattacks on critical infrastructure and provide analysts with an accurate reading of what vulnerabilities were exploited.

“This grant enables us to tackle one of the crucial challenges facing national security today: our critical infrastructure resilience and post-incident diagnostics to restore normal operations in a timely manner,” said Zonouz.

“Together with our amazing team, we will focus on cyber-physical data recovery and post-mortem forensics analysis after cybersecurity incidents in emerging renewable energy systems.”

As the integration of renewable energy technology into national power grids increases, so does their vulnerability to cyberattacks. These threats put energy infrastructure at risk and pose a significant danger to public safety and economic stability. The AI behind Phorensics will allow analysts and technicians to scale security efforts to keep up with a growing power grid that is becoming more complex.

This effort is part of the Security of Engineering Systems (SES) initiative at Georgia Tech’s School of Cybersecurity and Privacy (SCP). SES has three pillars: research, education, and testbeds, with multiple ongoing large, sponsored efforts.

“We had a successful hiring season for SES last year and will continue filling several open tenure-track faculty positions this upcoming cycle,” said Zonouz.

“With top-notch cybersecurity and engineering schools at Georgia Tech, we have begun the SES journey with a dedicated passion to pursue building real-world solutions to protect our critical infrastructures, national security, and public safety.”

Zonouz is the director of the Cyber-Physical Systems Security Laboratory (CPSec) and is jointly appointed by Georgia Tech’s School of Cybersecurity and Privacy (SCP) and the School of Electrical and Computer Engineering (ECE).

The three Georgia Tech researchers joining him on this project are Brendan Saltaformaggio, associate professor in SCP and ECE; Taesoo Kim, jointly appointed professor in SCP and the School of Computer Science; and Animesh Chhotaray, research scientist in SCP.

Katherine Davis, associate professor at the Texas A&M University Department of Electrical and Computer Engineering, has partnered with the team to develop Phorensics. The team will also collaborate with the National Renewable Energy Laboratory (NREL) and industry partners for technology transfer and commercialization initiatives.

The Energy Department defines renewable energy as energy from unlimited, naturally replenished resources, such as the sun, tides, and wind. Renewable energy can be used for electricity generation, space and water heating and cooling, and transportation.

News Contact

John Popham

Communications Officer II

College of Computing | School of Cybersecurity and Privacy

Oct. 24, 2024

Eight Georgia Tech researchers were honored with the ACM Distinguished Paper Award for their groundbreaking contributions to cybersecurity at the recent ACM Conference on Computer and Communications Security (CCS).

Three papers were recognized for addressing critical challenges in the field, spanning areas such as automotive cybersecurity, password security, and cryptographic testing.

“These three projects underscore Georgia Tech's leadership in advancing cybersecurity solutions that have real-world impact, from protecting critical infrastructure to ensuring the security of future computing systems and improving everyday digital practices,” said School of Cybersecurity and Privacy (SCP) Chair Michael Bailey.

One of the papers, ERACAN: Defending Against an Emerging CAN Threat Model, was co-authored by Ph.D. student Zhaozhou Tang, Associate Professor Saman Zonouz, and College of Engineering Dean and Professor Raheem Beyah. This research focuses on securing the controller area network (CAN), a vital system used in modern vehicles that is increasingly targeted by cyber threats.

"This project is led by our Ph.D. student Zhaozhou Tang with the Cyber-Physical Systems Security (CPSec) Lab," said Zonouz. "Impressively, this was Zhaozhou's first paper in his Ph.D., and he deserves special recognition for this groundbreaking work on automotive cybersecurity."

The work introduces a comprehensive defense system to counter advanced threats to vehicular CAN networks, and the team is collaborating with the Hyundai America Technical Center to implement the research. The CPSec Lab is a collaborative effort between SCP and the School of Electrical and Computer Engineering (ECE).

In another paper, Testing Side-Channel Security of Cryptographic Implementations Against Future Microarchitectures, Assistant Professor Daniel Genkin collaborated with international researchers to define security threats in new computing technology.

"We appreciate ACM for recognizing our work," said Genkin. “Tools for early-stage testing of CPUs for emerging side-channel threats are crucial to ensuring the security of the next generation of computing devices.”

The third paper, Unmasking the Security and Usability of Password Masking, was authored by graduate students Yuqi Hu, Suood Al Roomi, and Sena Sahin, and by Frank Li, an assistant professor in SCP and ECE. This study investigated the effectiveness of password masking, the practice of hiding characters as they are typed, and offered recommendations for implementing it.

"Password masking is a widely deployed security mechanism that hasn't been extensively investigated in prior works," said Li.

The assistant professor credited the collaborative efforts of his students, particularly Yuqi Hu, for leading the project.

The ACM Conference on Computer and Communications Security (CCS) is the flagship annual conference of the Special Interest Group on Security, Audit and Control (SIGSAC) of the Association for Computing Machinery (ACM). The conference was held Oct. 14-18 in Salt Lake City.

News Contact

John Popham

Communications Officer II

College of Computing | School of Cybersecurity and Privacy

Apr. 19, 2024

When U.S. Rep. Earl L. “Buddy” Carter from Georgia’s 1st District visited Atlanta recently, one of his top priorities was meeting with the experts at Georgia Tech’s 20,000-square-foot Advanced Manufacturing Pilot Facility (AMPF).

Carter was recently named the House Energy and Commerce Committee’s chair of the Environment, Manufacturing, and Critical Materials Subcommittee, a group that concerns itself primarily with contamination of soil, air, noise, and water, as well as emergency environmental response, whether physical or cybersecurity.

Because AMPF’s focus dovetails with subcommittee interests, the facility was a fitting stop for Carter, who was welcomed for an afternoon tour and series of live demonstrations. Programs within Georgia Tech’s Enterprise Innovation Institute — specifically the Georgia Artificial Intelligence in Manufacturing (Georgia AIM) and Georgia Manufacturing Extension Partnership (GaMEP) — were well represented.

“Innovation is extremely important,” Carter said during his April 1 visit. “In order to handle some of our problems, we’ve got to have adaptation, mitigation, and innovation. I’ve always said that the greatest innovators, the greatest scientists in the world, are right here in the United States. I’m so proud of Georgia Tech and what they do for our state and for our nation.”

Carter’s AMPF visit began with an introduction by Thomas Kurfess, Regents' Professor and HUSCO/Ramirez Distinguished Chair in Fluid Power and Motion Control in the George W. Woodruff School of Mechanical Engineering and executive director of the Georgia Tech Manufacturing Institute; Steven Ferguson, principal research scientist and managing director at Georgia AIM; research engineer Kyle Saleeby; and Donna Ennis, the Enterprise Innovation Institute’s director of community engagement and program development, and co-director of Georgia AIM.

Ennis provided an overview of Georgia AIM, while Ferguson spoke on the Manufacturing 4.0 Consortium and Kurfess detailed the AMPF origin story, before introducing four live demonstrations.

The first of these featured Chuck Easley, Professor of the Practice in the Scheller College of Business, who elaborated on supply chain issues. Afterward, Alan Burl of EPICS: Enhanced Preparation for Intelligent Cybermanufacturing Systems and mechanical engineer Melissa Foley led a brief information session on hybrid turbine blade repair.

Finally, GaMEP project manager Michael Barker expounded on GaMEP’s cybersecurity services, and Deryk Stoops of Central Georgia Technical College detailed the Georgia AIM-sponsored AI robotics training program at the Georgia Veterans Education Career Transition Resource (VECTR) Center, which offers training and assistance to those making the transition from military to civilian life.

The topic of artificial intelligence, in all its subtlety and nuance, was of particular interest to Carter.

“AI is the buzz in Washington, D.C.,” he said. “Whether it be healthcare, energy, [or] science, we on the Energy and Commerce Committee look at it from a sense [that there’s] a very delicate balance, and we understand the responsibility. But we want to try to benefit from this as much as we can.”

“I heard something today I haven’t heard before," Carter continued, "and that is instead of calling it artificial intelligence, we refer to it as ‘augmented intelligence.’ I think that’s a great term, and certainly something I’m going to take back to Washington with me.”

“It was a pleasure to host Rep. Carter for a firsthand look at AMPF," shared Ennis, "which is uniquely positioned to offer businesses the opportunity to collaborate with Georgia Tech researchers and students and to hear about Georgia AIM.

“At Georgia AIM, we’re committed to making the state a leader in artificial intelligence-assisted manufacturing, and we’re grateful for Congressman Carter’s interest and support of our efforts."

News Contact

Eve Tolpa

Senior Writer/Editor

Enterprise Innovation Institute (EI2)

Feb. 12, 2024

The University of Waterloo and the Board of Regents of the University System of Georgia, representing Georgia Institute of Technology (Georgia Tech), have officially entered a Memorandum of Understanding (MOU) to strengthen academic and research ties between the two institutions. The MOU signifies a commitment to fostering collaborative initiatives in research, education, and other areas of mutual interest. Both universities, recognized for their global impact and innovation, are eager to embark on this journey of cooperation.

Charmaine Dean, Vice-President of Research & International, shared, “The University of Waterloo is pleased to embark on a new collaboration with Georgia Tech, featuring faculty and student exchanges, joint research projects, dual degrees, and conferences. Strengthening ties between our institutions through this collaboration creates a dynamic environment for our faculty and students to foster innovation in many areas of mutual excellence.”

“Georgia Tech is excited to see its NSF AI Institute for Advances in Optimization (AI4OPT), under the leadership of Prof. Pascal Van Hentenryck, partner with experts from the Waterloo Artificial Intelligence Institute of the University of Waterloo. I am really looking forward to the impact that this partnership will have in advancing the fundamental knowledge of AI, in further expanding its applications, and in enabling its wider adoption,” noted Prof. Bernard Kippelen, Vice Provost for International Initiatives at Georgia Tech.

This collaboration is poised to elevate the academic and research landscape of both institutions, promoting global engagement and creating opportunities for students and faculty to thrive in an interconnected world.

News Contact

Breon Martin

Jan. 11, 2024

In 1950, Alan Turing asked, “Can machines think?” More than 70 years later, advancements in artificial intelligence are creating exciting possibilities and questions about its potential pitfalls.

A recent executive order issued by President Joe Biden seeks to establish "new standards for AI safety and security" while addressing consumer privacy concerns and promoting innovation. Georgia Tech experts have examined the key elements of the order and offer their thoughts on its scope and what comes next.

A Precautionary Tale

The order calls for the development of standards, tools, and tests to ensure the safe use of AI. From voice scams and phishing campaigns to larger-scale threats, the technology’s potential dangers have been widely documented. But Margaret Kosal, associate professor in the Ivan Allen College of Liberal Arts, says that additional context is often needed to dispel hysteria.

"No one is going to be hooking up AI to launch nuclear weapons, but AI capabilities may enable targeting, or enable the command and control and the decision-making time to be compressed,” she said.

The order will create an AI Safety and Security Board tasked with addressing critical threats. Companies developing foundation models that "pose a serious risk to national security, national economic security, or national public health and safety” will be required to notify the federal government when training the model and to share the results of all red-team safety tests, which are simulated cyberattacks that probe a system's defenses.

A CNBC report details a 1,267% rise in phishing emails since the launch of ChatGPT in 2022. Srijan Kumar, assistant professor in the College of Computing, attributes the increase to the technology's availability and an inability to rein in "bad actors."

He says these scams will only continue to get more sophisticated and personalized. They “can be created by knowing what you might be willing to fall prey to versus what I might fall prey to,” said Kumar, whose systems have influenced misinformation detection on sites like X (formerly Twitter) and Wikipedia. “AI is not going to autonomously do all of those bad things, but this order can ensure there are consequences for people who misuse it.”

A Delicate Balance

Building an AI platform requires large amounts of data regardless of its intended application. Two primary goals of the executive order are protecting privacy and advancing equity.

To protect personal data, the order tasks Congress with evaluating how agencies collect and use commercially available information and address algorithmic discrimination.

Acknowledging that everyone should be allowed to have their voice represented in the outputs of AI data sets, Deven Desai, associate professor in the Scheller College of Business, noted, "There are people who don't want to be part of data sets, which is their right, but this means their voices won't be reflected in the outputs.”

The order also includes sections to address intellectual property concerns among inventors and creators, though legal challenges will likely set new precedents in the years ahead.

When that time comes, Kosal says that defining “theft” in the context of AI becomes the true challenge and that, ultimately, money will play a significant role. "If you spit out a Harry Potter book and read it yourself, nobody will care. It's when you start selling it to make money, and you don't share proceeds with the original people, then it becomes an issue," she said.

What Does AI-Generated Mean?

The order instructs the Department of Commerce to develop guidelines for content authentication and watermarking to label AI-generated content. Desai questions what it means for something to be truly created by AI.

An important distinction lies between using AI to assist a writer in organizing their thoughts and using the technology to generate content. He likens the trend to the music industry in the 1980s.

"Synthesizers really changed people's ability to generate music and, for a while, people thought that was horrible. They can just program the music. They're not. I am still the human responsible for that music, or that article in this case, so what is the point of the label?" he asks.

As AI assistance becomes commonplace in content creation, trusting the source of information is increasingly important. Recently, articles published on Sports Illustrated's website featured AI-generated content provided by a third-party company that had used a machine to write the content and create fake bylines. Sports Illustrated, which may not have known of the problem, ran the material without disclosure to readers. CEO Ross Levinsohn was ousted shortly after the story broke.

“Perhaps if the third party had disclosed its use of AI software, SI would have been able to assess how much AI was used and then chosen not to run the material, or to run it with a disclaimer that AI helped write the material,” Desai said. "Of course, even if they label the content as AI-generated, a reader still won't know exactly how much of the content came from AI or a human.”

AI and the Workforce

As AI systems and models become more sophisticated, workers may become more concerned about being replaced. To counteract these concerns, the order calls for a study to examine AI’s potential impact on labor markets and investments in workforce training efforts.

Kumar compares the rise of AI to similar technological innovations throughout history and sees it as an opportunity for workers and industries to adapt. "It's less a matter of AI replacing workers and more of reskilling people to use the new technology. It's no different from when assembly lines in the auto industry were created."

Promoting Innovation and Competition

The power to harness the full potential of AI has initiated a race to the top. Desai believes that part of the executive order providing resources to smaller developers can help level the playing field.

"There is a possibility here for markets to open up. Current players using models that weren't built with transparency in mind might struggle, but maybe that's OK."

The issue of reliability and transparency comes into focus for Desai, especially as it relates to government usage of AI. The order calls on agencies to "acquire specified AI products and services faster, more cheaply, and more effectively through more rapid and efficient contracting."

When taxpayer dollars are at stake, government can’t afford to trust a technology it doesn’t fully understand — a topic Desai has explored elsewhere. "You can’t just say, ‘We don’t know how it works, but we trust it.’ That’s not going to work. So that’s where there may be a slowdown in the government’s ability to use private sector software if they can’t explain how the thing works and to show that it doesn’t have discriminatory issues.”

What's Next

Promoting and policing the safe use of AI cannot be done independently. Georgia Tech experts agree that participation on a global scale is necessary. To that end, the European Union will unveil its comprehensive EU AI Act, which includes a framework similar to the president's executive order.

Due to the evolving nature of AI, neither the executive order nor the EU's actions will be all-encompassing. Law often lags behind technology, but Kosal points out that it's crucial to think beyond what currently exists when crafting policy.

Experts also agree that AI cannot be regulated or governed through a single document and that this order is likely the first in a series of policymaking moves. Kosal sees tremendous opportunity with the innovation surrounding AI but hopes the growing fear of its rise does not usher in another AI winter, in which interest and research funding fade.

News Contact

Steven Gagliano - Institute Communications
