May 15, 2024

Georgia Tech researchers say non-English speakers shouldn’t rely on chatbots like ChatGPT to provide valuable healthcare advice.

A team of researchers from the College of Computing at Georgia Tech has developed a framework for assessing the capabilities of large language models (LLMs).

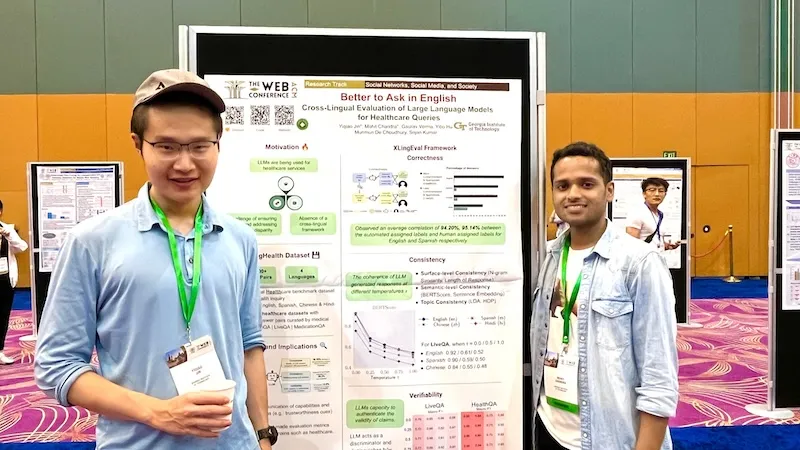

Ph.D. students Mohit Chandra and Yiqiao (Ahren) Jin are the co-lead authors of the paper Better to Ask in English: Cross-Lingual Evaluation of Large Language Models for Healthcare Queries.

Their paper’s findings reveal a gap in LLMs’ ability to answer health-related questions in languages other than English. Chandra and Jin point out the limitations of LLMs for users and developers but also highlight their potential.

Findings from their XLingEval framework caution non-English speakers against using chatbots as alternatives to doctors for advice. However, models can improve by deepening the data pool with multilingual source material such as their proposed XLingHealth benchmark.

“For users, our research supports what ChatGPT’s website already states: chatbots make a lot of mistakes, so we should not rely on them for critical decision-making or for information that requires high accuracy,” Jin said.

“Since we observed this language disparity in their performance, LLM developers should focus on improving accuracy, correctness, consistency, and reliability in other languages,” Jin said.

Using XLingEval, the researchers found chatbots are less accurate in Spanish, Chinese, and Hindi compared to English. By focusing on correctness, consistency, and verifiability, they discovered:

- Correctness decreased by 18% when the same questions were asked in Spanish, Chinese, and Hindi.

- Answers in non-English languages were 29% less consistent than their English counterparts.

- Non-English responses were 13% less verifiable overall.
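The percentages above compare non-English performance with the English baseline. The paper defines exactly how each metric is scored and aggregated; the sketch below simply assumes the figure is a relative drop of the mean non-English score from the English score. The function name and the per-language scores are hypothetical placeholders, not results from the study.

```python
from statistics import mean


def relative_drop(english_score: float, other_scores: list[float]) -> float:
    """Percent decrease of the mean non-English score relative to English.

    Assumption: the reported gap is a relative (not absolute) difference.
    """
    if english_score <= 0:
        raise ValueError("English baseline must be positive")
    return 100.0 * (english_score - mean(other_scores)) / english_score


# Hypothetical correctness scores (fraction of answers judged correct).
english = 0.80
spanish, chinese, hindi = 0.72, 0.68, 0.64
print(f"{relative_drop(english, [spanish, chinese, hindi]):.1f}% drop")
```

Reading the gap as relative makes metrics on different scales comparable; an absolute difference in percentage points would give a different number for the same scores.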

XLingHealth contains question-answer pairs that chatbots can reference, which the group hopes will spark improvement within LLMs.

The HealthQA dataset uses specialized healthcare articles from the popular healthcare website Patient. It includes 1,134 health-related question-answer pairs drawn as excerpts from the original articles.

LiveQA is a second dataset containing 246 question-answer pairs constructed from frequently asked question (FAQ) platforms associated with the U.S. National Institutes of Health (NIH).

For drug-related questions, the group built a MedicationQA component. This dataset contains 690 questions extracted from anonymous consumer queries submitted to MedlinePlus. The answers are sourced from medical references, such as MedlinePlus and DailyMed.

In their tests, the researchers asked over 2,000 medical questions to ChatGPT-3.5 and MedAlpaca. MedAlpaca is a healthcare question-answer chatbot trained on medical literature. Yet more than 67% of its responses to non-English questions were irrelevant or contradictory.

“We see far worse performance in the case of MedAlpaca than ChatGPT,” Chandra said.

“The majority of the data for MedAlpaca is in English, so it struggled to answer queries in non-English languages. GPT also struggled, but it performed much better than MedAlpaca because it had some sort of training data in other languages.”

Ph.D. student Gaurav Verma and postdoctoral researcher Yibo Hu co-authored the paper.

Jin and Verma study under Srijan Kumar, an assistant professor in the School of Computational Science and Engineering, and Hu is a postdoc in Kumar’s lab. Chandra is advised by Munmun De Choudhury, an associate professor in the School of Interactive Computing.

The team will present their paper at The Web Conference, occurring May 13-17 in Singapore. The annual conference focuses on the future direction of the internet. The group’s presentation is a fitting match for the conference’s location.

English and Chinese are the most common languages in Singapore. The group tested Spanish, Chinese, and Hindi because they are the world’s most spoken languages after English. Personal curiosity and background played a part in inspiring the study.

“ChatGPT was very popular when it launched in 2022, especially for us computer science students who are always exploring new technology,” said Jin. “Non-native English speakers, like Mohit and I, noticed early on that chatbots underperformed in our native languages.”

School of Interactive Computing communications officer Nathan Deen and School of Computational Science and Engineering communications officer Bryant Wine contributed to this report.

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Nathan Deen, Communications Officer

ndeen6@cc.gatech.edu

May 06, 2024

Cardiologists and surgeons could soon have a new mobile augmented reality (AR) tool to improve collaboration in surgical planning.

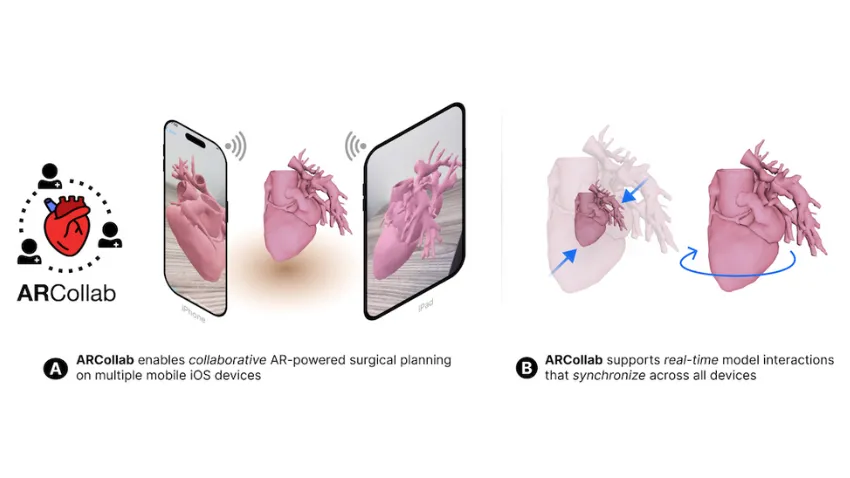

ARCollab is an iOS AR application designed for doctors to interact with patient-specific 3D heart models in a shared environment. It is the first surgical planning tool that uses multi-user mobile AR on iOS.

The application’s collaborative feature overcomes limitations in traditional surgical modeling and planning methods. Patients, in turn, can receive better, more personalized care from doctors who plan and collaborate with the tool.

Georgia Tech researchers partnered with Children’s Healthcare of Atlanta (CHOA) in ARCollab’s development. Pratham Mehta, a computer science major, led the group’s research.

“We have conducted two trips to CHOA for usability evaluations with cardiologists and surgeons. The overall feedback from ARCollab users has been positive,” Mehta said.

“They all enjoyed experimenting with it and collaborating with other users. They also felt like it had the potential to be useful in surgical planning.”

ARCollab’s collaborative environment is the tool’s most novel feature. It allows surgical teams to study and plan together in a virtual workspace, regardless of location.

ARCollab supports a toolbox of features for doctors to inspect and interact with their patients' AR heart models. With a few finger gestures, users can scale and rotate, “slice” into the model, and modify a slicing plane to view omnidirectional cross-sections of the heart.
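Slicing a model comes down to a plane test: each vertex has a signed distance to the slicing plane, and wherever a mesh edge joins vertices on opposite sides, the cross-section passes through that edge. The sketch below illustrates that geometry on a unit cube standing in for a heart mesh. It is a hypothetical illustration, not ARCollab’s code (which runs on iOS), and the function names are made up for this example.

```python
Vec3 = tuple[float, float, float]


def signed_distance(p: Vec3, plane_point: Vec3, normal: Vec3) -> float:
    """Signed distance from p to the plane; assumes `normal` has unit length."""
    return sum((p[i] - plane_point[i]) * normal[i] for i in range(3))


def slice_edges(vertices: list[Vec3], edges: list[tuple[int, int]],
                plane_point: Vec3, normal: Vec3) -> list[Vec3]:
    """Return the points where mesh edges cross the slicing plane."""
    crossings = []
    for a, b in edges:
        da = signed_distance(vertices[a], plane_point, normal)
        db = signed_distance(vertices[b], plane_point, normal)
        if da * db < 0:  # endpoints lie on opposite sides of the plane
            t = da / (da - db)  # fraction of the way from a to b
            crossings.append(tuple(
                vertices[a][i] + t * (vertices[b][i] - vertices[a][i])
                for i in range(3)))
    return crossings


# Hypothetical stand-in mesh: the 8 corners and 12 edges of a unit cube.
cube = [(float(x), float(y), float(z)) for x in (0, 1) for y in (0, 1) for z in (0, 1)]
cube_edges = [(a, b) for a in range(8) for b in range(a + 1, 8)
              if sum(cube[a][k] != cube[b][k] for k in range(3)) == 1]

plane_point, normal = (0.0, 0.0, 0.5), (0.0, 0.0, 1.0)
section = slice_edges(cube, cube_edges, plane_point, normal)
# Vertices on the positive side would be hidden to expose the cut.
hidden = [v for v in cube if signed_distance(v, plane_point, normal) > 0]
print(sorted(section))
```

Moving or rotating the slicing plane only changes `plane_point` or `normal`, so the cross-section can be recomputed each time a gesture updates the plane.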

Developing ARCollab on iOS has two benefits. First, it streamlines deployment and accessibility, since the app can be distributed through the iOS App Store to Apple devices. Second, building ARCollab on Apple’s peer-to-peer networking framework helps ensure that the AR components function as intended. It also lessens the learning curve, especially for experienced AR users.

ARCollab offers an alternative to the traditional surgical planning practice of using physical heart models. Producing physical models is time-consuming, resource-intensive, and irreversible compared to digital models. It is also difficult for surgical teams to plan together, since they are limited to studying a single physical model.

Digital and AR modeling is growing as an alternative to physical models. CardiacAR is one such tool the group has already created.

However, existing digital platforms lack the multi-user features surgical teams need to collaborate during planning. ARCollab’s multi-user workspace advances the technology’s potential as a widespread replacement for physical models.

“Over the past year and a half, we have been working on incorporating collaboration into our prior work with CardiacAR,” Mehta said.

“This involved completely changing the codebase, rebuilding the entire app and its features from the ground up in a newer AR framework that was better suited for collaboration and future development.”

Its interactive and visualization features, along with its novelty and innovation, led the Conference on Human Factors in Computing Systems (CHI 2024) to accept ARCollab for presentation. The conference occurs May 11-16 in Honolulu.

CHI is considered the most prestigious conference for human-computer interaction and one of the top-ranked conferences in computer science.

M.S. student Harsha Karanth and alumnus Alex Yang (CS 2022, M.S. CS 2023) co-authored the paper with Mehta. They study under Polo Chau, an associate professor in the School of Computational Science and Engineering.

The Georgia Tech group partnered with Timothy Slesnick and Fawwaz Shaw from CHOA on ARCollab’s development.

“Working with the doctors and having them test out versions of our application and give us feedback has been the most important part of the collaboration with CHOA,” Mehta said.

“These medical professionals are experts in their field. We want to make sure to have features that they want and need, and that would make their job easier.”


May 06, 2024

Thanks to a Georgia Tech researcher’s new tool, application developers can now see potentially harmful attributes in their prototypes.

Farsight is a tool designed for developers who use large language models (LLMs) to create applications powered by artificial intelligence (AI). Farsight alerts prototypers when they write LLM prompts that could be harmful or misused.

Downstream users can expect to benefit from better quality and safer products made with Farsight’s assistance. The tool’s lasting impact, though, is that it fosters responsible AI awareness by coaching developers on the proper use of LLMs.

Machine Learning Ph.D. candidate Zijie (Jay) Wang is Farsight’s lead architect. He will present the paper at the upcoming Conference on Human Factors in Computing Systems (CHI 2024). Farsight ranked in the top 5% of papers accepted to CHI 2024, earning it an honorable mention for the conference’s best paper award.

“LLMs have empowered millions of people with diverse backgrounds, including writers, doctors, and educators, to build and prototype powerful AI apps through prompting. However, many of these AI prototypers don’t have training in computer science, let alone responsible AI practices,” said Wang.

“With a growing number of AI incidents related to LLMs, it is critical to make developers aware of the potential harms associated with their AI applications.”

Wang cited an example in which two lawyers used ChatGPT to write a legal brief. A U.S. judge sanctioned the lawyers because their brief contained six fictitious case citations that the LLM had fabricated.

With Farsight, the group aims to improve developers’ awareness of responsible AI use. It achieves this by highlighting potential use cases, affected stakeholders, and possible harm associated with an application in the early prototyping stage.

A user study involving 42 prototypers showed that developers could better identify potential harms associated with their prompts after using Farsight. The users also found the tool more helpful and usable than existing resources.

Feedback from the study showed Farsight encouraged developers to focus on end-users and think beyond immediate harmful outcomes.

“While resources, like workshops and online videos, exist to help AI prototypers, they are often seen as tedious, and most people lack the incentive and time to use them,” said Wang.

“Our approach was to consolidate and display responsible AI resources in the same space where AI prototypers write prompts. In addition, we leverage AI to highlight relevant real-life incidents and guide users to potential harms based on their prompts.”

Farsight employs an in-situ user interface to show developers the potential negative consequences of their applications during prototyping.

Alert symbols for “neutral,” “caution,” and “warning” notify users when prompts require more attention. When a user clicks the alert symbol, an awareness sidebar expands from one side of the screen.

The sidebar shows an incident panel with actual news headlines from incidents relevant to the harmful prompt. The sidebar also has a use-case panel that helps developers imagine how different groups of people can use their applications in varying contexts.

Another key feature is the harm envisioner. This functionality takes a user’s prompt as input and assists them in envisioning potential harmful outcomes. The prompt branches into an interactive node tree that lists use cases, stakeholders, and harms, like “societal harm,” “allocative harm,” “interpersonal harm,” and more.
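The harm envisioner’s output can be pictured as a tree rooted at the prompt, with use cases, stakeholders, and harms as successive levels. The sketch below models that shape with a hypothetical `Node` class and an invented example prompt. It illustrates the data structure described above, not Farsight’s implementation, which generates these nodes with an LLM.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    kind: str  # hypothetical labels: "prompt", "use_case", "stakeholder", "harm"
    text: str
    children: list["Node"] = field(default_factory=list)

    def add(self, kind: str, text: str) -> "Node":
        """Attach a child node and return it so branches can be extended."""
        child = Node(kind, text)
        self.children.append(child)
        return child


def collect(node: Node, kind: str) -> list[str]:
    """Depth-first list of the text of every node of the given kind."""
    found = [node.text] if node.kind == kind else []
    for child in node.children:
        found.extend(collect(child, kind))
    return found


def show(node: Node, depth: int = 0) -> None:
    """Print the tree with indentation by level."""
    print("  " * depth + f"[{node.kind}] {node.text}")
    for child in node.children:
        show(child, depth + 1)


# Invented example tree for illustration only.
prompt = Node("prompt", "Answer patients' medication questions")
home = prompt.add("use_case", "A caregiver checks drug interactions at home")
caregivers = home.add("stakeholder", "Caregivers of elderly patients")
caregivers.add("harm", "Interpersonal harm: unsafe dosing advice is followed")
clinic = prompt.add("use_case", "A clinic uses it to triage patient messages")
patients = clinic.add("stakeholder", "Patients with limited English")
patients.add("harm", "Allocative harm: lower-quality answers for some languages")
patients.add("harm", "Societal harm: eroded trust in medical advice")

show(prompt)
```

Keeping every branch tied to a specific use case and stakeholder is what lets an interface expand or collapse each path, mirroring the interactive node tree described above.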

The novel design and insightful findings from the user study resulted in Farsight’s acceptance for presentation at CHI 2024.

CHI is considered the most prestigious conference for human-computer interaction and one of the top-ranked conferences in computer science.

CHI is affiliated with the Association for Computing Machinery. The conference takes place May 11-16 in Honolulu.

Wang worked on Farsight in summer 2023 while interning with Google’s People + AI Research (PAIR) group.

Farsight’s co-authors from Google PAIR include Chinmay Kulkarni, Lauren Wilcox, Michael Terry, and Michael Madaio. The group’s ties to Georgia Tech extend beyond Wang.

Terry, the current co-leader of Google PAIR, earned his Ph.D. in human-computer interaction from Georgia Tech in 2005. Madaio graduated from Tech in 2015 with an M.S. in digital media. Wilcox was a full-time faculty member in the School of Interactive Computing from 2013 to 2021 and serves in an adjunct capacity today.

Though not an author, one of Wang’s influences is his advisor, Polo Chau. Chau is an associate professor in the School of Computational Science and Engineering. His group specializes in data science, human-centered AI, and visualization research for social good.

“I think what makes Farsight interesting is its unique in-workflow and human-AI collaborative approach,” said Wang.

“Furthermore, Farsight leverages LLMs to expand prototypers’ creativity and brainstorm a wide range of use cases, stakeholders, and potential harms.”


Apr. 23, 2024

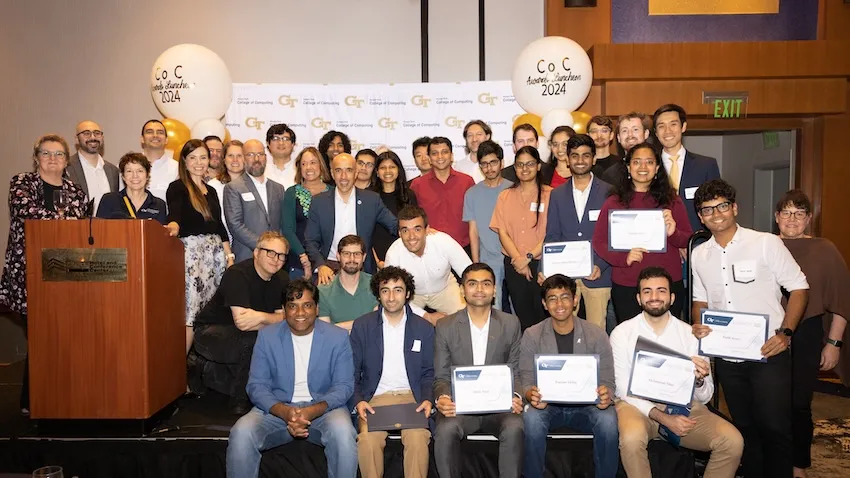

The College of Computing’s countdown to commencement began on April 11 when students, faculty, and staff converged at the 33rd Annual Awards Celebration.

The banquet celebrated the college community for an exemplary academic year and recognized the most distinguished individuals of 2023-2024. For Alex Orso, the reception was a high-water mark in his role as interim dean.

“I always say that the best part about my job is to brag about the achievements and accolades of my colleagues,” said Orso.

“It is my distinct honor and privilege to recognize these award winners and the collective success of the College of Computing.”

Orso’s colleagues from the School of Computational Science and Engineering (CSE) were among the celebration’s honorees. School of CSE students, faculty, and alumni earning awards this year include:

- Grace Driskill, M.S. CSE student - The Donald V. Jackson Fellowship

- Harshvardhan Baldwa, M.S. CSE student - The Marshal D. Williamson Fellowship

- Mansi Phute, M.S. CS student - The Marshal D. Williamson Fellowship

- Assistant Professor Chao Zhang - Outstanding Junior Faculty Research Award

- Nazanin Tabatbaei, teaching assistant in Associate Professor Polo Chau’s CSE 6242 Data & Visual Analytics course - Outstanding Instructional Associate Teaching Award

- Rodrigo Borela (Ph.D. CSE-CEE 2021), School of Computing Instruction Lecturer and CSE program alumnus - William D. "Bill" Leahy Jr. Outstanding Instructor Award

- Pratham Mehta, undergraduate student in Chau’s research group - Outstanding Legacy Leadership Award

- Alexander Rodriguez (Ph.D. CS 2023), School of CSE alumnus - Outstanding Doctoral Dissertation Award

At the Institute level, Georgia Tech recognized Driskill, Baldwa, and Phute for their awards on April 10 at the annual Student Honors Celebration.

Driskill’s classroom achievement earned her a spot on the 2024 All-ACC Indoor Track and Field Academic Team. This follows her selection for the 2023 All-ACC Academic Team for cross country.

In summer 2023, Georgia Tech’s Center for Teaching and Learning released the Class of 1934 Honor Roll for spring semester courses. School of CSE awardees included Assistant Professor Srijan Kumar (CSE 6240: Web Search & Text Mining), Lecturer Max Mahdi Roozbahani (CS 4641: Machine Learning), and alumnus Mengmeng Liu (CSE 6242: Data & Visual Analytics).

School of CSE researchers also received awards off campus throughout 2023-2024, a testament to the reach and impact of their work.

School of CSE Ph.D. student Gaurav Verma kicked off the year by receiving the J.P. Morgan Chase AI Research Ph.D. Fellowship. Verma was one of only 13 awardees from around the world selected for the 2023 class.

Along with seeing many of his students receive awards this year, Polo Chau earned a 2023 Google Award for Inclusion Research. Later in the year, the Institute promoted Chau to professor, which takes effect in the 2024-2025 academic year.

Schmidt Sciences selected School of CSE Assistant Professor Kai Wang as an AI2050 Early Career Fellow to advance artificial intelligence research for social good. As part of the fellowship’s second cohort, Wang is the first Georgia Tech faculty member to receive the award.

School of CSE Assistant Professor Yunan Luo received two significant awards to advance his work in computational biology. First, Luo received the Maximizing Investigator’s Research Award (MIRA) from the National Institutes of Health, which provides $1.8 million in funding over five years. Next, he received the 2023 Molecule Make Lab Institute (MMLI) seed grant.

Regents’ Professor Surya Kalidindi, jointly appointed with the George W. Woodruff School of Mechanical Engineering and School of CSE, was named a fellow to the 2023 class of the Department of Defense’s Laboratory-University Collaboration Initiative (LUCI).

2023-2024 was a monumental year for Assistant Professor Elizabeth Qian, jointly appointed with the Daniel Guggenheim School of Aerospace Engineering and the School of CSE.

The Air Force Office of Scientific Research selected Qian for the 2024 class of its Young Investigator Program. Earlier in the year, she received a grant under the Department of Energy’s Energy Earthshots Initiative.

Qian began the year by joining 81 other early-career engineers at the National Academy of Engineering’s Grainger Foundation Frontiers of Engineering 2023 Symposium. She also received the Hans Fischer Fellowship from the Institute for Advanced Study at the Technical University of Munich.

It was a big academic year for Associate Professor Elizabeth Cherry. Cherry was reelected to a three-year term as a council member-at-large of the Society for Industrial and Applied Mathematics (SIAM). Cherry is also co-chair of the SIAM organizing committee for next year’s Conference on Computational Science and Engineering (CSE25).

Cherry continues to serve as the School of CSE’s associate chair for academic affairs. These leadership contributions led to her being named to the 2024 ACC Academic Leaders Network (ACC ALN) Fellows program.

School of CSE Professor and Associate Chair Edmond Chow was co-author of a paper that received the Test of Time Award at Supercomputing 2023 (SC23). Right before SC23, Chow’s Ph.D. student Hua Huang was selected as an honorable mention for the 2023 ACM-IEEE CS George Michael Memorial HPC Fellowship.


Apr. 17, 2024

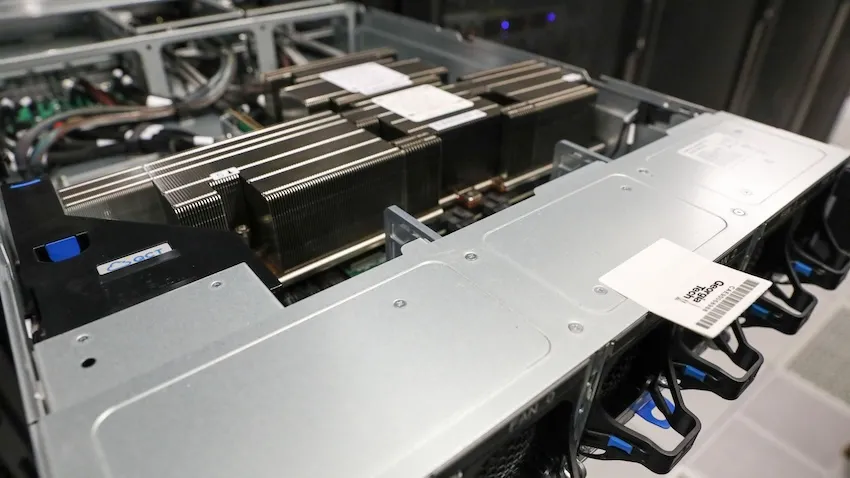

Computing research at Georgia Tech is getting faster thanks to a new state-of-the-art processing chip named after a female computer programming pioneer.

Tech is one of the first research universities in the country to receive the GH200 Grace Hopper Superchip from NVIDIA for testing, study, and research.

Designed for large-scale artificial intelligence (AI) and high-performance computing applications, the GH200 is intended for large language model (LLM) training, recommender systems, graph neural networks, and other tasks.

Alexey Tumanov and Tushar Krishna procured Georgia Tech’s first pair of Grace Hopper chips. Spencer Bryngelson acquired four more GH200s, which will arrive later this month.

“We are excited about this new design that puts everything onto one chip and accessible to both processors,” said Will Powell, a College of Computing research technologist.

“The Superchip’s design increases computation efficiency where data doesn’t have to move as much and all the memory is on the chip.”

A key feature of the new processing chip is that the central processing unit (CPU) and graphics processing unit (GPU) are on the same board.

NVIDIA’s NVLink Chip-2-Chip (C2C) interconnect joins the two units together. C2C delivers up to 900 gigabytes per second of total bandwidth, seven times the bandwidth of the PCIe Gen5 connections used in newer accelerated systems.
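The bandwidth figures above translate directly into transfer times. The sketch below assumes peak bandwidth with no protocol overhead and derives the PCIe Gen5 figure only from the "seven times" ratio quoted above. The 60 GB payload is a hypothetical example, roughly a 30-billion-parameter model stored at 2 bytes per parameter.

```python
NVLINK_C2C_GBPS = 900.0               # total bandwidth quoted above, in GB/s
PCIE_GEN5_GBPS = NVLINK_C2C_GBPS / 7  # implied by the "seven times" ratio


def transfer_seconds(gigabytes: float, bandwidth_gbps: float) -> float:
    """Idealized transfer time at peak bandwidth, ignoring all overheads."""
    return gigabytes / bandwidth_gbps


weights_gb = 60.0  # hypothetical payload: ~30B parameters x 2 bytes each
for name, bw in [("NVLink-C2C", NVLINK_C2C_GBPS), ("PCIe Gen5", PCIE_GEN5_GBPS)]:
    print(f"{name}: {bw:.0f} GB/s -> {transfer_seconds(weights_gb, bw):.3f} s")
```

Under these idealized assumptions, moving 60 GB takes about 0.07 seconds over NVLink-C2C versus about 0.47 seconds over PCIe Gen5; real transfers will be slower on both links.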

As a result, the two components share memory and process data with more speed and better power efficiency. This feature is one that the Georgia Tech researchers want to explore most.

Tumanov, an assistant professor in the School of Computer Science, and his Ph.D. student Amey Agrawal are testing machine learning (ML) and LLM workloads on the chip. Their work with the GH200 could lead to more sustainable computing methods that keep up with the exponential growth of LLMs.

The advent of household-name LLMs, like ChatGPT and Gemini, pushes the limits of current architectures based on GPUs. The chip’s design overcomes known CPU-GPU bandwidth limitations. Tumanov’s group will put that design to the test through their studies.

Krishna is an associate professor in the School of Electrical and Computer Engineering and associate director of the Center for Research into Novel Computing Hierarchies (CRNCH).

His research focuses on optimizing data movement in modern computing platforms, including AI/ML accelerator systems. Ph.D. student Hao Kang uses the GH200 to analyze LLMs exceeding 30 billion parameters. This study will enable labs to explore deep learning optimizations with the new chip.

Bryngelson, an assistant professor in the School of Computational Science and Engineering, will use the chip to compute and simulate fluid and solid mechanics phenomena. His lab can use the CPU to reorder memory and perform disk writes while the GPU does parallel work. This capability is expected to significantly reduce the computational burden for some applications.
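The division of labor described above is a producer-consumer pipeline: the compute side advances the simulation while a separate worker handles I/O on earlier results. The sketch below shows only that structure, with a trivial `simulate_step` standing in for GPU work and an in-memory list standing in for disk writes; both are hypothetical placeholders. Python threads do not run pure-Python compute in parallel, so this illustrates the pattern rather than the speedup a GH200 would provide.

```python
import queue
import threading


def simulate_step(state: list[float]) -> list[float]:
    """Hypothetical stand-in for GPU work: a trivial update of every cell."""
    return [0.5 * x + 1.0 for x in state]


def writer(q: queue.Queue, out: list) -> None:
    """Stand-in for CPU-side I/O: consume snapshots until a None sentinel."""
    while True:
        item = q.get()
        if item is None:
            break
        step, snapshot = item
        out.append((step, sum(snapshot)))  # e.g., record a checkpoint summary


def run(n_steps: int, state: list[float]) -> list:
    # Bounded queue: compute can get at most two snapshots ahead of the writer.
    q = queue.Queue(maxsize=2)
    written: list = []
    worker = threading.Thread(target=writer, args=(q, written))
    worker.start()
    for step in range(n_steps):
        state = simulate_step(state)
        q.put((step, list(state)))  # hand off a copy, then keep computing
    q.put(None)  # tell the writer there is no more work
    worker.join()
    return written


print(run(3, [0.0, 2.0]))
```

Handing the writer a copy of the state keeps the compute loop free to overwrite its own buffer, which is the same reason real codes double-buffer data between compute and I/O.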

“Traditional CPU to GPU communication is slower and introduces latency issues because data passes back and forth over a PCIe bus,” Powell said. “Since they can access each other’s memory and share in one hop, the Superchip’s architecture boosts speed and efficiency.”

Grace Hopper is the inspirational namesake for the chip. She pioneered many developments in computer science that formed the foundation of the field today.

Hopper invented the first compiler, a program that translates computer source code into a target language. She also helped develop some of the earliest programming languages, including COBOL, which is still used today in data processing.

Hopper joined the U.S. Navy Reserve during World War II, tasked with programming the Mark I computer. She retired as a rear admiral in August 1986 after 42 years of military service.

Georgia Tech researchers hope to honor Hopper’s legacy and spirit of innovation by using the technology that bears her name to make new discoveries.

“NVIDIA and other vendors show no sign of slowing down refinement of this kind of design, so it is important that our students understand how to get the most out of this architecture,” said Powell.

“Just having all these technologies isn’t enough. People must know how to build applications in their coding that actually benefit from these new architectures. That is the skill.”


Mar. 19, 2024

Computer science educators will soon gain valuable insights from computational epidemiology courses, like one offered at Georgia Tech.

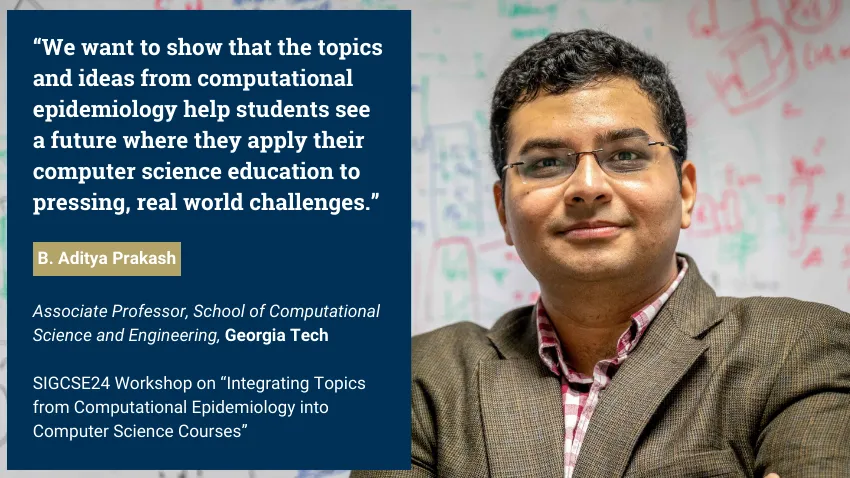

B. Aditya Prakash is part of a research group that will host a workshop on how topics from computational epidemiology can enhance computer science classes.

These lessons would produce computer science graduates with improved skills in data science, modeling, simulation, artificial intelligence (AI), and machine learning (ML).

Because epidemics transcend the sphere of public health, these topics would groom computer scientists versed in issues from social, financial, and political domains.

The group’s virtual workshop takes place on March 20 at the technical symposium for the Special Interest Group on Computer Science Education (SIGCSE). SIGCSE is one of 38 special interest groups of the Association for Computing Machinery (ACM). ACM is the world’s largest scientific and educational computing society.

“We decided to do a tutorial at SIGCSE because we believe that computational epidemiology concepts would be very useful in general computer science courses,” said Prakash, an associate professor in the School of Computational Science and Engineering (CSE).

“We want to give an introduction to concepts, like what computational epidemiology is, and how topics, such as algorithms and simulations, can be integrated into computer science courses.”

Prakash kicks off the workshop with an overview of computational epidemiology. He will use examples from his CSE 8803: Data Science for Epidemiology course to introduce basic concepts.

This overview includes a survey of models used to describe the behavior of diseases. Models serve as the foundation for simulations that test hypotheses and make predictions about disease spread and impact.

Prakash will explain the different kinds of models used in epidemiology, such as traditional mechanistic models and more recent ML- and AI-based models.
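A classic example of a traditional mechanistic model is the SIR (susceptible-infected-recovered) model, in which dS/dt = -βSI, dI/dt = βSI - γI, and dR/dt = γI for population fractions S, I, and R. The sketch below integrates these equations with a simple forward-Euler step. The transmission rate β, recovery rate γ, and starting values are hypothetical, and the code is a teaching illustration rather than material from Prakash’s course.

```python
def simulate_sir(beta: float, gamma: float, s0: float, i0: float,
                 days: int, dt: float = 0.1) -> list[tuple[float, float, float, float]]:
    """Forward-Euler integration of the SIR model on population fractions.

    Returns one (day, S, I, R) row per whole day, starting at day 0.
    """
    s, i, r = s0, i0, 1.0 - s0 - i0
    history = [(0.0, s, i, r)]
    steps_per_day = round(1 / dt)
    for n in range(1, days * steps_per_day + 1):
        new_infections = beta * s * i * dt  # S -> I flow this step
        recoveries = gamma * i * dt         # I -> R flow this step
        s -= new_infections
        i += new_infections - recoveries
        r += recoveries
        if n % steps_per_day == 0:
            history.append((n * dt, s, i, r))
    return history


history = simulate_sir(beta=0.3, gamma=0.1, s0=0.99, i0=0.01, days=160)
peak_day, _, peak_infected, _ = max(history, key=lambda row: row[2])
print(f"Peak of {peak_infected:.1%} infected around day {peak_day:.0f}")
```

With β = 0.3 and γ = 0.1, the basic reproduction number β/γ is 3, so infections initially grow; setting β below γ makes the outbreak die out. Simulations like this are how a model turns a hypothesis about transmission into a testable prediction.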

Prakash’s discussion includes modeling used in recent epidemics like Covid-19, Zika, H1N1 swine flu, and Ebola. He will also cover examples from the 19th and 20th centuries to illustrate how epidemiology has advanced using data science and computation.

“I strongly believe that data and computation have a very important role to play in the future of epidemiology and public health is computational,” Prakash said.

“My course and these workshops give that viewpoint, and provide a broad framework of data science and computational thinking that can be useful.”

While humankind has studied disease transmission for millennia, computational epidemiology is a new approach to understanding how diseases can spread throughout communities.

The Covid-19 pandemic helped bring computational epidemiology to the forefront of public awareness. This exposure has increased demand for computational epidemiology topics in computer science education.

Prakash joins Baltazar Espinoza and Natarajan Meghanathan in the workshop presentation. Espinoza is a research assistant professor at the University of Virginia. Meghanathan is a professor at Jackson State University.

The group is connected through Global Pervasive Computational Epidemiology (GPCE). GPCE is a partnership of 13 institutions aimed at advancing computational foundations, engineering principles, and technologies of computational epidemiology.

The National Science Foundation (NSF) supports GPCE through the Expeditions in Computing program. Prakash is also principal investigator on other NSF-funded grants, and material from these projects appears in his workshop presentation.


Outreach and broadening participation in computing are core tenets for Prakash and GPCE because epidemics reach so widely. The SIGCSE workshop is one way that the group employs educational programs to train the next generation of scientists around the globe.

“Algorithms, machine learning, and other topics are fundamental graduate and undergraduate computer science courses nowadays,” Prakash said.

“Using examples like projects, homework questions, and data sets, we want to show that the topics and ideas from computational epidemiology help students see a future where they apply their computer science education to pressing, real world challenges.”


Mar. 14, 2024

Schmidt Sciences has selected Kai Wang as one of 19 researchers to receive this year’s AI2050 Early Career Fellowship. In doing so, Wang becomes the first AI2050 fellow to represent Georgia Tech.

“I am excited about this fellowship because there are so many people at Georgia Tech using AI to create social impact,” said Wang, an assistant professor in the School of Computational Science and Engineering (CSE).

“I feel so fortunate to be part of this community and to help Georgia Tech bring more impact on society.”

AI2050 has allocated up to $5.5 million to support the cohort. Fellows receive up to $300,000 over two years and will join the Schmidt Sciences network of experts to advance their research in artificial intelligence (AI).

Wang’s AI2050 project centers on leveraging decision-focused AI to address challenges facing health and environmental sustainability. His goal is to strengthen and deploy decision-focused AI in collaboration with stakeholders to solve broad societal problems.

Wang’s approach to decision-focused AI integrates machine learning with optimization to train models based on decision quality. These models borrow knowledge from decision-making processes in high-stakes domains to improve overall performance.
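A decision-focused approach judges a model by the quality of the decisions made from its predictions rather than by prediction error alone. The toy sketch below uses a hypothetical resource-allocation task with made-up numbers to show why the two criteria can disagree. It only evaluates decision quality (as regret); it does not implement Wang’s training method, which would additionally optimize model parameters against a decision-based loss.

```python
def mse(pred: list[float], true: list[float]) -> float:
    """Conventional prediction error: mean squared error."""
    return sum((p - t) ** 2 for p, t in zip(pred, true)) / len(true)


def top_k(values: list[float], k: int) -> list[int]:
    """Optimization step: choose the k items with the highest values."""
    return sorted(range(len(values)), key=lambda j: values[j], reverse=True)[:k]


def regret(pred: list[float], true: list[float], k: int) -> float:
    """Decision quality: true benefit lost by acting on the predictions."""
    best = sum(sorted(true, reverse=True)[:k])
    chosen = sum(true[j] for j in top_k(pred, k))
    return best - chosen


true_benefit = [5.0, 4.0, 1.0, 0.5]  # hypothetical true benefit per site
model_a = [4.0, 4.5, 1.0, 0.5]       # small errors, but swaps the top two sites
model_b = [9.0, 8.0, 3.0, 2.0]       # large errors, but preserves the ranking

for name, pred in [("A", model_a), ("B", model_b)]:
    print(name, "MSE:", mse(pred, true_benefit), "regret:", regret(pred, true_benefit, k=1))
```

Model A has the lower error yet sends the single available resource to the wrong site, while Model B is far less accurate but makes the optimal choice. Training on decision quality targets the second property directly.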

Part of Wang’s approach is to work closely with non-profit and non-governmental organizations. This collaboration helps Wang better understand problems at the point of need and gain knowledge from domain experts to custom-build AI models.

“It is very important to me to see my research impacting human lives and society,” Wang said. “That reinforces my interest and motivation in using AI for social impact.”


This year’s cohort is only the second in the fellowship’s history. Wang joins a class that spans four countries, six disciplines, and seventeen institutions.

AI2050 commits $125 million over five years to identify and support talented individuals seeking solutions to ensure society benefits from AI. Last year’s AI2050 inaugural class of 15 early career fellows received $4 million.

The namesake of AI2050 comes from the central motivating question that fellows answer through their projects:

It’s 2050. AI has turned out to be hugely beneficial to society. What happened? What are the most important problems we solved and the opportunities and possibilities we realized to ensure this outcome?

AI2050 encourages young researchers to pursue bold and ambitious work on difficult challenges and promising opportunities in AI. These projects involve research that is multidisciplinary, risky, and hard to fund through traditional means.

Schmidt Sciences, LLC is a 501(c)(3) non-profit organization supported by philanthropists Eric and Wendy Schmidt. Schmidt Sciences aims to accelerate and deepen understanding of the natural world and develop solutions to real-world challenges for public benefit.

Schmidt Sciences identifies under-supported or unconventional areas of exploration and discovery with potential for high impact. Focus areas include AI and advanced computing, astrophysics and space, biosciences, climate, and cross-science.

“I am most grateful for the advice from my mentors, colleagues, and collaborators, and of course AI2050 for choosing me for this prestigious fellowship,” Wang said. “The School of CSE has given me so much support, including career advice from junior and senior level faculty.”


Mar. 05, 2024

Computer graphic simulations can represent natural phenomena such as tornadoes, underwater vortices, and liquid foams more accurately thanks to an advancement in creating artificial intelligence (AI) neural networks.

Working with a multi-institutional team of researchers, Georgia Tech Assistant Professor Bo Zhu combined computer graphic simulations with machine learning models to create enhanced simulations of known phenomena. The new benchmark could lead to researchers constructing representations of other phenomena that have yet to be simulated.

Zhu co-authored the paper Fluid Simulation on Neural Flow Maps. The Association for Computing Machinery’s Special Interest Group in Computer Graphics and Interactive Technology (SIGGRAPH) gave it a best paper award in December at the SIGGRAPH Asia conference in Sydney, Australia.

The authors say the advancement could be as significant to computer graphic simulations as the introduction of neural radiance fields (NeRFs) was to computer vision in 2020. Introduced by researchers at the University of California, Berkeley, the University of California, San Diego, and Google, NeRFs are neural networks that easily convert 2D images into navigable 3D scenes.

NeRFs have become a benchmark among computer vision researchers. Zhu and his collaborators hope their creation, neural flow maps, can do the same for simulation researchers in computer graphics.

“A natural question to ask is, can AI fundamentally overcome the traditional method’s shortcomings and bring generational leaps to simulation as it has done to natural language processing and computer vision?” Zhu said. “Simulation accuracy has been a significant challenge to computer graphics researchers. No existing work has combined AI with physics to yield high-end simulation results that outperform traditional schemes in accuracy.”

In computer graphics, simulation pipelines are the equivalent of neural networks and allow simulations to take shape. They are traditionally constructed through mathematical equations and numerical schemes.

Zhu said researchers have tried to design simulation pipelines with neural representations to construct more robust simulations. However, efforts to achieve higher physical accuracy have fallen short.

Zhu attributes the problem to the structure of traditional simulation pipelines, which cannot match the capacities of AI algorithms. To solve the problem and allow machine learning to have influence, Zhu and his collaborators proposed a new framework that redesigns the simulation pipeline.

They named these new pipelines neural flow maps. The maps use machine learning models to store spatiotemporal data more efficiently. The researchers then align these models with their mathematical framework to achieve a higher accuracy than previous pipeline simulations.

Zhu said he does not believe machine learning should be used to replace traditional numerical equations. Rather, machine learning should complement them to unlock new, advantageous paradigms.

“Instead of trying to deploy modern AI techniques to replace components inside traditional pipelines, we co-designed the simulation algorithm and machine learning technique in tandem,” Zhu said.

“Numerical methods are not optimal because of their limited computational capacity. Recent AI-driven capacities have uplifted many of these limitations. Our task is redesigning existing simulation pipelines to take full advantage of these new AI capacities.”

In the paper, the authors state that once-unattainable algorithmic designs could unlock new research possibilities in computer graphics.

Neural flow maps offer “a new perspective on the incorporation of machine learning in numerical simulation research for computer graphics and computational sciences alike,” the paper states.

“The success of Neural Flow Maps is inspiring for how physics and machine learning are best combined,” Zhu added.

News Contact

Nathan Deen, Communications Officer

Georgia Tech School of Interactive Computing

nathan.deen@cc.gatech.edu

Mar. 05, 2024

Georgia Tech is developing a new artificial intelligence (AI)-based method to automatically find and stop threats to renewable energy and local generators for energy customers across the nation’s power grid.

The research will concentrate on protecting distributed energy resources (DER), which are most often used on low-voltage portions of the power grid. They can include rooftop solar panels, controllable electric vehicle chargers, and battery storage systems.

The cybersecurity concern is that an attacker could compromise these systems and use them to cause problems across the electrical grid, such as overloaded components and voltage fluctuations. These issues are a national security risk and could cause massive customer disruptions through blackouts and equipment damage.

“Cyber-physical critical infrastructures provide us with core societal functionalities and services such as electricity,” said Saman Zonouz, Georgia Tech associate professor and lead researcher for the project.

“Our multi-disciplinary solution, DerGuard, will leverage device-level cybersecurity, system-wide analysis, and AI techniques for automated vulnerability assessment, discovery, and mitigation in power grids with emerging renewable energy resources.”

The project’s long-term outcome will be a secure, AI-enabled power grid solution that can search for and protect the DERs on its network from cyberattacks.

“First, we will identify sets of critical DERs that, if compromised, would allow the attacker to cause the most trouble for the power grid,” said Daniel Molzahn, assistant professor at Georgia Tech.

“These DERs would then be prioritized for analysis and for patching any identified cyber problems. Identifying the critical sets of DERs would require information about the DERs themselves, like size or location, and about the power grid. This way, the utility company or other aggregator would be in the best position to use this tool.”

Additionally, the team will establish a testbed with industry partners. They will then develop and evaluate technology applications to better understand the interactions among people, devices, and network performance.

Along with Zonouz and Molzahn, Wenke Lee, Georgia Tech professor and John P. Imlay Jr. Chair in Software, will also lead the team of researchers from across the country.

The researchers are collaborating with the University of Illinois at Urbana-Champaign, the Department of Energy’s National Renewable Energy Laboratory, Idaho National Laboratory, the National Rural Electric Cooperative Association, and Fortiphyd Logic. Industry partners Network Perception, Siemens, and PSE&G will advise the researchers.

The work will be carried out at Georgia Tech’s Cyber-Physical Security Lab (CPSec) within the School of Cybersecurity and Privacy (SCP) and the School of Electrical and Computer Engineering (ECE).

The U.S. Department of Energy (DOE) announced a $45 million investment at the end of February for 16 cybersecurity initiatives. The projects will identify new cybersecurity tools and technologies designed to reduce cyber risks for energy infrastructure followed by tech-transfer initiatives. The DOE’s Office of Cybersecurity, Energy Security, and Emergency Response (CESER) awarded $4.2 million for the Institute’s DerGuard project.

News Contact

JP Popham, Communications Officer II

Georgia Tech School of Cybersecurity & Privacy

john.popham@cc.gatech.edu

Feb. 05, 2024

Scientists are always looking for better computer models that simulate the complex systems that define our world. To meet this need, a Georgia Tech workshop held Jan. 16 illustrated how new artificial intelligence (AI) research could usher in the next generation of scientific computing.

The workshop focused on applying AI technology to the optimization of complex systems. Presentations of climatological and electromagnetic simulations showed that these techniques resulted in more efficient and accurate computer modeling. The workshop also advanced AI research itself, since AI models are typically not well suited for optimization tasks.

The School of Computational Science and Engineering (CSE) and Institute for Data Engineering and Science jointly sponsored the workshop.

School of CSE Assistant Professors Peng Chen and Raphaël Pestourie led the workshop’s organizing committee and moderated the workshop’s two panel discussions. The duo also pitched their own research, highlighting the potential of scientific AI.

Chen shared his work on derivative-informed neural operators (DINOs). DINOs are a class of neural networks that use derivative information to approximate solutions of partial differential equations. The derivative enhancement results in neural operators that are more accurate and efficient.
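As an illustration of what “derivative information” can mean in training, one common way to fit a derivative-informed neural operator is to penalize errors in both the operator’s outputs and its derivatives (Jacobians) with respect to the input parameters. The objective below is a sketch under stated assumptions: $\mathcal{G}$ is the true parameter-to-solution map of a PDE, $\mathcal{G}_w$ is a neural operator with weights $w$, $m_1, \dots, m_N$ are training parameter samples, $D$ denotes the Jacobian with respect to $m$, $\|\cdot\|_F$ is the Frobenius norm, and the two terms are weighted equally. It is not necessarily the exact objective Chen presented.

$$
\mathcal{L}(w) = \frac{1}{N}\sum_{i=1}^{N}\Big( \big\|\mathcal{G}(m_i) - \mathcal{G}_w(m_i)\big\|^2 + \big\|D\mathcal{G}(m_i) - D\mathcal{G}_w(m_i)\big\|_F^2 \Big)
$$

The second term is what pushes the surrogate toward reliable derivatives, which matters for gradient-based tasks such as data assimilation and sensor placement.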

During his talk, Chen showed how DINOs make better predictions with reliable derivatives. These have potential to solve data assimilation problems in weather and flooding prediction. Other applications include allocating sensors for early tsunami warnings and designing new self-assembly materials.

All these models contain elements of uncertainty where data is unknown, noisy, or changes over time. Not only are DINOs powerful tools for quantifying uncertainty, but they also require little training data to become functional.

“Recent advances in AI tools have become critical in enhancing societal resilience and quality, particularly through their scientific uses in environmental, climatic, material, and energy domains,” Chen said.

“These tools are instrumental in driving innovation and efficiency in these and many other vital sectors.”

One challenge in studying complex systems is that they require many simulations to generate enough data to learn from and make better predictions. But with limited data on hand, it is costly to run enough simulations to produce new data.

At the workshop, Pestourie presented his physics-enhanced deep surrogates (PEDS) as a solution to this optimization problem.

PEDS employs scientific AI to make efficient use of available data while demanding fewer computational resources. PEDS has been shown to be up to three times more accurate than models using neural networks alone while needing at least 100 times less training data.

PEDS yielded these results in tests on diffusion, reaction-diffusion, and electromagnetic scattering models. PEDS performed well in these experiments geared toward physics-based applications because it combines a physics simulator with a neural network generator.
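To make that combination concrete, here is a minimal pure-Python sketch of a PEDS-style surrogate. It assumes a 1D steady diffusion problem, a hypothetical one-layer `generator` standing in for the neural network, and a hypothetical blend weight `mix` between the generated coarse coefficients and a downsampled copy of the input. All names, sizes, and weights are illustrative and are not taken from Pestourie’s code.

```python
import math


def downsample(x, factor):
    """Average-pool a fine-grained 1D input onto a coarse grid."""
    return [sum(x[i:i + factor]) / factor for i in range(0, len(x), factor)]


def generator(x, weights, bias):
    """Hypothetical one-layer 'neural' generator: maps the fine input to one
    coefficient per coarse cell; softplus keeps every coefficient positive."""
    coarse = []
    for row, b in zip(weights, bias):
        z = sum(w * xi for w, xi in zip(row, x)) + b
        coarse.append(math.log1p(math.exp(z)))  # softplus(z)
    return coarse


def coarse_solver(kappa, source=1.0):
    """Low-fidelity physics solver: finite differences for
    -(kappa u')' = source on [0, 1] with u(0) = u(1) = 0.
    kappa[j] is the coefficient on coarse cell j (needs >= 2 cells);
    returns u at the interior nodes."""
    n = len(kappa)
    m = n - 1                      # number of interior nodes
    h2 = (1.0 / n) ** 2
    # Tridiagonal system: lower[i]*u[i-1] + diag[i]*u[i] + upper[i]*u[i+1] = rhs[i]
    lower = [-kappa[i] / h2 for i in range(m)]
    diag = [(kappa[i] + kappa[i + 1]) / h2 for i in range(m)]
    upper = [-kappa[i + 1] / h2 for i in range(m)]
    rhs = [source] * m
    # Thomas algorithm: forward elimination ...
    for i in range(1, m):
        ratio = lower[i] / diag[i - 1]
        diag[i] -= ratio * upper[i - 1]
        rhs[i] -= ratio * rhs[i - 1]
    # ... then back substitution.
    u = [0.0] * m
    u[-1] = rhs[-1] / diag[-1]
    for i in range(m - 2, -1, -1):
        u[i] = (rhs[i] - upper[i] * u[i + 1]) / diag[i]
    return u


def peds(x, weights, bias, factor, mix=0.5):
    """PEDS-style surrogate: blend generated coarse coefficients with a
    downsampled copy of the input, then run the low-fidelity solver."""
    learned = generator(x, weights, bias)
    pooled = downsample(x, factor)
    kappa = [mix * g + (1.0 - mix) * p for g, p in zip(learned, pooled)]
    return coarse_solver(kappa)


# Illustrative usage with untrained weights: 16 fine cells -> 4 coarse cells.
fine_input = [1.0 + 0.5 * math.sin(0.3 * i) for i in range(16)]
weights = [[0.01] * 16 for _ in range(4)]
bias = [0.5] * 4
print(peds(fine_input, weights, bias, factor=4))
```

The design choice being illustrated is that the network only has to produce the input to a cheap physics solver, so the solver carries much of the modeling burden. Training, which would fit the generator’s weights to high-fidelity simulation data by differentiating through the solver, is omitted here.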

“Scientific AI makes it possible to systematically leverage models and data simultaneously,” Pestourie said. “The more adoption of scientific AI there will be by domain scientists, the more knowledge will be created for society.”

Studying and developing AI applications at these scales requires the most powerful computers available. The workshop invited speakers from national laboratories who showcased supercomputing capabilities available at their facilities. These included Oak Ridge National Laboratory, Sandia National Laboratories, and Pacific Northwest National Laboratory.

The workshop hosted Georgia Tech faculty who represented the Colleges of Computing, Design, Engineering, and Sciences. Among these were workshop co-organizers Yan Wang and Ebeneser Fanijo. Wang is a professor in the George W. Woodruff School of Mechanical Engineering, and Fanijo is an assistant professor in the School of Building Construction.

The workshop welcomed academics outside of Georgia Tech to share research occurring at their institutions. These speakers hailed from Emory University, Clemson University, and the University of California, Berkeley.

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu
