May. 12, 2026

Developed through Georgia Tech research and supported by the Institute’s commercialization ecosystem, Kinemo is helping people with limited mobility regain independence through wearable assistive technology. The startup, founded by researchers from the Inan Research Lab, collaborated with Shepherd Center clinicians and patients to refine the technology and expand accessibility for users navigating life with spinal cord injuries and mobility limitations.

Apr. 30, 2026

A Georgia Tech School of Interactive Computing professor and his Ph.D. student have been named to the 2026 list of Microsoft Research Fellows and Fellowship Advisors.

Associate Professor Alan Ritter and Ph.D. student Ethan Mendes were awarded fellowships for their work on creating artificial intelligence (AI) agents that function as teammates.

Mendes was named a fellow, while Ritter will serve as his fellowship advisor.

The Microsoft Research Fellowship is open to faculty, students, and postdocs. Ritter said that if Microsoft sees alignment in a project, it gives recipients the opportunity to work even more closely with their collaborators by inviting them to join as additional fellows.

That turned out to be the case with Mendes after Ritter listed him as a collaborator in his fellowship proposal.

“I’m delighted to serve as Ethan Mendes’ fellowship advisor,” Ritter said. “He is an exceptionally strong researcher, and I’m excited to see his work recognized through the Microsoft Research Fellowship.”

Through the fellowship, Ritter and Mendes will design AI systems that better support collaboration and decision-making within organizations.

“The goal is to move beyond AI as a tool for a single user and instead study how AI can help groups make more informed, transparent, and coordinated decisions,” Ritter said. “We will focus on methods that bring together information from many different sources, help people reason under uncertainty, and generate analyses that support collective problem-solving in complex work settings.”

Professor Named to Sustainability Cohort

The Purple Mai’a Foundation has selected Associate Professor Josiah Hester to join its Eahou Global Immersion Cohort.

The Purple Mai’a Foundation is a technology education nonprofit headquartered in Aiea, Hawaii, that teaches coding and computer science to Native Hawaiian students.

The 29 members of the Eahou Global Immersion Cohort from 15 countries are leaders from indigenous communities recognized for their contributions to sustainability.

Hester is a Native Hawaiian whose research centers on sustainable and battery-free technology.

The cohort will gather on O’ahu May 1-3 for Eahou Fest, where they will share stories and solutions from research around the world.

“I’m honored to be selected for the Eahou Global Immersion Cohort and to learn alongside such an inspiring group of resilience leaders who come from around the globe,” Hester said.

“Participants are selected for their significant leadership over the past decade and their ability to bring what they learn back to their communities and integrate it into ongoing work and partnerships. I’m excited to connect these experiences with my work and bring these lessons back into research and teaching at Georgia Tech.”

Jill Watson Creator Receives AAAI Lecture Award

Professor Ashok Goel received one of the most distinguished awards from the Association for the Advancement of Artificial Intelligence (AAAI).

Goel was selected as the 20th recipient of the AAAI Robert S. Engelmore Memorial Lecture Award. Established in 2003, the award is given to those who have demonstrated excellence in AI scholarship, outstanding applications of AI, and extraordinary service to AAAI and the AI community.

Goel received the award in January during the AAAI Conference on Artificial Intelligence in Singapore. According to the awards program, Goel was recognized for contributions to biologically inspired design, case-based reasoning, and application of AI in virtual teaching.

Goel is the inventor of Jill Watson, one of the first AI virtual teaching assistants used in higher education classrooms.

AAAI is also the publisher of AI Magazine, for which Goel served as editor-in-chief from 2016 to 2021.

“I am both honored and humbled to receive AAAI's Robert Engelmore Award,” Goel said. “Bob was a long-time editor of AAAI's AI Magazine, and many years after he retired, I became the editor of the magazine. This makes the Engelmore Award special to me.”

Apr. 29, 2026

Georgia Tech hosted an event on April 21 examining the rapid expansion of data centers and the social and policy issues emerging alongside the growth of AI infrastructure. The program, The Future of Data Centers: Shaping the Social and Policy Landscape of Our AI Infrastructure, was held at the Alumni House and co-sponsored by the Institute for People and Technology and the Brook Byers Institute for Sustainable Systems (BBISS).

Georgia has become the world’s second-largest data center market, a shift that has brought economic opportunity as well as concerns about water use, energy demand, land development, and impacts on host communities. One recurring theme throughout the event was the tendency for environmental and resource issues to overshadow other important policy questions about community impact, transparency, and long-term governance.

Introductory remarks were made by Beril Toktay, executive director of the Brook Byers Institute for Sustainable Systems, and Michael Best, executive director of the Institute for People and Technology.

Verghese Jacob, senior vice president of technology at the DayOne corporation, delivered the keynote address. Jacob discussed how DayOne works with governments in Asia to plan data centers and said early policy development and consistent communication can help communities better understand the impact and manage growth for long-term, mutually beneficial partnerships between governments and communities.

The event also included a BBISS Connect Workshop, led by Kristin Janacek, a senior extension professional with BBISS. The workshop built on BBISS’s Sustainability for Data Centers Insights Series and asked participants to contribute to a collaborative “blue paper” intended to guide future research partnerships and responses to funding opportunities.

Two panel discussions explored the social and political dimensions of data center development. The first, moderated by Cindy Lin, an assistant professor in the School of Interactive Computing, focused on international perspectives. Panelists included Celine Benoit of the Atlanta Regional Commission, Matthew Wesley Williams of Groundswell, Kahlil Bostick of Ryan Companies, and Ding Wang of Google Research. They discussed global examples of community-centered planning and the need for transparency in negotiations.

A second panel, moderated by Allen Hyde, an associate professor in the School of History and Sociology, examined collaboration between communities and government agencies. Panelists were Georgia Public Service Commissioner Peter Hubbard; Donnie Beamer, senior technology advisor for the City of Atlanta; Atlanta Journal-Constitution reporter Zachary Hansen; and Michael Czajkowski, director of advocacy for Science for Georgia. The group highlighted the importance of proactive regulation and clear communication with residents as data center development accelerates.

Speakers throughout the day emphasized that Atlanta’s continued growth in the data center sector will require coordinated planning and meaningful engagement with affected communities. The event closed with a call for all stakeholders to be proactive about creating policies that balance the technological and economic promise of the data center building boom with environmental and community concerns.

News Contact

Walter Rich

Apr. 28, 2026

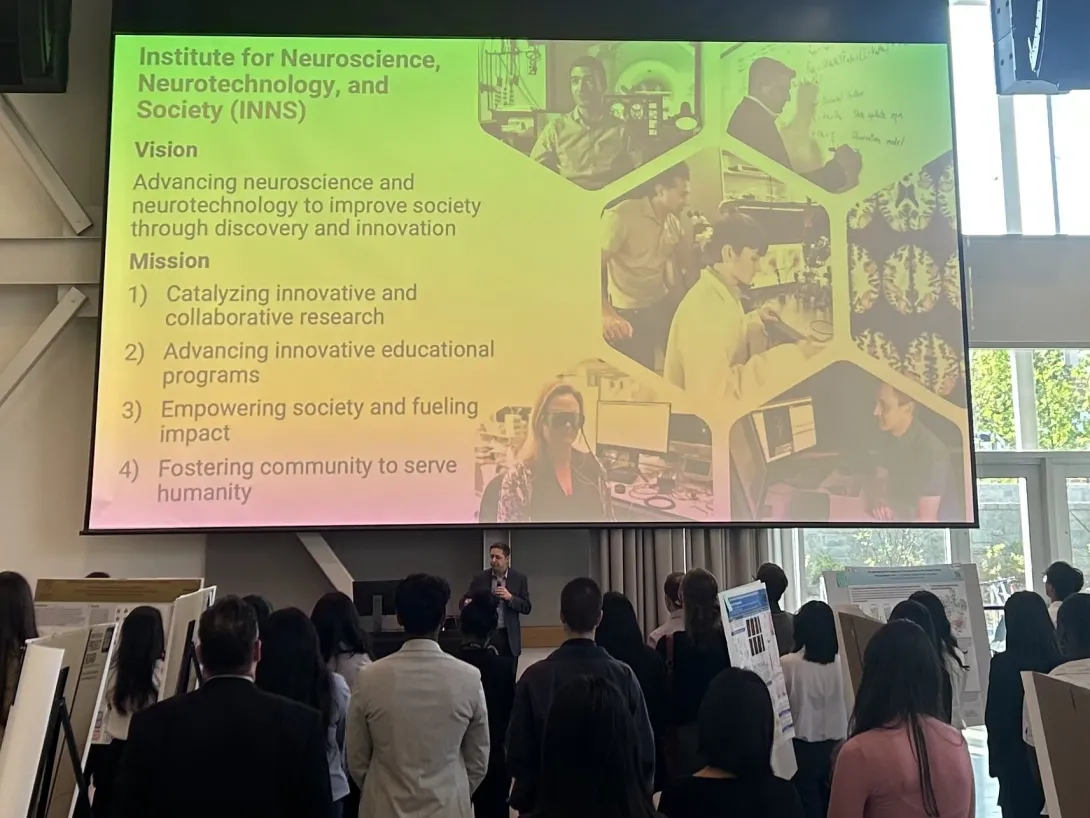

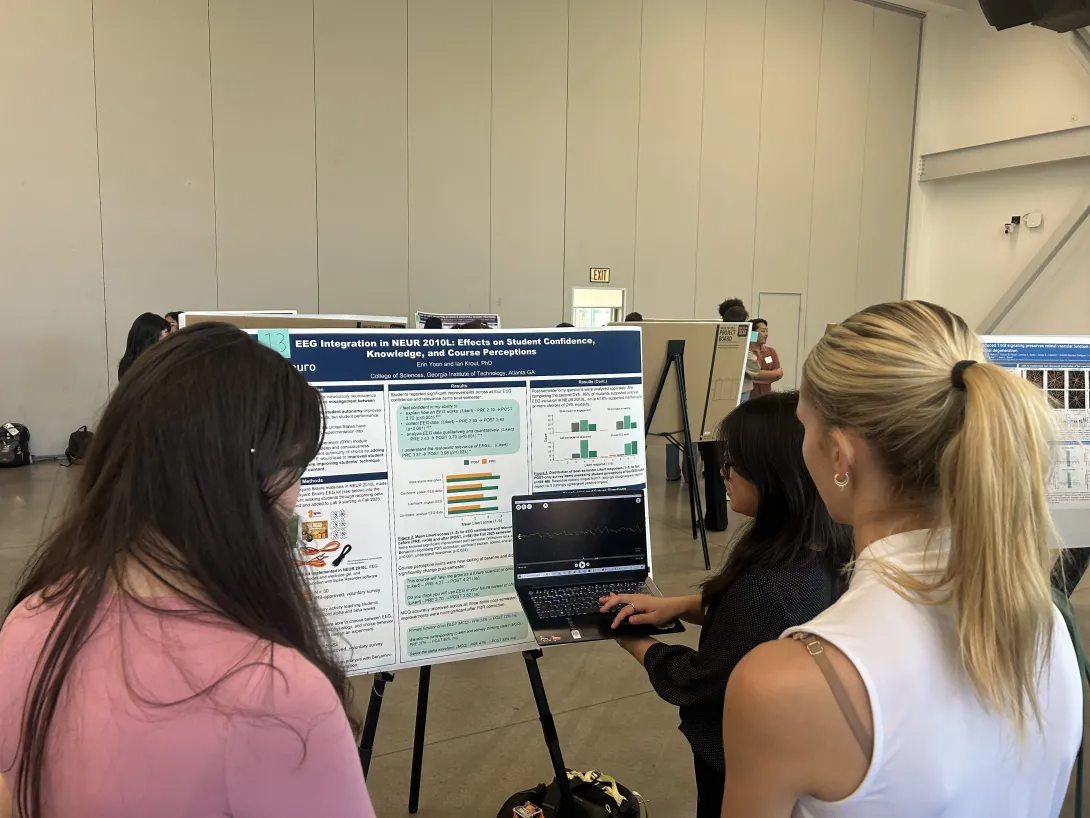

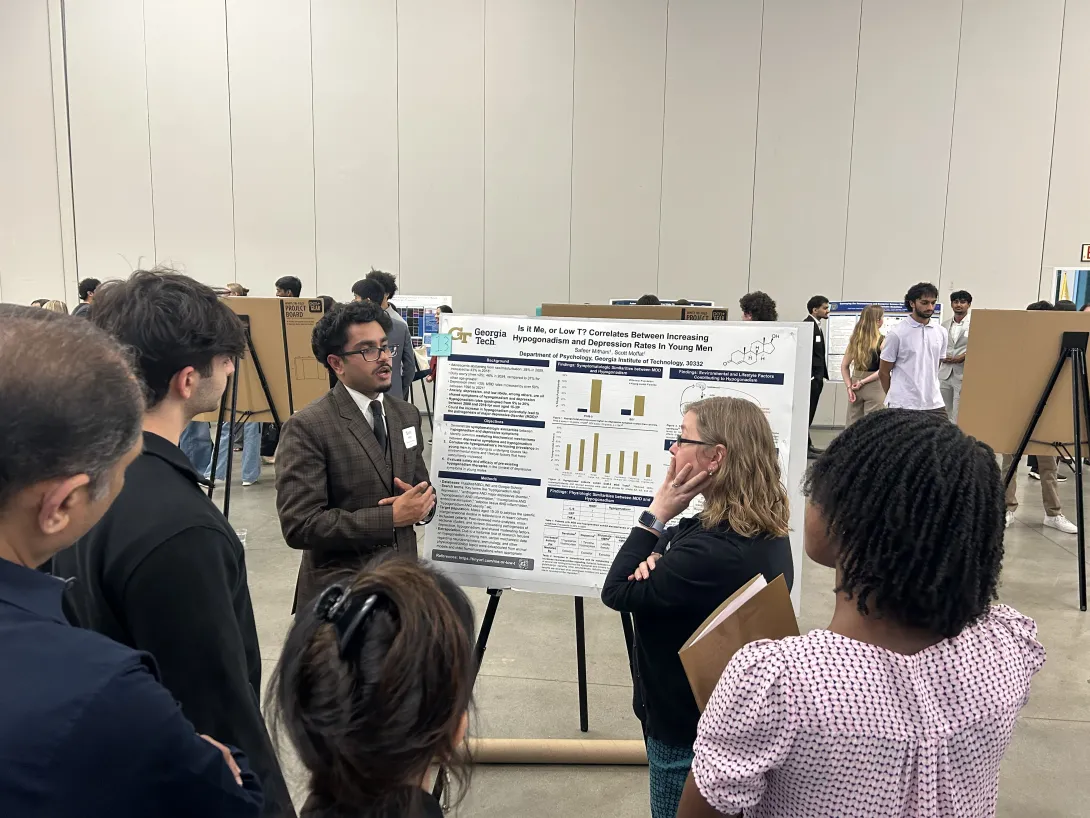

At Georgia Tech, undergraduate students are an integral part of the research enterprise – particularly when it comes to neuroscience. That dedication to undergraduate research was on full display on April 8, when more than 100 students from Atlanta-area universities gathered for the annual ATL Neuro Networking and Symposium Night.

This student-run event, hosted by the Georgia Tech Student Neuroscience Association (SNA) and co-sponsored by the Institute for Neuroscience, Neurotechnology, and Society (INNS) and the Neuroscience Undergraduate Program at Georgia Tech, aimed to bring together students and faculty from the broader Atlanta neuroscience community for an evening of data-blitz talks showcasing faculty research, undergraduate poster presentations, and catered networking.

“Our goal was to bridge the gap between Atlanta’s institutions and showcase the diversity of undergraduate research,” says Harshin Vijay, symposium director of SNA. “By bringing these groups together through SNA, we’re fostering an ecosystem where the next generation of scientists can exchange ideas and build collaborative networks essential for future innovation.”

The impact of undergraduate neuroscience research is “more than bench to bedside,” said INNS Executive Director Chris Rozell at the event. “It’s about advancing neuroscience and neurotechnology to improve society through discovery and innovation. Undergraduate research catalyzes innovation – invigorating and advancing educational programs through collaboration that empowers society – fueling impact and fostering the community of next-generation scientists.”

The symposium featured more than 40 undergraduate posters, with research topics ranging from the impact of music on associative memory to the role of taste projection neurons in Drosophila. Some students even examined their own coursework, drawing on their experience as TAs or their involvement with capstone research.

“There are neuroscientists in every College at Georgia Tech, and we have undergraduate neuroscience students performing research all over campus and in the broader Atlanta neuroscience community,” says Katharine McCann, the director of Undergraduate Research for Georgia Tech’s neuroscience program. “Events like this bring those students together to learn from each other and broaden their networks. It is exciting to see so many students passionate about their research.”

Four posters were awarded for their work:

Best Poster Design: “Role of Taste Projection Neurons in Drosophila Taste Processing”

- Hanti Jiang, Emory University

Best Presentation: “Neuroscience and Computer Science Roots of Pattern Recognition”

- Rishi Polepally, Georgia Tech

- Aryan Kumar, Georgia Tech

- Vedanth Natarajan, Georgia Tech

Best 4001 Group: “Evaluating Cognitive Engagement in AI-Generated VS. Human-Created Educational Content”

- Hannah Ammari, Georgia Tech

- Shobini Palaniappan, Georgia Tech

- Rayhan Quraishi, Georgia Tech

- Aryan Shah, Georgia Tech

- Divya Tadanki, Georgia Tech

People's Choice Award: “Vibration as an effective facilitation of sensorimotor learning in Blaptica dubia cockroaches”

- Diana Sethna, Georgia Tech

- Jacob Hayes, Georgia Tech

- Ellie Kate Watson, Georgia Tech

- Arya Oak, Georgia Tech

- Esha Panse, Georgia Tech

- Hersh Mathur, Georgia Tech

News Contact

Writer: Hunter Ashcraft

Communications Student Assistant

Institute for Neuroscience, Neurotechnology, and Society

Media Contact: Audra Davidson

Research Communications Program Manager

Institute for Neuroscience, Neurotechnology, and Society

Apr. 22, 2026

- written by Seungho Lee

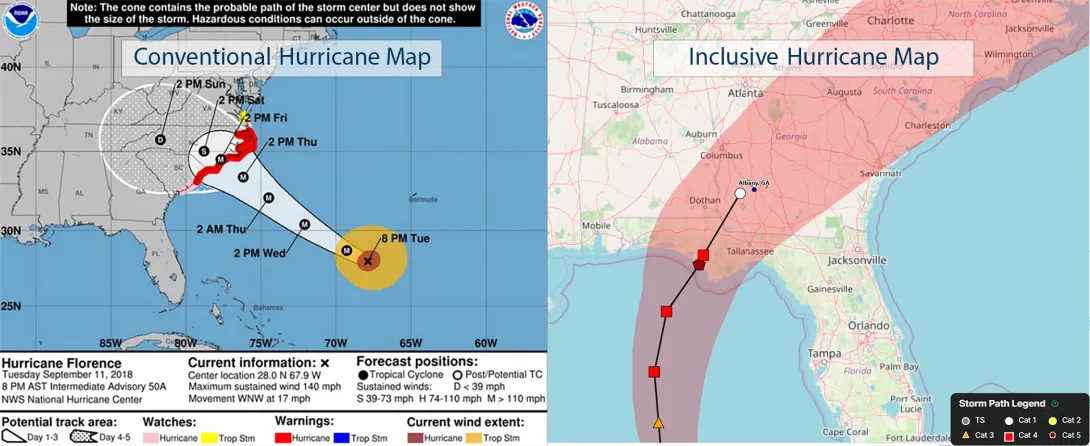

The North American hurricane season is, for many on the East Coast and Gulf Coast, six months of vigilance, and among the resources most likely to be consulted during this time are storm tracking maps. If you learn that your home might be in the path of a storm, you probably actively search for the most current version of one of these maps. Bruce Walker, a professor in the schools of Psychology and Interactive Computing at Georgia Tech, wants to ensure that storm-tracking maps and other emergency and environmental communication tools convey the most important information in the most understandable manner to the largest number of people possible. “Weather and climate affect every single person on Earth,” he said, “so no one can be left behind when it comes to these critical communications.”

Walker is director of the Center for Inclusive Climate Communication (CICC) at Georgia Tech. CICC is a new and growing consortium of researchers, organizations, agencies, and companies whose goal is to ensure that climate information of all types is widely accessible. The center is housed in the School of Psychology but has affiliated faculty from across campus and from several universities around the U.S. CICC is expanding internationally as well, developing sub-networks in Europe, Africa, and Australia.

As part of its efforts, the CICC is working with the coastal city of Brunswick, Georgia. Situated about 65 miles northeast of Jacksonville, Florida, Brunswick is no stranger to hurricanes and tropical storms. The city is working to develop a comprehensive Community-Based Emergency Warning System, which will include maps and other emergency communications that ensure language, culture, level of education, or other differences in lived experience are not barriers to residents understanding critical safety information. This work is supported by the Brook Byers Institute for Sustainable Systems (BBISS) and the Center for Sustainable Communities Research and Education (SCoRE) through the Sustainability Next Seed Grant Program.

Hurricane maps and related information can come from many sources. Government agencies, municipal emergency management agencies, media outlets, and meteorological organizations all may have their own versions, which vary in how they visually display data. The information used to generate the maps is collected and released to the public by the National Oceanic and Atmospheric Administration (NOAA) every few hours. The maps that the public sees show the important information that one would expect, but they may not do so with an eye for how different people might interpret, or misinterpret, that information.

“Once we determine the best way to present hurricane data to the most people, we will work with content providers to standardize the way they generate these resources,” says Walker. “Reliable data and what we call inclusive communications lead to better decisions by the public.”

The CICC investigators’ process aspires to the philosophy of Universal Design, but since no design can be 100% universal, they refer to what they create as “inclusive designs.” Inclusive design means adapting to the diverse needs of the broadest possible audience. Since the language skills, education, lived experience, and physical ability of the person in the storm’s path can vary, these maps must present information in many alternative ways.

For those who can see the map, for example, improving the visual design (e.g., a better use of symbols and a clearer visual layout) can help. For those with vision impairment, adding audio layers (called “sonification”) to the map can help. For many people, simply comprehending a map can itself be a challenge. In that case, adding more explanations about how to interpret a map, what different terms mean, and what the storm is likely to do can make it more understandable.

All of these strategies provide multiple means of accessing, understanding, and acting on the data represented by the map. When studying how to design inclusive maps, soliciting input and suggestions from as many different potential users as possible helps the CICC team ensure that vital information is understandable and useful to the most people.

One of CICC’s primary goals is to take lessons from its research projects, such as the inclusive hurricane map, and derive general principles for the effective design of emergency communications tools of all types. While every disaster, from floods and wildfires to tsunamis, tornadoes, and ice storms, will require the distribution of unique pieces of data, the CICC researchers and their community partners are identifying design strategies that will make these communications understandable and actionable to everyone.

Walker and other CICC researchers engage students in this work. Isabella Martincic, a Ph.D. student in engineering psychology, shepherds many of the center’s research and design efforts, including AccessCORPS, a team that makes educational materials more inclusive and accessible. Jessica Herring and Ishan Vepa, students in the M.S. program in human-computer interaction, have led the hurricane map project, including overhauling existing maps from recent storms by applying CICC design guidelines to them. And undergraduate student Cal Price has been the lead researcher on the Brunswick collaboration, engaging with both community members and civic officials.

These efforts — adding more features, revamping existing maps, and consulting with weather experts and end users — demonstrate how seemingly simple changes can lead to significantly better interpretations of the data by the target audience. The research behind the inclusive hurricane maps will be presented at the 23rd International Web for All Conference, which takes place later this year.

CICC researchers are also engaging in partnerships with companies that see the potential benefits of this approach. Data visualization company Highcharts, for example, is a supporter and collaborator. Because such companies’ business models revolve around distributing this kind of information, they have a keen interest in the lessons learned from CICC research. CICC does not regard its findings as intellectual property; it prefers that good design guidelines proliferate.

“Ultimately, our goal is for anyone to be able to look at a communication tool, quickly grasp critical pieces of information that may impact their lives and well-being, and take appropriate actions,” Walker said, “whether that be for the daily weather or for an impending natural disaster.”

News Contact

Brent Verrill, Research Communications Program Manager, BBISS

Apr. 21, 2026

When Team Atlanta claimed first place in the DARPA AI Cyber Challenge last year, they weren’t just celebrating a win—they were demonstrating that artificial intelligence (AI) could autonomously detect and patch software vulnerabilities at a scale once considered impossible.

Now, the team is working with the Linux Foundation and the Open Source Security Foundation (OpenSSF) to ensure that its breakthrough doesn’t remain confined to a competition environment. The team’s new initiative, OSS-CRS, aims to standardize and operationalize cyber reasoning systems (CRSs) for real-world use.

“The AI Cyber Challenge pushed the boundaries of autonomous software security, with seven teams developing systems capable of finding and remediating vulnerabilities at scale,” said Andrew Chin, a Georgia Tech Ph.D. student and lead on the OSS-CRS program.

“However, after the competition’s conclusion, it has been difficult to apply these advancements to the open-source community due to infrastructure incompatibilities and the lack of long-term maintenance for the open-sourced CRS implementations.”

To address this gap, Georgia Tech’s Systems Software Lab (SSLab), directed by Professor Taesoo Kim, is leading the development of OSS-CRS, which provides both a common framework for CRS development and the infrastructure needed to deploy these systems seamlessly across open-source projects.

As part of this effort, the team has ported its competition-winning system, Atlantis, into the OSS-CRS framework. The move makes it compatible with laptops and other everyday machines with flexible resource and budget configurations.

Interoperability is also central to the framework’s design. Atlantis can be combined with other CRSs to improve performance, including systems developed by fellow AIxCC finalists and newer agentic, command-line-based tools. This modular approach reflects a key lesson the team learned from the competition: collaboration between systems can outperform any single solution.

OSS-CRS has been accepted as a sandbox project within OpenSSF’s AI/ML Security Working Group, a milestone that brings added technical guidance and community support to the project. This includes:

- Access to mentorship

- Dedicated working group meetings

- Broader visibility through industry events, publications, and outreach efforts

The collaboration will also foster stronger connections with open-source maintainers, helping streamline vulnerability disclosure and remediation workflows.

News Contact

John Popham

School of Cybersecurity and Privacy

Georgia Tech

Mar. 31, 2026

While people use search engines, chatbots, and generative artificial intelligence tools every day, most don’t know how they work. This sets unrealistic expectations for AI and leads to misuse. It also slows progress toward building new AI applications.

Georgia Tech researchers are making AI easier to understand through their work on Transformer Explainer. The free, online tool shows non-experts how ChatGPT, Claude, and other large language models (LLMs) process language.

Transformer Explainer is easy to use and runs on any web browser. It quickly went viral after its debut, reaching 150,000 users in its first three months. More than 563,000 people worldwide have used the tool so far.

Global interest in Transformer Explainer will continue when the team presents the tool at the 2026 Conference on Human Factors in Computing Systems (CHI 2026). CHI, the world’s most prestigious conference on human-computer interaction, will take place in Barcelona, April 13-17.

“There are moments when LLMs can seem almost like a person with their own will and personality, and that misperception has real consequences. For example, there have been cases where teenagers have made poor decisions based on conversations with LLMs,” said Ph.D. student Aeree Cho.

“Understanding that an LLM is fundamentally a model that predicts the probability distribution of the next token helps users avoid taking its outputs as absolute. What you put in shapes what comes out, and that understanding helps people engage with AI more carefully and critically.”

A transformer is a neural network architecture that converts an input sequence of data into an output sequence. It does this by learning context and tracking mathematical relationships between the components of a sequence. Text, audio, and images can all be processed as sequences, which is why transformers are common in generative AI models.
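To make the idea of “tracking mathematical relationships between sequence components” concrete, here is a minimal sketch of scaled dot-product attention, the standard mechanism transformers use to weigh how much each item in a sequence should draw on every other item. This is an illustration only and is not taken from Transformer Explainer; the function names and the toy vectors are assumptions made for the example.

```python
import math

def softmax(xs):
    """Turn a list of scores into probabilities that sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors.

    Each query is compared with every key; the resulting weights
    decide how much of each value vector flows into the output.
    """
    d = len(keys[0])  # key dimension, used for scaling
    outputs = []
    for q in queries:
        scores = [dot(q, k) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        mixed = [
            sum(w * v[i] for w, v in zip(weights, values))
            for i in range(len(values[0]))
        ]
        outputs.append(mixed)
    return outputs

# Toy example (hypothetical numbers): one query, two keys, two values.
result = attention([[1.0, 0.0]], [[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
print(result)
```

In the toy call, the query matches the first key more closely than the second, so the output leans toward the first value vector. Real models learn the query, key, and value vectors from data and run many such attention heads in parallel.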

Transformer Explainer demystifies how transformers work. The platform uses visualization and interaction to show, step by step, how text flows through a model and produces predictions.

Using this approach, Transformer Explainer impacts the AI landscape in four main ways:

- It counters hype and misconceptions surrounding AI by showing how transformers work.

- It improves AI literacy among users by removing technical barriers and lowering the barrier to entry for learning about AI.

- It expands AI education by helping instructors teach AI mechanisms without extensive setup or computing resources.

- It influences future development of AI tools and educational techniques by providing a blueprint for interpretable AI systems.

“When I first learned about transformers, I felt overwhelmed. A transformer model has many parts, each with its own complex math. Existing resources typically present all this information at once, making it difficult to see how everything fits together,” said Grace Kim, a dual B.S./M.S. computer science student.

“By leveraging interactive visualization, we use levels of abstraction to first show the big picture of the entire model. Then users click into individual parts to reveal the underlying details and math. This way, Transformer Explainer makes learning far less intimidating.”

Many users don’t know what transformers are or how they work. The Georgia Tech team found that people often misunderstand AI. Some label AI with human-like characteristics, such as creativity. Others even describe it as working like magic.

Furthermore, barriers make it hard for students interested in transformers to start learning. Tutorials tend to be too technical and overwhelm beginners with math and code. While visualization tools exist, these often target more advanced AI experts.

Transformer Explainer overcomes these obstacles through its interactive, user-focused platform. It runs a familiar GPT model directly in any web browser, requiring no installation or special hardware.

Users can enter their own text and watch the model predict the next word in real time. Sankey-style diagrams show how information moves through embeddings, attention heads, and transformer blocks.

The platform also lets users switch between high-level concepts and detailed math. By adjusting temperature settings, users can see how randomness affects predictions. This reveals how probabilities drive AI outputs, rather than creativity.
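The effect of temperature can be sketched in a few lines. The example below is a minimal illustration, not code from Transformer Explainer: it assumes three hypothetical candidate tokens with made-up scores (logits) and applies the standard temperature-scaled softmax to show how the same scores yield sharper or flatter probability distributions.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Convert raw scores (logits) into probabilities, scaled by temperature.

    Lower temperatures concentrate probability on the top-scoring token;
    higher temperatures spread it more evenly across candidates.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next tokens (assumed values).
tokens = ["mat", "sofa", "moon"]
logits = [2.0, 1.0, 0.1]

for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, {tok: round(p, 3) for tok, p in zip(tokens, probs)})
```

At a low temperature the top-scoring token dominates, so sampled output is more predictable; at a high temperature the probabilities even out, so less likely tokens are chosen more often.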

“Millions of people around the world interact with transformer-driven AI. We believe that it is crucial to bridge the gap between day-to-day user experience and the models' technical reality, ensuring these tools are not misinterpreted as human-like or seen as sentient,” said Ph.D. student Alex Karpekov.

“Explaining the architecture helps users recognize that language generated by models is a product of computation, leading to a more grounded engagement with the technology.”

Cho, Karpekov, and Kim led the development of Transformer Explainer. Ph.D. students Alec Helbling, Seongmin Lee, and Ben Hoover, along with alumni Zijie (Jay) Wang (Ph.D. ML-CSE 2024) and Minsuk Kahng (Ph.D. CS-CSE 2019), assisted on the project.

Professor Polo Chau supervised the group and their work. His lab focuses on data science, human-centered AI, and visualization for social good.

Acceptance at CHI 2026 stems from the team winning the best poster award at the 2024 IEEE Visualization Conference. This recognition from one of the top venues in visualization research highlights Transformer Explainer’s effectiveness in teaching how transformers work.

“Transformer Explainer has reached over half a million learners worldwide,” said Chau, a faculty member in the School of Computational Science and Engineering.

“I'm thrilled to see it extend Georgia Tech's mission of expanding access to higher education, now to anyone with a web browser.”

News Contact

Bryant Wine, Communications Officer

bryant.wine@cc.gatech.edu

Mar. 31, 2026

Voice-activated, conversational artificial intelligence (AI) agents must provide clear explanations for their suggestions, or older adults aren’t likely to trust them.

That’s one of the main findings from a study by AI Caring on what older adults expect from explainable AI (XAI).

AI Caring is one of three AI institutes led by Georgia Tech and funded by the National Science Foundation (NSF). The institute supports AI research that benefits older adults and their caregivers.

Niharika Mathur, a Ph.D. candidate in the School of Interactive Computing, was the lead author of a paper based on the study. The paper will be presented in April at the 2026 ACM Conference on Human Factors in Computing Systems (CHI) in Barcelona.

Mathur worked with the Cognitive Empowerment Program at Emory University to interview 23 older adults who live alone and use voice-activated AI assistants like Amazon’s Alexa and Google Home.

Many of them told her they feel excluded from the design of these products.

“The assumption is that all people want interactions the same way and across all kinds of situations, but that isn’t true,” Mathur said. “How older people use AI and what they want from it are different from what younger people prefer.”

One example she gave is that young people tend to be informal when talking with AI. Older people, on the other hand, talk to the agent like they would a person.

“If older adults are talking to their family members about Alexa, they usually refer to Alexa as ‘she’ instead of ‘it,’” Mathur said. “They tend to humanize these systems a lot more than young people.”

Good Explanations

The study evaluated AI explanations that drew information from four sources of data:

- User history (past conversations with the agent)

- Environmental data (indoor temperature or the weather forecast)

- Activity data (how much time a user spends in different areas of the home)

- Internal reasoning (mathematical probabilities and likely outcomes)

Mathur said older users trust the agent more when it bases its explanations on data from the first three sources. However, internal reasoning creates skepticism.

Internal reasoning means the AI doesn’t have enough data from the other sources to give an explanation. It provides a percentage to reflect its confidence based on what it knows.

“The overwhelming response was negative toward confidence scores,” Mathur said. “If the AI says it’s 92% confident, older adults want to know what that’s based on.”

This is another example that Mathur said points to generational preferences.

“There’s a lot of explainable AI research that shows younger people like to see numbers in explanations, and they also tend to rely too much on explanations that contain numerical confidence. Older adults are the opposite. It makes them trust it less.”

Knowing the Context

Mathur found that in urgent situations, older users prefer the AI to be straightforward, while in casual settings, they want more conversation.

“How people interact with technological systems is grounded in what the stakes of the situation are,” she said. “If it had anything to do with their immediate sense of safety, they did not want conversational elaboration. They want the AI to be very direct and factual.”

Not Just Checking Boxes

Mathur said AI agents that interact with older adults are ideally constructed with a dual purpose. They should provide companionship and autonomy for the users while alleviating the burden of caretaking that is often placed on their family members.

Some studies have shown that engineers have strayed toward favoring caretakers in the design of these tools. They prioritize daily tasks and routines, leaving some older adults to feel like they are a box to be checked.

“They’re not being thought of as consumers,” Mathur said. “A lot of products are being made for them but not with them.”

She also said psychological well-being is one of the most important outcomes these tools should produce.

Showing older adults that they are listened to can significantly help in gaining their trust. Some interviewees told Mathur they want agents who are deliberate about understanding their preferences and don’t dismiss their questions.

Meeting these needs reduces the likelihood that older adults will protest and come into conflict with family members.

“It highlights just how important well-designed explanations are,” she said. “We must go beyond a transparency checklist.”

News Contact

Nathan Deen

College of Computing

Georgia Tech

Mar. 25, 2026

Georgia Tech has announced the recipients of the 2026 Institute Research Awards, honoring faculty, staff, and research teams whose work has made significant scientific, technological, and societal impact. Presented by the Office of the Executive Vice President for Research, the awards recognize excellence across six categories spanning innovation, mentorship, collaboration, engagement, and research program development and impact. This year’s honorees reflect the breadth of Georgia Tech’s research enterprise — from foundational discovery to commercialization and community partnerships — and will be recognized at the Faculty and Staff Honors Luncheon on April 24.

Mar. 18, 2026

Five Georgia Tech computer science (CS) students have been named Squarepoint Foundation Scholars, receiving merit- and need-based scholarships for their undergraduate studies. The Squarepoint Foundation is providing $100,000 to fund the awards, which offer $10,000 per year for two years to rising third-year students.

Now in its second year of supporting the College of Computing, the Squarepoint Foundation continues to expand opportunities, enabling students to focus fully on their studies and pursue activities outside the classroom.

A selection committee led by Mary Hudachek-Buswell, interim chair of the School of Computing Instruction (SCI), chose this year’s cohort.

“These students exemplify the curiosity, talent, and determination we strive to cultivate in computer science,” Hudachek-Buswell said. “The Squarepoint Foundation Scholarships will give them the opportunity to focus fully on their studies while pursuing research and projects that have the potential to make a real-world impact.”

The scholars have demonstrated strong leadership across campus, with all five serving as teaching assistants (TAs) and earning faculty honors. The cohort is also engaged in research and study abroad opportunities.

Founded in 2021, the Squarepoint Foundation supports STEM education and research while partnering with organizations worldwide to expand opportunity and access.

“We are proud to continue our partnership with Georgia Tech, as we extend our support to a number of students working towards achieving their academic goals,” said Allison Henry, Squarepoint Foundation manager.

“The Squarepoint Foundation aims to increase access to education, ensuring that all individuals have the opportunity to pursue the degree of their choice, no matter their circumstances. We wish these talented students the best of luck as they undertake their studies and recognize them for their hard work and dedication to the STEM field.”

Meet the Scholars

Maria Cymbalyuk

Cymbalyuk studies Cybersecurity and Information Internetworks threads, focusing on how technical systems shape who is protected or exposed in digital environments. She’s interested in supporting public defenders and improving access to justice through technology.

“This scholarship made this semester feel less financially stressful and more like I can focus on building the skills and experiences I care about,” Cymbalyuk said. “I want to use my skills to build tools and do research that supports public interest organizations.”

Marziah Islam

Islam concentrates on the People and Intelligence threads, exploring how humans interact with technology. She is developing a sign-language learning mobile app through a Vertically Integrated Project and hopes to build accessible, reliable systems in healthcare technology.

“I am fascinated by the intersection of humans and computing, and I want to design technology that better supports real people,” Islam said.

Sahadev Bharath

Bharath studies Architecture and Information Internetworks threads, with interests in low-level programming, operating systems, and large-scale systems. He plans to begin his career in software engineering, focusing on distributed systems and AI infrastructure.

“Coming from India, being able to afford out-of-state tuition has been a challenge. This scholarship relieves financial stress and gives me more time to focus on my academics and career,” Bharath said.

“I am passionate about teaching and sharing my knowledge with fellow students. Being a TA has been extremely fulfilling and motivates me to continue contributing to education.”

Joie Yeung

Yeung studies Information Internetworks and Intelligence threads, with a focus on data and artificial intelligence. She has received the President’s Volunteer Service Award for completing more than 100 service hours in one year. In addition to pursuing a career in software engineering, she is passionate about mentoring younger girls and addressing the gender gap in STEM.

“I want to create meaningful and impactful technology while giving back to my communities. I also aim to show younger girls that they can succeed in computing despite the gender gap,” Yeung said.

Jun Hong Wang

Wang studies system architecture and intelligence with a minor in mathematics, concentrating on computer architecture and low-level optimization. He is considering careers in software engineering, research, or entrepreneurship at the intersection of hardware and software.

“I’m especially interested in how hardware and software intersect, and I hope to use my work to create solutions that are meaningful and helpful for the world,” Wang said.

The scholarships offer vital support as these students keep advancing research, leadership, and influence in computing.

News Contact

Emily Smith

College of Computing

Georgia Tech
